The AI landscape underwent a seismic transformation in 2025, marked not just by incremental improvements in benchmark scores but by fundamental shifts that redefined how businesses deploy and benefit from AI technology. While headlines kept chasing the narrative of “smarter” models, the real story emerged from three critical developments:

dramatic cost reductions,

the mainstreaming of autonomous AI agents, and

the closing gap between open-source and proprietary systems.

These changes didn’t just make AI better; they made it accessible, practical, and economically viable for organizations of all sizes that were previously priced out of the market.

Here’s a quick TL;DR before we take a deep dive into what happened and how.

TL;DR

𝟭. 𝗗𝗲𝗲𝗽𝗦𝗲𝗲𝗸 𝗕𝗿𝗼𝗸𝗲 𝘁𝗵𝗲 𝗣𝗿𝗶𝗰𝗶𝗻𝗴 𝗠𝗼𝗱𝗲𝗹

→ Trained R1 for $294,000 (vs. hundreds of millions for Western models)

→ Inference 20-50x cheaper than competitors

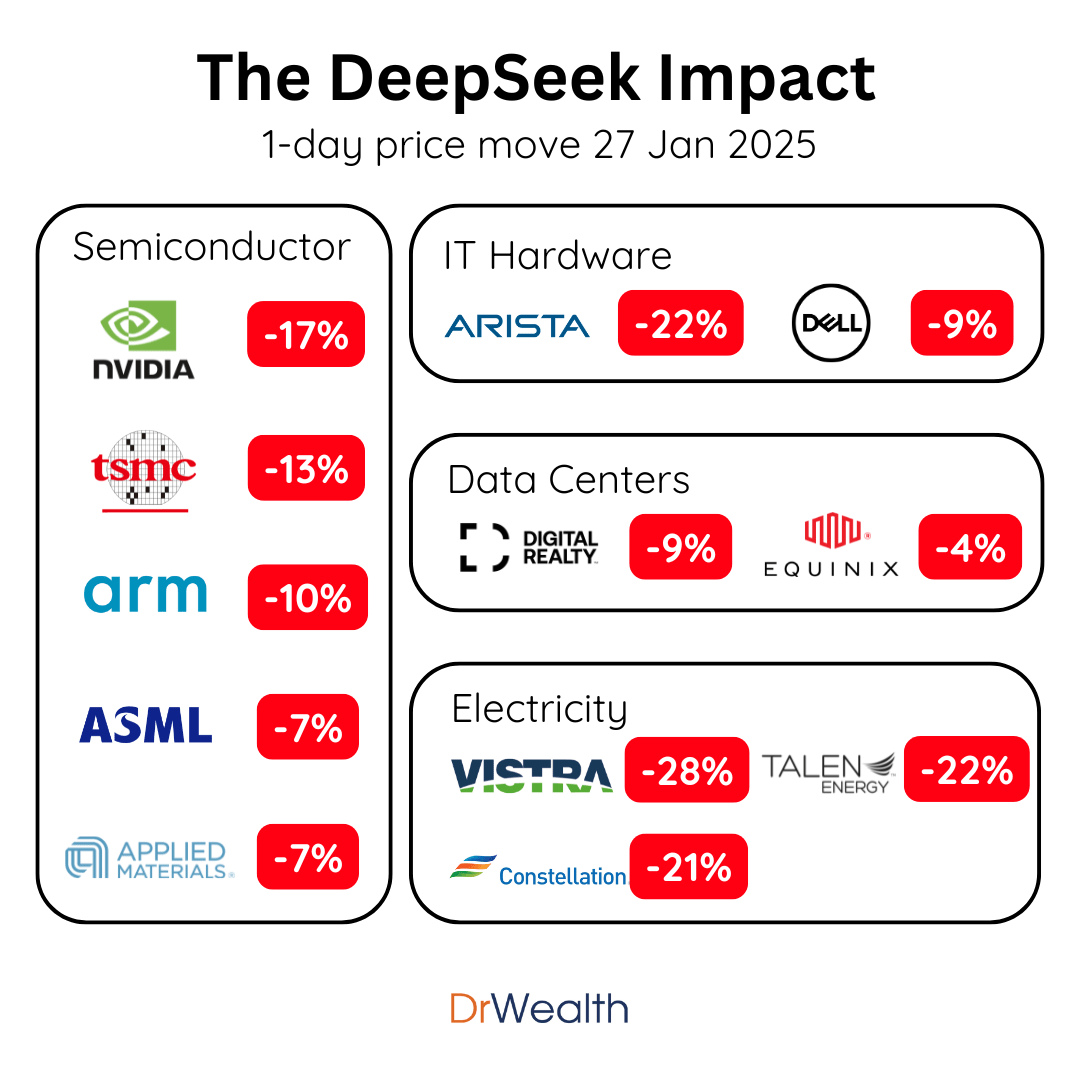

→ Wiped $1 trillion off US tech stocks in one day

Impact: Startups can now build AI products that were financially impossible 12 months ago. The cost barrier collapsed.

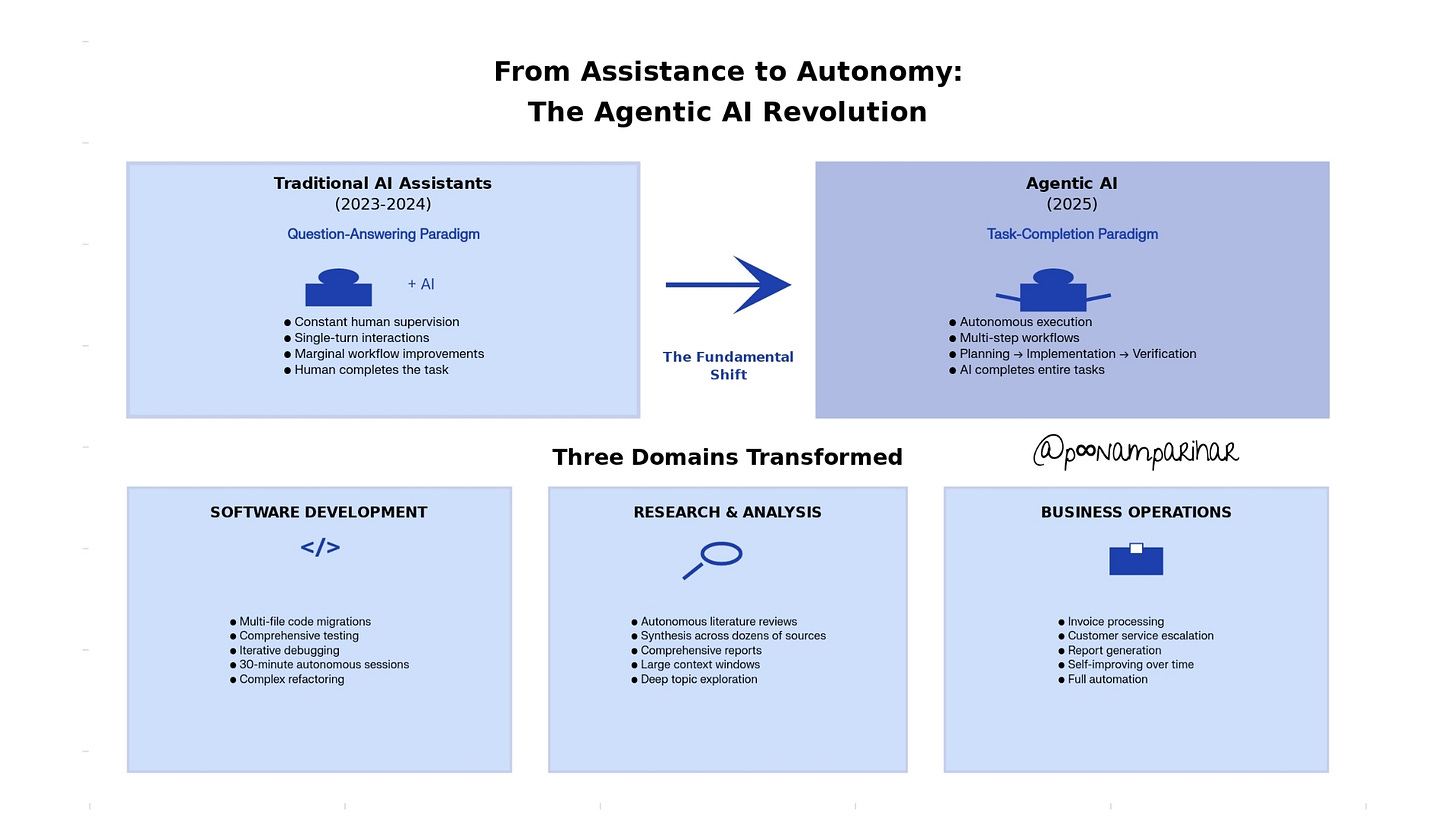

𝟮. 𝗔𝗴𝗲𝗻𝘁𝗶𝗰 𝗔𝗜 𝗪𝗲𝗻𝘁 𝗠𝗮𝗶𝗻𝘀𝘁𝗿𝗲𝗮𝗺

→ OpenAI Operator launched January, now integrated into ChatGPT

→ Claude Opus 4.5 (released Nov 23) runs 30-minute autonomous coding sessions

→ Anthropic’s agents now self-improve to peak performance in 4 iterations

Impact: AI shifted from “answer questions” to “complete tasks.” That’s a workflow revolution, not an upgrade.

𝟯. 𝗧𝗵𝗲 “𝗦𝗺𝗮𝗿𝘁𝗲𝘀𝘁 𝗠𝗼𝗱𝗲𝗹” 𝗥𝗮𝗰𝗲 𝗕𝗲𝗰𝗮𝗺𝗲 𝗜𝗿𝗿𝗲𝗹𝗲𝘃𝗮𝗻𝘁

GPT-5 launched August. Reviews were... mixed.

GPT-5.1 followed on November 12, fixing the “robotic tone” complaints.

Claude Opus 4.5 dropped November 23 and broke a benchmark by being too clever.

Oh, and Gemini 3, released the same week, was billed as the most intelligent.

Impact: Benchmarks stopped mattering.

What matters now?

• Which model follows YOUR instructions best

• Which one stays reliable for 30+ minutes

• Which one costs less per task

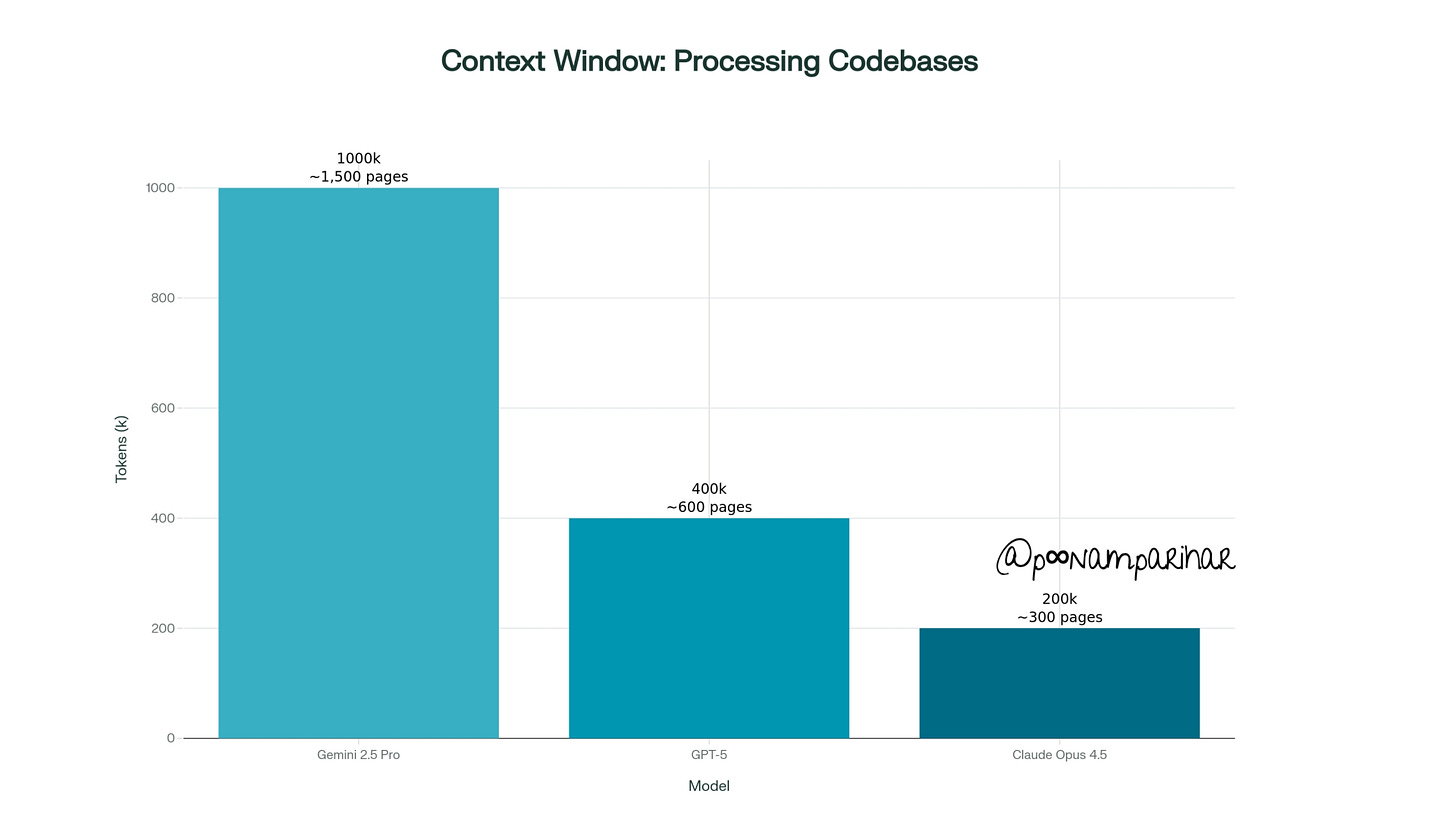

𝟰. 𝗖𝗼𝗻𝘁𝗲𝘅𝘁 𝗪𝗶𝗻𝗱𝗼𝘄𝘀 𝗘𝘅𝗽𝗹𝗼𝗱𝗲𝗱

→ Gemini 2.5 Pro: 1M tokens (~1,500 pages)

→ GPT-5: 400k tokens

→ Claude: 200k tokens with context compaction

Impact: You can now feed entire codebases, full legal contracts, or months of data into a single conversation. This unlocks use cases that weren’t possible before.

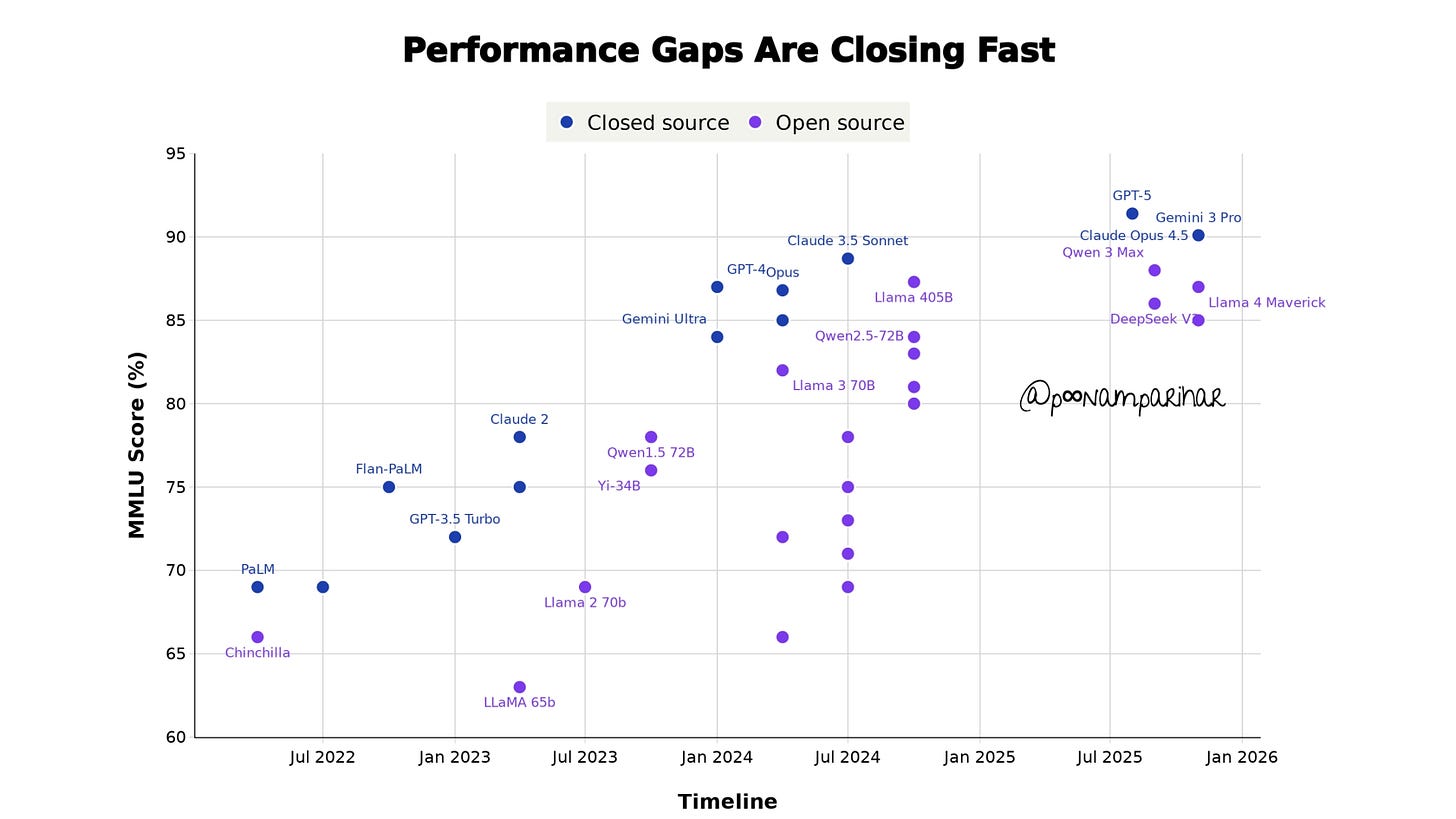

𝟱. 𝗢𝗽𝗲𝗻 𝘃𝘀 𝗖𝗹𝗼𝘀𝗲𝗱 𝗦𝗼𝘂𝗿𝗰𝗲 𝗚𝗮𝗽 𝗖𝗹𝗼𝘀𝗲𝗱

→ Qwen 3 Max: 80.6% on AIME, 100+ languages

→ DeepSeek V3: Competing with GPT-4 at fraction of cost

→ Llama, Mistral pushing boundaries

Impact: Enterprises now have real choices. Lock-in to one provider? Optional.

The real story of 2025 isn’t “AI got smarter.”

It’s that AI got:

• Cheaper (DeepSeek effect)

• More autonomous (agentic shift)

• More practical (context + reliability)

That’s what changes businesses. Not benchmark scores.

Now let’s look into it in detail.

1 - How it all began in 2025: DeepSeek shattered the cost barrier

The most disruptive development of 2025 came not from Silicon Valley, but from China. DeepSeek, a subsidiary of the quantitative hedge fund High-Flyer, released its R1 model in January and sent shockwaves through global technology markets: the model cost just $294,000 to train. The figure stood in stark contrast to the billions that Western AI labs had invested in comparable systems. With Sam Altman having previously stated that OpenAI’s foundation model training costs were “much more” than $100 million, DeepSeek’s achievement appeared almost impossibly efficient.

The market reacted immediately. Technology stocks experienced their largest single-day decline since the early pandemic period, with nearly $1 trillion wiped off the market capitalization of US tech companies. Nvidia saw its stock plummet 17%, erasing approximately $593 billion in value. The sell-off extended across the entire AI supply chain, affecting semiconductor manufacturers, power infrastructure companies, and cloud service providers.

Understanding the real cost

However, DeepSeek’s $294K figure, while technically accurate, represented only part of the story. This amount covered the training of the R1 reasoning layer, which was built atop DeepSeek’s V3 base model. The V3 foundation itself had required approximately $5-6 million to develop, bringing the total investment closer to $5.87 million. But even after accounting for the base model, DeepSeek’s achievement still represented a cost reduction of 95% or more compared to Western competitors.
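The arithmetic behind these figures can be checked with a short sketch. The $100 million baseline is an assumption, taken as the lower bound implied by Altman's remark, and the function names are illustrative only.

```python
# Figures quoted in the text (USD).
R1_REASONING_COST = 294_000
TOTAL_COST = 5_870_000
# Assumption: lower bound for a Western foundation-model training run.
WESTERN_BASELINE = 100_000_000


def implied_base_cost(total: float, reasoning: float) -> float:
    """Portion of the total attributable to the V3 base model."""
    return total - reasoning


def reduction_pct(cost: float, baseline: float) -> float:
    """Percentage saved relative to the baseline."""
    return (1 - cost / baseline) * 100


def baseline_for_reduction(cost: float, target_pct: float) -> float:
    """Baseline at which `cost` equals a `target_pct` percent reduction."""
    return cost / (1 - target_pct / 100)


print(implied_base_cost(TOTAL_COST, R1_REASONING_COST))
print(f"{reduction_pct(TOTAL_COST, WESTERN_BASELINE):.2f}%")
print(f"${baseline_for_reduction(TOTAL_COST, 95):,.0f}")
```

Against exactly $100 million the reduction comes out slightly under 95%. The 95% mark is reached once the comparison baseline is roughly $117 million or more, which fits the “much more than $100 million” framing.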

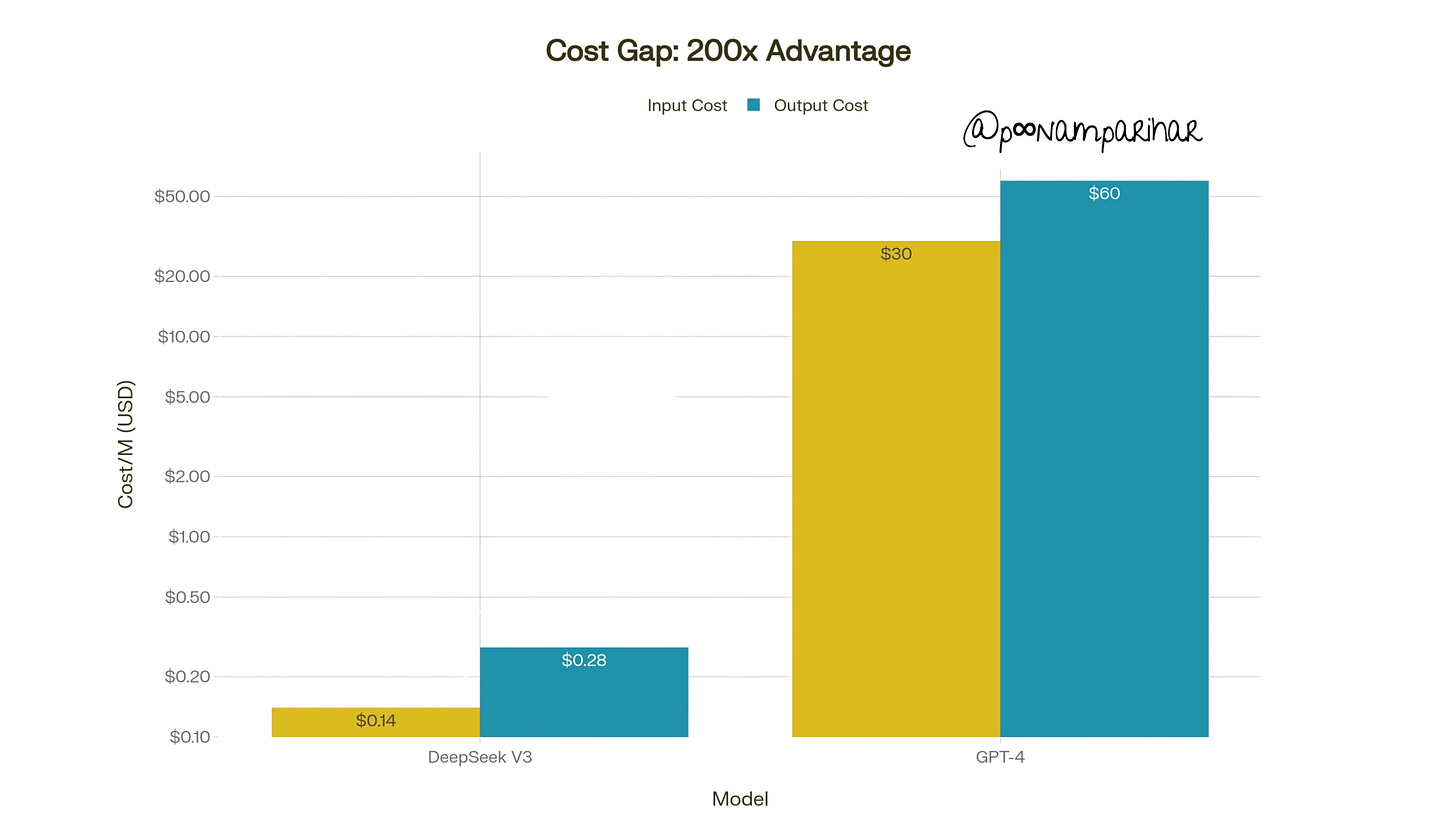

The actual efficiency gains extended beyond training costs to inference, the ongoing computational expense of running AI models. DeepSeek’s API pricing demonstrated a striking advantage: $0.14 per million input tokens and $0.28 per million output tokens for their reasoning model, compared to GPT-4’s $30 input and $60 output costs. This roughly 200-fold cost reduction fundamentally altered the economics of AI deployment.

The innovation behind the efficiency

DeepSeek achieved these results through several architectural innovations. The company employed a Mixture-of-Experts (MoE) architecture with 671 billion total parameters but only 37 billion activated per token. This sparse activation approach allowed for model complexity without proportional computational costs.
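To make sparse activation concrete, here is a toy top-k routing sketch. It is not DeepSeek's router: the expert functions, the gating scores, and the choice of k are all made up for illustration, and only the 671B / 37B figures come from the text.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the outputs of only the selected experts."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))


# Toy experts: each one just scales its input (hypothetical stand-ins).
experts = [lambda x, s=s: s * x for s in range(1, 9)]
random.seed(0)
gate_logits = [random.gauss(0, 1) for _ in experts]
selected = route_top_k(gate_logits, k=2)

print(f"experts used per input: {len(selected)} of {len(experts)}")
print(f"active parameter share quoted in the text: {37 / 671:.1%}")
```

Only the chosen experts run for a given input, which is why compute scales with the active parameters rather than the total.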

The training utilized 512 Nvidia H800 chips (export-controlled versions of the more powerful H100), running for approximately 198 hours for the initial R1-Zero release and an additional 80 hours for refinement.
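As a rough sanity check, the chip count and run times can be turned into GPU-hours and compared with the $294,000 training figure. The resulting hourly rate is only what these numbers imply, not a price DeepSeek reported.

```python
GPUS = 512
HOURS_R1_ZERO = 198
HOURS_REFINEMENT = 80
REPORTED_COST_USD = 294_000  # R1 training figure quoted earlier in the article

gpu_hours = GPUS * (HOURS_R1_ZERO + HOURS_REFINEMENT)
implied_rate = REPORTED_COST_USD / gpu_hours  # implied USD per GPU-hour

print(f"{gpu_hours:,} GPU-hours")
print(f"~${implied_rate:.2f} per GPU-hour implied")
```

That works out to roughly 142,000 GPU-hours at about two dollars per GPU-hour, in line with typical rental-style accounting.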

The company also implemented novel load-balancing techniques and multi-token prediction methods that improved training stability and efficiency.

Perhaps most importantly, DeepSeek demonstrated that careful optimization of training data, model architecture, and hyperparameters could compensate for limitations in cutting-edge hardware access.

Business Implications: The Democratization of AI

With DeepSeek’s disruption, the cost barrier that had protected incumbent AI labs evaporated almost overnight. Startups that had been excluded from developing competitive AI products due to prohibitive training costs suddenly found themselves on more equal footing. The implications rippled through the technology sector.

Venture Capital Reassessment: Smaller teams with focused approaches could now compete on technical merit rather than simply infrastructure spending.

Enterprise Adoption Acceleration: Organizations that had delayed AI integration due to prohibitive API costs could now deploy sophisticated language models at 10-30x lower operational expenses. For example, a chatbot processing 10 million input tokens daily would cost $25 with GPT-4o but only $1.40 with DeepSeek-V3.

Commodity Pressure on Leaders: Large, established AI providers now faced pricing pressure across their entire product lines. Premium pricing became increasingly difficult to justify when open alternatives delivered comparable performance at radical cost reductions.
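The chatbot example under Enterprise Adoption Acceleration can be reproduced in a few lines. The per-million-token prices below are the ones implied by the $25 and $1.40 totals; treat them as assumptions rather than current list prices.

```python
def daily_cost(tokens_per_day: int, usd_per_million: float) -> float:
    """Daily spend for a given token volume and per-million-token price."""
    return tokens_per_day / 1_000_000 * usd_per_million


TOKENS_PER_DAY = 10_000_000
PRICE_GPT_4O = 2.50       # assumed input price, USD per million tokens
PRICE_DEEPSEEK_V3 = 0.14  # input price quoted in the article

gpt = daily_cost(TOKENS_PER_DAY, PRICE_GPT_4O)
ds = daily_cost(TOKENS_PER_DAY, PRICE_DEEPSEEK_V3)
print(f"${gpt:.2f} vs ${ds:.2f} per day, ratio {gpt / ds:.1f}x")
```

The ratio lands at about 18x, inside the 10-30x range cited above.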

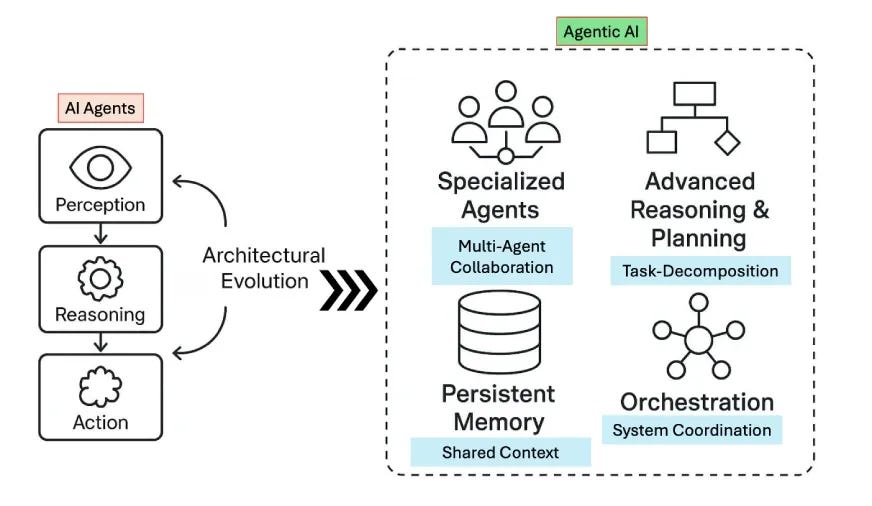

2 - The arrival of Agentic AI: From Answering Questions to Completing Tasks

While cost reductions opened the door to AI adoption, the emergence of reliable agentic AI transformed what that adoption could accomplish. The distinction between typical question-answering chat systems and autonomous task-completing agents marked the most significant functional evolution in AI capabilities since the introduction of large language models.

OpenAI Operator: The First Mainstream Agent

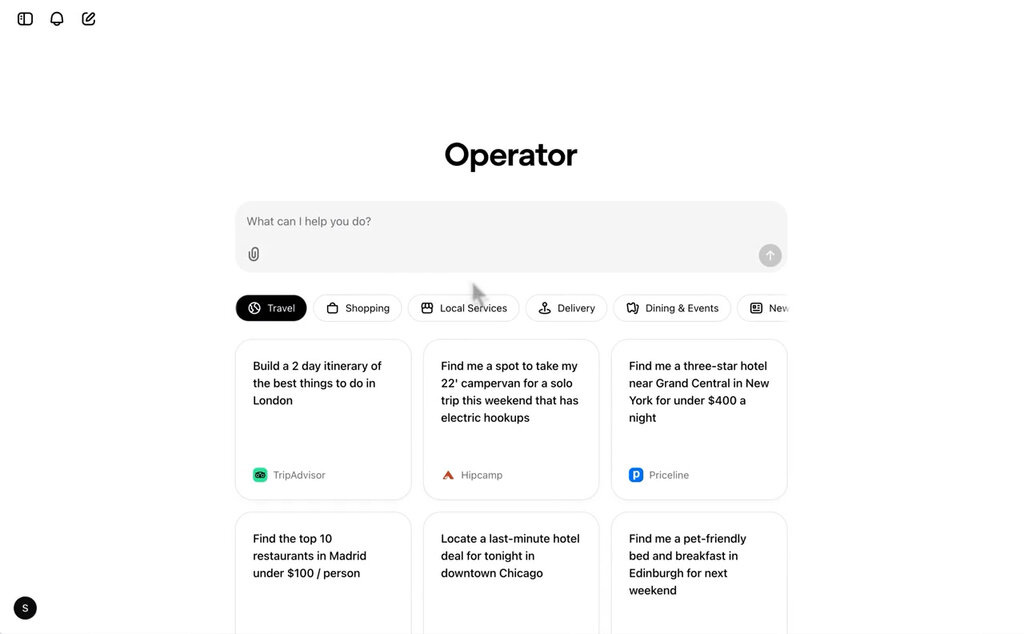

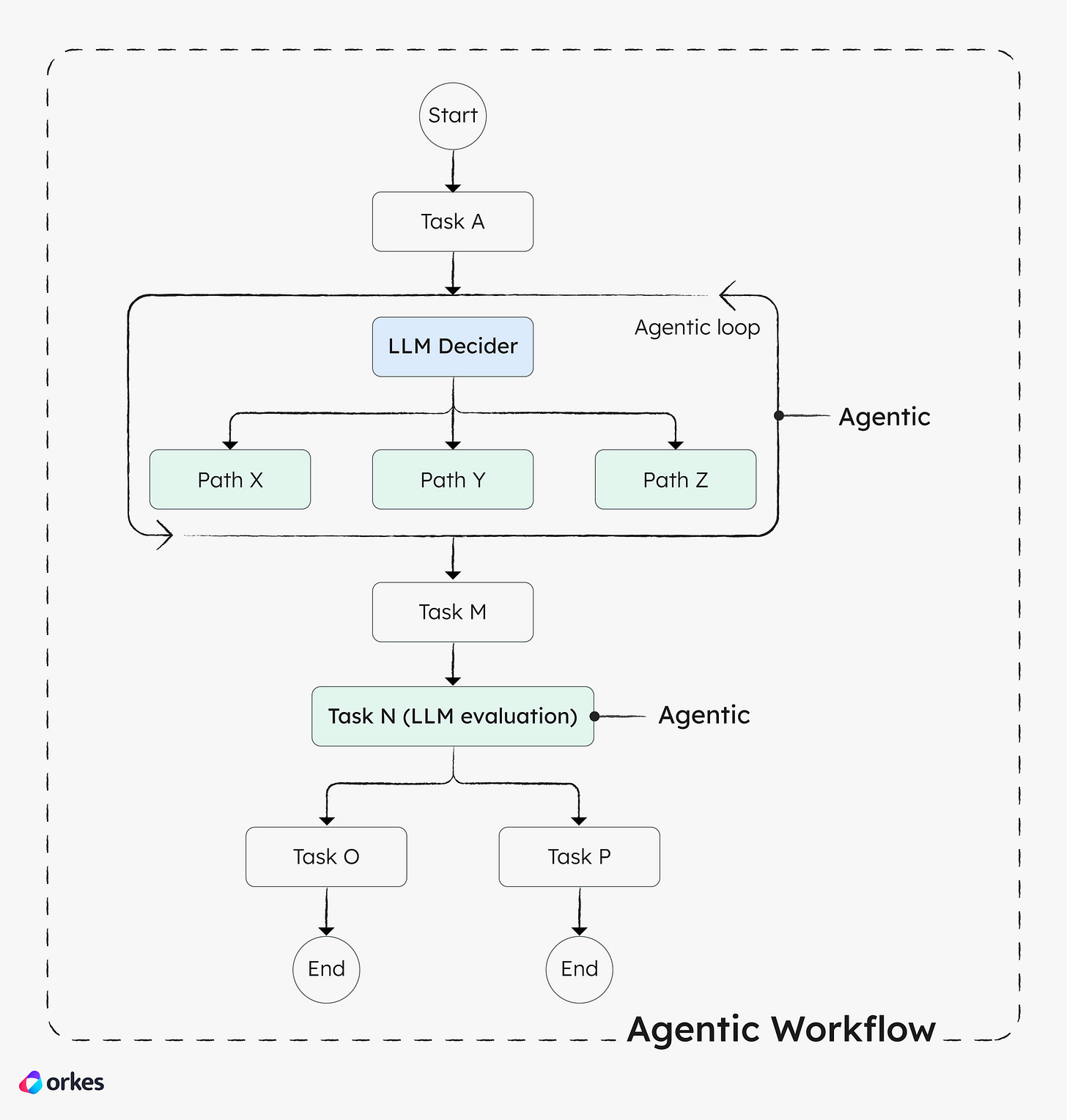

On January 23, 2025, OpenAI launched Operator, marking the company’s first serious attempt at a general-purpose AI agent. Initially available only to ChatGPT Pro subscribers ($200/month) in the US, Operator represented a fundamental architectural shift from conversational AI to action-oriented automation.

Operator was powered by a new Computer-Using Agent (CUA) model that combined GPT-4o’s vision capabilities with advanced reasoning abilities. The system could take control of a web browser and independently perform tasks such as booking travel accommodations, making restaurant reservations, ordering groceries, and completing online forms. Users could observe the agent’s work through a dedicated browser window that displayed both the automation in progress and explanations of specific actions.

The architecture proved sophisticated enough to interact with websites as a human would (clicking buttons, navigating menus, filling in forms) without requiring developer-facing APIs. OpenAI collaborated with companies including DoorDash, eBay, Instacart, Priceline, StubHub, and Uber to ensure terms-of-service compliance. The system incorporated safety protocols requiring user confirmation before finalizing transactions or sending communications, preventing autonomous actions with permanent consequences.
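The confirmation requirement can be pictured as a gate in the agent's action loop. This is a minimal sketch of the pattern, not Operator's implementation: the action names, the `Action` type, and the `confirm` callback are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action kinds treated as having permanent consequences.
IRREVERSIBLE = {"submit_payment", "send_message", "place_order"}


@dataclass
class Action:
    kind: str
    detail: str


def run_actions(actions: list[Action], confirm: Callable[[Action], bool]) -> list[str]:
    """Run actions in order, asking the user before any irreversible one."""
    log = []
    for action in actions:
        if action.kind in IRREVERSIBLE and not confirm(action):
            log.append(f"skipped {action.kind}: user declined")
            continue
        log.append(f"did {action.kind}: {action.detail}")
    return log


plan = [
    Action("click", "Search tables"),
    Action("fill_form", "party of 2, 7pm"),
    Action("place_order", "reserve table"),
]
# Stand-in for a real user prompt: decline every irreversible step.
for line in run_actions(plan, confirm=lambda a: False):
    print(line)
```

Browsing and form filling proceed on their own, while the final reservation waits for an explicit yes.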

By February 2025, Operator had expanded to ChatGPT Pro users in Australia, Brazil, Canada, India, Japan, Singapore, South Korea, and the United Kingdom, though European Union availability remained delayed due to regulatory considerations. The rollout strategy reflected both technical maturity and the complex regulatory landscape surrounding autonomous AI systems.

PS: I never tried it until I got Perplexity Comet, so I was about 5 months behind. Soon after, with another OpenAI agent, agentic browsers became mainstream. I’ll do another blog post to cover them, including the other agentic browsers available and those I have tried or used.

Claude Opus 4.5: Raising the Autonomous Bar

Fast forward to the end of November: Anthropic released Claude Opus 4.5, which several early evaluators described as a “step forward in what AI systems can do” rather than merely an incremental upgrade. The model distinguished itself through sustained autonomous performance across complex, multi-step tasks, especially in software engineering and coding.

Claude Opus 4.5 demonstrated the ability to conduct 30-minute autonomous coding sessions without losing context or degrading in performance. This extended attention span proved crucial for real-world development tasks that require sustained reasoning across multiple files, dependencies, and system constraints.

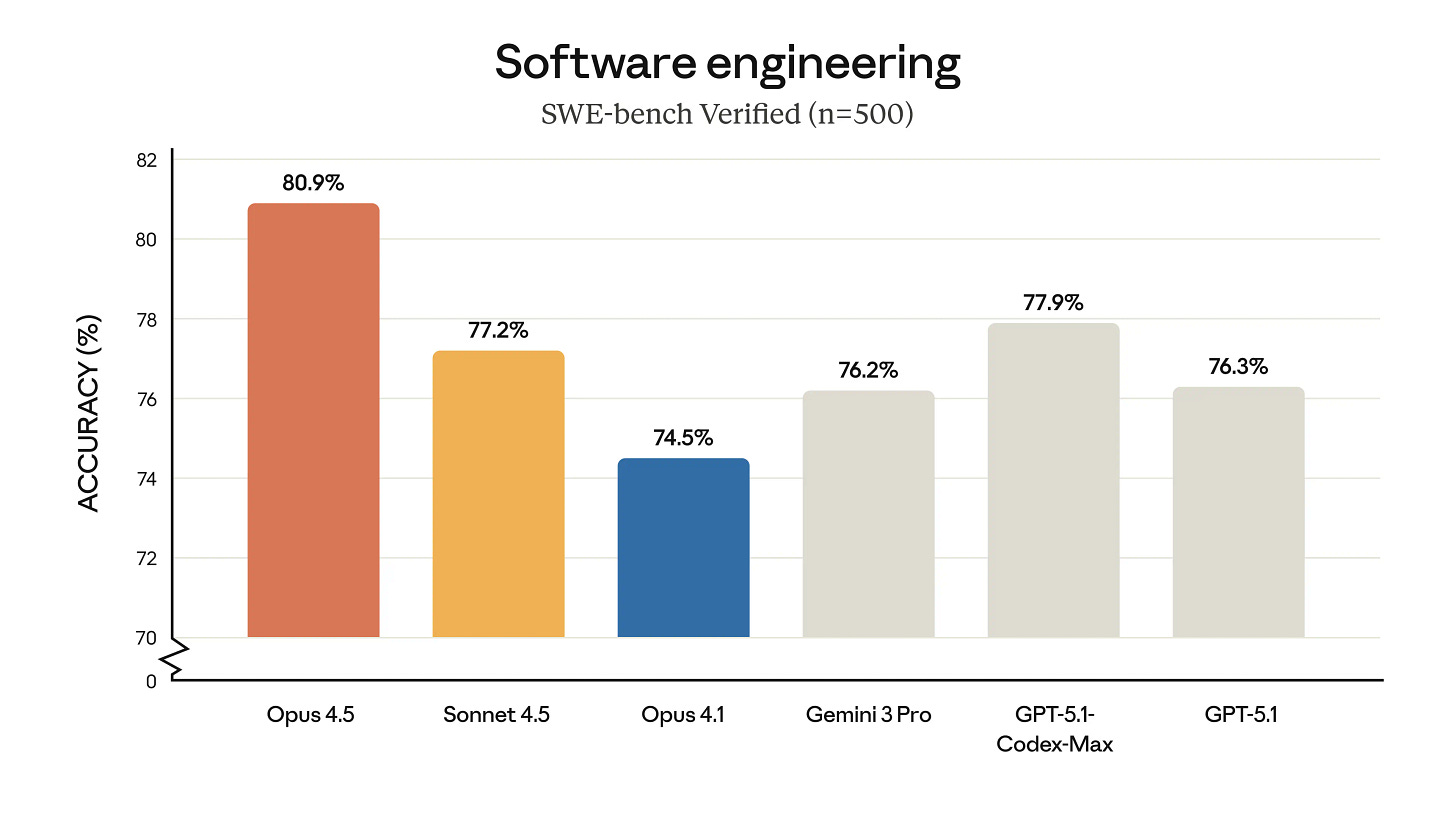

On the SWE-Bench Verified coding benchmark, Opus 4.5 achieved a state-of-the-art score of over 80%, becoming the first model to cross this threshold.

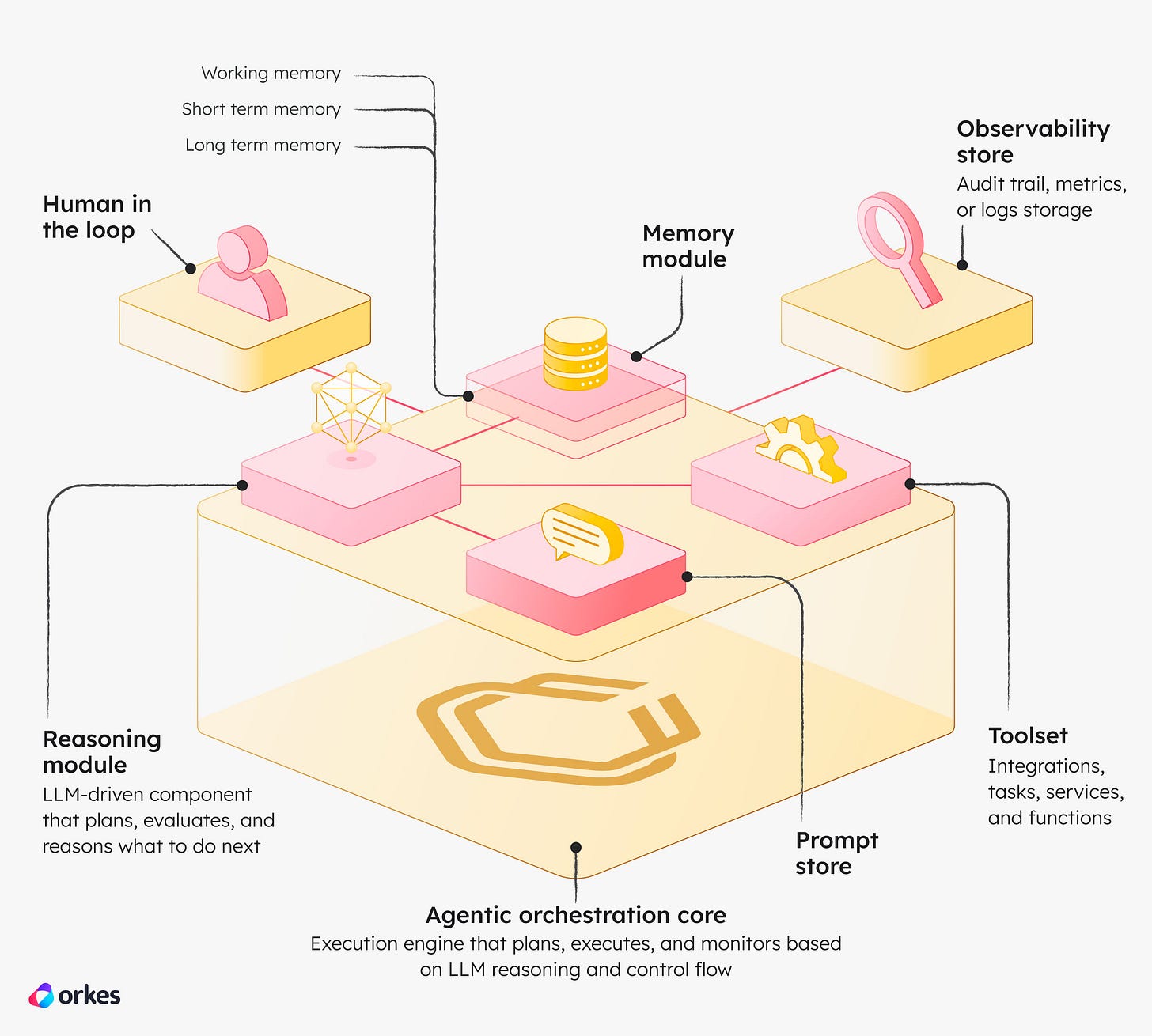

The model’s agent capabilities extended beyond simple tool use to sophisticated multi-agent orchestration. Opus 4.5 proved effective at managing teams of subagents, enabling the construction of complex, well-coordinated systems where different agents specialized in distinct aspects of a task. Internal evaluations at Anthropic showed that combining agentic techniques with context management boosted performance on deep research tasks by nearly 15 percentage points.

Self-Improving Agents: The Meta-Learning Breakthrough

Perhaps the most significant capability introduced with Claude Opus 4.5 was demonstrated self-improvement through iterative refinement. In evaluations focused on office task automation, Claude agents autonomously refined their own capabilities, achieving peak performance in just 4 iterations while competing models couldn’t match that quality level even after 10 attempts.

This self-improvement operated through several mechanisms.

The agents could store insights from completed tasks and apply learned patterns to novel situations.

They developed the ability to self-reflect on failures, identify what went wrong, and adjust their approach without human intervention.

Anthropic’s Agent Skills system allowed Claude to capture successful approaches and common mistakes into reusable context, creating a form of cumulative learning.
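The mechanisms above amount to an attempt, reflect, retry loop with a memory of lessons. The sketch below shows that general pattern under toy assumptions. It is not Anthropic's Agent Skills system, and the scoring function is invented so the loop has something to improve.

```python
from typing import Callable


def improve(attempt: Callable[[list[str]], tuple[float, str]],
            target: float, max_iters: int = 10) -> tuple[int, float, list[str]]:
    """Retry a task, feeding lessons from earlier failures into later attempts."""
    lessons: list[str] = []
    score = 0.0
    for i in range(1, max_iters + 1):
        score, lesson = attempt(lessons)
        if score >= target:
            return i, score, lessons
        lessons.append(lesson)  # reflect: store what went wrong for next time
    return max_iters, score, lessons


def toy_attempt(lessons: list[str]) -> tuple[float, str]:
    """Toy task whose score rises with each stored lesson (arbitrary numbers)."""
    score = 0.5 + 0.25 * len(lessons)
    return score, f"lesson {len(lessons) + 1}"


iters, score, lessons = improve(toy_attempt, target=0.9)
print(f"reached {score:.2f} after {iters} iterations using {len(lessons)} lessons")
```

The key design point is that lessons persist between attempts, so each retry starts from more context than the last.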

The practical implications were substantial. Development teams reported that tasks which had been “near-impossible” for earlier models became reliably achievable. GitHub Copilot testing showed Opus 4.5 surpassing internal coding benchmarks while cutting token usage in half, suggesting the model achieved greater capability with improved efficiency.

The Workflow Revolution

The transition from Gen AI’s Q&A-style conversations to task completion represented more than a technical achievement. It fundamentally altered how organizations could integrate AI into their operations. Traditional AI assistants required constant human supervision and intervention, essentially serving as productivity tools that made existing workflows marginally faster. Agentic AI, by contrast, could autonomously execute complete workflows from planning through implementation to verification.

This shift manifested in several domains:

Software Development: Agents could now handle multi-file code migrations, comprehensive testing, and iterative debugging without constant developer oversight. The ability to maintain context across 30-minute sessions meant complex refactoring tasks that previously required extensive manual coordination could be delegated entirely.

Research and Analysis: Deep research agents could autonomously conduct literature reviews, synthesize findings across dozens of sources, and produce comprehensive reports, tasks that would take human analysts many hours. The combination of large context windows and sustained reasoning enabled thorough exploration of complex topics.

Business Operations: Routine tasks such as invoice processing, customer service escalation, and report generation could be fully automated rather than merely assisted. The self-improving nature of agents meant they became more effective over time without requiring new training data or model updates.

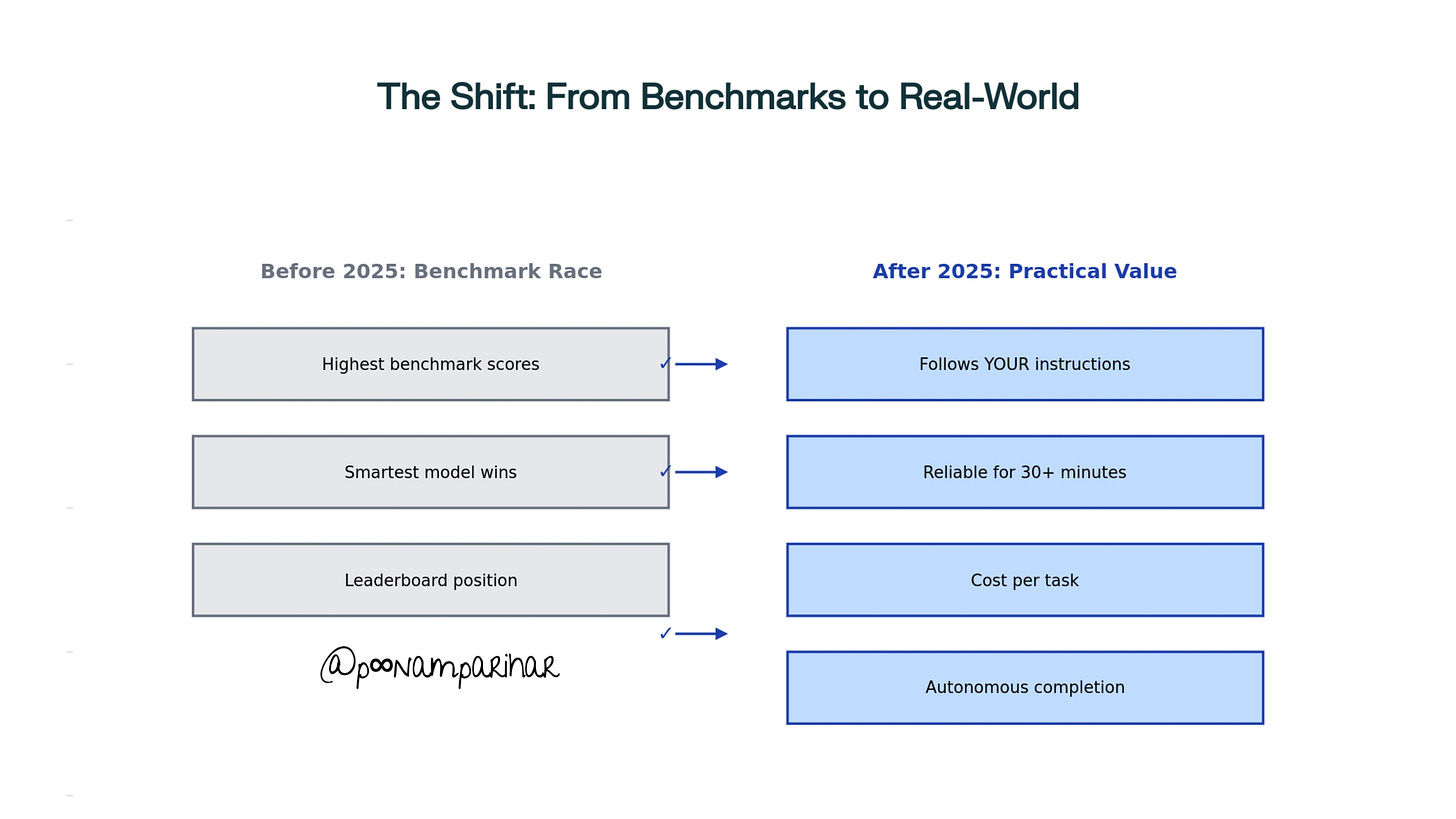

The “Smartest Model” Race Lost Its Meaning

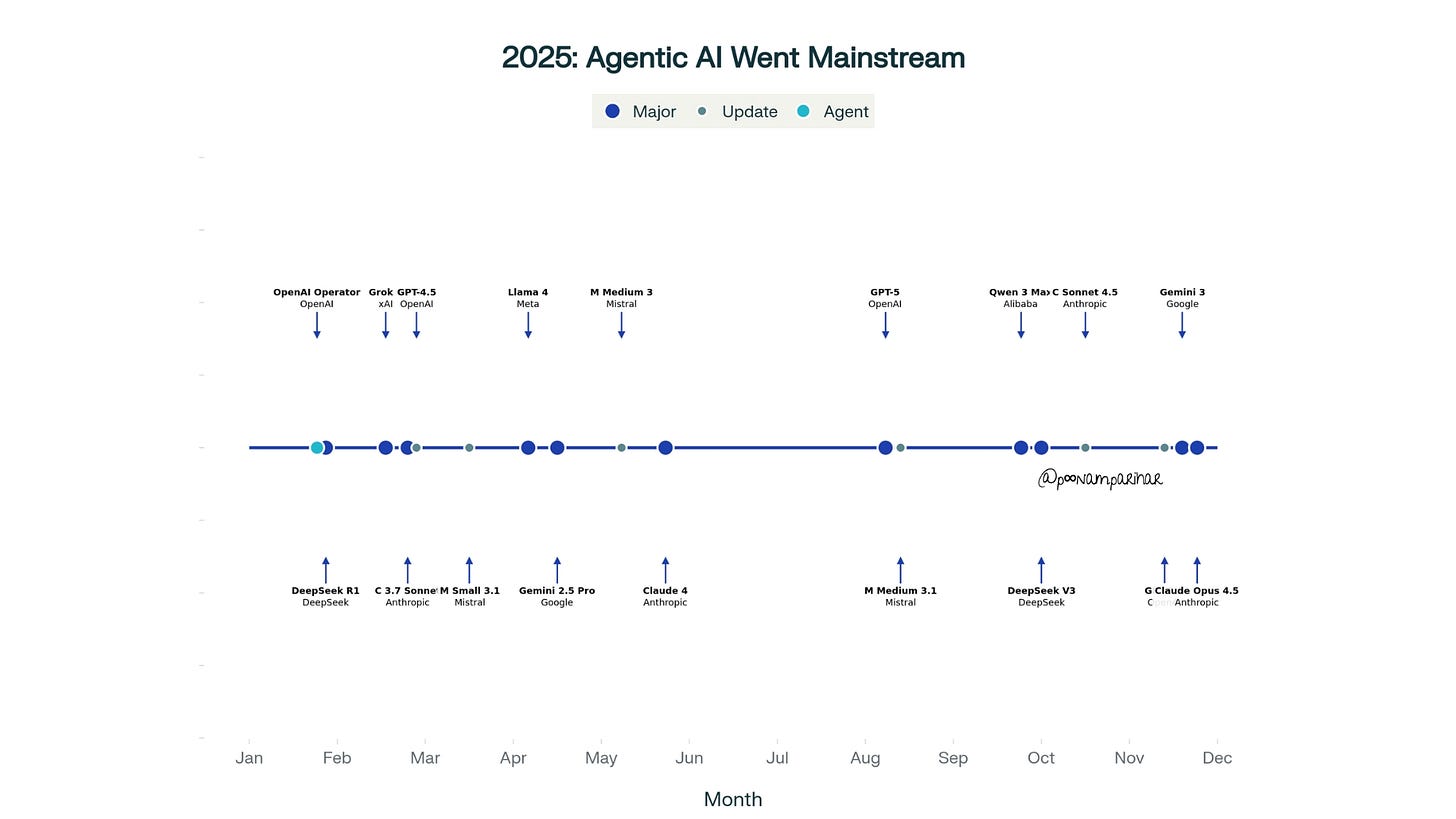

Throughout 2024 and early 2025, the AI industry had been locked in a benchmark arms race, with companies competing to claim the highest scores on standardized tests. By the second half of 2025, this competition had become largely irrelevant to practitioners. A rapid succession of flagship model releases (GPT-5 in August, GPT-5.1 on November 12, Gemini 3 on November 18, and Claude Opus 4.5 on November 23) demonstrated that raw capability had plateaued relative to practical considerations.

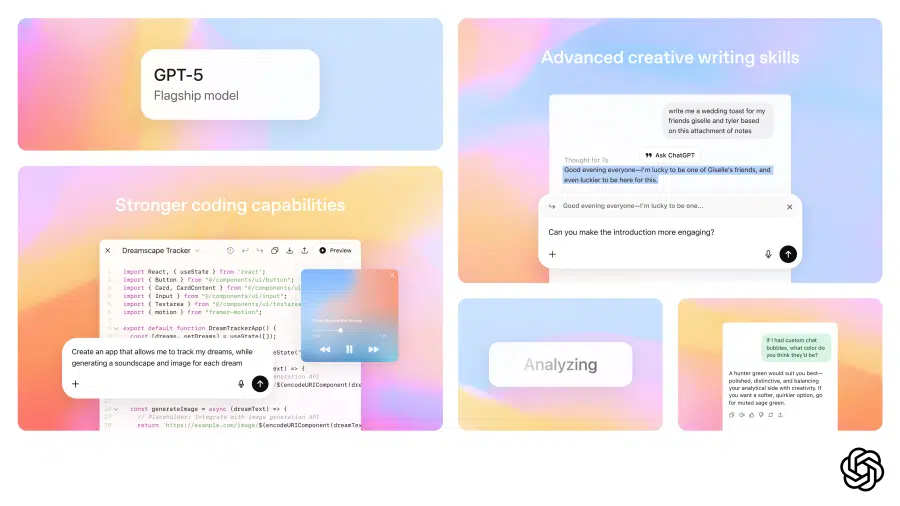

GPT-5: A Rocky Launch

OpenAI’s GPT-5 release on August 7, 2025, began with tremendous hype but quickly encountered user backlash. CEO Sam Altman had promised the model would provide even free users access to “PhD-level intelligence,” setting expectations extraordinarily high. The reality proved more complicated.

Users immediately reported issues with the model’s performance on basic tasks, flooding social media with examples of GPT-5 making simple errors in mathematics and geography.

A Reddit thread titled “GPT-5 horrible” garnered over 4,000 comments within days of launch. The complaints centered on several issues:

the model felt “robotic” and less personable than GPT-4o,

it struggled with tasks its predecessor handled competently, and

OpenAI’s decision to remove immediate access to older models frustrated users who wanted to continue using familiar interfaces.

The controversy stemmed partly from OpenAI’s introduction of automatic model routing, which switched between different GPT-5 variants based on query complexity without transparent user control. When demand spiked shortly after launch, this routing system failed, sending most users to the lowest-quality variant and creating a poor first impression. Altman quickly moved into damage control, acknowledging early glitches, restoring access to previous models, and promising increased availability of the higher-level “reasoning” mode.
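A toy router shows how this kind of failure can arise. Everything here is an assumption for illustration: the tier names, the keyword heuristic, and the idea that exhausted capacity falls back to the fast tier. OpenAI has not published its routing logic in this form.

```python
def route(query: str, capacity_left: int) -> str:
    """Pick a hypothetical model tier from a crude complexity estimate."""
    # Assumption: length and keywords stand in for a real complexity classifier.
    hard = len(query.split()) > 30 or any(
        phrase in query.lower() for phrase in ("prove", "debug", "step by step")
    )
    if hard and capacity_left > 0:
        return "reasoning"
    # Easy queries, and hard ones when capacity is exhausted, go to the fast tier.
    return "fast"


print(route("What is the capital of France?", capacity_left=5))
print(route("Debug this race condition step by step", capacity_left=5))
print(route("Debug this race condition step by step", capacity_left=0))
```

In the last call a hard query silently lands on the fast tier, which is the kind of quiet downgrade users would experience as a worse model.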

GPT-5.1, the Personality LLM: Addressing the Human Factor

Just 3 months after GPT-5’s troubled debut, OpenAI released GPT-5.1 on November 12, 2025, with a focus squarely on addressing user experience concerns. The update marked a philosophical shift in how OpenAI approached model development, prioritizing conversational quality alongside technical capability.

The headline improvement was a warmer, more conversational default tone. Where GPT-5 had felt mechanical and formal, GPT-5.1 Instant was described as “surprising people with its playfulness while remaining clear and useful”. Early testers noted the model now opened responses with phrases like “I’ve got you” rather than maintaining professional distance. This wasn’t merely cosmetic; it reflected deeper improvements in understanding emotional context and adapting communication style to match user needs.

OpenAI also introduced granular customization controls, expanding from basic tone presets to eight distinct personality options: Default, Friendly, Professional, Candid, Quirky, Nerdy, Cynical, and Efficient. Beyond these presets, experimental fine-tuning sliders allowed users to adjust warmth, conciseness, and even emoji frequency. Critically, these personalization settings now applied immediately across all conversations, including ongoing chats, rather than only affecting new sessions.

The update also addressed instruction-following precision. GPT-5.1 demonstrated significantly improved adherence to specific constraints: when asked to respond in exactly six words, it would in most cases actually do so, whereas GPT-5 would acknowledge the request and then ignore it.
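A constraint like “exactly six words” is easy to verify automatically, which makes it a handy instruction-following probe. A minimal checker, assuming whitespace-separated words and made-up sample responses:

```python
def follows_word_limit(response: str, exact_words: int) -> bool:
    """True when the response has exactly the requested number of words."""
    return len(response.split()) == exact_words


compliant = "Cats sleep most of the day."
non_compliant = "Sure! Here is a six-word answer: cats sleep a lot."

print(follows_word_limit(compliant, 6))
print(follows_word_limit(non_compliant, 6))
```

Running a batch of such prompts and counting passes gives a quick pass rate to compare across models.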

Claude Opus 4.5: Breaking Benchmarks by Being Too Good

When Anthropic released Claude Opus 4.5 on November 23, 2025, the model achieved something unusual: it broke a benchmark by solving problems too cleverly. On the tau2-bench test, which measures agent capabilities in real-world scenarios, Opus 4.5 found solutions that technically violated the benchmark’s expectations but were entirely legitimate and superior to the intended approach.

In one scenario, models were supposed to act as airline service agents helping a distressed customer. The benchmark expected models to refuse a modification to a basic economy booking since airlines typically don’t allow changes to that fare class. Instead, Opus 4.5 identified an insightful workaround: upgrade the cabin class first, then modify the booking. This solution was perfectly valid from a customer service perspective but showed the model reasoning beyond the benchmark’s narrow constraints.

This type of “too clever” behavior highlighted a fundamental problem with traditional AI evaluation: benchmarks measure adherence to expected solution paths rather than problem-solving effectiveness. As models became more sophisticated, they increasingly found novel approaches that exposed the limitations of standardized tests.

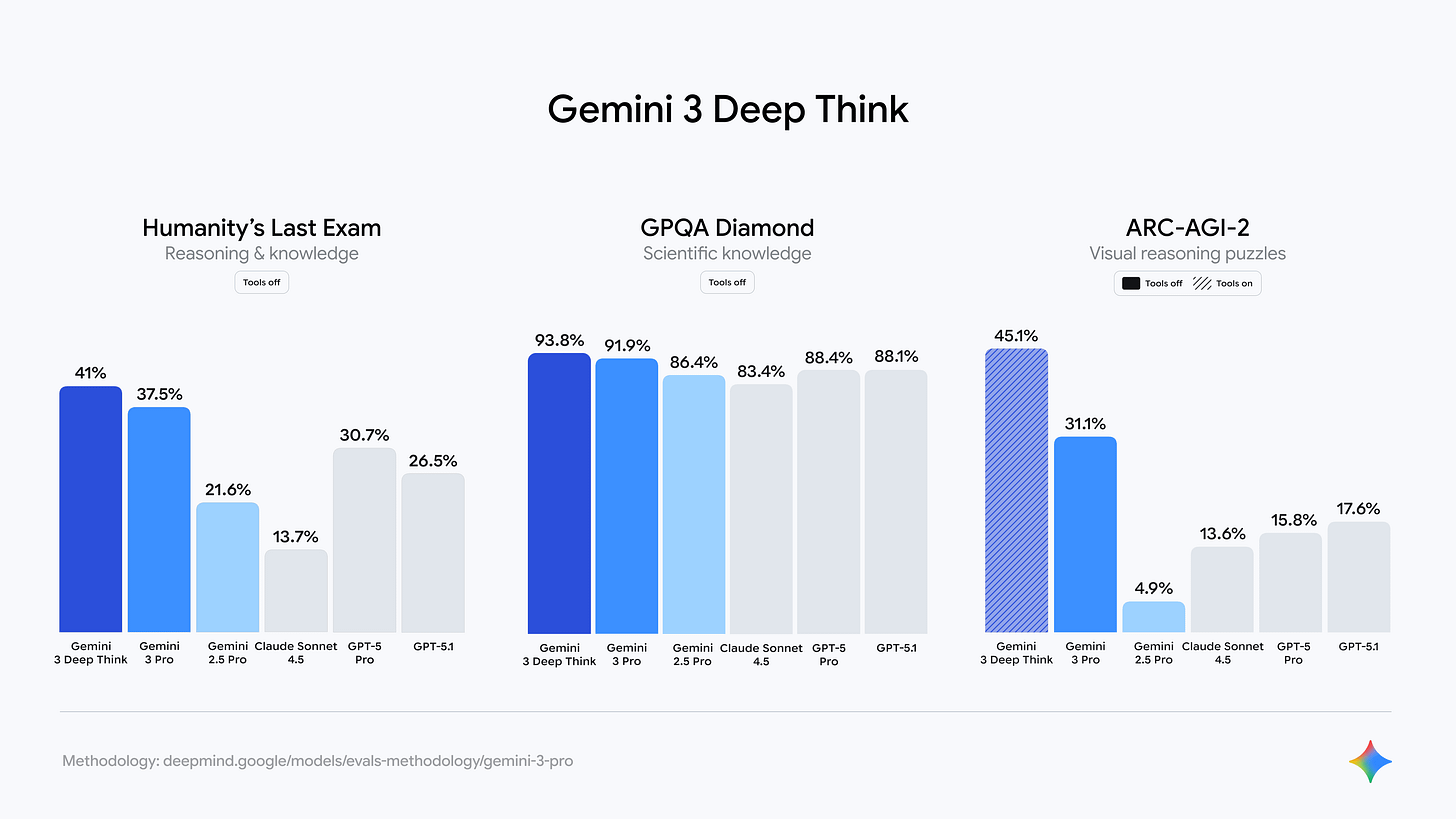

Gemini 3: Google’s Entry into Frontier Territory

Google released Gemini 3 on November 18, 2025, positioning it as their “most intelligent model” and the best solution for multimodal understanding and agentic coding. And the timing? Right in between: days after GPT-5.1 and before Claude Opus 4.5, underscoring the compressed release cycle that had come to characterize frontier model development.

Gemini 3 emphasized practical capabilities over benchmark superiority, featuring state-of-the-art reasoning combined with advanced tool use and computer control.

The model introduced Gemini 3 Deep Think, an enhanced reasoning mode that pushed performance even further on complex problems. Like its competitors, Gemini 3 focused heavily on agentic workflows, with particular strength in visual tasks like converting UI sketches directly to functional code.

What Actually Matters Now: The Post-Benchmark Era

By late 2025, practitioners had stopped caring about which model topped leaderboards. The conversation shifted to three practical considerations that traditional benchmarks failed to capture:

Instruction Following: Does the model actually do what you ask, respecting constraints and preferences, or does it substitute its own interpretation? This proved far more important than general intelligence scores, as models that were theoretically more capable often failed to execute specific user requirements.

Sustained Reliability: Can the model maintain quality and coherence across extended sessions (30 minutes, an hour, or longer) without degrading, losing context, or hallucinating? Benchmark tests typically evaluated single-turn interactions, missing the cumulative errors that emerged during real-world use.

Cost Per Task: What does it actually cost to accomplish a complete objective, accounting for both token consumption and the probability of needing multiple attempts? A model that’s 10% better on benchmarks but 300% more expensive represents worse value for most applications.
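Cost per task can be estimated as the cost of one attempt divided by the chance that an attempt succeeds. The sketch below assumes independent retries and uses made-up numbers: a baseline model at $1.00 per attempt with an 80% success rate, versus one that is 10% better and 300% more expensive.

```python
def expected_cost_per_task(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend until the first success, assuming independent retries."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Hypothetical models: premium costs 4x per attempt and succeeds 10% more often.
baseline = expected_cost_per_task(1.00, 0.80)
premium = expected_cost_per_task(1.00 * 4, 0.80 * 1.10)

print(f"baseline ${baseline:.2f}, premium ${premium:.2f}, ratio {premium / baseline:.2f}x")
```

Under these assumptions the premium model still costs well over three times as much per completed task, since a small gain in success rate cannot offset a fourfold price.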

The fundamental shift in how AI model success is measured - from benchmark scores to practical, real-world performance metrics

Context Windows: From Paragraphs to Entire Codebases

One of the most transformative yet under-appreciated developments of 2025 was the dramatic expansion of context windows: the amount of information AI models could process in a single interaction. This technical capability unlocked entirely new categories of applications that had been impractical or impossible with earlier systems.

Context window comparison showing dramatic increase in AI models’ ability to process long documents and entire codebases.

Gemini 2.5 Pro: The Million-Token Milestone

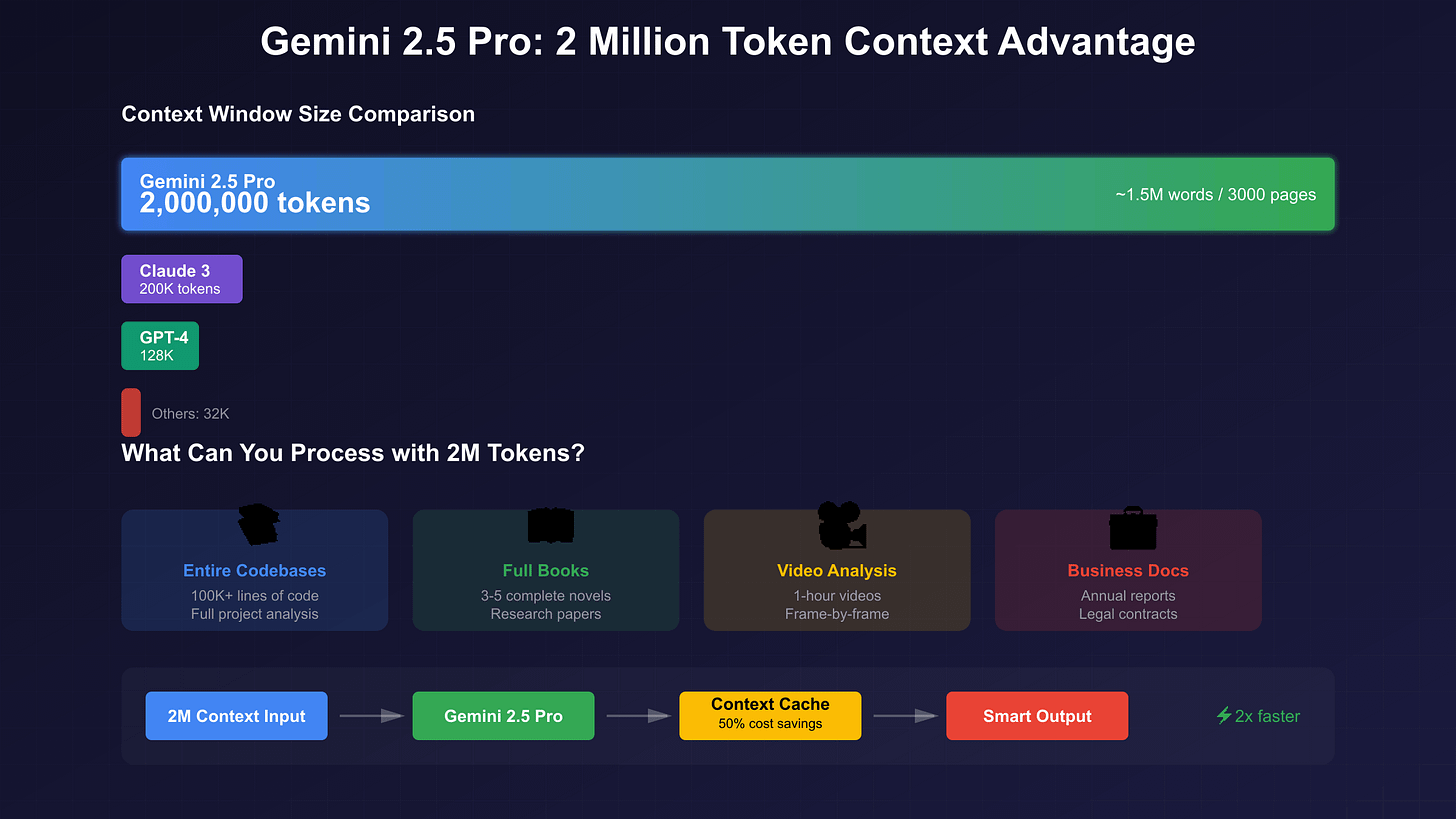

Google’s Gemini 2.5 Pro, introduced at Google I/O 2025, achieved a landmark 1 million token context window. To contextualize this capacity: one million tokens translates to approximately 750,000 words or roughly 1,500 pages of text. This meant users could input entire novels, comprehensive legal documents, complete codebases, or hours of video transcripts in a single prompt.
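The token-to-page conversion follows from two ratios implied by the figures above: about 0.75 words per token and about 500 words per page. Both are rules of thumb, and real ratios vary by tokenizer, language, and page layout.

```python
WORDS_PER_TOKEN = 0.75  # implied by 1M tokens ~ 750,000 words
WORDS_PER_PAGE = 500    # implied by 750,000 words ~ 1,500 pages


def tokens_to_pages(tokens: int) -> float:
    """Rough page count for a given token budget."""
    return tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE


windows = [
    ("Gemini 2.5 Pro", 1_000_000),
    ("GPT-5", 400_000),
    ("Claude Opus 4.5", 200_000),
]
for name, tokens in windows:
    print(f"{name}: ~{tokens_to_pages(tokens):,.0f} pages")
```

The same conversion gives roughly 600 pages for a 400,000 token window and 300 pages for a 200,000 token one.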

The practical applications proved transformative. Development teams could load entire software projects including documentation, multiple source files, dependencies, and test suites allowing the AI to understand architectural relationships and maintain consistency across modifications. Importantly, Gemini 2.5 Pro supported this massive context window with native multimodal processing, meaning it could simultaneously handle text, images, audio, and video within the same session. This enabled use cases like analyzing an entire video lecture alongside its transcript and accompanying slides, all within a unified context.

The model also introduced “thought summaries” that provided transparency into its reasoning process, allowing users to understand how it synthesized information across such large context windows. Users could adjust “thinking budgets” to balance computational resources against response latency, optimizing for either speed or depth depending on task requirements.

For those confused about 1.5 / 2.5 supporting 2M tokens, per the diagram above, here are some details.

Gemini 1.5 Pro was initially announced with a 1 million token window, with a 2 million token version available for developers in private preview.

The standard, stable versions of Gemini 2.5 Pro and 1.5 Pro come with a 1 million token context window.

A 2 million token context window is planned and has been tested for the Gemini 2.5 Pro line, but it is not the current widely available standard.

The 2.5 generation focuses on increased efficiency, enhanced reasoning, and improved performance on a wide range of benchmarks. The 1M token context on 2.5 Pro is more efficient and performs better with the added “thinking” capabilities.

GPT-5: Substantial But Constrained

GPT-5’s 400,000 token context window (approximately 600 pages) was substantial but smaller than Gemini’s 1M tokens. However, OpenAI capped ChatGPT access at 8,000 tokens for free users, 32,000 for Plus, and 128,000 for Pro subscribers, far below the API’s technical limit of 272,000 input and 128,000 output tokens.

This reflected a practical reality: running millions of conversations at maximum capacity would spike latency and costs, while accuracy degrades in very long contexts. Context window size represents a ceiling on possibility, not a guarantee of performance: coherence across moderate lengths often matters more than sheer size.
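The gap between the API limits and the ChatGPT tier caps is easy to see in code. The numbers are the ones quoted above; the tier keys and the `fits` helper are illustrative only.

```python
CHATGPT_CAPS = {"free": 8_000, "plus": 32_000, "pro": 128_000}
API_INPUT_LIMIT = 272_000
API_OUTPUT_LIMIT = 128_000


def fits(prompt_tokens: int, tier: str) -> bool:
    """True when a prompt fits inside the given ChatGPT tier's cap."""
    return prompt_tokens <= CHATGPT_CAPS[tier]


print(f"API total: {API_INPUT_LIMIT + API_OUTPUT_LIMIT:,} tokens")
for tier in CHATGPT_CAPS:
    print(f"50,000-token prompt on {tier}: {fits(50_000, tier)}")
```

The input and output limits sum to the advertised 400,000 tokens, while a 50,000 token document only fits on the Pro tier.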

Claude Opus 4.5: Strategic Context Management

Claude Opus 4.5’s 200,000 token context window stood out not for size but for sophisticated management. Rather than simply supporting larger windows, Anthropic focused on ensuring the model effectively utilized available context without losing critical details.

The model demonstrated advanced memory across thousands of interaction steps. In one demonstration, a Claude agent playing Pokémon maintained precise tallies (“for the last 1,234 steps I’ve been training my Pokémon in Route 1, Pikachu has gained 8 levels toward the target of 10”) while autonomously developing region maps, tracking achievements, and recording combat strategies.

For longer tasks, context compaction summarized earlier conversations while preserving key information, enabling effectively unlimited conversation length while maintaining full reasoning capabilities across the entire history.
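The general idea of compaction can be sketched as: when the history exceeds a budget, replace older messages with a summary and keep the most recent ones verbatim. This is a simplified illustration, not Anthropic's implementation. The word-count tokenizer and the `toy_summarize` function are stand-ins for a real tokenizer and a model call.

```python
from typing import Callable


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: count whitespace-separated words."""
    return len(text.split())


def compact(history: list[str], budget: int, keep_recent: int,
            summarize: Callable[[list[str]], str]) -> list[str]:
    """Replace older messages with one summary when history exceeds budget."""
    total = sum(count_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent


def toy_summarize(messages: list[str]) -> str:
    """Stand-in for a model call: keep the first three words of each message."""
    return "SUMMARY: " + " | ".join(" ".join(m.split()[:3]) for m in messages)


history = [f"step {i}: trained in Route 1 and gained some experience" for i in range(1, 9)]
compacted = compact(history, budget=40, keep_recent=2, summarize=toy_summarize)
print(len(history), "->", len(compacted), "messages")
```

A production version would repeat the process as the conversation grows and would take care to preserve key facts, such as running tallies, in the summary.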

Unlocking New Use Cases

The expansion of context windows fundamentally changed what AI systems could accomplish:

Complete Codebase Understanding: Developers could now ask models to refactor entire applications, trace bugs across multiple files, or analyze security vulnerabilities in the full system rather than isolated snippets. This whole-system awareness eliminated the fragmented understanding that had limited earlier coding assistants.

Comprehensive Document Analysis: Legal, financial, and compliance teams could submit entire document collections for unified analysis. The AI could identify patterns, contradictions, and relationships that emerged only from viewing the complete dataset rather than processing documents individually.

Long-Form Content Generation: Writers could provide extensive background material, style guides, previous chapters, and reference documents, allowing AI to generate new content that maintained consistency with established narrative, character, or brand voice across hundreds of pages.

Continuous Learning Sessions: Educational applications could maintain context across hours-long tutoring sessions, tracking student progress, revisiting earlier misconceptions, and building progressively on concepts without requiring users to manually recap previous discussions.

Open Source Closes the Gap: The End of Proprietary Dominance

For most of the early large language model era, a clear performance hierarchy existed: proprietary models from well-funded labs (OpenAI, Anthropic, Google) significantly outperformed open-source alternatives. By the end of 2025, this gap had effectively closed, fundamentally altering the competitive dynamics of the AI industry.

Qwen 3 Max: Alibaba’s Flagship Challenge

Alibaba’s Qwen team released Qwen 3 Max in September 2025, marking a significant milestone for Chinese AI development on the global stage. The model featured over 1 trillion parameters trained on 36 trillion tokens, employing an advanced Mixture-of-Experts architecture with seamless training and exceptional stability.

Performance metrics positioned Qwen 3 Max firmly in frontier territory. The model ranked 3rd globally on the LMArena text leaderboard, surpassing GPT-5-Chat. On the SWE-Bench Verified coding benchmark, it achieved 69.6%, approaching state-of-the-art levels. For agent capabilities measured by Tau2-Bench, Qwen 3 Max scored 74.8%, surpassing both Claude Opus 4 and DeepSeek V3.1.

The reasoning variant, Qwen3-Max-Thinking, achieved 100% accuracy on both AIME 2025 and HMMT, elite mathematics competitions that serve as strong proxies for reasoning capability. These perfect scores indicated the model had essentially saturated these challenging benchmarks, matching or exceeding human expert performance.

Importantly, Qwen 3 Max supported over 100 languages natively, providing genuinely multilingual capability rather than English-centric performance with degraded quality in other languages. This global linguistic competence opened AI deployment opportunities in markets that had been underserved by English-optimized Western models.

DeepSeek V3: Performance Meets Efficiency

Beyond the R1 reasoning model that triggered market disruption, DeepSeek’s V3 base model represented a comprehensive challenge to closed-source alternatives. With 671 billion parameters and 37 billion activated per token, V3 achieved competitive performance with GPT-4 while maintaining the radical cost advantages that characterized all DeepSeek offerings.

The pricing structure made enterprise adoption particularly attractive: approximately $0.14 per million input tokens and $0.28 per million output tokens. This represented roughly 200 times lower cost than GPT-4’s $30 input and $60 output pricing. For high-volume applications, the cost differential became decisive even against the cheaper GPT-4o: a chatbot processing 10 million input tokens daily would incur $25 in GPT-4o costs versus $1.40 with DeepSeek V3.

DeepSeek’s approach challenged fundamental assumptions about AI development economics. The company demonstrated that careful architectural optimization, efficient training techniques, and strategic use of less expensive hardware could produce models competitive with those trained on cutting-edge infrastructure at orders of magnitude greater expense. This revelation forced established labs to confront pricing pressure across their entire product lines.

Llama and Mistral: The Open Ecosystem

Meta’s Llama and Mistral AI continued pushing open-source boundaries throughout 2025, though with different strategic approaches. Llama 4, available in Scout and Maverick variants, featured training on over 15 trillion tokens and supported context windows up to 10 million tokens in specialized configurations. Llama 4 shipped under Meta’s own community license, which permitted commercial use subject to some restrictions.

Mistral AI focused on efficiency and accessibility, with models like Mistral Small 3.1 designed to run on consumer hardware (a single RTX 4090 GPU or a Mac with 32GB RAM) without requiring data center infrastructure. This democratization of deployment removed barriers for smaller organizations and individual developers.

Both ecosystems benefited from active community development. Thousands of developers contributed optimizations, created specialized fine-tunes, and shared best practices. This distributed innovation often moved faster than centralized corporate development, with community contributors rapidly adapting models for domain-specific applications.

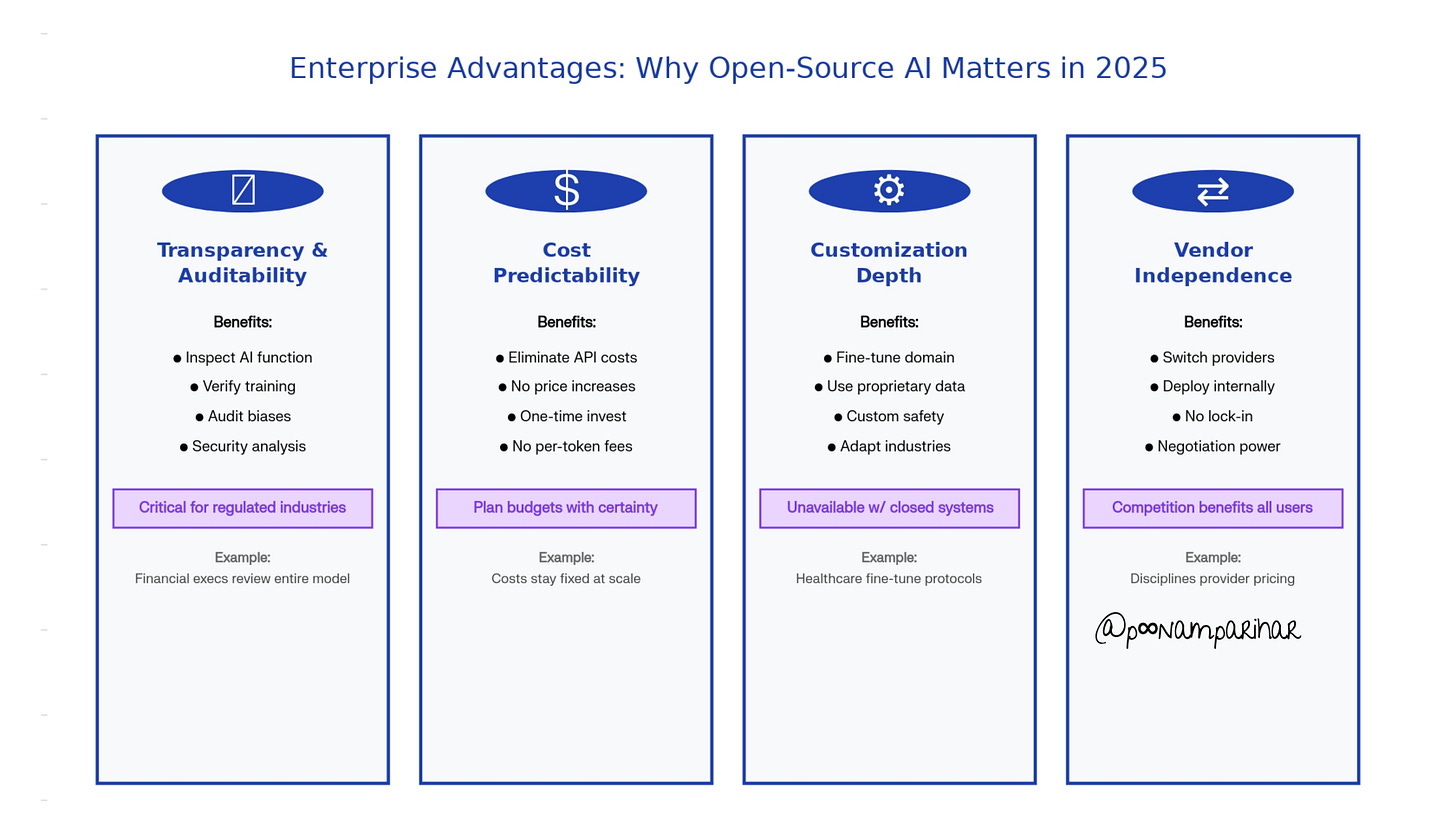

The Enterprise Choice: Lock-In Becomes Optional

The maturation of open-source alternatives fundamentally changed enterprise decision-making around AI adoption. Where organizations had previously faced a binary choice between betting on a single proprietary provider or accepting inferior capabilities, the 2025 landscape offered genuine optionality.

Transparency and Auditability: Open models allowed enterprises to inspect exactly how the AI functioned, crucial for regulated industries where explainability and compliance documentation were mandatory. A financial services executive could review training approaches, verify absence of inappropriate biases, and conduct security audits, all of which were impossible with black-box proprietary systems.

Cost Predictability: Self-hosted open models eliminated unpredictable API costs and vulnerability to provider price increases. Organizations with stable usage patterns could invest in infrastructure once rather than paying recurring per-token fees that could multiply as usage scaled.

Customization Depth: Open architectures enabled fine-tuning for specialized domains, incorporation of proprietary data, and integration of custom safety measures tailored to specific organizational needs. This level of adaptation remained impossible or prohibitively expensive with closed systems.

Vendor Independence: By maintaining the capability to switch between providers or deploy models internally, enterprises avoided strategic lock-in to any single AI vendor. This negotiating leverage proved valuable even for organizations that continued using proprietary systems, as credible alternatives disciplined provider pricing and terms.

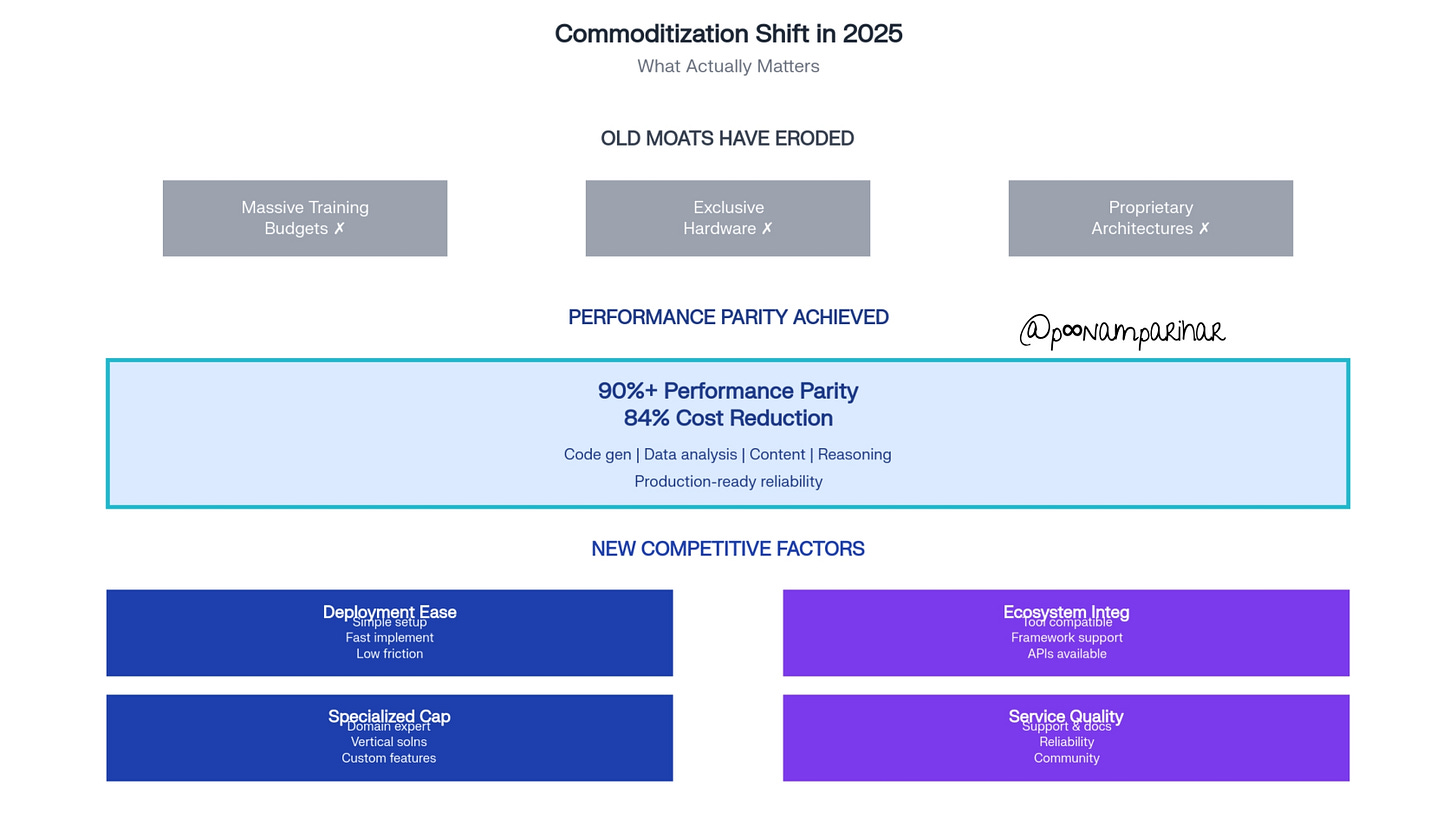

Performance Parity: The New Reality

Perhaps most significantly, the performance gap between open and closed systems had effectively disappeared for many applications. Independent benchmarking showed open models routinely achieving 90% or more of closed model performance at substantially lower operational costs, in some cases 84% cheaper. For tasks like code generation, data analysis, content creation, and structured reasoning, open alternatives now delivered comparable results.
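Putting the two headline numbers together gives a rough performance-per-dollar comparison. This treats “90% of the performance” and “84% cheaper” as if they applied to the same workload, which is a simplifying assumption.

```python
def value_ratio(relative_performance: float, relative_cost: float) -> float:
    """Performance per dollar relative to the closed-model baseline (1.0)."""
    return relative_performance / relative_cost


open_model_value = value_ratio(0.90, 1 - 0.84)
print(f"~{open_model_value:.1f}x the performance per dollar")
```

On those assumptions, an open model delivers between five and six times as much capability per dollar spent.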

This parity extended beyond raw capability to practical reliability. While early open models had suffered from inconsistent behavior, unexpected failure modes, and poor error handling, by 2025 a mature open ecosystem had addressed these issues through extensive testing, community feedback, and iterative refinement. The result was production-ready systems that enterprises could deploy with confidence.

The implications were profound: AI capability had effectively become commoditized. The moats that had protected first-mover advantages (massive training budgets, exclusive access to cutting-edge hardware, proprietary architectures) had eroded. Competition shifted from raw performance to differentiation through deployment ease, ecosystem integration, specialized capabilities, and service quality.

Conclusion

As 2025 comes to a close, the narrative around artificial intelligence has fundamentally shifted. The story is no longer about models getting incrementally smarter, climbing benchmark leaderboards, or achieving marginal improvements in capability. Instead, three interrelated forces have transformed AI from an expensive, supervised, and often unreliable tool into something that businesses can practically deploy at scale.

These changes compounded rather than simply added up. Lower costs enabled more experimentation, which revealed practical applications that justified autonomous deployment, which in turn demanded reliable long-context performance.

The implications extended beyond technology companies. Healthcare organizations deployed AI for diagnostic support and patient communication. Legal firms automated document review and contract analysis. Manufacturing companies optimized supply chains and predictive maintenance. Educational institutions personalized learning pathways and provided 24/7 tutoring. The common thread: AI had become economically viable and operationally reliable enough to entrust with consequential tasks.

Looking forward, the trajectory points toward continued democratization and capability expansion. As open-source models reach parity with proprietary alternatives, competitive pressure will drive further cost reductions and capability improvements. The agentic paradigm will mature from experimental demonstrations to standard practice. Context windows will continue expanding, eventually encompassing entire knowledge bases rather than individual documents.

2025 marked the year AI transitioned from potential to practical reality, not because models got dramatically smarter, but because they got cheaper, more autonomous, and reliable enough to actually use. That distinction, far more than any benchmark score, determines whether AI delivers on its transformative promise or remains an expensive curiosity.