Are You Fluent or Just Flashy? The Deep Tech AI Skills Divide - The 7 Deadly Sins

Part 1 of a 3-part series: What Real AI Fluency Looks Like Beyond the Buzzwords!

Most people see AI literacy as those short courses teaching trendy buzzwords or showing how to use a tool for ads and social media. But that’s just scratching the surface and not even close to real AI fluency; it covers maybe only 5-10% of what’s actually useful. True AI fluency goes much deeper: it’s about working with AI to solve everyday problems, thinking critically about what it tells you, and knowing how to use it in real work.

You’ll also see a lot of “AI experts” online. Some are genuinely experienced and want to help, but many are simply cashing in with flashy courses or social media tips. Just because someone knows the latest keywords or tools or can run an automated workflow doesn’t mean they understand how AI works behind the scenes, or what really makes it valuable. Real AI skills go beyond marketing talk: they require knowing the tech, understanding its limits, and using it smartly for real impact. If all you know are a few tricks or keywords, you’re missing the bigger picture. AI fluency means knowing how, when, and why to put AI to work. It means you can apply, adapt, and innovate. The real challenge is moving from surface skills to deep understanding, which is where genuine AI fluency begins.

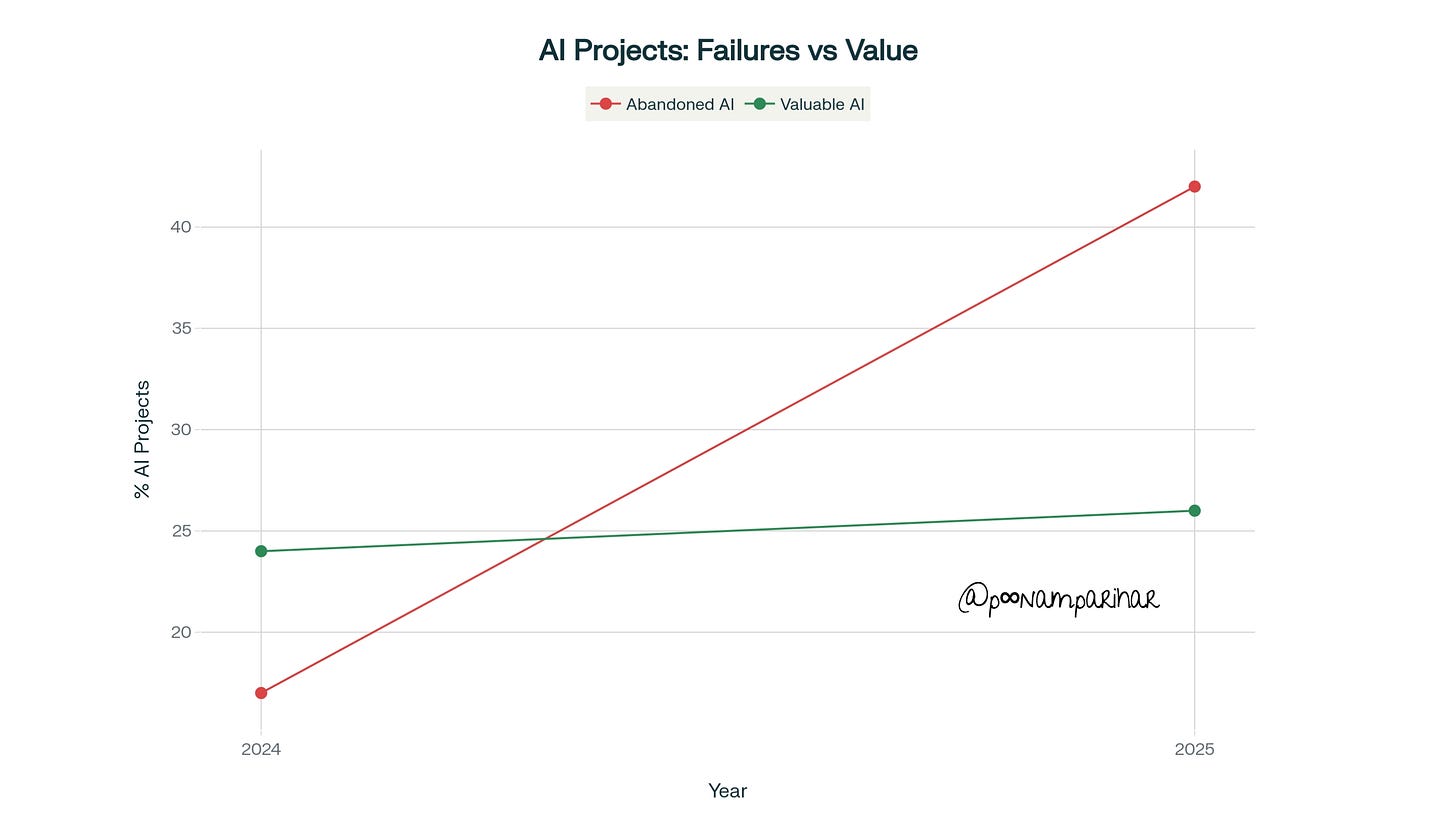

At a moment when artificial intelligence promises to reshape entire industries, a sobering reality confronts organizations worldwide: 42% of companies abandoned most of their AI initiatives in 2025, up from just 17% in 2024. Even more striking, between 70% and 85% of generative AI deployments fail to meet their expected return on investment. These failures stem not from technological limitations but from a more fundamental deficit: the absence of AI fluency combined with deep tech mastery.

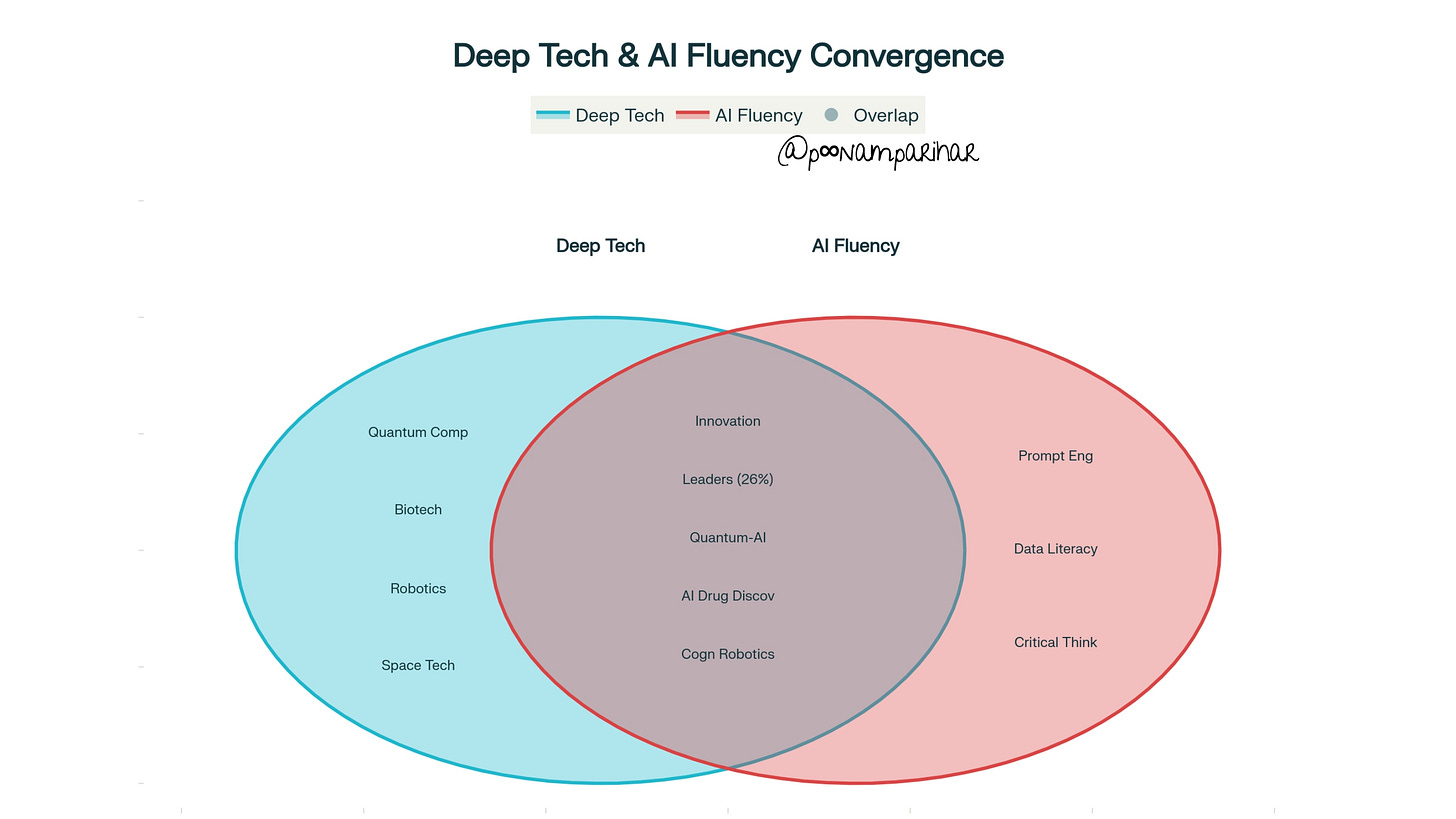

The intersection of AI fluency and deep tech represents the next frontier of competitive advantage. While AI fluency empowers individuals to work effectively with artificial intelligence systems, deep tech encompasses the scientific breakthroughs and engineering innovations that solve humanity’s most complex challenges. Organizations that master both dimensions, understanding how to leverage AI responsibly while navigating the lengthy development cycles and capital-intensive nature of deep tech, position themselves among the elite 26% achieving tangible value from their AI investments.

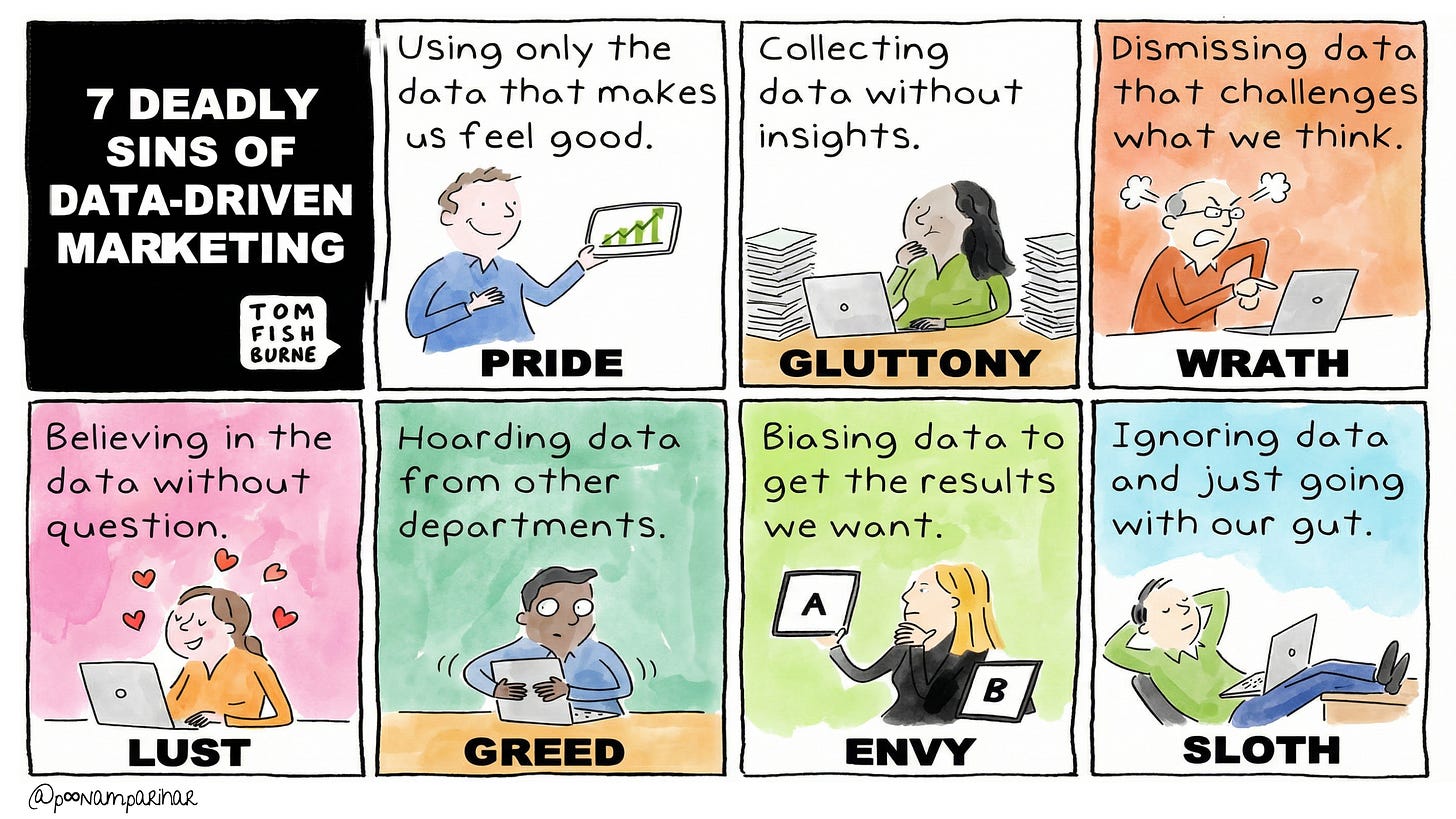

The other day I was looking at Tom Fishburne’s “Seven Deadly Sins of Data-Driven Marketing” illustration, and I thought: this doesn’t just apply to marketing. If we bring deep tech and AI fluency together, the framework illuminates the critical missteps that sabotage innovation and the proven strategies that drive transformational success.

In the sections ahead, we’ll dive into what real AI fluency looks like when paired with cutting-edge deep tech, the mistakes organizations make, and how you can build lasting, practical skills to truly lead in this new era.

Defining Deep Tech in the Modern Innovation Landscape

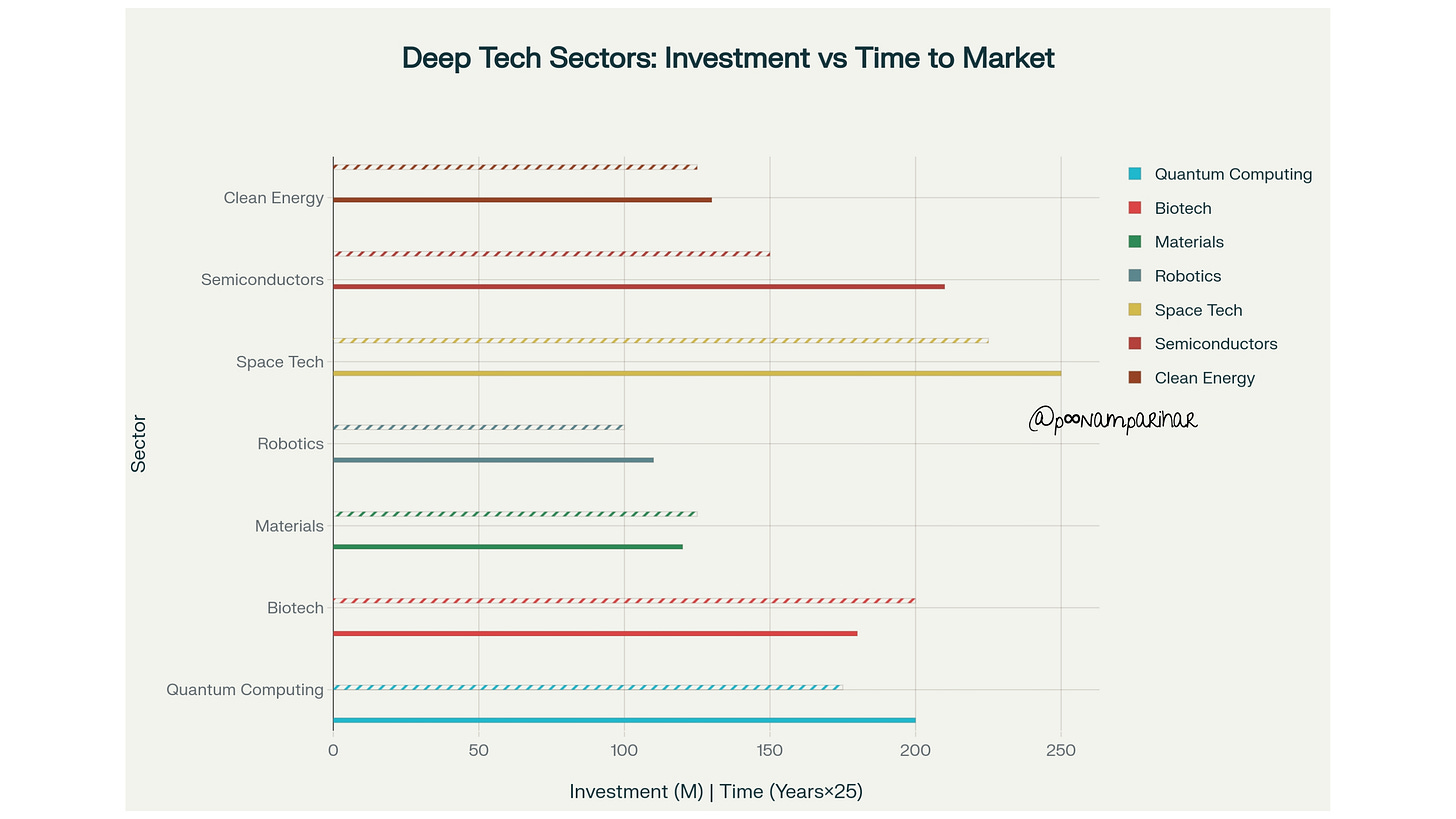

Deep tech refers to technologies built on substantial scientific research or meaningful engineering innovation, characterized by lengthy development cycles (typically 5-10 years), high capital requirements, and high barriers to entry. Unlike typical software startups that can iterate rapidly with minimal capital, deep tech ventures tackle fundamental challenges in fields ranging from quantum computing and biotechnology to advanced materials and space technology.

The European Deep Tech Talent Initiative identifies fifteen critical sectors including

quantum computing,

biotechnology,

artificial intelligence,

robotics,

semiconductors,

clean energy, and

brain-computer interfaces.

These technologies share common attributes: they emerge from years of research, create defensible intellectual property, and possess the potential to transform entire industries while addressing global challenges like climate change and healthcare accessibility.

Hardware-focused deep tech startups deliver a gross internal rate of return of 27%, significantly outperforming software counterparts at 13%, challenging the long-standing venture capital preference for software investments. This paradigm shift reflects deep tech’s inherent defensibility: patents, domain expertise, and physical infrastructure create sustainable competitive advantages that pure software businesses struggle to replicate.

The AI Fluency Imperative

AI fluency transcends basic digital literacy, representing the ability to understand, work with, and strategically integrate artificial intelligence technologies into decision-making, problem-solving, and business processes.

As of February 2025, the EU AI Act mandates AI literacy for all employees working with AI systems, transforming fluency from a competitive advantage into a regulatory requirement.

Research from the Georgia Institute of Technology identifies over a dozen competencies comprising AI literacy, including

recognizing AI’s strengths and limitations,

understanding how AI decisions are made,

detecting AI bias, and

evaluating ethical implications.

Yet despite this imperative, 52% of workers report not knowing how to use AI effectively, and nearly half of businesses surveyed have had fewer than five hours of AI training.

The consequences of this skills gap manifest dramatically: MIT analysis reveals that 95% of GenAI pilots fail because companies attempt to eliminate the very friction that generates value. Organizations pursuing generic AI tools achieve high adoption but low transformational impact, while the successful 5% invest in custom-built enterprise solutions that embrace necessary complexity.

Technology Convergence as the Fourth Wave of Innovation

The convergence of deep tech domains previously considered unrelated defines the current innovation landscape. The World Economic Forum’s 2025 Technology Convergence Report identifies AI as the primary catalyst, acting as connective tissue that enhances nearly every domain it touches, from optimizing spatial environments through digital twins to enabling decentralized decision-making through agentic systems.

This convergence manifests in breakthrough applications.

Cognitive robotics combines agentic AI, spatial intelligence, and robotic systems to enable intelligent, autonomous action in complex environments. Hybrid quantum-classical computing harnesses quantum power while anchoring it in classical reliability for practical applications in finance and molecular simulation. Materials informatics employs predictive models to virtually test material combinations before laboratory synthesis, dramatically accelerating R&D cycles.

China’s 14th Five-Year Plan exemplifies strategic convergence, listing quantum information and brain-like intelligence in the same policy paragraph to explicitly tie quantum research to artificial general intelligence ambitions. This integrated approach recognizes that breakthrough innovations emerge not from isolated technologies but from their synergistic combination.

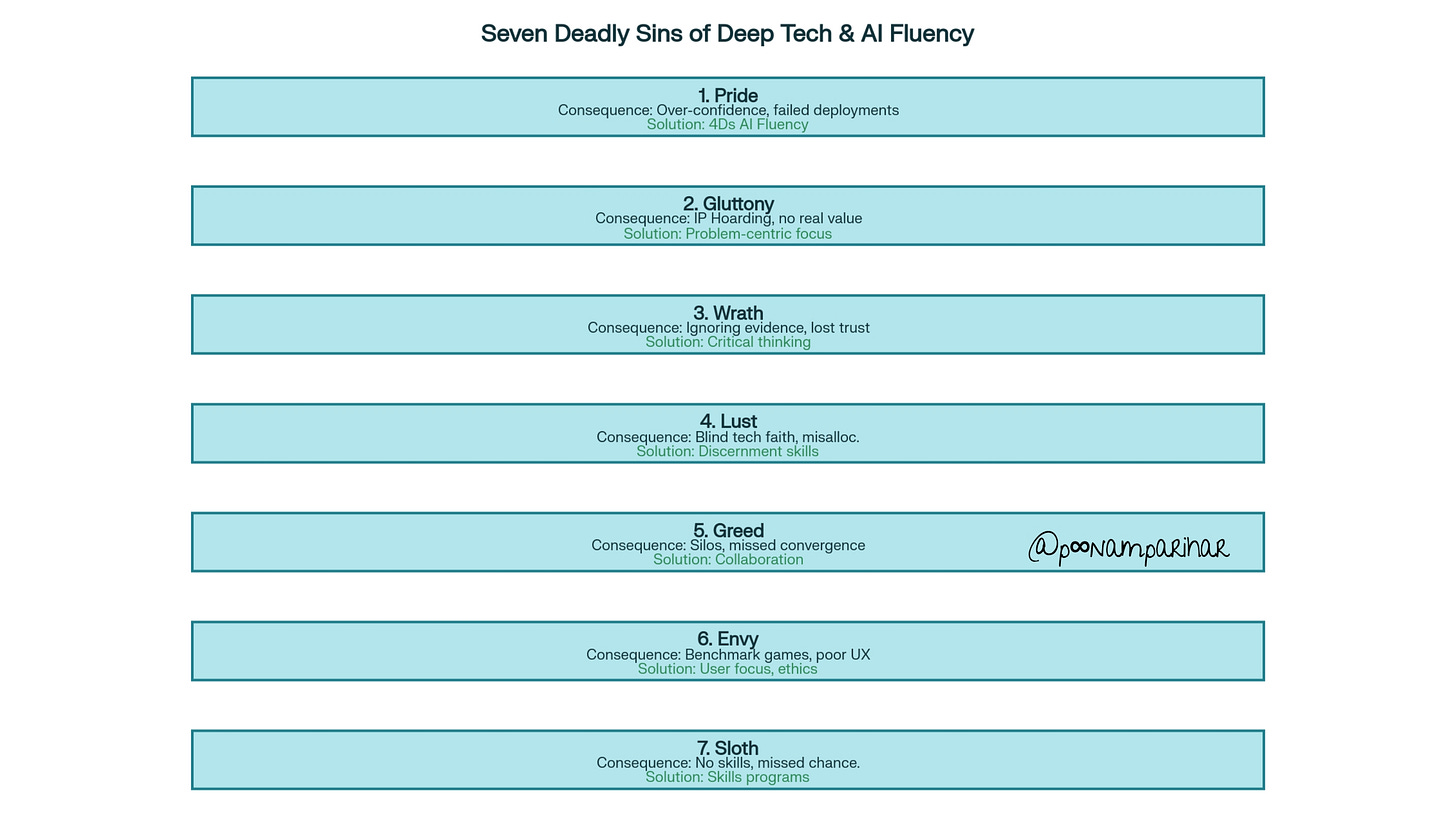

The Seven Deadly Sins Framework: Deep Tech and AI Fluency Edition

Sin #1: Pride

Over-Confidence in AI Capabilities Without Understanding Technical Limitations

Pride manifests when organizations deploy AI systems with insufficient understanding of their technical constraints, limitations, and failure modes. This deadly sin appears when leaders showcase impressive AI demos that fail catastrophically in production environments, or when teams prioritize AI adoption speed over comprehension.

The impact proves devastating: S&P Global Market Intelligence reports that the share of companies abandoning most AI initiatives jumped from 17% in 2024 to 42% in 2025, with the average organization scrapping 46% of AI proof-of-concepts before reaching production. These failures often stem from over-estimating AI’s current capabilities while under-estimating the human expertise required for successful deployment.

In deep tech contexts, pride drives organizations to pursue quantum computing or AI-driven drug discovery without the foundational scientific expertise to evaluate feasibility. MIT’s analysis reveals that sanctioned GenAI pilots appear polished in presentations but fail in real-world applications due to inability to retain context or handle edge cases.

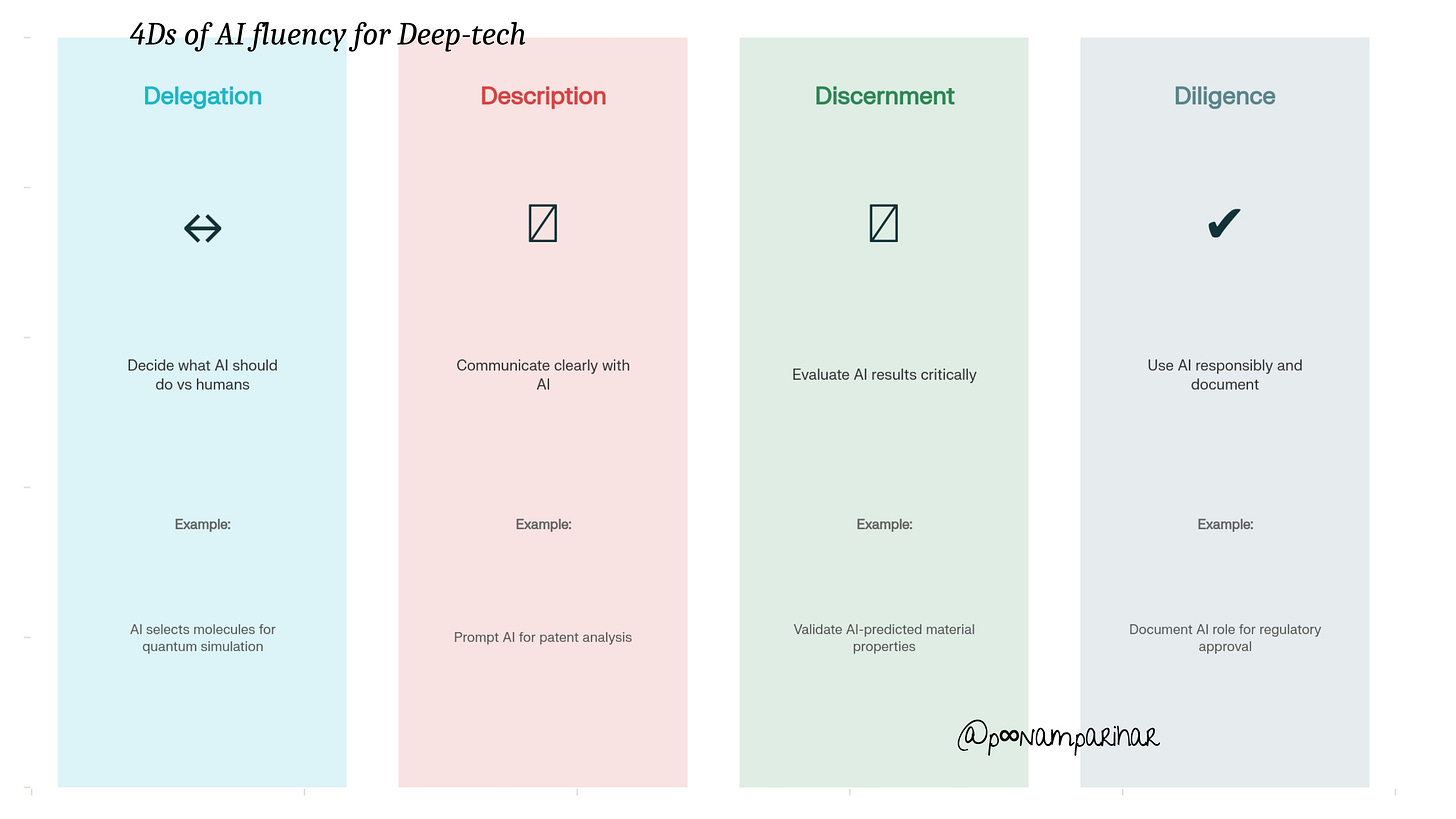

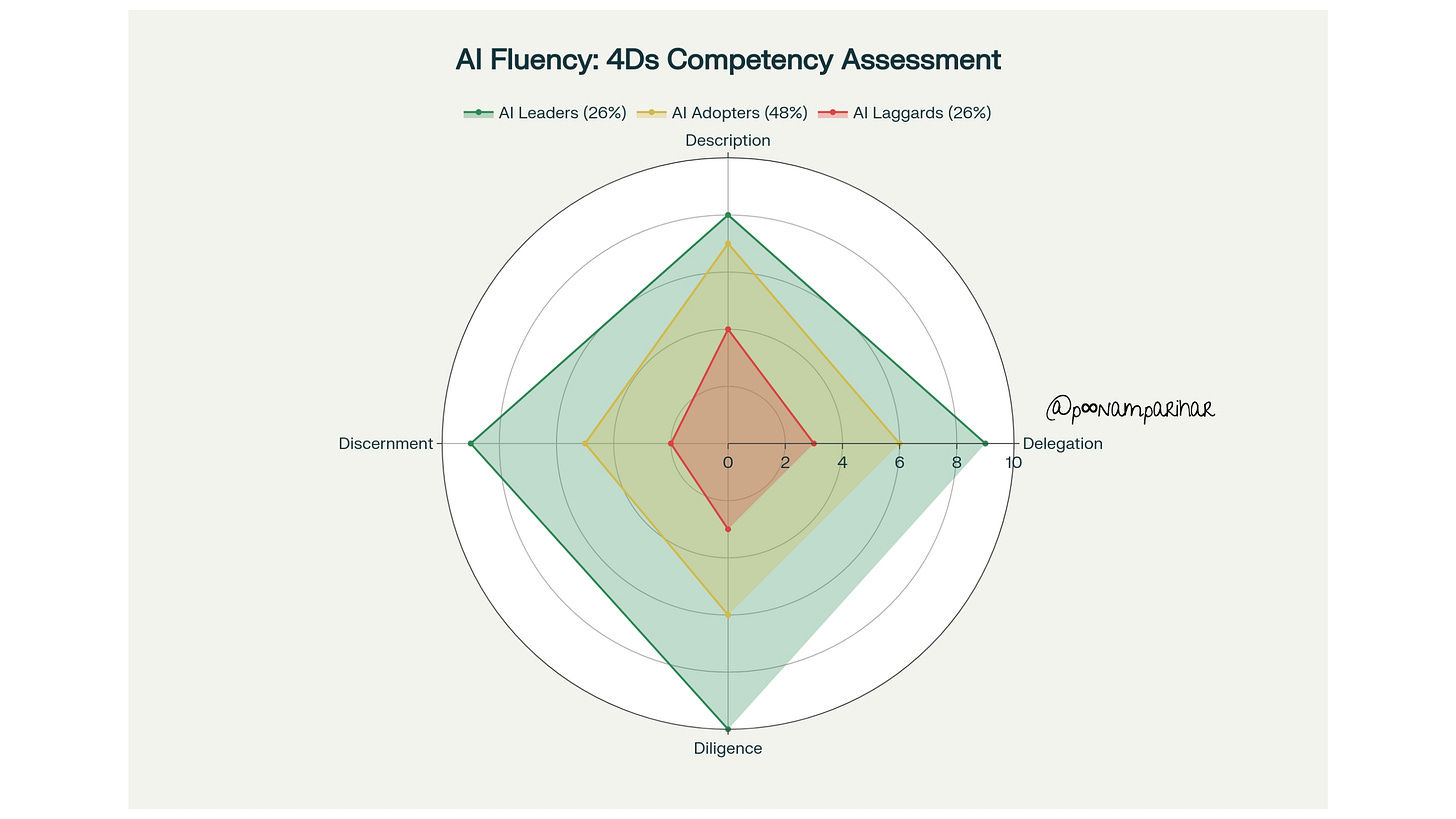

Solution: The 4Ds Framework for AI Fluency

Anthropic’s AI Fluency Framework provides an antidote through four core competencies—Delegation, Description, Discernment, and Diligence (the “4Ds”). These competencies enable practitioners to make appropriate decisions about when and how to use AI tools, effectively communicate desired outputs, accurately assess quality, and ensure ethical practice.

Delegation requires deciding what work to do independently, what to do collaboratively with AI, and what to let AI handle autonomously. This competency begins with problem awareness (clearly understanding goals before involving AI) and platform awareness (comprehending capabilities and limitations of different systems). In quantum computing research, effective delegation means using AI to pre-screen molecular structures before expensive quantum simulations, rather than blindly automating all analysis.

The Financial Times implemented a company-wide progression framework moving employees from “AI Beginner” to “AI Fluent” across four domains: Tools, Productivity & Innovation, Critical Thinking, and Ethics. This structured approach acknowledges that AI fluency develops gradually through deliberate practice, peer learning, and continuous education rather than assuming instant competence.

Sin #2: Gluttony

Hoarding Deep Tech Patents and Research Without Practical Application

Gluttony in the deep tech context represents the excessive accumulation of intellectual property, research publications, and technical capabilities without translating them into commercial products or societal value. Organizations guilty of this sin measure success by patent counts and R&D budgets rather than real-world impact.

Innovation Valley of Death

This sin perpetuates what investors call the “Innovation Valley of Death”: the gap between research breakthroughs and market-ready products that claims many deep tech ventures. Deep tech startups face 5-10+ year development cycles, and without deliberate focus on commercialization pathways, even promising technologies languish in laboratory limbo.

The consequences extend beyond wasted capital. When deep tech remains siloed in research institutions or corporate labs, society forgoes the transformative benefits these innovations could deliver. Carbon capture technologies that never leave pilot stage, quantum algorithms that never process real-world data, and biotechnology breakthroughs that never reach patients represent tragic examples of gluttony’s toll.

Solution: Problem-Centric Approaches and Real-World Applications

The antidote to gluttony lies in adopting problem-centric rather than technology-centric development models. Deep tech ventures must begin by identifying pressing challenges and unmet needs in business and the broader economy, then assess whether cutting-edge technologies can meaningfully address them.

Moderna’s partnership with IBM exemplifies this approach. Rather than pursuing quantum computing for its own sake, Moderna identified mRNA secondary structure prediction as a critical bottleneck in their development pipeline - a computationally intractable problem for classical computers. They strategically applied quantum algorithms (CVaR VQE) to this specific challenge, benchmarking performance against classical solvers and establishing clear criteria for success.

Building AI fluency requires addressing real-world challenges with practical applications. In IT operations, AI analyzes server logs to predict and prevent system failures, ensuring smoother workflows and reducing downtime. In healthcare, AI processes pathology scans to increase pathologist productivity, accelerate diagnosis, and reduce errors in pilot studies.

Organizations must also prioritize adaptability in their deep tech systems, designing them to evolve based on user feedback and changing needs. A quantum computing platform that cannot integrate with existing workflows or adapt to new use cases exemplifies gluttony: impressive technical achievement divorced from practical utility.

Sin #3: Wrath

Ignoring Technical Feasibility Data When Pursuing Ambitious Deep Tech Visions

Wrath emerges when organizations dismiss or suppress technical feasibility assessments that challenge their preferred narratives. This sin manifests in leadership teams that punish bearers of bad news about AI limitations, or in research teams that cherry-pick data to support predetermined conclusions about their deep tech innovations.

The business impact proves severe: 70-85% of GenAI deployments fail to meet ROI expectations, often because organizations ignored fundamental issues with data quality, governance structures, and technical infrastructure. BCG research reveals that approximately 70% of AI implementation challenges stem from people and process issues, 20% from technology problems, and only 10% from AI algorithms, yet organizations disproportionately focus resources on the algorithmic challenges.

In deep tech ventures, wrath leads to pursuing technically impossible projects or continuing investments in approaches that evidence has invalidated. When quantum computing startups ignore decoherence data, or when biotechnology companies dismiss negative trial results, they waste precious resources while eroding stakeholder trust.

Solution: Critical Thinking, Data Literacy, and Balanced Assessment

Overcoming wrath requires cultivating organizational cultures that reward evidence-based decision-making and critical evaluation. AI fluency demands critical thinking and data literacy—the ability to interpret data to derive meaningful insights, assess the accuracy and reliability of AI outputs, and make data-driven decisions.

Discernment, the third “D” of the AI Fluency Framework, provides the competency structure for critical evaluation. It encompasses three dimensions:

product discernment (evaluating output quality for accuracy and relevance),

process discernment (examining how AI arrived at conclusions), and

performance discernment (assessing whether AI’s communication style effectively serves user needs).

Materials scientists at companies employing AI for materials discovery exemplify proper discernment by verifying AI-predicted material properties through experimental validation before committing to manufacturing. This iterative cycle of prediction and validation prevents costly errors while building confidence in AI recommendations.

Balancing technical innovation with commercial viability requires what venture capitalists call “de-risking.” Deep tech investment firm Deepbright Ventures emphasizes the importance of Technology Readiness Level (TRL) progression—measurable technical advancement that demonstrates a startup is systematically reducing technical risk. Organizations must establish clear metrics for assessing both technical feasibility and market viability, refusing to advance projects that cannot meet evidence-based thresholds.
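One way to picture an evidence-based threshold is as a stage gate that a project must pass before it receives further investment. The Python sketch below is illustrative only: the `Project` fields, the default minimum TRL of 4, and the requirement of three validated use cases are hypothetical placeholders, not figures from Deepbright Ventures or any other source cited here.

```python
from dataclasses import dataclass


@dataclass
class Project:
    name: str
    trl: int                  # Technology Readiness Level on the standard 1-9 scale
    validated_use_cases: int  # hypothetical proxy for market viability


def passes_gate(project: Project, min_trl: int = 4, min_use_cases: int = 3) -> bool:
    """Advance only if technical AND market evidence both meet the thresholds."""
    if not 1 <= project.trl <= 9:
        raise ValueError("TRL must be between 1 and 9")
    return (
        project.trl >= min_trl
        and project.validated_use_cases >= min_use_cases
    )
```

The point of the design is that neither dimension can compensate for the other: a technically mature project with no validated demand is held back just like a popular idea that is still at the concept stage.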

The successful 26% of companies achieving AI value target meaningful outcomes on cost and revenue, prioritize core function transformation over diffuse productivity gains, and invest strategically in a few high-priority opportunities to scale. They pursue on average only half as many AI initiatives as less successful peers, demonstrating the discipline to focus resources where technical and commercial feasibility align.

Sin #4: Lust

Blind Faith in Quantum Computing or AI to Solve All Problems

Lust represents the uncritical infatuation with cutting-edge technologies, manifesting when organizations treat quantum computing, generative AI, or other deep tech innovations as universal solutions applicable to every challenge. This sin appears in technology roadmaps that list AI or quantum as solutions before identifying the problems they should address.

Real-world examples abound.

The 2024 failures of two hardware AI assistants, Humane’s Ai Pin and the Rabbit R1, demonstrate lust’s consequences. Both products attempted to solve problems that didn’t actually exist, offering wearable ChatGPT interfaces that users neither needed nor wanted. Critical reviews revealed slow, buggy performance, yet the companies proceeded to market based on technological enthusiasm rather than validated user needs.

MIT’s research reveals that 37% of employees were more concerned than excited about AI in 2021, rising to 52% by 2023, while excitement declined from 18% to just 10%. This growing skepticism reflects experience with overhyped AI solutions that failed to deliver promised benefits, breeding cynicism that undermines legitimate AI initiatives.

In deep tech sectors, lust drives wasteful investments in quantum computing for applications where classical computers suffice, or AI drug discovery platforms that ignore the biological complexity their algorithms cannot capture. Organizations mesmerized by technological novelty neglect to ask the fundamental question:

Is this the right tool for this specific problem?

Solution: Discernment Skills and Understanding When AI Is the Right Tool

The antidote to lust begins with developing discernment—the ability to critically evaluate not just AI outputs but whether AI application is appropriate in the first place. Launch Consulting’s AI Ready program emphasizes helping employees understand “when and how to use AI” as a core learning objective, recognizing that knowing when not to use AI proves equally important.

Effective delegation, the first “D” of the AI Fluency Framework, requires determining the optimal division of labor between AI and humans while preserving human oversight where it matters most.

Enterprise architects must ask:

Which tasks are ripe for automation or augmentation?

When should human judgment override AI’s output?

What prompts will yield genuine value versus busywork?

BCG’s analysis of AI leaders reveals they focus on revenue-generation from AI (over one-third prioritize this) compared with only a quarter of other companies, and they target meaningful outcomes rather than chasing every AI opportunity. Decision-makers who chase every AI opportunity are likely to have more projects fail—knowing when AI is the right tool proves critical to avoiding wasted investments.

Qubit Pharmaceuticals demonstrates appropriate AI application in deep tech. Rather than claiming quantum computing or AI individually solve drug discovery, they created FeNNix-Bio1, a foundation model trained on quantum-accurate data from exascale supercomputers. This hybrid approach acknowledges that neither quantum nor classical AI alone suffices—the optimal solution combines their complementary strengths for reactive molecular dynamics at unprecedented scale.

Organizations must establish clear criteria for AI adoption:

Does this application leverage AI’s genuine strengths in pattern recognition, optimization, or prediction?

Do we have the data quality and volume to support AI?

Can we validate outputs effectively?

Is the problem well-defined with clear success metrics?

Answering “no” to any question should trigger reconsideration of AI’s appropriateness for that use case.
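The checklist above works as a simple gate: every question must be answered "yes" before AI is adopted. Here is a minimal Python sketch, in which the `AdoptionCheck` class and its field names are hypothetical labels for the four questions rather than part of any published framework:

```python
from dataclasses import dataclass, fields


@dataclass
class AdoptionCheck:
    """Answers to the four adoption questions (field names are illustrative)."""
    leverages_ai_strengths: bool  # pattern recognition, optimization, or prediction
    sufficient_data: bool         # data quality and volume can support AI
    outputs_validatable: bool     # outputs can be validated effectively
    problem_well_defined: bool    # clear success metrics exist


def failed_criteria(check: AdoptionCheck) -> list[str]:
    """Return the name of every criterion that was answered 'no'."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


def should_reconsider(check: AdoptionCheck) -> bool:
    """A single 'no' is enough to trigger reconsideration."""
    return bool(failed_criteria(check))
```

For example, a proposal with strong data but no practical way to validate outputs would be flagged, and `failed_criteria` would name `outputs_validatable` as the reason to revisit the use case.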

Sin #5: Greed

Siloed Deep Tech Development Without Cross-Functional Collaboration

Greed manifests when departments, research teams, or business units hoard data, expertise, and resources rather than sharing them for collective benefit. In deep tech organizations, this sin creates parallel efforts that duplicate work, miss opportunities for technology convergence, and prevent the cross-pollination essential for breakthrough innovation.

The deep tech landscape demands convergence: combining physical, biological, and digital sciences to solve systemic global challenges. Yet organizational structures often fragment these disciplines into separate silos that communicate poorly. When quantum physicists never interact with AI researchers, when biotechnology teams ignore materials science advances, or when hardware engineers develop robotics without input from AI specialists, organizations forfeit the synergistic possibilities that define modern deep tech success.

Data silos prove particularly pernicious. When marketing teams cannot access customer insights held by sales, when R&D data remains locked in research databases inaccessible to product development, or when different business units maintain incompatible data systems, organizations miss patterns and opportunities that integrated data would reveal.

The “Seven Deadly Data Sins” framework identifies this as failing to adequately share data programmatically, recognizing it as a barrier that prevents successful data strategy implementation.

Solution: AI Centers of Excellence and Cross-Functional Communities of Practice

Overcoming greed requires intentional organizational design that facilitates knowledge sharing and cross-functional collaboration. AI Centers of Excellence serve as central hubs for sharing AI use cases, toolkits, and prompt libraries, enabling departments to build on each other’s successes rather than starting from scratch.

These centers operate as force multipliers, allowing organizations to scale AI adoption efficiently. Rather than each department independently discovering best practices through trial and error, Centers of Excellence capture institutional knowledge and disseminate it broadly. They also facilitate communities of practice: regular meet-ups, workshops, and virtual forums where technical specialists and business experts co-create AI solutions.

The Financial Times’ approach exemplifies effective knowledge sharing. Their company-wide AI fluency framework emphasizes peer learning and collaborative workshops, recognizing that employees learn most effectively from colleagues facing similar challenges. This horizontal knowledge transfer accelerates capability building while fostering a culture where sharing expertise becomes the norm rather than the exception.

Deep tech convergence requires similar collaborative structures. Cognitive robotics combining agentic AI, spatial intelligence, and robotic systems demands collaboration between AI researchers, mechanical engineers, and domain experts. Hybrid quantum-classical computing requires quantum physicists to work alongside classical computer scientists and application developers. Organizations must create forums, incentive structures, and project teams that span traditional disciplinary boundaries.

Microsoft’s 1,000+ AI transformation case studies reveal that successful organizations embed AI literacy across all departments, from data analysts and marketing teams to customer service representatives and HR professionals. Each function brings unique domain expertise that, when combined with AI capabilities, unlocks innovations that siloed teams could never discover independently.

Sin #6: Envy

Manipulating Technical Benchmarks to Compete With Competitors Rather Than Serve Users

Envy drives organizations to prioritize competitive positioning over user value, manifesting when companies manipulate benchmarks, cherry-pick metrics, or design products primarily to match competitor features rather than address genuine user needs. This sin appears in AI leaderboards where models optimize for narrow benchmark performance that doesn’t translate to real-world utility.

The competitive AI landscape incentivizes this behavior. When organizations see competitors announce new AI capabilities or achieve impressive benchmark scores, envy compels them to match or exceed those numbers regardless of whether doing so serves their users. Marketing departments demand parity on every published metric, even when those metrics poorly correlate with actual business outcomes or user satisfaction.

In deep tech sectors, envy manifests as pursuing quantum supremacy demonstrations that lack practical applications, or developing AI drug discovery platforms primarily to match competitor capabilities rather than to solve specific therapeutic challenges. Companies become so focused on competitive positioning that they lose sight of the fundamental purpose: creating value for customers and society.

Solution: Focus on User Needs and Ethical AI Practices

The antidote to envy requires reorienting organizational focus from competitors to users.

Problem-centric approaches that begin with user pain points and unmet needs naturally resist the envy trap. When the primary question is “how do we solve this specific user problem?” rather than “how do we match competitor X’s capabilities?”, decisions align with value creation.

Diligence, the fourth “D” of the AI Fluency Framework, encompasses taking responsibility for AI collaborations across critical dimensions including creation diligence (being thoughtful about which AI systems to use), transparency diligence (honest disclosure of AI’s role), and deployment diligence (verifying outputs before sharing). This competency inherently prioritizes responsible, user-focused AI development over competitive one-upmanship.

Ethical AI practices provide guardrails against envy. When organizations commit to explainability, fairness, privacy protection, and accountability, they establish principles that supersede competitive pressure. The EU AI Act’s mandatory AI literacy requirements explicitly emphasize responsible AI deployment and awareness of potential harm, legislating ethical considerations into organizational DNA.

Leading organizations balance competitive awareness with user focus. They monitor competitor developments to identify emerging opportunities and threats, but they filter those insights through the lens of user value. BCG’s research shows that AI leaders focus two-thirds of their effort and resources on people-related capabilities, recognizing that technology excellence without user-centered design yields hollow victories.

Moderna’s quantum computing partnership demonstrates proper prioritization. Rather than pursuing quantum capabilities to match other pharmaceutical companies, they identified mRNA structure prediction as their specific bottleneck and evaluated whether quantum-enhanced AI could address it better than alternatives. The decision criterion was therapeutic impact, not competitive positioning.

Sin #7: Sloth

Lack of Investment in AI Literacy and Deep Tech Skills Development

The final sin, sloth, represents organizational complacency regarding skills development, manifesting when companies expect employees to master AI tools and deep tech concepts without providing training, resources, or time for learning. This deadly sin appears in organizations that deploy AI platforms while offering fewer than five hours of training, or that hire deep tech talent without investing in continuous learning to keep pace with rapidly evolving fields.

The consequences prove catastrophic: 52% of workers don’t know how to use AI effectively, and the average employee now experiences 10 planned enterprise changes annually (up from just two in 2016), creating change fatigue that undermines even well-designed AI initiatives.

When organizations fail to build AI fluency, employees respond with fear, resistance, and workarounds, creating the “shadow AI” economy where over 90% of companies see employees using personal AI tools because official solutions fail to meet their needs.

Deep tech sectors face compounded challenges. The European Deep Tech Talent Initiative aims to skill, upskill, and reskill 1 million people by the end of 2025, acknowledging the severe shortage of workers with quantum computing, biotechnology, and advanced materials expertise. Machine learning job postings doubled from 7% to 14% in 2025, while demand far outpaces supply. Organizations that neglect skills development cannot staff their deep tech initiatives, regardless of available capital.

Solution: Comprehensive AI Fluency Programs and Continuous Upskilling

Overcoming sloth demands systematic investment in learning and development. Scenario-based learning proves most effective: it moves beyond theoretical instruction to embed real-world tasks and continuous feedback into fluency programs (see Anthropic’s AI Fluency Framework).

Organizations must tailor training to different roles and skill levels.

Other AI Fluency Programs

Zapier’s AI fluency model demonstrates one approach: making AI fluency mandatory for all new hires with assessment-driven evaluation, but applying tiered difficulty where entry-level roles require basic tool proficiency while senior roles face tests on workflow integration and strategic thinking. This ensures universal baseline competence while developing advanced capabilities where they create most value.

The Financial Times’ progression framework offers another model: competency mapping that tracks employees’ journey from “AI Beginner” to “AI Fluent” across Tools, Productivity & Innovation, Critical Thinking, and Ethics domains. Clear progression pathways motivate learning by showing employees how skill development connects to career advancement and organizational impact.

Launch Consulting’s custom AI Ready program addressed a common challenge: existing technical AI training proved too intensive for business consultants, while generic training lacked relevant use cases. Their solution combined AI fundamentals, impact education, practical use cases and applications, strategic communications, and a Change Champions network to drive grassroots adoption.

The result: organizational AI fluency that delivered 10-15% productivity gains and competitive advantages in client engagements.

The New Normal

Continuous learning must become the organizational norm. IBM’s Chief Impact Officer predicts that lifelong learning in AI and technical subjects will become the “new normal,” as the immediate need for AI skills will soon expand to quantum computing, with enduring demand for cybersecurity expertise. Organizations must build learning cultures where skill development receives dedicated time, resources, and leadership support rather than being treated as optional or pursued only outside work hours.

Parts 2 & 3 Next

Understanding the seven deadly sins of deep tech and AI fluency is just the first step toward transformation. In Part 2 of this series, we’ll dive deeper into the unique challenges facing each deep tech sector, from quantum computing and biotech to robotics and clean energy, and uncover what it takes to navigate their long development cycles, capital intensity, and innovation bottlenecks.

Then, in Part 3, we’ll explore how organizations can build effective AI fluency and efficiency models using frameworks like the 4Ds, with actionable strategies for upskilling teams, fostering collaboration, and turning technical expertise into real-world impact.