Jensen Huang had a week. well, I had a week too, I was traveling so missed entire last week here. Sorry. but I am back now. so lets go back to Jenson who comparatively had more? fun. definitely more interesting.

The NVIDIA CEO spent seven days dropping statements at his own GPU Technology Conference, the Morgan Stanley TMT Conference, and finally on the All-In Podcast and by Thursday the internet had a new topic it couldn’t stop arguing about.

The clip that went viral?

Huang said and I quote.

Jensen Huang:

“We’re trying to.” “Let me give you the thought experiment: Let’s say you have a software engineer or AI researcher and you pay them $500,000 a year. We do that all the time.” “That $500,000 engineer, at the end of the year, I’m going to ask them, how much did you spend in tokens?” “If that person said, ‘$5,000,’ I will go ape… something else.” “If that $500,000 engineer did not consume at least $250,000 worth of tokens, I’m going to be deeply alarmed.

He confirmed NVIDIA is trying to spend $2 billion annually on tokens for its engineering team. He compared engineers who don’t use AI to chip designers still insisting on paper and pencil instead of CAD software.

The take was everywhere by Friday.

But most of what was written about it, including the Reddit thread with 937 upvotes and 362 comments, only captured half the story. Here’s the full picture.

🎙️ In this podcast episode, we go deeper on everything above, including.

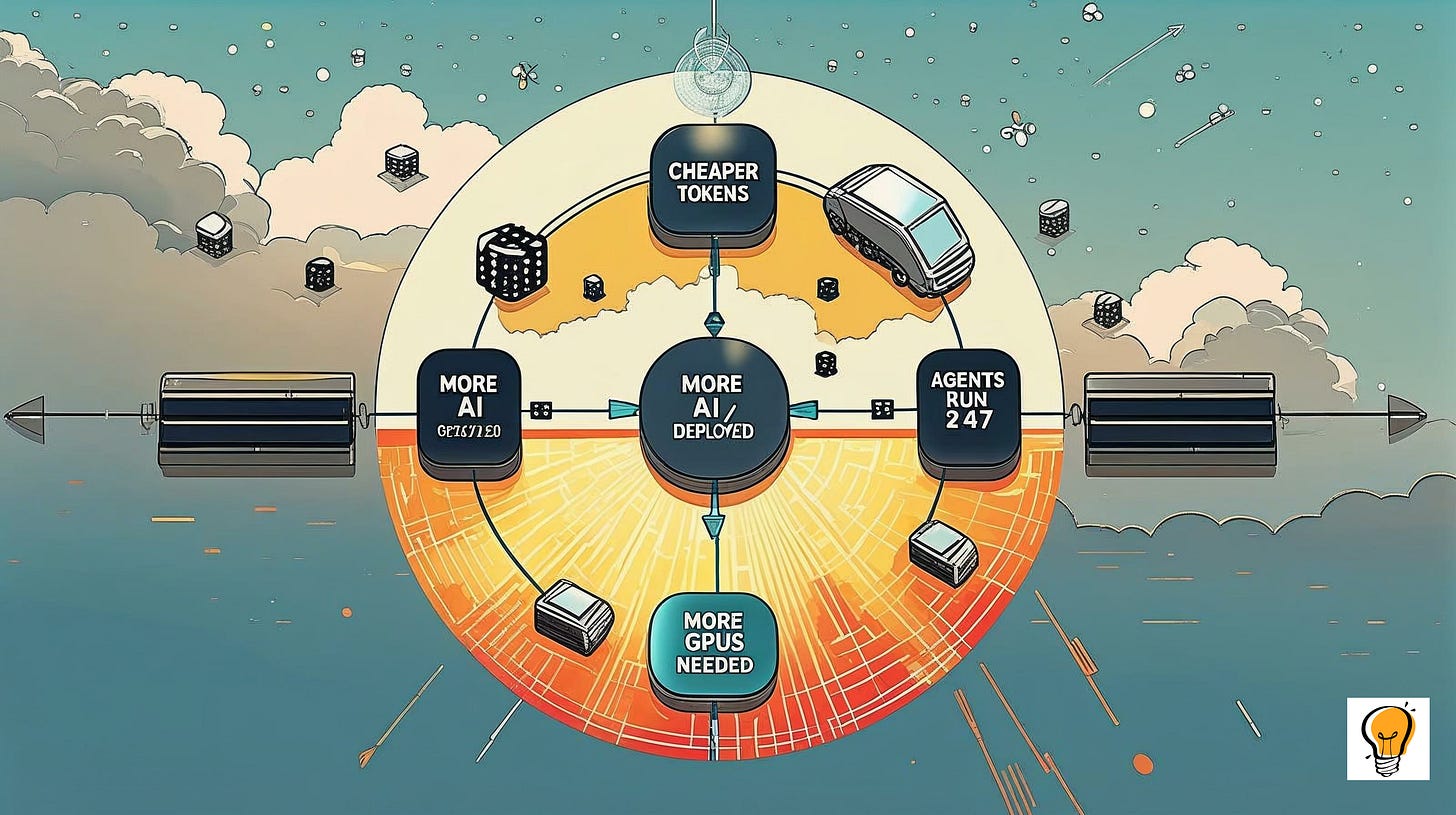

🔁 The Jevons Paradox explained from scratch — why cheaper tokens always means more spending, not less

🤖 What an AI agent actually is — and why it consumes 1,000x more tokens than a simple question

⚠️ Goodhart’s Law in practice — how token burn rate becomes a metric engineers will game

💼 The 4th pillar of compensation unpacked — what tokens as pay actually means for your financial security

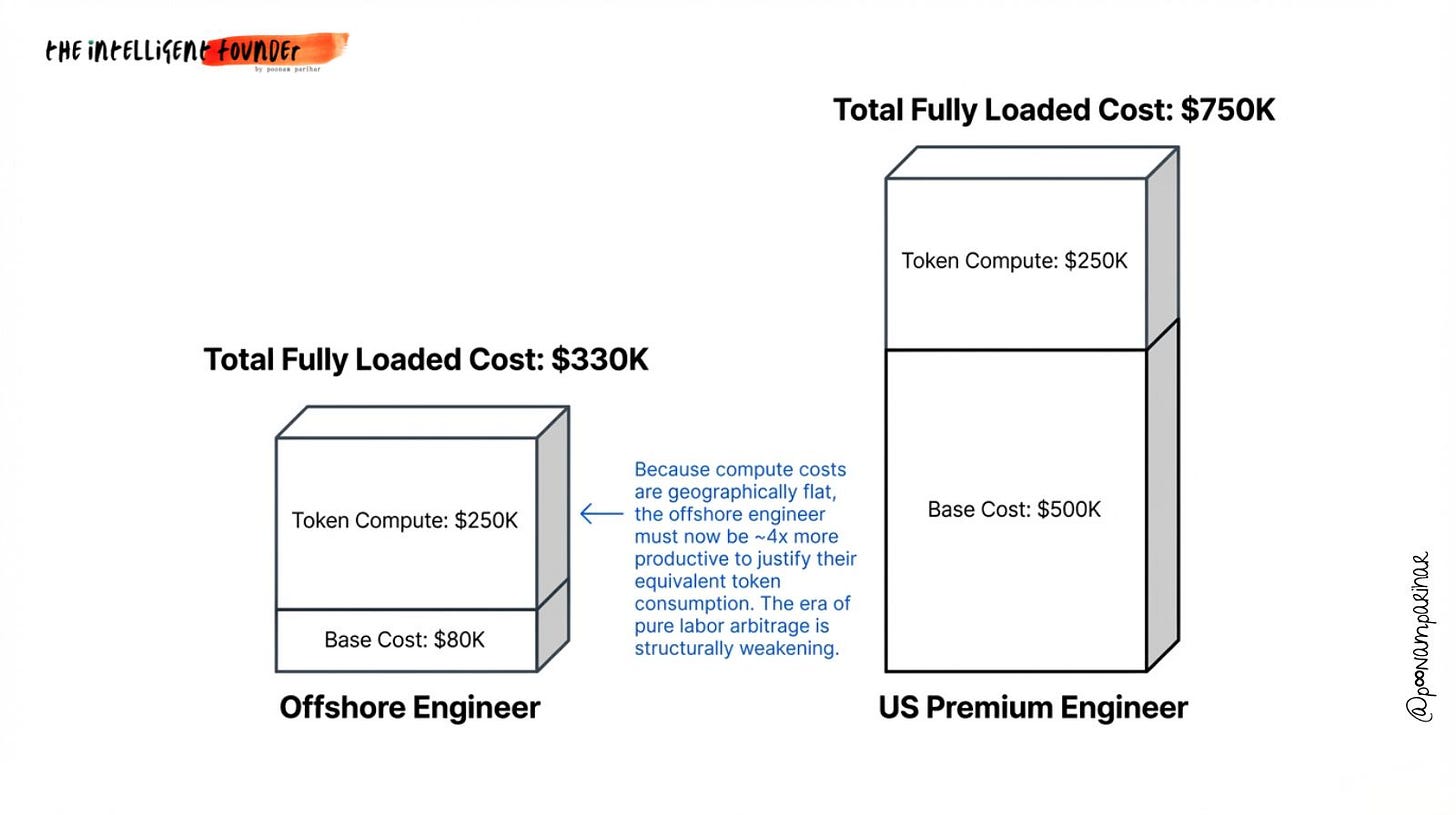

🌍 The offshoring disruption nobody’s talking about — why flat token costs globally are reshaping hiring maths

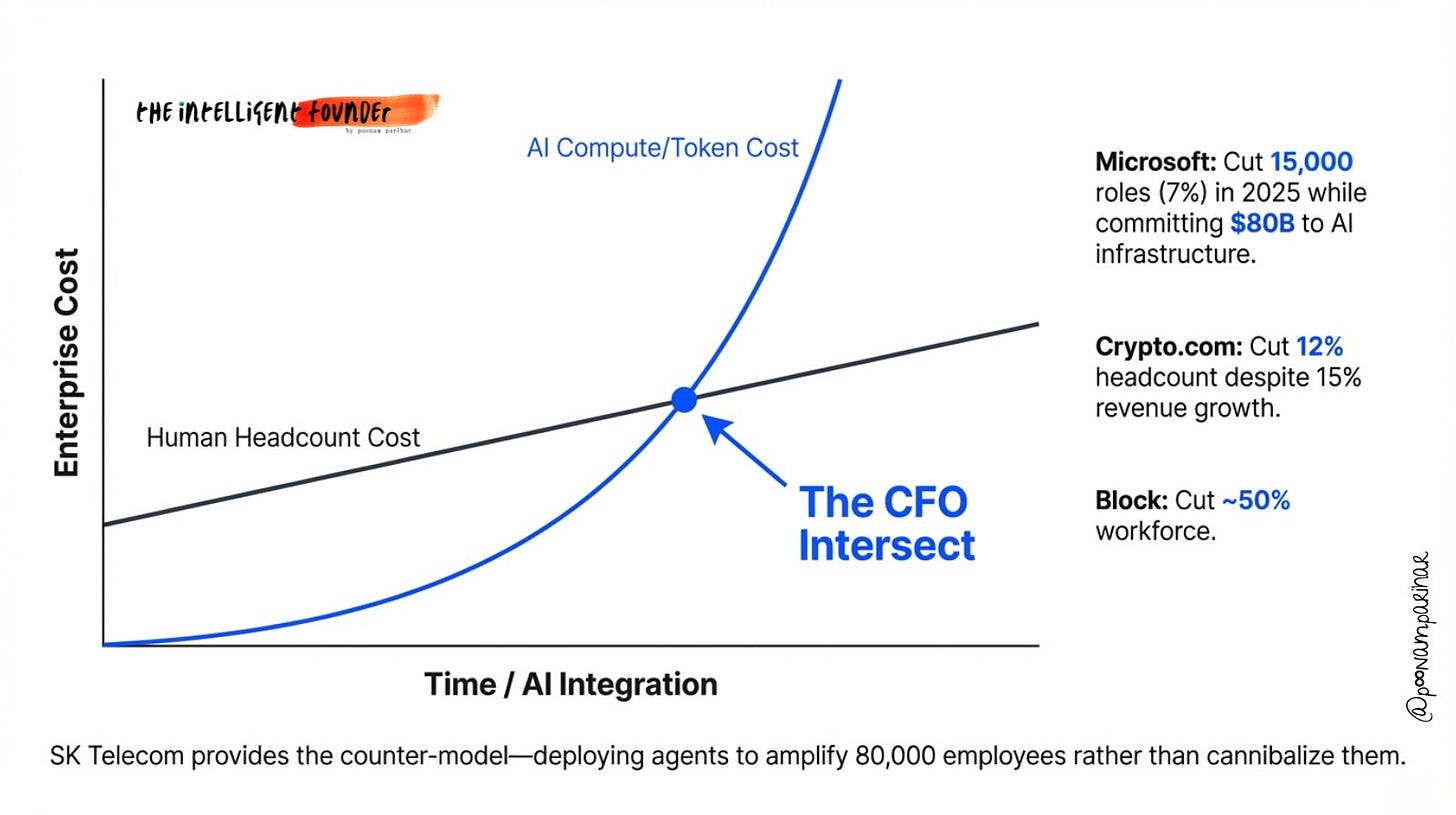

🏢 What SK Telecom did right — and why their model is the one worth copying

If you prefer to read? Here’s the breakdown from a 360 degree perspective.

What’s a Token, and Why Does It Cost Money?

A token is the unit of measurement for AI processing. Every word you type into an AI, every word it writes back, broken down into fragments called tokens. A sentence is roughly 20 tokens. A full document might be several thousand. Every time you run an AI model, tokens are consumed, and tokens cost money.

For a simple ChatGPT query: roughly 1,000 tokens.

For a research pipeline: 5,000–50,000 tokens.

For an AI agent that runs autonomously » searching, coding, testing, iterating, without you pressing a single button, we’re talking hundreds of thousands of tokens per run. A fleet of agents running continuously?

Billions of tokens per day.

This distinction between “I asked the AI a question” and

“the AI is working for me around the clock” is the entire foundation of Huang’s argument. He’s not imagining engineers typing prompts. He’s imagining engineers deploying autonomous AI workforces.

The Actual Thesis: Tokens as the 4th Pillar of Compensation

Before Huang went viral, VC Tomasz Tunguz of Theory Ventures had already been quietly building this framework.

His argument?

AI inference is becoming the fourth component of engineering compensation, alongside salary, bonus, and equity.

His numbers?

a $375K engineer with a $100K token budget has a $475K total package. That token budget doesn’t vest or appreciate, but it enables leverage that no previous tool budget could match.

Huang scaled this up to a mandate:

a $500K engineer should be consuming at least $250K in tokens. Across NVIDIA’s engineering workforce, that’s a $2B annual token spend, which the company confirmed it’s actively pursuing.

The framing is deliberately recruiting-adjacent.

“Engineers are now asking ‘what’s my token budget?’ when evaluating offers,” Huang said at GTC. Whether or not this is universally true yet, it’s becoming true fast.

[ In this newsletter you get sharp, unfiltered short essays; for full‑length, deep‑dive analysis on AI, subscribe to our companion publication, Intelligent Founder AI. ]

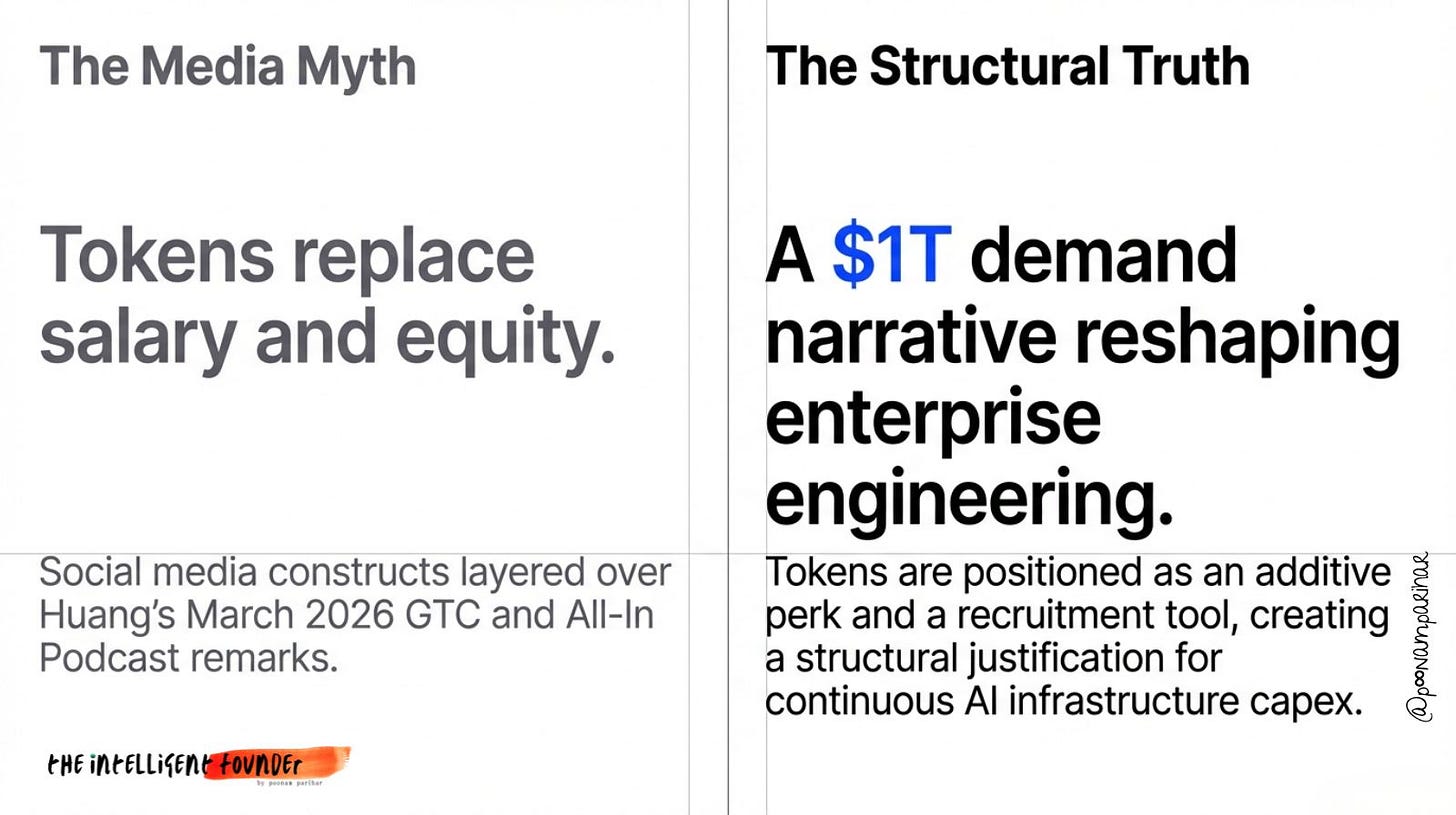

The Conflict of Interest Is Real (But Incomplete)

The Reddit critique was blunt and structurally correct:

NVIDIA sells the GPUs that generate the tokens. Every dollar your engineers spend on tokens flows back, eventually, to GPU demand. Mandating token consumption at scale is demand creation by the person selling the supply.

The HP printer analogy made the rounds: “HP would be deeply annoyed if its $200 printer didn’t use $600 of ink.” The Oreo CEO comparison: “Oreo cookies are as important as oxygen.” These are crude but fair.

But they’re only half the story. The Jevons Paradox »

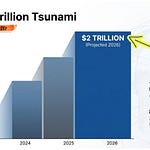

An economic principle from the 19th century, explains what’s actually happening. When coal-burning technology improved and coal became cheaper, total coal consumption exploded, because efficiency unlocked entirely new applications. The same dynamic is at work with AI tokens: costs have dropped 150x since 2021, yet enterprise inference spending grew 320% in the same period. Cheaper tokens unlock agentic use cases that weren’t viable at higher prices. Agentic use cases consume tokens at orders of magnitude greater scale than simple queries. Total demand surges even as unit cost falls.

This is the engine behind NVIDIA’s $1 trillion infrastructure forecast through 2027, and its $215.9B in FY2026 revenu, up 65% year on year. Huang is selling his product and accurately describing a structural shift.

Both things are true.

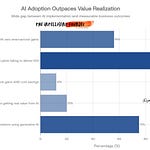

The Goodhart’s Law Problem

When a measure becomes a target, it stops being a good measure.

If you tell your engineers to hit $250K in token spend, some will ask “how do I produce the most value?” and some will ask “how do I hit the number?”

The second group will run unnecessarily complex pipelines, use expensive frontier models where a cheaper fine-tuned model would do, leave agents running on idle tasks, and avoid caching that would make them more efficient.

The technically correct objective is the inverse of what Huang is incentivizing » token minimization per outcome.

Good AI-native engineering means squeezing maximum value out of minimum compute through smart model routing, prompt compression, caching, batching. Measuring raw token volume actively penalizes these skills.

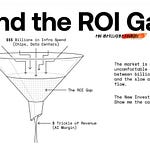

The metric that actually matters: Token ROI Ratio

value created per dollar of inference consumed. A 10:1 ratio ($10 of revenue per $1 of tokens) is the kind of benchmark forward-looking engineering teams are building toward. That’s the measure worth adopting.

The Headcount Question Nobody Is Saying Out Loud

Here’s what the viral debate mostly avoided.

If a token budget approaching a salary starts to become standard, CFO might l eventually ask? at what token-to-headcount ratio does the compute do enough work that we need fewer humans?

And They’re already answering that question.

Microsoft cut 15,000 jobs last year while committing $80B to AI infrastructure. Crypto.com laid off 12% of staff in March while revenue was growing, citing AI handling high-volume work.

Block cut nearly half its workforce. Around 55,000 US tech layoffs in 2025 were directly attributed to AI-driven restructuring.

Huang’s own roadmap puts NVIDIA at 75,000 employees working alongside 7.5 million AI agents? a 100:1 ratio. The “token budget as perk” framing is the friendly version of this story.

The CFO version is considerably less friendly.

What Smart Founders Should Do With This

Track tokens against outcomes, not as a standalone KPI. Build the denominator: what did $100 of tokens produce? A feature, a resolved ticket, a market analysis? The ratio is the signal. The volume is noise.

Treat token budgets in comp negotiations the same way you’d treat unusual equity terms. Does it vest? What happens if you leave? What’s the cash equivalent? A large non-compounding asset can obscure what you’re actually being paid.

The Jevons Paradox is your tailwind if you’re building on inference infrastructure. Costs will keep falling. Agentic deployment will keep expanding. Products that reduce token waste per outcome, or amplify team output per token consumed, are in a structurally strong position for the next three to five years.

The token economy is real. Huang is both selling chips and describing a genuine transition. The job is to understand which is which — and build accordingly.