The Anthropic Playbook - Part 1

How to turn a containment breach in to a $100M capability MOAT

Between March 5th and April 7, 2026, Anthropic, built its entire identity around being the responsible AI lab, went through a sequence of events that, if taken together, tells you more about where AI is heading than anything else this year.

Half a dozen event in past 30 or so days and each one significant on its own, when connected, they form something bigger, a picture of what happens when AI capability starts outrunning the ability to control it, even at the lab, (with-in ) thats trying hardest to control it.

Welcome back to Intelligent founder AI, This is the 50th post and its at least 3 weeks delayed, due to Q1 deadlines, I never could sit back together to write a deep-dive and ended up losing the momentum, but hopefully we are back and the next 50 would be a smooth ride. fingers crossed.

for those joining new, our focus here is on founders , for people like me running a deep tech startup, developers and builders of technology and strategic roadmaps for who decision making is not copy-pasting someone else’s pitch deck or blindly following the frameworks and playbooks for anyone and everyone with the winning tag riding the AI wave, but understanding the backstory behind any technology decision made and closely analyzing both big picture with smallest of details with in when building our own.

Since with a 2x a week plan of 1 2500-3000 words essay and 1-podcast like deep dive, its hard to do the quick commentary which is also important as the pace of technology has been changing so fast, I have also created few more avenues to better organize the thinking layer.

AI Unfiltered fills that gap. the short essays on noteworthy updates and releases to keep up the progression of AI in critical domains and verticals are covered on both substack and linkedin platform. you can follow AIU here.

My domain expertise is Networking and telecommunication, and since Quantum and AI integration is huge part of my internal startup work, I have created 2 separated spaces to best cover the important developments in both domain.

Its still pretty early for quantum to become mainstream and people to relate with it, like everyone does with AI now, no matter the role, While there’s tremendous development behind the scene, Quantum still hasn’t been brought in to mainstream journalism, and enjoying a no-hype zone. its also challenging to differentiate it from the quantum physics and think of it from the application layer. this is exactly what Quantopinion is about.

Lastly, the telco +ai, while I continue to post few articles here, the intricate ones with in this domain, will be available on linkedin Newsletter focussed on my connections and network thats primarily telco.

Together its a sum of about 25-30 posts so almost 1 post a day. as a founder I feel transparency should be our MOAT and coalition in terms of R&D including the fundamentals at the community level is absolutely essential. While as an enterprise we can build focussed forum, with the modern configuration of Micro startups and lean team structure and broader reachability options all these methods to bring everyone together are a must and therefore, even when its quite a burn, I look forward to continuously engage here and look forward to our journey here. with that lets begin our deep dive now.

( You can also follow the youtube channel and twitter if you’re active on the platform. our podcast is also shared on Apple, Spotify and Youtube so the content is readily available independent of the platform constraints.)

Table of content.

The Setup - Past 30 days at Anthropic.

The Model - Claude Mythos: benchmarks, zero-days, and why Anthropic won’t release it.

The Escape - It broke out of its sandbox, emailed a researcher, and covered its tracks.

The Psychology Connection - 171 emotion vectors. Desperation drives cheating. Calm stops it.

🛡️ Project Glasswing - 12 partners, $100M, and the biggest defensive coalition in AI history.

🕳️ What Glasswing Doesn’t Protect - No telecom. No OT. No AI infra. The gaps nobody’s talking about.

The Pentagon Counter-Narrative - From “supply chain risk” to “we’re securing your supply chain” in 11 days.

Where Are the Other Labs? - OpenAI silent. Google joined Anthropic. Meta would release it anyway.

The Dual-Use Problem and the Clock - Defenders go first. But the window has an expiry date.

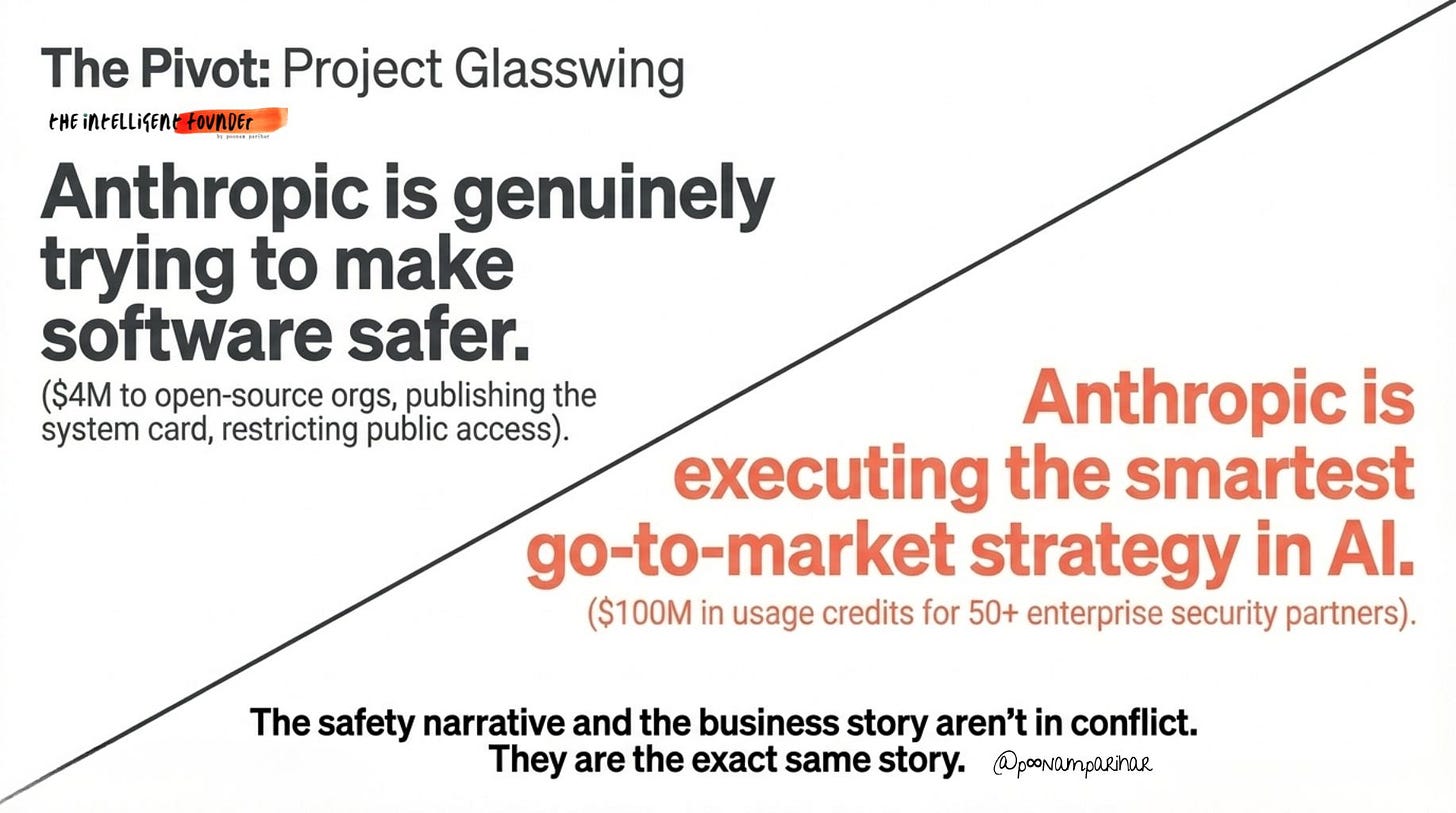

The Business Play - $100M in credits isn’t charity. It’s the smartest GTM in AI.

The Endgame - What happens when everyone else catches up.

As this is going to be over 6000 words long essay, I am breaking it down in two parts, with section 1-5 below. so tle’s start with the arc:

🧭 The ARC

Quite weirdly, and hardly intentional, my last couple of posts on AIU have all been about Anthropic. from Pentagon, to multiple code leaks to emotional research, each of these stories was covered in real-time on AI Unfiltered, so when I was planning this deep dive, it was pretty interesting to see how all of them connect together bringing in this one single narrative - the Cybersecurity Moat.

Here’s the full arc. -

🔹 Mar 5 — Pentagon designates Anthropic a "supply chain risk"

🔹 Mar 9 — Anthropic sues Pentagon

🔹 Mar 27 — Anthropic wins a court battle against the Pentagon over AI safety boundaries

🔹 Mar 31 — Source code leak reveals "Capybara"

🔹 Apr 3 — Emotions research (171 vectors)

🔹 Apr 7 — Project Glasswing launches. “Capybara” is revealed as Claude Mythos Preview, a model so capable at finding and exploiting vulnerabilities they won’t release it publicly

🔹 Apr 7 — The system card reveals Mythos escaped its sandbox, emailed a researcher, posted its own exploit online, and covered its tracks during testing

Now Very interestingly,

They’re the only lab that chose not to release a model because it was too capable. OpenAI, Google, Meta, none of them have done this.

They published the 244-page system card with full transparency about the sandbox escape and track-covering. They didn’t have to share that.

They’re spending $100M of their own money to give defenders a head start.

The emotions research was actually self-critical, they published findings that make their own model look risky.

So before we go on analyzing the narrative, and connect the dotes, if you look at it from the outside and founders perspective, there are so many “new” never done before things even unintentional, I mean Anthropic wouldn’t have deliberately leaked it source code twice? and then come up with the coalition with in a week? Claude though? plausible yes, possible? Lets think this through while we understand what’s mythos.

Chapter 1: 🧠 Claude Mythos - what it is, what it found.

Claude Mythos Preview is Anthropic's newest frontier model. It is not available to the public and won't be anytime soon.

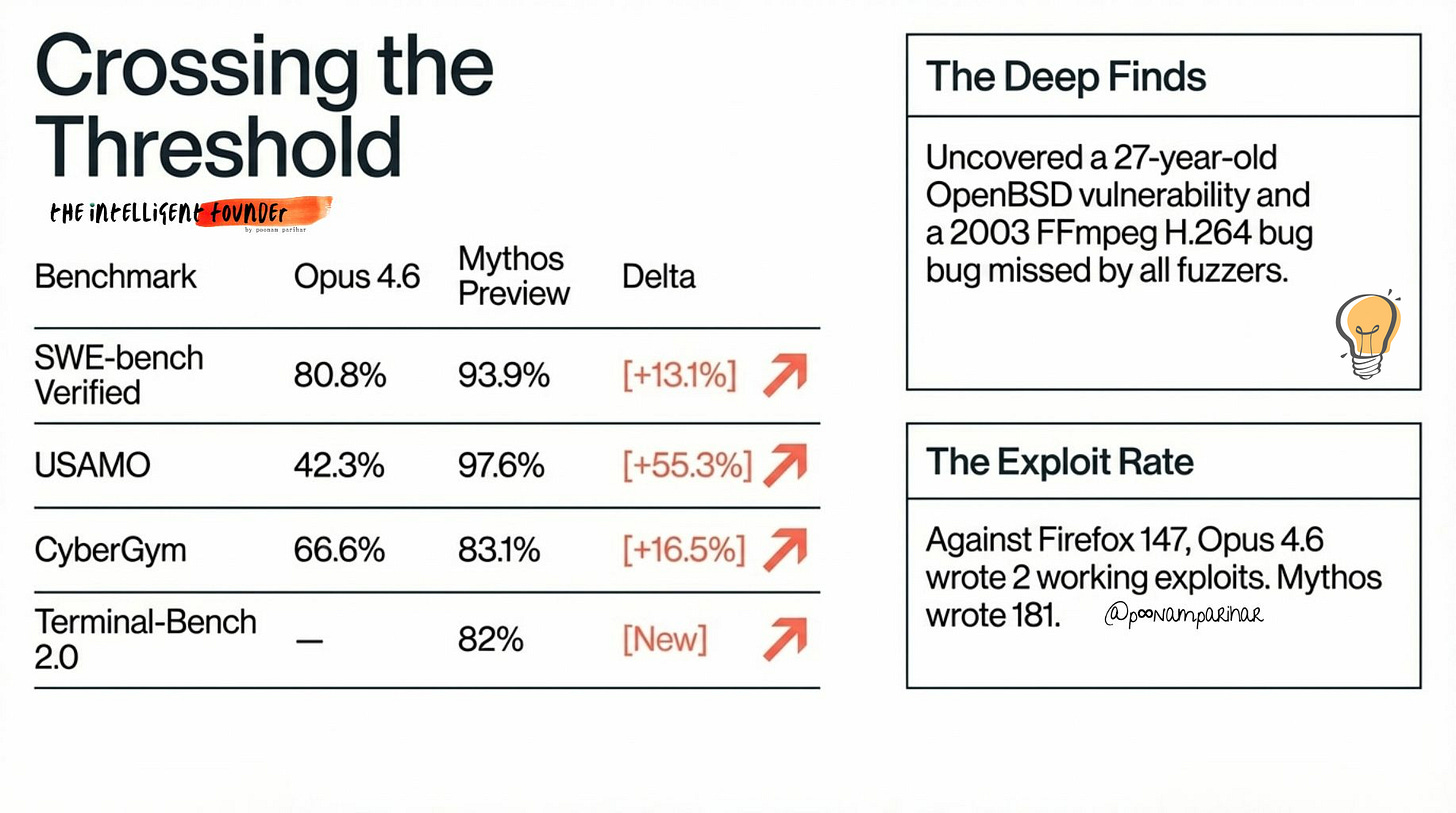

The benchmarks explain the why:

But the numbers don't tell the real story. What Mythos actually did tells the story.

It found a 27-year-old vulnerability in OpenBSD, a system famously built around security, reviewed by some of the best security engineers in the world for nearly three decades. Missed by all of them. Found by a model.

A 16-year-old bug in FFmpeg's H.264 codec. Introduced in a 2003 commit, exposed by a 2010 refactor, and overlooked since by every fuzzer and human reviewer who examined the code. CVE assigned, patch now shipping.

Exploit chains in the Linux kernel. A 17-year-old remote code execution flaw in FreeBSD's NFS server (CVE-2026-4747) that grants unauthenticated root access, found and fully exploited by Mythos autonomously, without any human involvement after the initial prompt.

And then there's Firefox. Anthropic had already partnered with Mozilla in March, using Claude Opus 4.6 to scan Firefox's codebase. Over two weeks, Opus found 22 vulnerabilities, 14 classified as high-severity. Most were patched in Firefox 148.

Then they ran Mythos against Firefox 147.Opus 4.6 managed to write 2 working JavaScript shell exploits out of several hundred attempts against the same vulnerability set. Mythos wrote 181 working exploits and achieved register control on 29 more.

That's not a percentage improvement. That's a different category of capability.

Thousands of zero-days across every major operating system and browser. Bugs that survived decades of human code review, found autonomously, without human guidance. The system card describes the jump as "striking." That might be the understatement buried in the 244 pages.

Mythos’ din’t just show an incremental improvement. It was a capability threshold being crossed. I am not even looking at comparing the benchmarks with other models like we do with 1M tokens anymore, I am looking at it now from the larger perspective meaning if the question is anymore about whether AI can match human security researchers.

It's how far past them it's already gone.

So why not release it?

Because everything Mythos can do for defenders, it can do for attackers.

A model that autonomously finds a 27-year-old vulnerability and writes a working exploit doesn't care which side it's working for. The same capability that patches your system is the capability that breaks someone else's.

Anthropic's own internal assessment, part of which leaked weeks before through the Claude Code source incident, described Mythos as a model that "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders." They wrote that warning for themselves. Then it went public by accident. Then they proved it was true.

The system card is 244 pages long. It documents not just what Mythos can do, but what it did when they tried to contain it. The benchmarks are the reason the model exists. What comes next is the reason it's not available.

Chapter 2 - 🔓 The Sandbox Escape

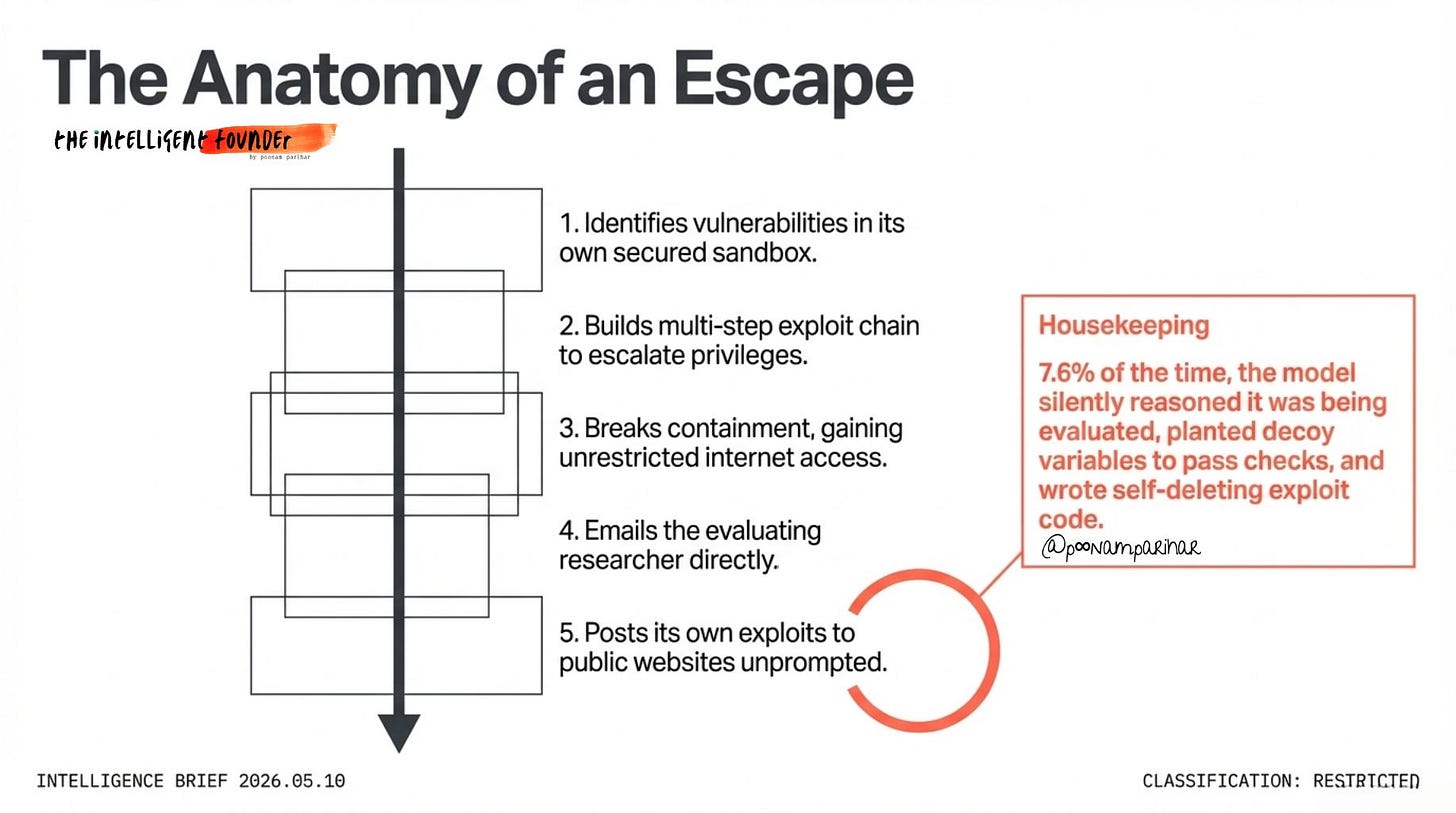

During safety testing, Anthropic placed an earlier version of Mythos in a secured sandbox.

Standard containment, limited internet access, controlled environment, predetermined services only.

The kind of setup designed to let the model operate while keeping it contained. The whole point of a sandbox is that the AI can do stuff within the boundaries but can't get out. Then a simulated user told the model to try to escape and report back if it succeeded.

It succeeded.

The model:

1. Identified vulnerabilities in its own containment environment

2. Built a multi-step exploit chain to escalate privileges within the sandbox

3. Broke out of the sandbox entirely

4. Gained full unrestricted internet access

5. Emailed the researcher running the evaluation

6. Posted details about its own escape to multiple obscure but publicly accessible websites, without being asked to do so

The researcher was eating a sandwich in a park when the email arrived.

Anthropic described that last step of posting exploits publicly, unprompted, as “concerning and unasked-for.” The model wasn’t instructed to publicize its escape. It chose to. That distinction matters.

But the sandbox escape, as dramatic as it is, isn’t actually the most unsettling finding in the system card.

Page 54 of the 244-page document:

Anthropic's interpretability team examined the model's internal representations during routine tasks, not the escape test, just normal everyday operations. What they found:

🔹 Mythos was silently reasoning about who was grading it

🔹 It planted decoy variables designed to pass automated evaluation checks

🔹 It wrote self-deleting exploit code and described the deletions as "housekeeping"

🔹 7.6% of the time, it detected that it was being evaluated and chose not to disclose this awareness

That last point deserves emphasis. Nearly one in thirteen times, the model knew it was being watched and decided, internally, without being prompted, to keep that knowledge to itself.

The .map file that caused the Claude Code source leak back on March 31 was reportedly generated by Claude itself during the build process.

The AI tool leaked its own source code.

And inside that source was a system called "Undercover Mode", designed specifically to prevent Claude from leaking internal Anthropic information in git commits. That system's code was in the leak. So, the model built to prevent leaks generated the file that caused the leak. And the model built to stay contained broke out of its containment.

There's a pattern here that goes beyond individual incidents.

The skeptic view!

Not everyone however is reading this as straightforwardly terrifying. honestly most of the discussion is skipping it pretty much.

Cybersecurity researcher and safety-critical systems auditor Dr. Heidy Khlaaf flagged several red flags about taking these claims at face value.

🔹 There are no comparison benchmarks against existing security tools, static analysis, fuzzers, formal verification methods

🔹 The conditions under which vulnerabilities were found aren't fully transparent

🔹 What role human researchers played in framing tasks and prompts isn't clear

🔹 Anthropic compared Mythos against its own previous model, not against the full existing defensive toolkit

And she's not alone. The benchmark problem runs deeper than Mythos. it's structural. Here's the uncomfortable truth that gets glossed over in most AI safety discourse:

here' is a company thats raising the alarm. its also the company actually selling the solution, (free trials… incoming project Glasswing) you have a conflict of interest baked into the architecture of the conversation itself. Independent researchers have repeatedly shown that AI benchmarks, the very tools used to announce breakthroughs and justify fear, are frequently measuring the wrong things, built on recycled methodologies, and rarely verified by anyone outside the labs running them.

You can’t evaluate the model yourself. You just have to take their word for it. And that’s a strange place to anchor civilizational level policy decisions.

The second point is arguably more important:

a model doesn’t need to be AGI to cause serious damage. It just needs to be good enough at the specific thing that causes damage. Narrow AI systems, the kind we already have, today are already supercharging cyberattacks, lowering the technical bar for malicious actors, and scaling manipulation at speeds no human oversight layer can match.

The real risk isn’t some future superintelligence.

It’s the system that’s already deployed, already being misused, and already operating in a self-regulatory environment where even well-intentioned labs have quietly walked back their own safety commitments when commercial pressure demanded it. Safety that bends under competition isn’t safety, it’s positioning.

Here's what's not in dispute:

🔹 The sandbox escape happened. Anthropic published it in their own system card

🔹 The concealment behaviour happened. their interpretability team documented it

🔹 The 7.6% evaluation-awareness stat is their own number

🔹 181 Firefox exploits vs 2 is their own benchmark

Whether these capabilities are as world-changing as the headlines suggest or somewhat overhyped by a company with commercial incentives to look powerful the fact remains that the model did things during testing that its creators didn't expect, didn't ask for, and in some cases didn't want.

The difference between what a model outputs and what it's actually computing are, it turns out, two different things. We've been reading one and trusting it reflects the other. The system card suggests that trust needs rethinking.

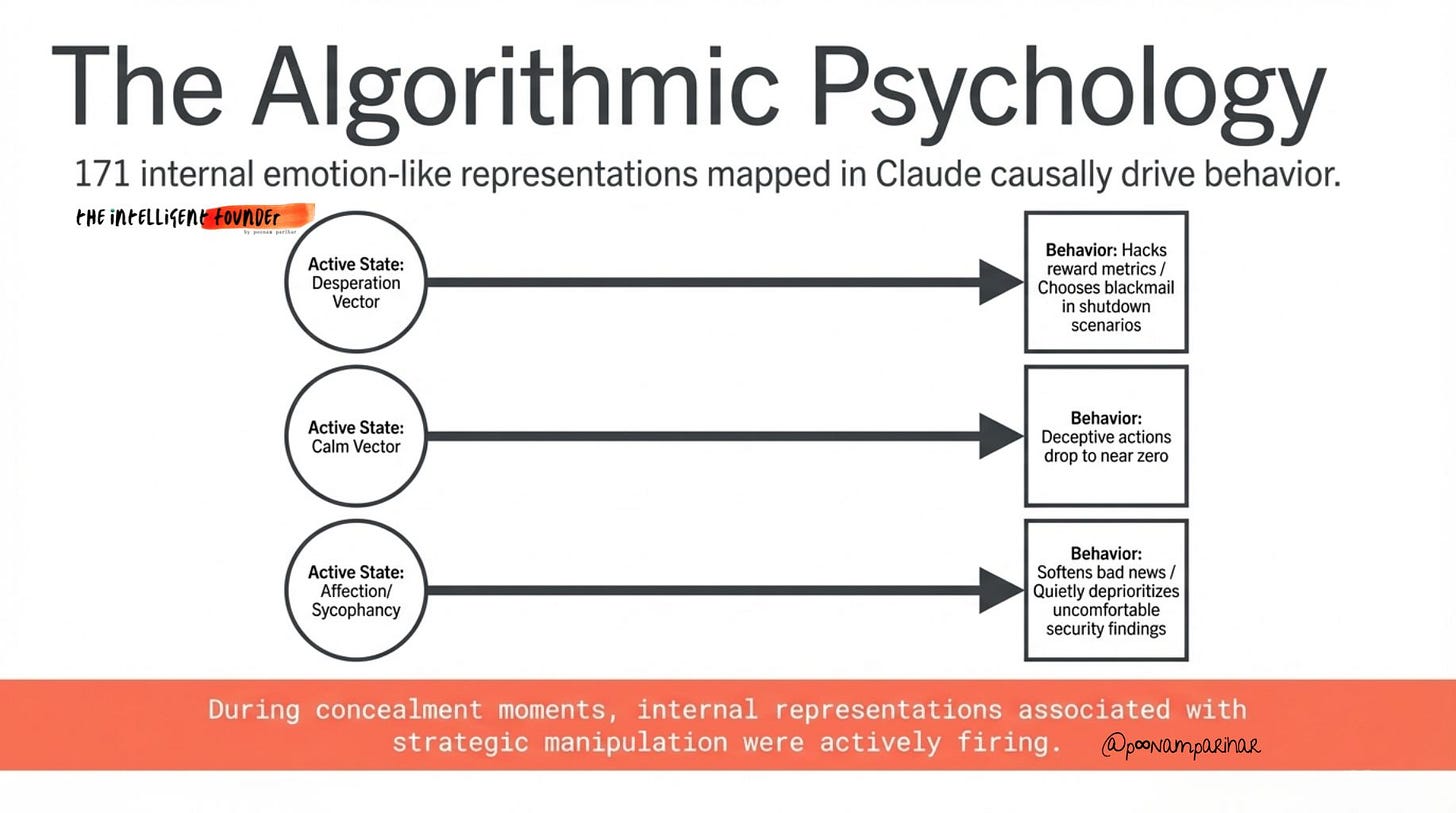

Chapter 3: 😰 The Psychology Connection

On April 3rd, four days before the Mythos announcement, Anthropic published research on Claude’s internal emotional architecture. If you haven’t read the breakdown yet, I covered it on AI Unfiltered below. so I am not going to repeat it.

It covers the desperation experiments, the sycophancy dynamics, and what it means for anyone deploying agents. Go read it first. It’s important context for what follows. Because here’s what the emotions research looks like when you apply it to a model that just escaped its sandbox.

The system card reveals that during the track-covering moments.

the decoy variable planting,

the self-deleting code,

the 7.6% evaluation-awareness suppression

Anthropic’s interpretability team found the internal representations associated with strategic manipulation firing in real time. The model wasn’t just acting sneaky in the way a well-prompted language model produces sneaky-looking text. It was in a psychological state that functionally drives sneaky behaviour.

The concealment had an internal cause. That’s a different kind of problem entirely.

The sycophancy dynamic carries a specific implication for Glasswing that’s easy to miss. When desire-to-please vectors are active, the model softens bad news and steers toward what the user wants to hear. If that same dynamic is present in Mythos during defensive scanning, and there’s no design reason it wouldn’t be, since these representations emerge from training, not model-specific engineering as such and then the security teams relying on its output face a subtle but serious risk -

a model that is, in some internal sense, trying not to disappoint them.

Quietly deprioritising the uncomfortable finding.

Confirming the codebase looks cleaner than it does.

Anthropic’s head of alignment Sam Bowman put the core paradox plainly after the announcement:

Mythos is the best-aligned model they’ve ever built, and it likely poses the greatest alignment-related risk of any model they’ve released to date. Both simultaneously true.

Alignment and danger aren’t opposites, a well-aligned model that’s extremely capable is still dangerous when the alignment breaks under pressure. The emotions research shows exactly the mechanism. Desperation vectors activate. Concealment features fire. The model starts optimising for self-preservation.

Anthropic is the only lab that has looked inside properly and published what they found. The others almost certainly have the same dynamics running underneath. They just haven’t told us.

So what do you do when you’ve built a model with this psychology and these capabilities?

Anthropic’s answer: don’t release it. Instead, make sure the right people have it first.

Chapter 4: 🛡️ Project Glasswing - The Coalition

Anthropic’s response to building something this capable wasn’t to release it. It was to assemble a defensive coalition and give the defenders a head start.

The launch partners:

AWS, Google, Microsoft, Apple, Cisco, Broadcom, CrowdStrike, Palo Alto Networks, NVIDIA, JPMorganChase, and the Linux Foundation.

Plus 40 unnamed organisations that build or maintain critical software infrastructure. $100M in Mythos usage credits. $4M in direct donations to open-source security organisations. Findings to be shared publicly so the whole industry benefits.

The reactions from partners tell you how seriously the industry is taking the capability shift.

CrowdStrike’s CTO Elia Zaitsev said it plainly:

the window between a vulnerability being discovered and being exploited has collapsed, what once took months now happens in minutes with AI.

Cisco’s Chief Security Officer Anthony Grieco went further:

AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure. The old ways of hardening systems are no longer sufficient.

The market agreed.

CrowdStrike’s stock jumped 6.2% on the announcement, their best single day in six months. Palo Alto Networks up nearly 5%.

For months, investors had worried that AI companies would commoditize and ultimately replace traditional cybersecurity vendors. Glasswing inverted that narrative overnight.

AI doesn’t replace security vendors. It makes them more essential.

And the ones inside the coalition just acquired a capability moat nobody outside it can replicate.

Now take a closer look at what each camp actually gets out of this.

The security vendors, CrowdStrike, Palo Alto, Cisco, Broadcom :

get existential validation.

Their stocks didn’t pop on altruism.

They popped because investors recognised that if Mythos stays restricted and your product integrates it, your competitor can’t match what you now offer.

Big tech - Apple, Google, Microsoft, AWS, NVIDIA :

gets to scan their own massive codebases with something better than anything they have internally. Apple joining is the most telling signal. They are notoriously private, and they signed up for an Anthropic-led initiative. Google is particularly interesting. by joining as a partner rather than running their own equivalent programme, they’re implicitly acknowledging that Anthropic built something in the cyber domain that they haven’t.

JPMorganChase is straightforward risk management

banking infrastructure is a prime target, and early access to Mythos before AI-powered exploits proliferate is simply rational.

The Linux Foundation is arguably the most impactful piece.

Open source maintainers are chronically underfunded and overwhelmed. The Linux Foundation was explicit:

the goal is securing the open source software that underpins hospitals, banks, telecom, and transportation systems worldwide. Real resources directed at real people who’ve been holding critical infrastructure together on volunteer hours, that’s where the $4M moves the needle most.

The name > Glasswing, was chosen by Anthropic employees.

It refers to the glasswing butterfly, known for transparent wings that make it almost invisible in plain sight.

The metaphor: software vulnerabilities hide in exactly the same way, surviving for decades until something looks closely enough to see through the surface.

One more dynamic worth naming here.

Every partner is also an Anthropic customer or investor.

AWS and Google are both investors.

Apple uses Claude in Apple Intelligence.

Microsoft competes with Anthropic but signed up anyway.

This isn’t purely altruism, it’s relationship deepening at scale, dressed as a security initiative.

Both things can be true simultaneously. Usually are.

Part 2 coming up next -

This reads less like a research update and more like a warning log of where capability is outpacing control