The Network Is Now the AI Computer.

Why Bandwidth, Photonics, and Security Will Decide Who Wins the AI Race!

Right now, the AI story you see online is all about “agents,” “copilots,” and “one‑person unicorns.” It looks like magic on a laptop screen. But behind every slick demo there’s something much more boring 😑 🥱 yet much more important going on: computers yelling at each other over wires.

When you train a big AI model, it doesn’t run on one fancy chip.

It runs on thousands of chips at once, spread across rows of racks in a warehouse. Those chips have to talk to each other constantly. If the “conversation” between them slows down, everything slows down.

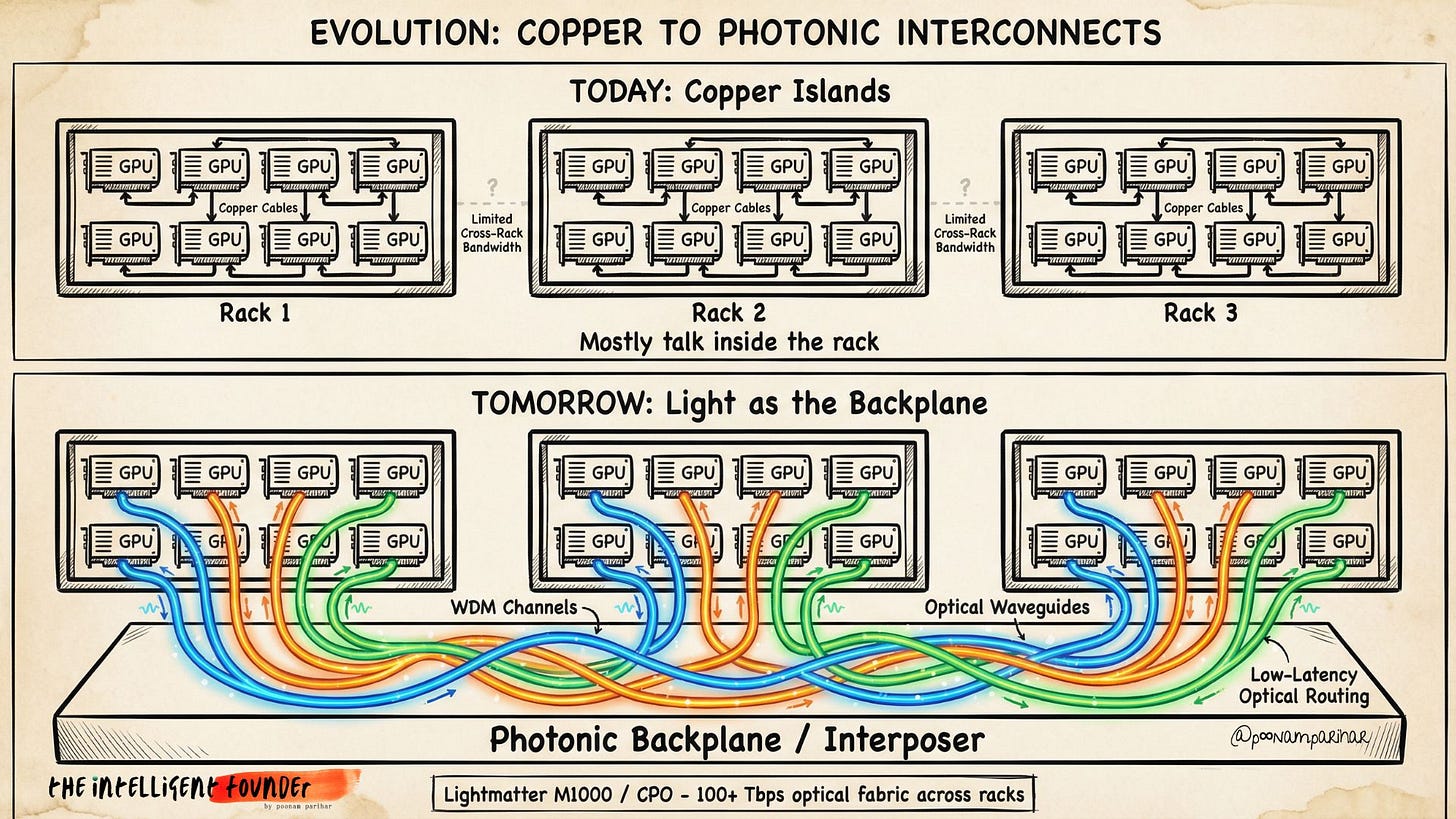

That conversation happens over cables. Today, those cables are mostly copper. Over the next few years, they’ll increasingly be glass fibers carrying light. And that change, from electricity in metal to light in glass, will quietly decide who actually wins the AI race.

Put simply: It’s no longer about who has the cleverest AI. It’s about who can move data around the fastest and cheapest.

TL;DR

AI isn’t just about smart software; it needs huge factories of computers working together.

The real choke point now is how fast we can move data between those computers.

Old copper wires are hitting physical limits: they get hot, waste power, and can’t go far at very high speeds.

Light through fiber (photonics) lets data travel faster and farther with less energy.

The winners in AI will be the ones who build better “roads and pipes” for data, not just better apps.

Table of Contents

Why the conversation between thousands of GPUs matters more than the demo

Tech giants are spending like they’re building a second power grid, not buying servers

At bleeding-edge speeds, copper cables collapse to rack distances—here’s the physics

How light replaces electricity to turn scattered racks into one giant machine 🔒

What MWC, RSA, and national AI summits reveal about infrastructure constraints 🔒

Specific moves for founders, enterprise buyers, and investors 🔒

For Founders 🔒

Four white-space opportunities in AI infrastructure tooling

For Enterprise Buyers 🔒

Questions that separate real infra from vendor fluff

For Investors 🔒

How to spot actual moats when bandwidth dominates.

Winners build better machines, not just better models.

Everyone’s Selling Agents; The Bottleneck Is the Wires.

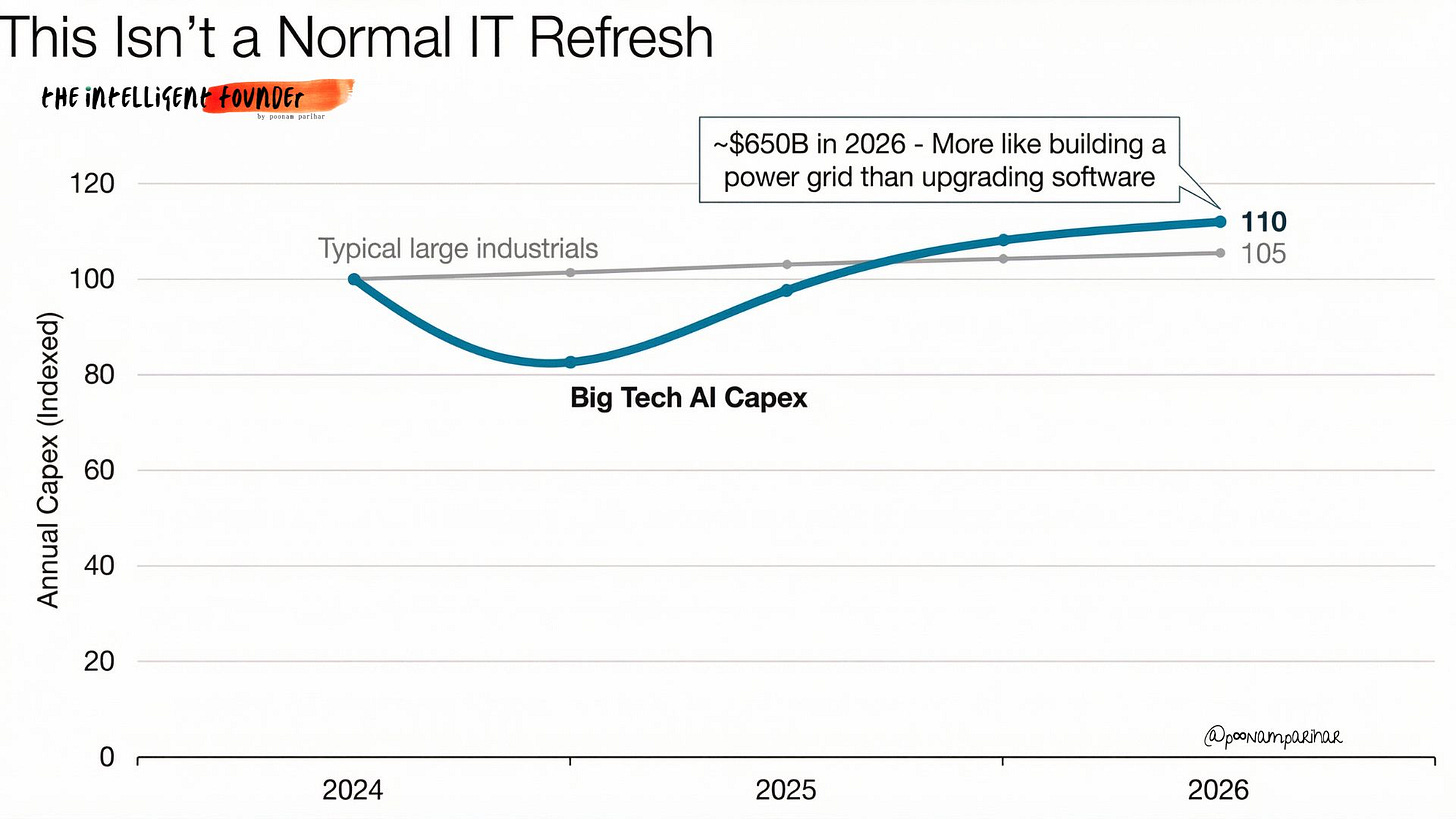

The $650B AI Grid Build-Out

Over just one year, the biggest tech companies (Alphabet, Amazon, Meta, and Microsoft) are planning to spend around $650 billion building AI infrastructure, mostly data centers, power, and networks. That’s more than many countries spend on their entire power grid in the same period.

Bridgewater cited the same magnitude in Reuters coverage, framing it as “AI investment” scale rather than a routine IT refresh. Meaning?

This isn’t “buy a few more servers” money or a normal cloud upgrade cycle. This is “build a new utility” money. The spending tells you what cycle you’re actually in.

That number matters less as a headline and more as a diagnostic:

This is utility build-out behavior, not a SaaS feature cycle.

The constraint is increasingly “can we build it?” (power, sites, cooling, networking gear), not “can we sell it?”

The bottleneck is moving from software iteration to construction, supply chain, and physics.

A helpful way to think about it:

The first internet was built so people could send messages, watch video, and browse websites.

The new AI “internet under the internet” is being built so computers can talk to other computers at extreme speed, all day, every day.

A good comparison is “building a second internet under the first one.”

The public internet is optimized for many independent flows like north-south traffic between clients and servers. AI infrastructure is increasingly dominated by east-west traffic:

accelerators synchronizing,

exchanging activations,

routing tokens (MoE),

sharding weights,

retrieving KV cache,

checkpointing, and

feeding multi-stage inference pipelines.

That east-west world needs different economics and different topology. It needs the kind of determinism and bandwidth you don’t get by accident.

So effectively we’re laying a second, invisible network inside and between data centers that is only there so AI chips can keep each other up to date. That’s what this $650B wave is really paying for.

For a founder or CTO, the non-obvious takeaway is that the enduring advantage in this cycle is not a wrapper UI. It’s throughput, latency, and reliability per dollar spent, end-to-end from GPU package, to rack, to row, to campus.

Why Copper Is Running Out of Road

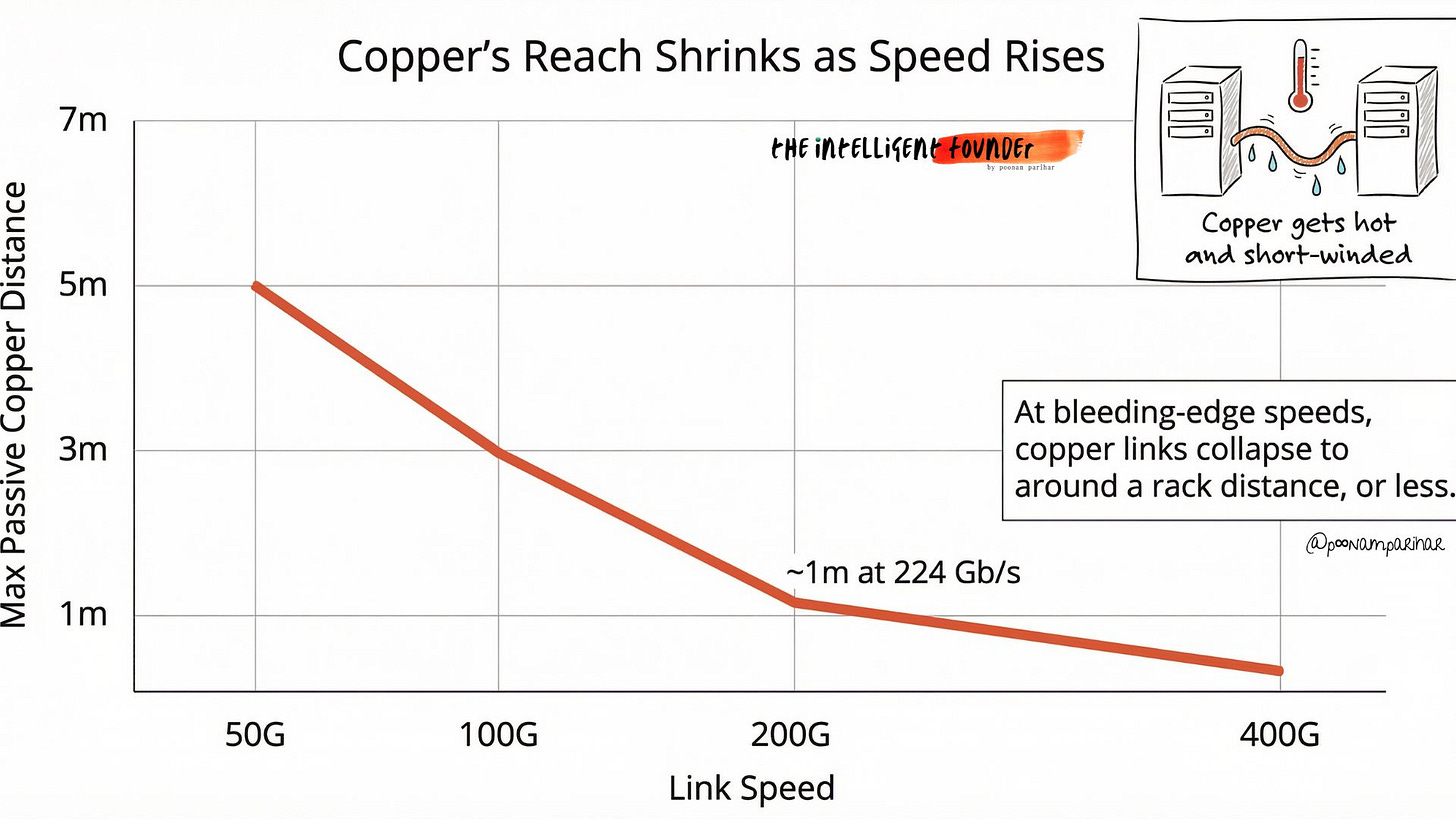

I’ll try to explain this section and the next in simple language, since many readers may not come from a deep-tech background. Most of today’s AI data centers still use copper cables for the short links between chips and racks. These are basically very expensive versions of the same metal inside your laptop charger. The long‑distance traffic across cities and under oceans already runs mostly over fiber, but inside the AI ‘factory’ a lot of those close‑range connections are still copper.

When you push data through a copper cable at extreme speeds, two things happen:

The signal dies quickly – after maybe a meter or so at cutting‑edge speeds, it becomes mush without heavy boosting.

You burn a lot of power as heat, because you’re constantly charging and discharging a metal wire at GHz rates.
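To put rough numbers on the heat problem, here is a back-of-the-envelope sketch in Python. The only physics in it is that the power a link draws equals its energy per bit times its bits per second. Every figure plugged in below (5 pJ/bit, 800 Gb/s, 1,000 links, a 100 kW rack) is an illustrative assumption, not a measurement from any real product, and the function names are made up for this example.

```python
def link_power_watts(energy_pj_per_bit: float, gbps: float) -> float:
    """Power drawn by one link: energy per bit times bits per second."""
    joules_per_bit = energy_pj_per_bit * 1e-12  # picojoules -> joules
    bits_per_second = gbps * 1e9                # gigabits/s -> bits/s
    return joules_per_bit * bits_per_second


def interconnect_share(links: int, energy_pj_per_bit: float,
                       gbps: float, rack_kw: float) -> float:
    """Fraction of a rack's power budget spent just moving bits."""
    total_watts = links * link_power_watts(energy_pj_per_bit, gbps)
    return total_watts / (rack_kw * 1000.0)


# Illustrative assumptions only: swap in real figures for your own hardware.
ASSUMED_PJ_PER_BIT = 5.0   # hypothetical energy cost of an electrical link
ASSUMED_GBPS = 800.0       # hypothetical per-link speed
ASSUMED_LINKS = 1000       # hypothetical number of links in the rack
ASSUMED_RACK_KW = 100.0    # hypothetical rack power budget

per_link = link_power_watts(ASSUMED_PJ_PER_BIT, ASSUMED_GBPS)
share = interconnect_share(ASSUMED_LINKS, ASSUMED_PJ_PER_BIT,
                           ASSUMED_GBPS, ASSUMED_RACK_KW)
print(f"per link: {per_link:.1f} W, share of rack power: {share:.1%}")
```

The point of the sketch is the shape of the relationship, not the specific numbers: double the link speed at the same energy per bit and the interconnect power doubles too, which is why energy per bit is the figure to watch.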

For AI, this is a killer. A modern AI server rack can use 3–5 times more power than a traditional rack. If too much of that power is wasted just pushing bits down hot copper, you hit a wall on both cooling and cost.

Inside the rack, copper is still everywhere because it’s cheap, familiar, and works great over a few meters. But as AI clusters grow, the real bottleneck isn’t just “moving big files”; it’s keeping thousands of GPUs in sync so none of them sits idle waiting on the slowest link.

In modern systems, a surprisingly large chunk of time is spent just on GPUs talking to each other rather than doing math, and that share keeps rising as models and clusters scale.
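A toy model makes the “talking versus doing math” split concrete. The sketch below assumes GPUs synchronize with a ring all-reduce, one common pattern in which each GPU sends and receives roughly 2 × (N − 1) / N times the payload size. It also assumes communication does not overlap with compute and ignores per-message latency, so treat it as an upper-bound illustration rather than a description of any particular training stack. All input values and function names are hypothetical.

```python
def ring_allreduce_seconds(payload_bytes: float, num_gpus: int,
                           link_gbytes_per_s: float) -> float:
    """Bandwidth-only cost of a ring all-reduce (latency ignored)."""
    if num_gpus < 2:
        return 0.0  # a single GPU has nobody to synchronize with
    traffic_bytes = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return traffic_bytes / (link_gbytes_per_s * 1e9)


def comm_fraction(compute_seconds: float, payload_bytes: float,
                  num_gpus: int, link_gbytes_per_s: float) -> float:
    """Share of one training step spent communicating, assuming no overlap."""
    comm = ring_allreduce_seconds(payload_bytes, num_gpus, link_gbytes_per_s)
    return comm / (compute_seconds + comm)


# Hypothetical inputs: 20 GB of gradients, 0.5 s of math per step, 1,024 GPUs.
for bandwidth in (50.0, 100.0, 400.0):  # assumed GB/s per GPU
    frac = comm_fraction(0.5, 20e9, 1024, bandwidth)
    print(f"{bandwidth:>5.0f} GB/s per GPU -> {frac:.0%} of the step is communication")
```

In this toy model, the communication share climbs whenever the payload grows, or the per-GPU compute time shrinks, faster than link bandwidth improves. Real systems hide some of this by overlapping communication with compute, but the slowest link still sets the floor.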

Bottom line: the physics is now biting.

Copper isn’t “dead,” but it’s running out of road as the main way to glue big AI machines together. The faster we push the links, the shorter and hotter those copper runs become, and beyond roughly a rack’s distance the wires themselves start to dictate how you design the whole system.

Photonics and the New AI Backplane

If copper is like shouting across a room, photonics is like using lasers and fiber‑optic cables to talk. Instead of pushing electricity through metal, you send light through glass.

Why this matters:

Light can carry much more data over longer distances without fading.

It uses less energy per bit, especially as the distance increases.

It doesn’t turn your cables into heaters in the same way.

In the old world, we had lots of separate servers connected by a network. In the AI world, the “computer” is the whole domain of accelerators that can swap tensors fast enough to feel like one big chip. Photonics simply makes that domain bigger.