Thursday’s deep dive took longer than expected. The agent war moved fast this week, and I’m still debating whether to approach it from the OpenClaw architecture angle or the OpenAI acquisition angle, which arguably makes Anthropic the biggest loser in recent AI history. We’ll see how that plays out soon.

The community is, as usual, divided. There’s real backlash over open-source ownership now that Steinberger is inside OpenAI, which is valid. So nothing new in open source, honestly. What’s interesting is that the backlash has increased activity in the space. Forks like ZeroClaw and PicoClaw are already gaining traction, and if nothing else, the acquisition seems to have lit a fire under independent developers to build harder and faster. Mac Minis are selling like hotcakes because Andrej Karpathy said he bought one. You can absolutely set up an OpenClaw agent for half that cost, but Macs are cool, so I say go for it.

On the enterprise and rivals front, there’s not much to report this past week beyond the usual scrambling and fumbling coverage. So instead of chasing that noise, let’s do something more useful. I’ll come back to this either in the next post or a bit later.

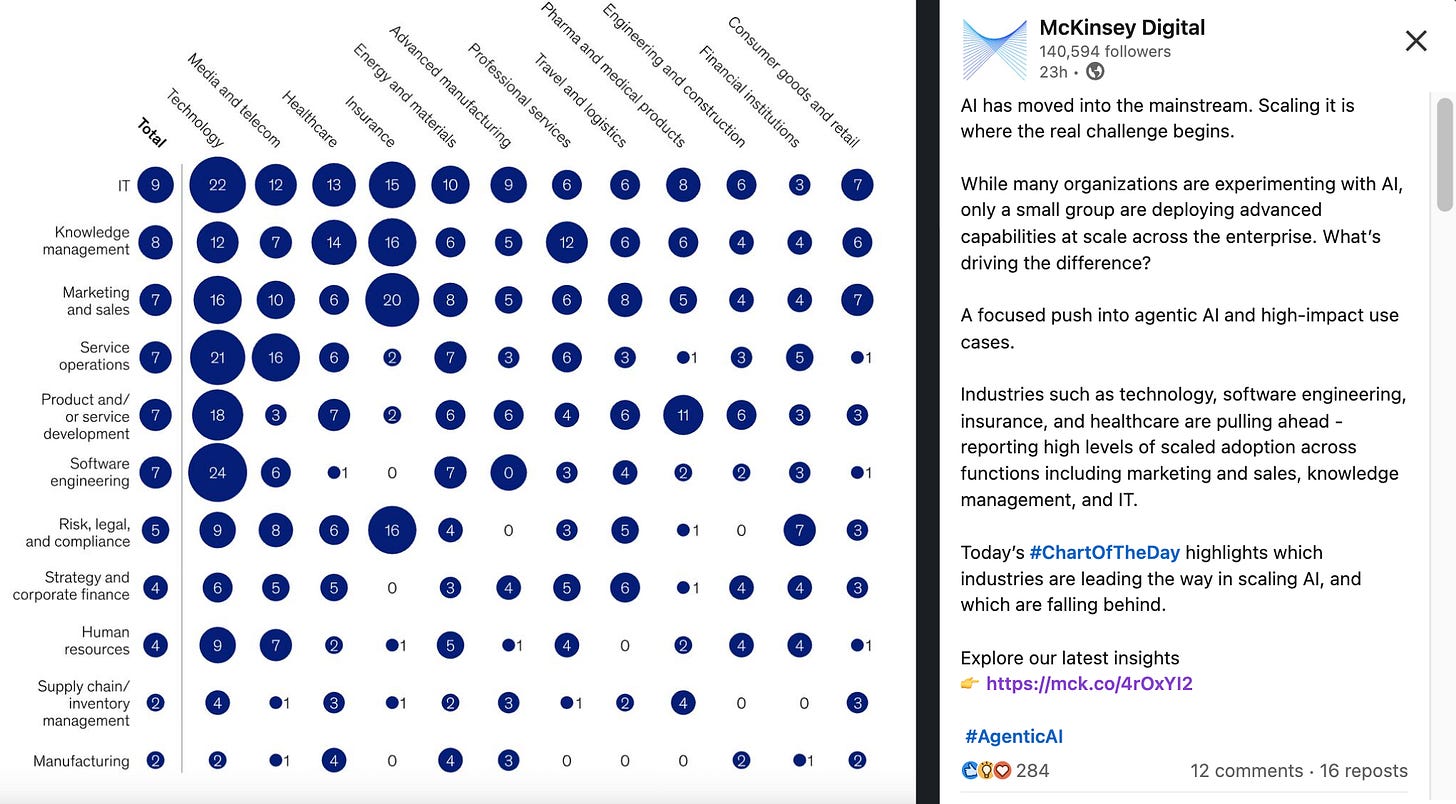

Now, I was going through my usual LinkedIn feed this morning and saw McKinsey’s latest post, with basically 3 rehashed themes from its State of AI ‘25 report:

scaling is harder than experimenting,

governance matters,

agentic AI is next

and a few sharper points, like sub-millisecond multi-agent orchestration and process context layers for agents. But again, these are known issues. So I thought, let me pull down the Q1 reports and see if anyone is actually addressing the gaps or starting new conversations. That’s exactly what we’re covering in this podcast:

📊 Reports analyzed: McKinsey State of AI ‘25 | Deloitte Enterprise AI ‘26 | Stanford AI Index ‘25 | Gartner Predicts ‘26 | NVIDIA Telecom AI ‘26 - Plus: Orgvue Workforce Survey ‘25 and TechCrunch Enterprise VC Survey ‘26

The Headline Numbers first -

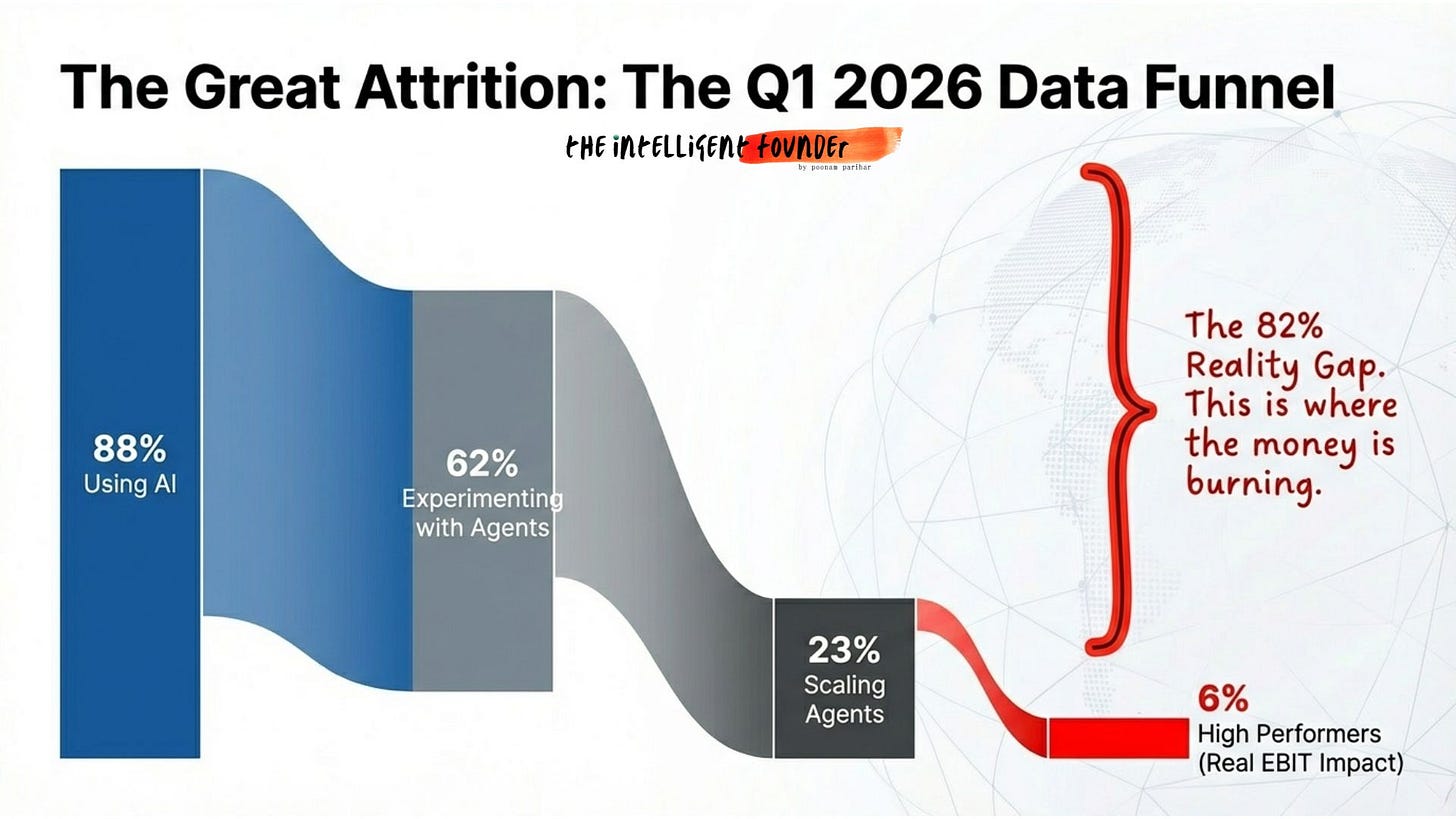

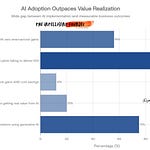

88% of organizations now use AI. 62% are experimenting with agents. But only 23% are scaling. And only 6% see real EBIT impact. That’s the funnel.

88 goes in, 6 comes out.
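To make the drop-off concrete, here is a small sketch that computes how much of each stage carries through to the next. It assumes the four headline percentages can be read as sequential stages, which is this post's framing rather than something the survey itself claims, and the stage labels are shorthand of my own.

```python
# Headline percentages quoted above (share of surveyed organizations).
# Assumption: they are treated as sequential funnel stages for illustration.
FUNNEL = [
    ("use AI", 88),
    ("experimenting with agents", 62),
    ("scaling", 23),
    ("real EBIT impact", 6),
]


def stage_conversion(funnel):
    """Return (from_stage, to_stage, percent retained) for each adjacent pair."""
    rows = []
    for (prev_name, prev_pct), (name, pct) in zip(funnel, funnel[1:]):
        rows.append((prev_name, name, round(100 * pct / prev_pct, 1)))
    return rows


def overall_conversion(funnel):
    """Percent of the first stage that reaches the last stage."""
    return round(100 * funnel[-1][1] / funnel[0][1], 1)


if __name__ == "__main__":
    for prev_name, name, kept in stage_conversion(FUNNEL):
        print(f"{prev_name} -> {name}: {kept}% carry through")
    print(f"overall: {overall_conversion(FUNNEL)}%")
```

Read this way, the steepest relative drops come after experimentation: roughly 37% of experimenters are scaling, and roughly 26% of scalers report EBIT impact.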

Deloitte surveyed 3,235 leaders and found the same wall, but from a different angle.

Only 25% have moved 40% or more of pilots into production.

74% plan agentic AI within two years. But only 21% have governance ready. And talent readiness? Just 20%.

Gartner’s counter-narrative is actually brutal

40%+ of agentic AI projects will be scrapped by end of 2027. Only about 130 of thousands of “agentic” vendors are genuine. The rest is agent washing.

Stanford confirmed the tech barrier is collapsing

Inference costs dropped 280x in two years. But the organizational barrier? That’s the one that remains.

What People Are Actually Saying?

On Reddit, practitioners called Gartner’s 40% “generous”. One commenter put it bluntly: “This would mean lower failure rate than implementing a new CRM.”

On LinkedIn, someone reframed McKinsey’s data as: “We spent $47M on AI. Nothing’s different.”

The Deloitte governance gap (74% planning agentic AI vs. 21% with governance ready) got called “a collision course, not a strategy.”

Honestly, I agree. The reports are measuring adoption when they should be measuring operational readiness. Those are two very different things.

Fair counterpoint though: one Reddit commenter noted that Gartner has its own reason for pessimism, since its business is being disrupted by AI too. Even the analysts have skin in the game. Quite right, actually.

What’s Actually Failing vs. What’s Actually Working?

So the failures have names now:

Klarna replaced 700 jobs with AI, then rehired humans after quality dropped 22%

McDonald’s killed their AI drive-through after 3 years; the system rang up 260 McNuggets and added bacon to ice cream

Air Canada was held legally liable for a chatbot that invented a fake refund policy

55% of companies that replaced workers with AI now say it was a mistake

Every failure shares one trait: AI bolted on without workflow redesign.

But the wins are real, and they show up where scope is narrow:

Insurance claims: 245% ROI on structured, well-defined tasks

Revenue leakage detection: $5.7M retained, cost less than one senior hire

Sales forecasting: accuracy jumped from 63% to 85%, deal slippage down 28%

Customer support (done right): 55% of tickets resolved autonomously, costs down 32%

The pattern? Tightly scoped. Domain-specific. Clean data. Human escalation built in. Boring? Yes. Profitable? Absolutely.

The Spend Paradox

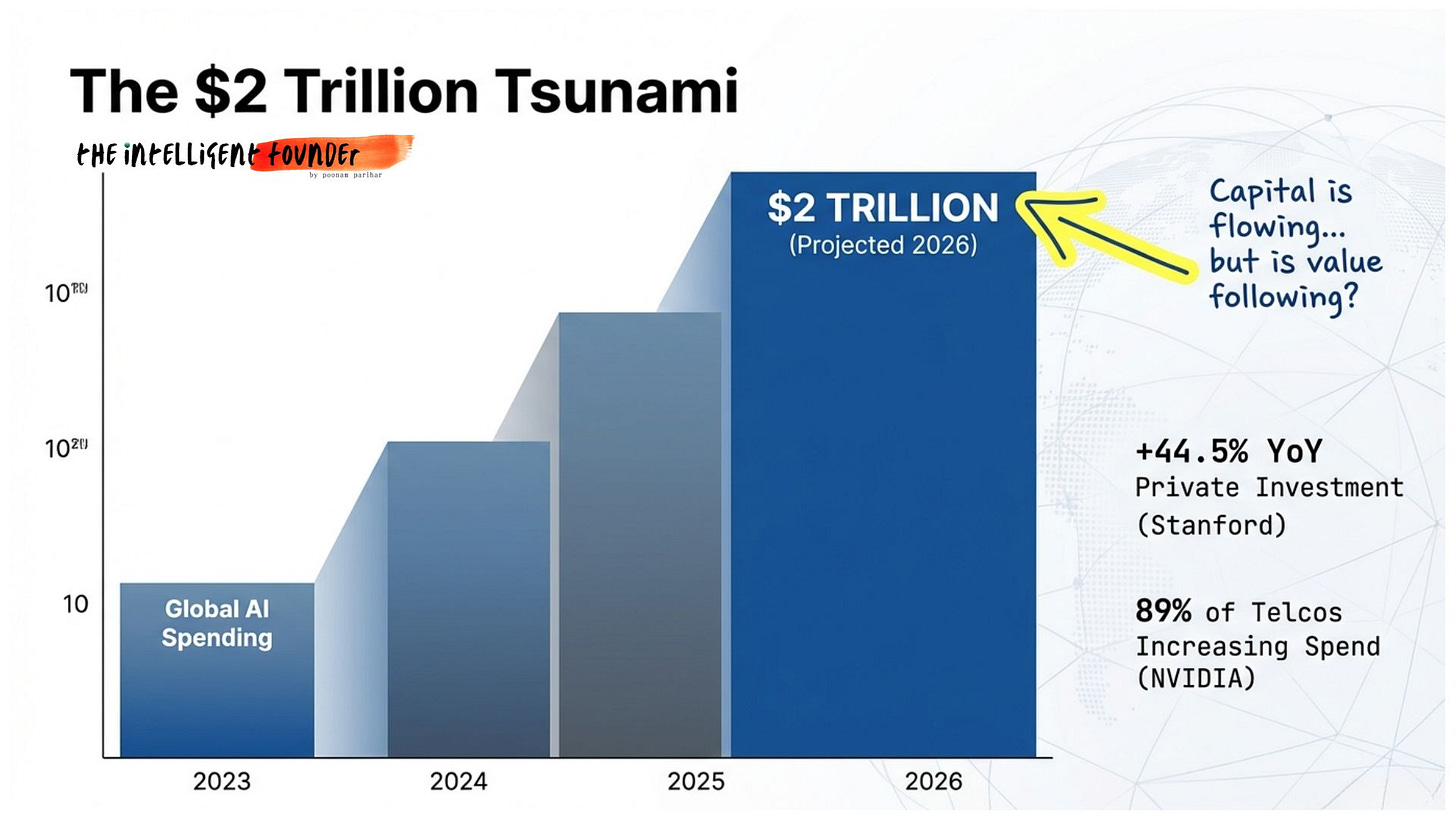

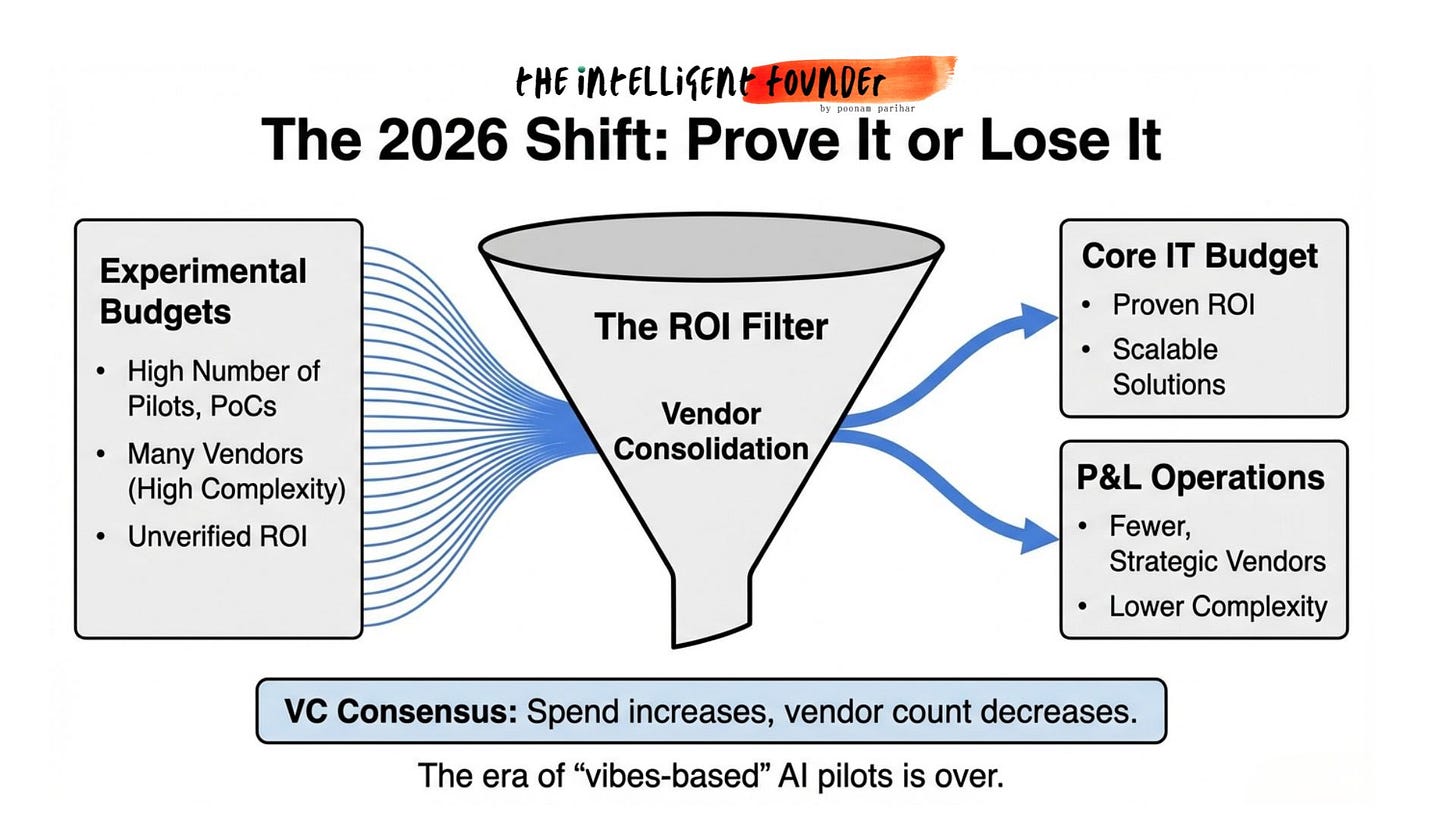

Global AI spending is projected to hit $2 trillion in 2026. But VCs predict vendor consolidation, so more money will flow through fewer vendors.

“Budgets will increase for a narrow set of AI products that clearly deliver results and will decline sharply for everything else.”

But here’s the Gap Nobody’s Naming!

Here’s what none of these reports is actually addressing: the infrastructure visibility problem. Agents are being deployed on top of systems where operators can’t see the majority of what’s actually happening.

The reports talk about adoption. Practitioners talk about failure. But almost nobody talks about the plumbing between “it works in a notebook” and “it works in a live production environment.”

The 6% who are winning aren’t winning because they picked the right model. They’re winning because they built the operational backbone: the orchestration, governance, and infrastructure that lets agents actually run in production, not just in a demo.

Practical Implementation That the Reports Aren’t Covering

Adoption isn’t the problem. Almost everyone has adopted AI to some degree (88%, according to McKinsey). But I wanted to look at some examples of AI deployment at scale, because the report data looked too theoretical otherwise. Here is what I found; these examples are not part of any of the reports we are talking about.

Won:

Morgan Stanley (AI advisor assistant), Accelirate/UiPath (insurance claims), Anysphere/Cursor (AI coding), SK Telecom + Samsung (AI-RAN), Telecom sector broadly (autonomous networks).

Lost:

Klarna (fired 700, rehired humans), S&P Global’s 42% graveyard (enterprises scrapping initiatives), MIT’s 95% (zero P&L impact across $44B in investment).

THE PATTERN

Every winning example shares the same DNA:

Narrow, well-defined task (not “enhance productivity”)

Workflow redesigned around AI (not AI added to step 7)

Clean, structured data or proprietary data advantage

Measurable financial outcome tied to the deployment

Human-in-the-loop where judgment matters

Every failure shares the opposite:

Vague goal (“improve efficiency”)

AI bolted onto broken processes

No governance before scaling

No KPI tracking

Fired people before understanding impact

The pattern is the same every time: the winners redesigned the workflow before deploying, and the losers bolted AI onto what was already broken.

In simple language, the winners deployed AI into production, embedded it into core workflows, and got measurable business outcomes (revenue, cost savings, ROI). The losers adopted AI, ran pilots, and never made it past the proof-of-concept stage, or deployed it recklessly and had to reverse course.
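The winning traits listed above can be turned into a rough pre-deployment checklist. The sketch below is one way to do that, not a method taken from any of the reports: the `DeploymentPlan` class and its field names are hypothetical, and it assumes all five traits count equally, which the examples suggest but do not prove.

```python
from dataclasses import dataclass, fields


@dataclass
class DeploymentPlan:
    # Each flag mirrors one trait from the winners' list; names are hypothetical.
    narrow_task: bool
    workflow_redesigned: bool
    clean_or_proprietary_data: bool
    financial_kpi_defined: bool
    human_in_the_loop: bool


def missing_traits(plan):
    """Names of the winning traits this plan does not yet have."""
    return [f.name for f in fields(plan) if not getattr(plan, f.name)]


def is_ready(plan):
    """Ready only when every trait is present; no weighting is assumed."""
    return not missing_traits(plan)


if __name__ == "__main__":
    # A "bolt-on" project: decent data and human review, but a vague goal,
    # no workflow redesign, and no financial KPI.
    bolt_on = DeploymentPlan(
        narrow_task=False,
        workflow_redesigned=False,
        clean_or_proprietary_data=True,
        financial_kpi_defined=False,
        human_in_the_loop=True,
    )
    print("missing:", missing_traits(bolt_on))
    print("ready:", is_ready(bolt_on))
```

The all-or-nothing rule is deliberate: in the failures above, one missing piece (usually workflow redesign or governance) was enough to sink the project.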

The Bottom Line

AI adoption is universal. AI value capture is not. The technology has arrived. The organizations haven’t.

2026 won’t be the year AI transforms everything. It’ll be the year the shakeout begins: vendor consolidation, governance debt coming due, and pilot graveyards getting cleaned out. The next frontier isn’t a better model. It’s physical AI, sovereign infrastructure, and agentic orchestration at the edge.

The winners won’t be those with the best algorithms. They’ll be those with the best plumbing.

The full podcast digs deeper into all five reports and the gaps between them. Listen to the full breakdown on the Intelligent Founder podcast. Subscribe so you don't miss what comes next.