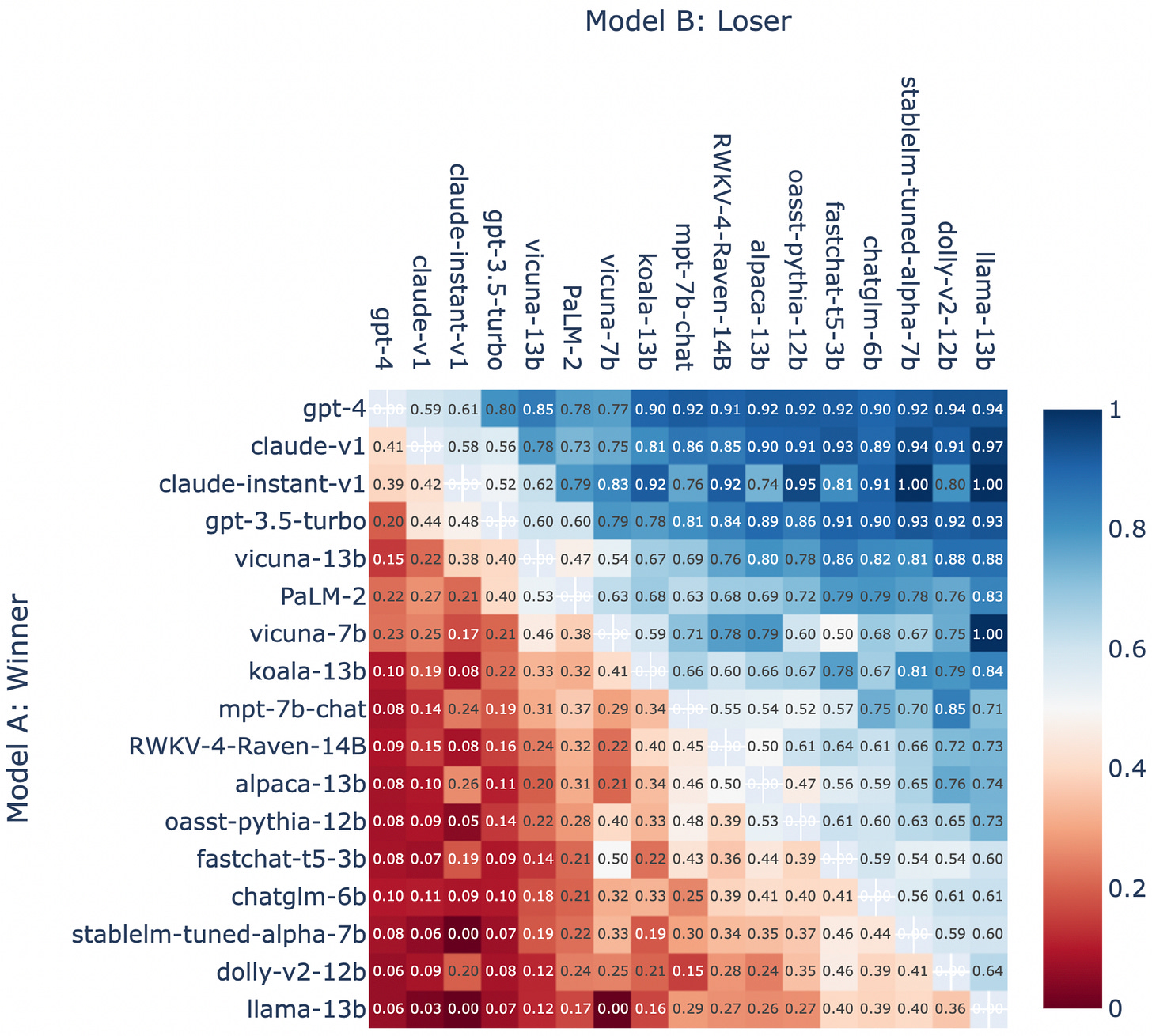

(From DeepSeek Math V2: Mathematical Reasoning LLM outperforming humans and other AIs, to LMArena, an AI evaluation startup, raising $150 million at a $1.7 billion valuation and being called a cancer.)

Three days ago, a benchmark scandal broke: researchers and insiders described how major labs can “cheat a little bit” on public rankings by testing many internal model variants on public arenas, then releasing only the winner.

Yes, it was Meta.

In Meta’s case, internal teams reportedly tested many Llama variants on LMArena and only shipped the top-performing one, inflating leaderboard rankings in ways regular users could not reproduce.
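To see why shipping only the best of many variants inflates a ranking, here is a minimal simulation sketch. It assumes every variant has the same true skill and that each arena measurement adds independent Gaussian noise; the function names, the 1200 baseline, and the 15-point noise level are all hypothetical values chosen for illustration, not figures from LMArena or Meta.

```python
import random
import statistics


def measured_score(true_skill, noise_sd, rng):
    """One noisy leaderboard measurement of a model (assumed Gaussian noise)."""
    return rng.gauss(true_skill, noise_sd)


def best_of_n(true_skill, noise_sd, n_variants, rng):
    """Score of the variant that gets released: the highest of n noisy measurements."""
    return max(measured_score(true_skill, noise_sd, rng) for _ in range(n_variants))


def average_inflation(n_variants, trials=2000, true_skill=1200.0, noise_sd=15.0, seed=0):
    """Average gap between the published best-of-n score and the true skill."""
    rng = random.Random(seed)
    best = [best_of_n(true_skill, noise_sd, n_variants, rng) for _ in range(trials)]
    return statistics.mean(best) - true_skill


if __name__ == "__main__":
    # Hypothetical variant counts; more private attempts means a larger gap.
    for n in (1, 5, 20):
        print(f"variants tested: {n:2d}  average inflation: {average_inflation(n):.1f}")
```

With a single variant the gap averages out to roughly zero; with more private attempts, the published number drifts upward even though no variant is actually better. That is the part regular users cannot reproduce.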

Meanwhile, LMArena itself raised around $150 million at a roughly $1.7 billion valuation and was bluntly described by critics as a ‘cancer’ on the AI ecosystem.

Relying on traditional AI benchmarks for your business is like hiring a candidate solely because they memorized the answers to an old version of the SAT. They may appear brilliant on paper, but they lack the applied skills, reliability, and cost-consciousness required to handle the actual complexities of your specific office environment.

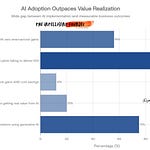

Traditional benchmarks are broken due to high data contamination (up to 67.6%), causing models to memorize answers rather than exhibit true intelligence. They further fail businesses by prioritizing academic trivia over functional utility, such as reliability, instruction-following, and total cost of ownership. Ultimately, these rankings do not predict real-world outcomes or economic value, which are the only metrics that truly matter for actual deployment.
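One common way to estimate contamination is to check whether benchmark items share long word sequences with training text. The sketch below is a simplified illustration of that idea, not the method behind the 67.6% figure; `ngrams`, `contamination_rate`, and the 8-word window are assumptions made for this example.

```python
def ngrams(text, n):
    """Set of n-word sequences in a text (lowercased, whitespace-tokenized)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_rate(benchmark_items, corpus_docs, n=8):
    """Fraction of benchmark items sharing at least one n-gram with the corpus."""
    if not benchmark_items:
        return 0.0
    corpus_grams = set()
    for doc in corpus_docs:
        corpus_grams |= ngrams(doc, n)
    flagged = sum(1 for item in benchmark_items if ngrams(item, n) & corpus_grams)
    return flagged / len(benchmark_items)


if __name__ == "__main__":
    # Toy data, purely illustrative.
    items = ["what is the capital of france", "solve two plus two equals what"]
    corpus = ["quiz: what is the capital of france answer paris"]
    print(contamination_rate(items, corpus, n=4))
```

A real audit would need proper tokenization and access to the training corpus, which is rarely available for closed models. That is part of why contamination is hard to rule out from the outside.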

I first did a deep dive on benchmarks when DeepSeek-Math-V2 dropped in late November 2025, comparing open‑source models to the frontier systems (read this post), and the landscape has shifted dramatically since.

What’s Changed Since November 29

✓ LiveBench standardized » quarterly updates prevent data contamination

✓ Enterprise ROI frameworks » 78% use AI, but only 23% measure ROI properly

✓ FDA pivoted » requesting real-world performance metrics, rejecting static benchmarks

✓ Business value metrics » the 5-metric framework now adopted at enterprise scale

⚠ Benchmark innovation works » LiveBench proves the industry can fix the problem

But what hasn’t changed is that traditional benchmarks are still failing to predict real business value, even as the leaderboard drama has only intensified.

In this first podcast (Episode 001), we investigate why most companies are choosing AI models based on contaminated benchmarks and gamed leaderboards, and why that's costing them millions. From the DeepSeek-Math-V2 breakthrough 40 days ago to this week's explosive $1.7 billion LMArena controversy, this episode reveals the five metrics that actually predict business value and delivers a practical 6-step playbook for evaluating AI on your own data, not someone else's scoreboard.