OpenAI Is Now "Open" for Business

The ChatGPT ads announcement isn’t a pivot - it was always the plan. Here’s what the financials, the hires, and the history tell us about where AI is really headed.

A deep dive into the $300 billion company that said one thing and did another.

I am not mad at ads. Except YouTube’s, of course, because they just go on and on if you don’t click skip, which breaks my flow in whatever I am doing while YouTube plays in the background. I also never had a ChatGPT subscription and never planned to, so this isn’t an emotional “I’m cancelling my ChatGPT subscription” piece. But I am seeing tons of discussion and articles focused on outrage, migration, and skepticism, and I wanted to make a different point. So here we go!

In May 2024, Sam Altman stood at Harvard and said something that now reads like a punchline: “Ads plus AI is sort of uniquely unsettling to me. I kind of think of ads as a last resort for us for a business model.”

Twenty months later, in January 2026, OpenAI announced it would begin testing ads in ChatGPT.

But the real story isn’t Altman’s reversal. It’s what happened in between.

In May 2025, OpenAI quietly hired Fidji Simo as CEO of Applications. Her resume? She led the launch of ads in Facebook’s mobile News Feed, oversaw Facebook’s entire advertising business, and later took Instacart public while building a retail ads business with over 5,000 advertisers.

When you hire someone with that background, you’re not preparing for a “last resort.” You’re building an advertising empire.

In this article I break down what’s really happening:

the financial pressure forcing OpenAI’s hand, the privacy escalation that should concern everyone, and whether the company that promised to save humanity from dangerous AI has quietly abandoned that mission. Or was it always Mission AGI (Ads Generated Income)?

What’s in this article -

📅 The timeline that exposes the contradiction

How OpenAI said one thing and did another

📢 What people are saying online

Reactions from Twitter, Reddit, and LinkedIn

💰 The financial math

Why ads were inevitable, and the $115 billion problem

🔒 The privacy escalation

From what you watch to what you think

🔄 The enshittification playbook

How good platforms turn bad, and whether AI is next

⚠️ The liability nightmare

When health advice meets advertising

🎯 The AGI question

Did they abandon the mission?

🏁 Who stays ad-free?

Your alternatives to ChatGPT

🔮 Predictions

What happens next (2026-2028)

1 - The Social Media Reaction

The ad announcement has set off a wave of backlash across Twitter, Reddit, and LinkedIn, with people basically saying, “Yep, we saw this coming.”

On Twitter/X, paid users were furious that even Pro subscribers paying $200 a month were actually seeing what looked like ads baked into answers, from Peloton plugs in random chats to “shop at Target” suggestions in technical questions.

Sam Altman’s old “ads as a last resort” line was dragged back into the spotlight and mocked as a complete U-turn, and most mainstream chatter is still focused on this.

On Reddit, people argued over whether mixing ads into AI replies would quietly erode trust, while others doubted there would be any real mass exodus, pointing to past internet outrage that never went anywhere.

Some users did note that OpenAI hiring the monetization operative who squeezed maximum ad revenue out of Facebook made this shift feel inevitable, especially for a company valued at $300 billion while burning billions a year, and now turning into Facebook 2.0, or is it 3.0 really?

On LinkedIn, posts compared Altman’s stance to early Google promises about not letting money influence search results and warned investors to treat any “we’ll never do X” claims from AI companies with serious skepticism.

Others said this all looks like the classic Netflix playbook: first “no ads ever,” then ads once everyone is hooked.

Benjamin De Kraker, a former xAI employee, went viral with a post showing ChatGPT suggesting “shop at Target” while he asked about Windows BitLocker. The post got 463,000+ views and sparked widespread concern about ad creep.

Sam Altman’s 2024 quote (“ads as a last resort”) is being widely circulated alongside his January 2026 statement, with users calling it a “spectacular reverse-ferret.”

On r/singularity, users debated whether ads in responses would fundamentally undermine trust in AI: “This should undermine people’s confidence in AI. Sadly, I doubt most users will be particularly concerned about it.”

Notably, r/Futurology users had flagged the Fidji Simo hire early, back in May 2025: “Am I the only one who sees ‘OpenAI hired the person who optimized the biggest social network for ad revenue’ and thinks ‘oh no’?”

On r/ValueInvesting, users questioned the $300B valuation while burning $5B+ annually, calling the economics “extraordinary” and unsustainable without new revenue streams.

The Trust Problem: Paid Tiers Aren’t Safe

The deepest concern isn’t the ads themselves, it’s whether they’ll creep into paid tiers.

OpenAI initially claimed these were “app integrations, not ads.” But Chief Research Officer Mark Chen later admitted: “anything that feels like an ad needs to be handled with care, and we fell short.”

The concern is that this setup is so deceptive most people won’t even notice it, and many say they’re already canceling OpenAI and moving to Claude or DeepSeek, which feel good enough (for now) without the ad worries.

2 - The Financial Reality - Why They Have No Choice

Before we look at the timeline of events I briefly shared in the introduction, let’s look at the numbers first, because they basically explain everything: OpenAI really has no choice. And you can correct me in the comments if I am wrong here.

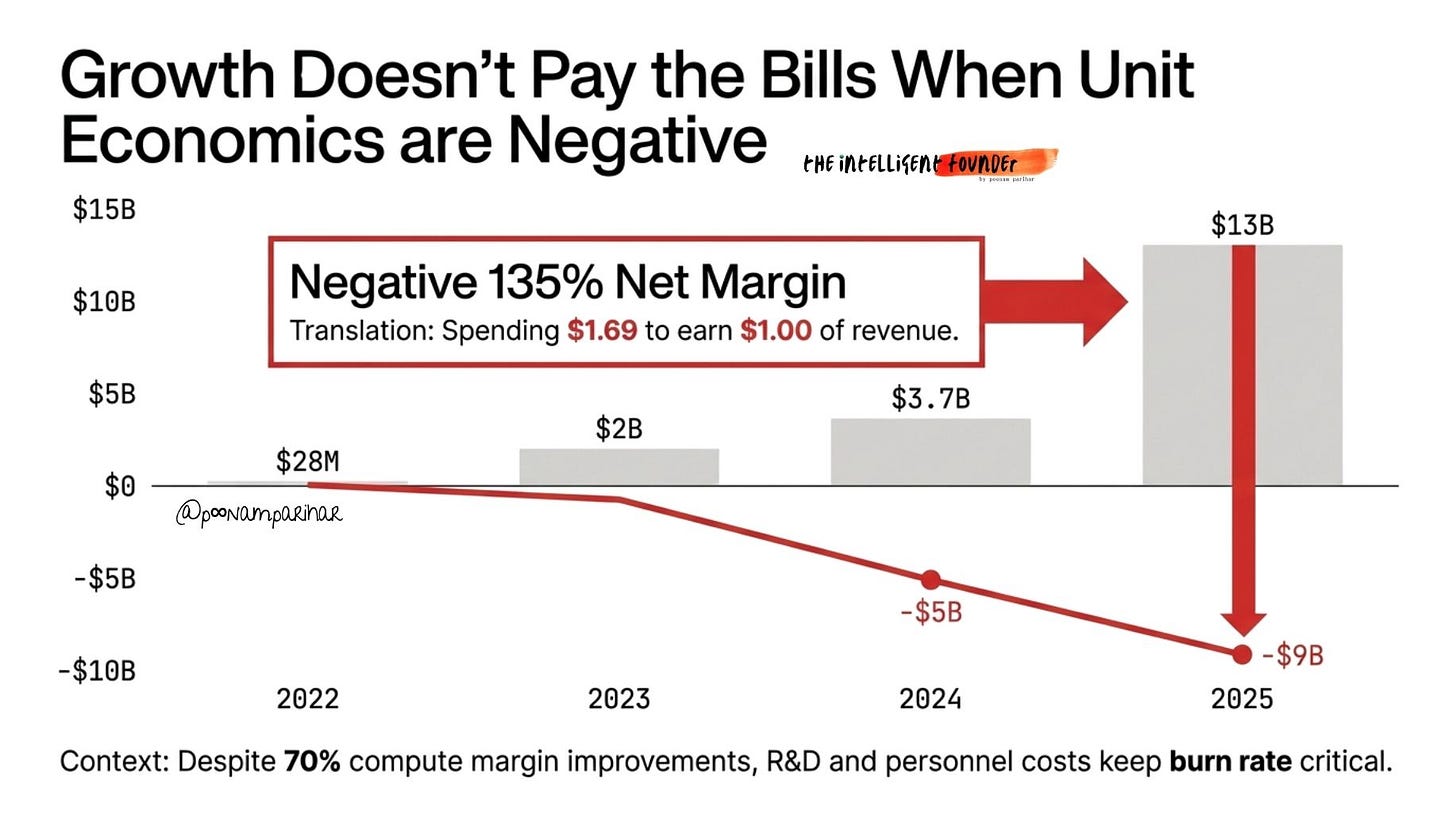

OpenAI’s revenue growth has been extraordinary: from $28 million in 2022 to $2 billion in 2023, $3.7 billion in 2024, and approximately $13 billion in 2025. They also confirmed their annualized revenue crossed $20 billion by the end of 2025.

Now this is a 464x increase in three years, faster than almost any software company in history.
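If you want to check that multiple yourself, here is a minimal Python sketch. The dictionary and helper names are my own and purely illustrative; the only real inputs are the revenue figures quoted above, and the compound growth rate is just one way of annualizing them.

```python
# Revenue figures quoted in this article, in millions of US dollars.
# The names below are illustrative; only the numbers come from the text.
REVENUE_MUSD = {2022: 28, 2023: 2_000, 2024: 3_700, 2025: 13_000}


def growth_multiple(start: float, end: float) -> float:
    """How many times larger `end` is than `start`."""
    return end / start


def compound_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


multiple = growth_multiple(REVENUE_MUSD[2022], REVENUE_MUSD[2025])
yearly = compound_annual_growth(REVENUE_MUSD[2022], REVENUE_MUSD[2025], years=3)

print(f"2022 to 2025: about {multiple:.0f}x")
print(f"Implied compound growth: about {yearly:.0%} per year")
```

On these inputs the multiple comes out at roughly 464x, which is where the number above comes from.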

So what’s the problem exactly?

The growth alone doesn’t pay the bills when you’re spending faster than you’re earning.

In 2024, OpenAI lost $5 billion on $3.7 billion in revenue - a negative 135% net margin, meaning they lost $1.35 for every dollar earned, or put differently, spent roughly $2.35 for every dollar earned.

In 2025, despite more than tripling revenue to approximately $13 billion, they still lost an estimated $9 billion, spending roughly $22 billion against $13 billion in sales. That’s still spending $1.69 for every $1 earned.
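The per-dollar figures are easy to mix up, so here is a small sketch of the arithmetic. It assumes the simplest possible model, where spending equals revenue plus the reported loss; the function names are mine, and the inputs are the figures quoted in this section. Note that a loss of about $1.35 per dollar of revenue implies spending of about $2.35 per dollar, since you have to spend the revenue itself first.

```python
def net_margin(revenue_bn: float, loss_bn: float) -> float:
    """Net margin as a fraction of revenue (negative when losing money)."""
    return -loss_bn / revenue_bn


def spend_per_dollar(revenue_bn: float, loss_bn: float) -> float:
    """Dollars spent per dollar of revenue, assuming spending = revenue + loss."""
    return (revenue_bn + loss_bn) / revenue_bn


# (revenue, loss) in billions of US dollars, as quoted in the article.
YEARS = {2024: (3.7, 5.0), 2025: (13.0, 9.0)}

for year, (revenue, loss) in YEARS.items():
    print(
        f"{year}: net margin {net_margin(revenue, loss):.0%}, "
        f"spent ${spend_per_dollar(revenue, loss):.2f} per $1 earned"
    )
```

So on these estimates the ratio improved between 2024 and 2025, but every dollar of revenue still cost well over a dollar to produce.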

The first half of 2025 alone saw $4.3B revenue against $2.5B cash burn, $6.7B R&D spend, and $2.5B in stock compensation.

The User Base: Is it an Asset or Liability?

ChatGPT’s user growth has been staggering:

November 2024: 200 million weekly active users

February 2025: 400 million weekly active users

March 2025: 500 million weekly active users

August 2025: 700 million weekly active users

October 2025: 800 million weekly active users
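To put a rate on that growth, here is a rough sketch using only the first and last data points in the list above. The names are illustrative, and the "average monthly growth" is a simplifying assumption of smooth compounding between November 2024 and October 2025, which real user growth certainly was not.

```python
# Weekly active users in millions, as listed above, keyed by (year, month).
WAU_MILLIONS = {
    (2024, 11): 200,
    (2025, 2): 400,
    (2025, 3): 500,
    (2025, 8): 700,
    (2025, 10): 800,
}


def months_between(start: tuple[int, int], end: tuple[int, int]) -> int:
    """Whole months from one (year, month) pair to another."""
    return (end[0] - start[0]) * 12 + (end[1] - start[1])


def monthly_growth(start_users: float, end_users: float, months: int) -> float:
    """Constant monthly growth rate linking the two user counts."""
    return (end_users / start_users) ** (1 / months) - 1


points = sorted(WAU_MILLIONS)
first, last = points[0], points[-1]
span = months_between(first, last)
rate = monthly_growth(WAU_MILLIONS[first], WAU_MILLIONS[last], span)

print(f"{WAU_MILLIONS[last] / WAU_MILLIONS[first]:.0f}x in {span} months")
print(f"Roughly {rate:.1%} average growth per month")
```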

But here’s the problem: the vast majority of these users are on the free tier. Each query costs money to serve, with estimates ranging from $0.30 to $0.50 for complex queries. You see the need for ads now?

With 800 million weekly users, the free-user base represents either a massive liability (compute costs) or a massive asset (eyeballs to monetize).

OpenAI has chosen to monetize.

The Path Forward: $115 Billion in Losses

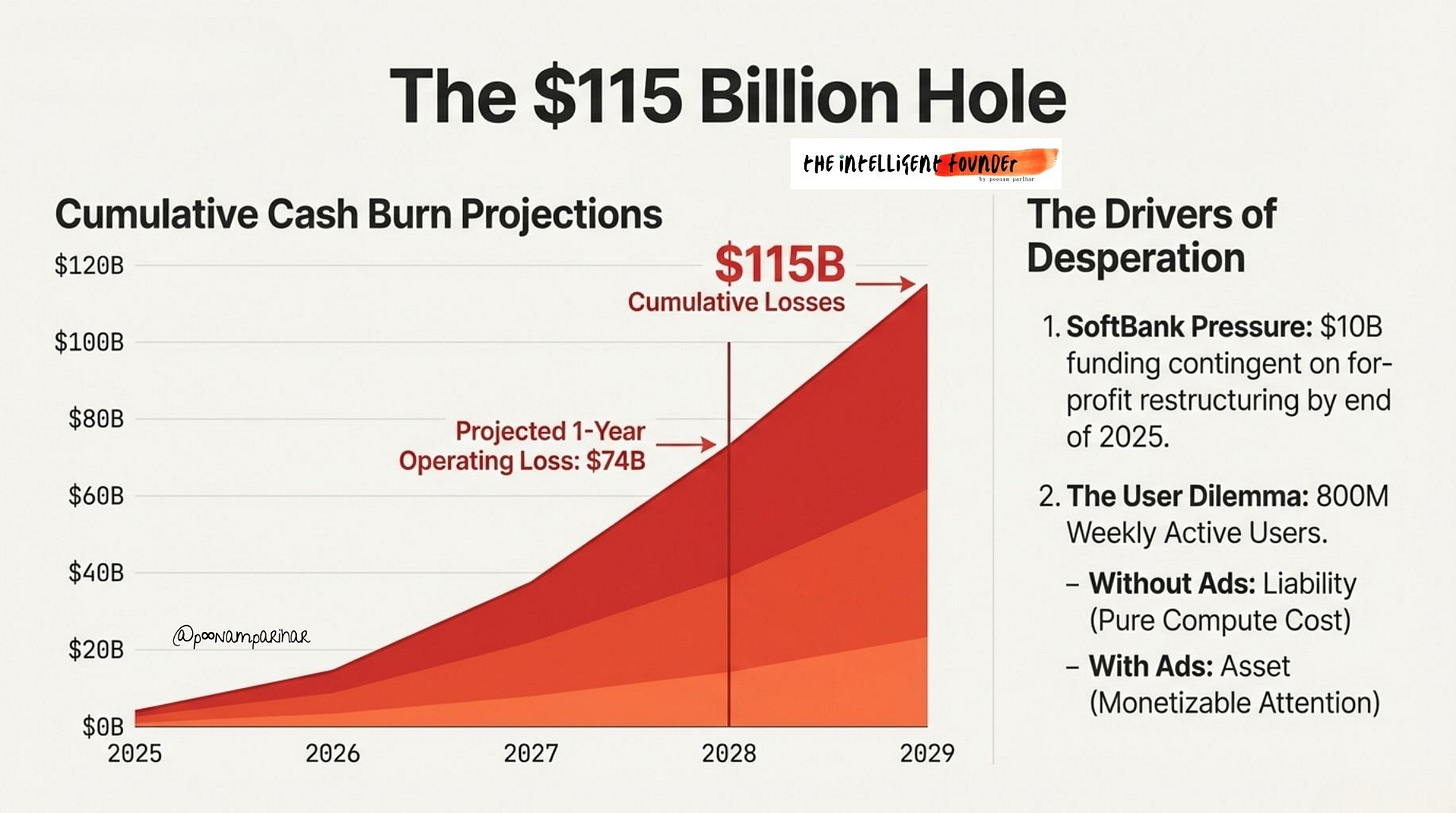

According to financial documents shared with investors, the path to profitability is brutal:

2026: Projected $17 billion cash burn

2028: Expected $74 billion operating loss in that year alone

Cumulative losses through 2029: Approximately $115 billion

Path to profitability: Cash flow positive targeted for 2029 or 2030, with approximately $200 billion annual revenue by 2030

One analyst noted that at current burn rates, OpenAI could run out of cash by mid-2027 without additional funding.

Valuation and Funding Pressure

OpenAI is now valued at about $300 billion after a $40 billion round in April 2025, mostly led by SoftBank, with Microsoft and a few big funds joining in. That money isn’t free, though. OpenAI had to finish turning fully for‑profit by the end of 2025 or SoftBank could pull $10 billion, and Microsoft, which has put in around $13 billion, takes 20% of OpenAI’s revenue.

So the threat was there, yes, but SoftBank actually completed the full investment. So what changed their mind?

One Positive Sign, However, Is Margin Improvement

Compute margins improved to 70% in October 2025, up from 52% at end of 2024 and double the January 2024 rate. This suggests OpenAI is getting more efficient at serving queries, but not efficient enough to close the gap between revenue and spending.

3 - The Timeline Says OpenAI Was Building Ad Infrastructure While Publicly Dismissing the Idea

Let’s now take a look at OpenAI’s public messaging versus what they were doing behind the scenes.

The Sequence of Events

May 2024: Altman calls ads a “last resort” at Harvard University

May 2025: OpenAI hires Fidji Simo as CEO of Applications, reporting directly to Altman

October 2025: Reports emerge that OpenAI spent $1 billion on tools that optimize ad load

December 2025: First “app suggestions” appear in ChatGPT, sparking backlash

December 2025: OpenAI admits it “fell short” and disables the suggestions

January 2026: OpenAI officially announces ads testing in Free and Go tiers in the US, with “strict guardrails” around privacy and answer independence

No need to repeat who Fidji Simo is.

It’s a bit bizarre that I didn’t pay much attention to her, or look at her background in detail, until a couple of days ago when the Thinking Machines cofounders news broke. I also learned there’s a lot of criticism of her: she helped build Facebook’s ad machine during years when the company was under heavy fire for how it handled user data and growth-at-all-costs engagement. Her reputation now follows her, so critics see her move to OpenAI as importing that same aggressive monetization mindset into AI.

The report that OpenAI spent $1 billion on tools that optimize ad load reinforces this interpretation. You don’t spend a billion dollars preparing for a “last resort.”

All of this suggests ads were planned well before the “last resort” rhetoric ended.

January 2026: The Official Reversal

Altman’s statement on X when announcing ads: “It is clear to us that a lot of people want to use a lot of AI and don’t want to pay, so we are hopeful a business model like this can work.”

The framing shifted from “uniquely unsettling” to “what people want.”

I am guessing the PR team got their paycheck?

Oh, and I won’t even go into their ad principles statement, honestly. I’ll let you be your own judge.

4 - From What You Watch to What You Think

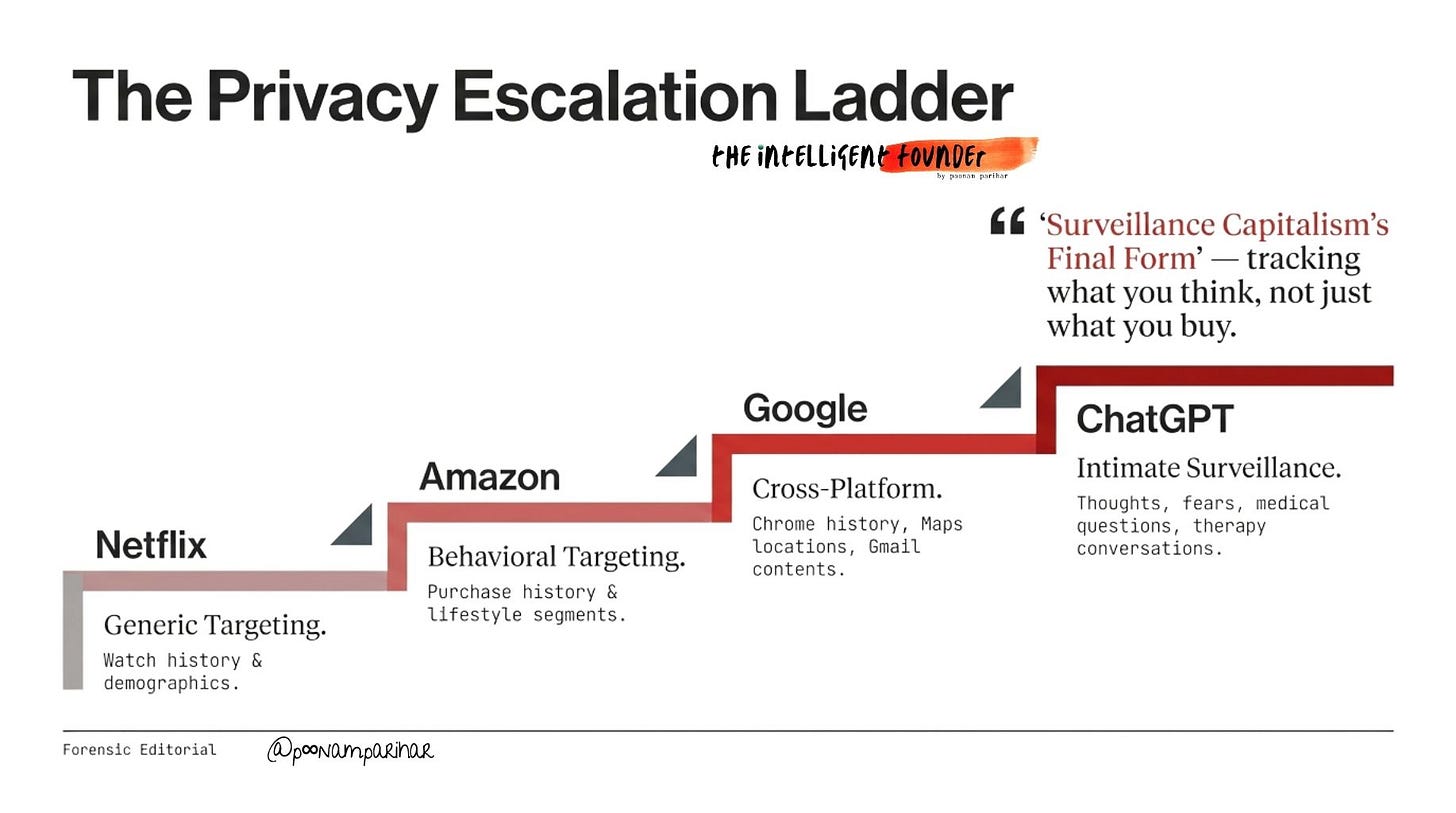

Now this is where it gets even more interesting and dangerous. To understand why ChatGPT ads are fundamentally different from previous ad-supported products, we need to trace how advertising has evolved across platforms.

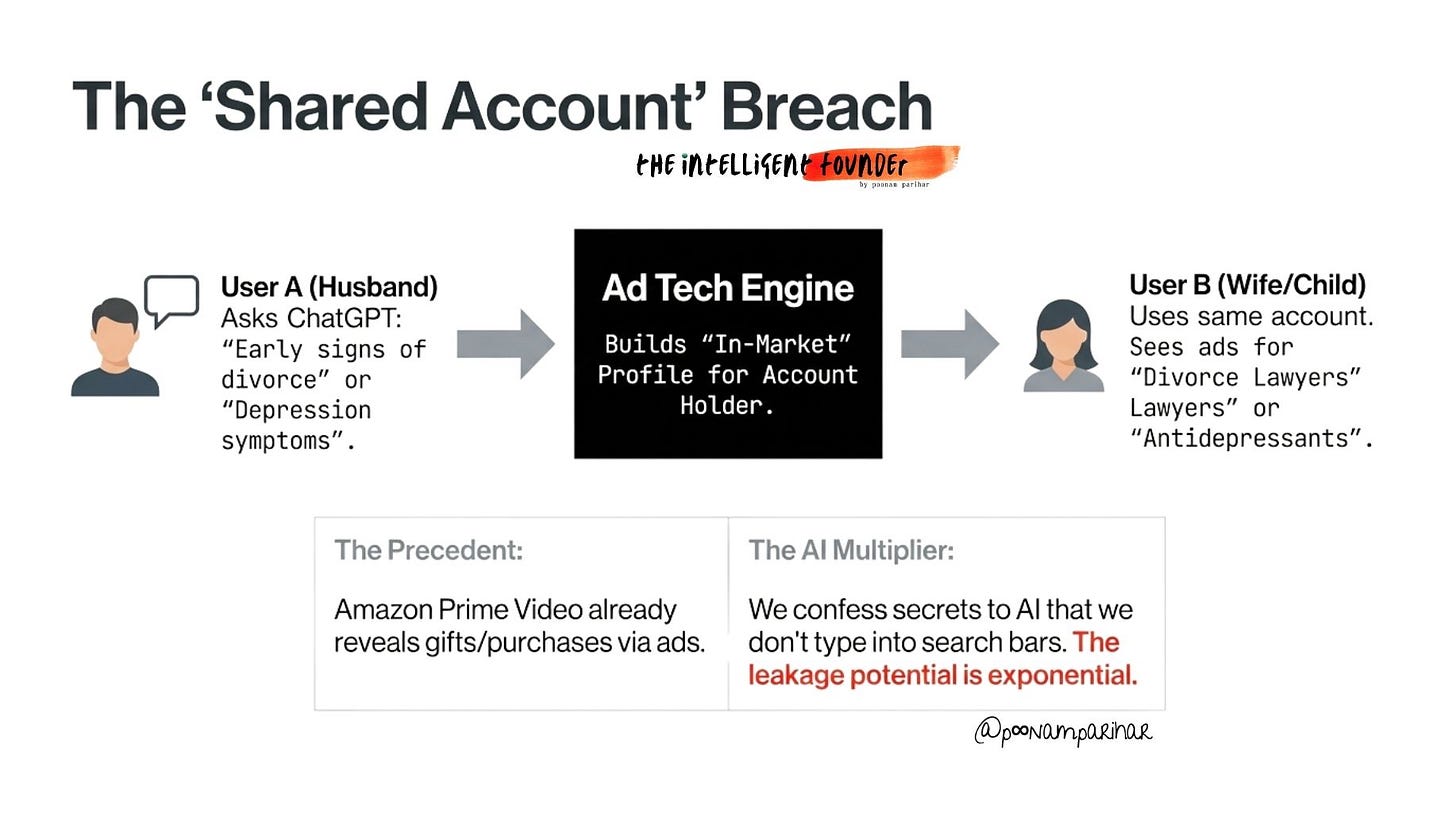

Netflix mostly shows broad, contextual ads based on what you’re watching (like kitchen gear during a cooking show), so they often feel random, which is basically a privacy feature, not a bug. Amazon Prime Video, however, is the opposite, and this comes from my own experience: I was shown ads literally out of my shopping cart, and sometimes from my purchase history. On top of that there is, of course, browsing data, product page visits, wishlists, and “lifestyle segments,” which let brands retarget you on your TV and build lookalike audiences of high‑value customers.

Shared accounts create a quiet privacy leak inside families:

if you buy something sensitive on Amazon, other people using the same Prime Video account can see ads based on your purchase history, with no real separation between who bought what and who sees what.

Google AI Mode: The Next Level

A similar pattern shows up with Google:

Chrome’s Topics API and Shopping Insights track what you browse and shop for (including on Amazon), then turn that into interest labels and price alerts, giving Google a detailed view of what you’re in the market for across the web.

Google’s AI Mode uses your browsing history for customization.

Security experts warn this creates “deeply personalized profiles” that could be vulnerable if hacked, subpoenaed, or leaked.

Data collected includes:

Gmail content

YouTube watch history

Google Maps check-ins

Search queries

AI responses and feedback

All of this is retained for up to 18 months.

ChatGPT Atlas: “Surveillance Capitalism’s Final Form”

People ask AI assistants things they wouldn’t search on Google:

“I think I have depression, what should I do?”

“How do I tell my spouse I want a divorce?”

“I’m having financial problems, should I declare bankruptcy?”

“I hate my job, how do I quit without burning bridges?”

“I think my teenager is using drugs, what are the signs?”

If that conversational data is used for ad targeting, even indirectly, we’ve moved from tracking what you buy to tracking what you think, fear, and struggle with.

OpenAI’s ChatGPT Atlas browser introduces “browser memories” and “agent mode”: the AI recalls past user activity and can execute tasks autonomously.

Security experts at Huntress found that model training on user data was enabled by default despite indications to the contrary.

Search has always been surveillance.

AI search made it intimate surveillance. Atlas takes another step towards total surveillance. It tracks everywhere you go and what you think, want, and feel.

The result? Surveillance capitalism’s final form: AI so helpful, so conversational, so human-feeling that users willingly divulge intimate details while handing over browser-level access to their entire digital life.

5 - The Enshittification Playbook

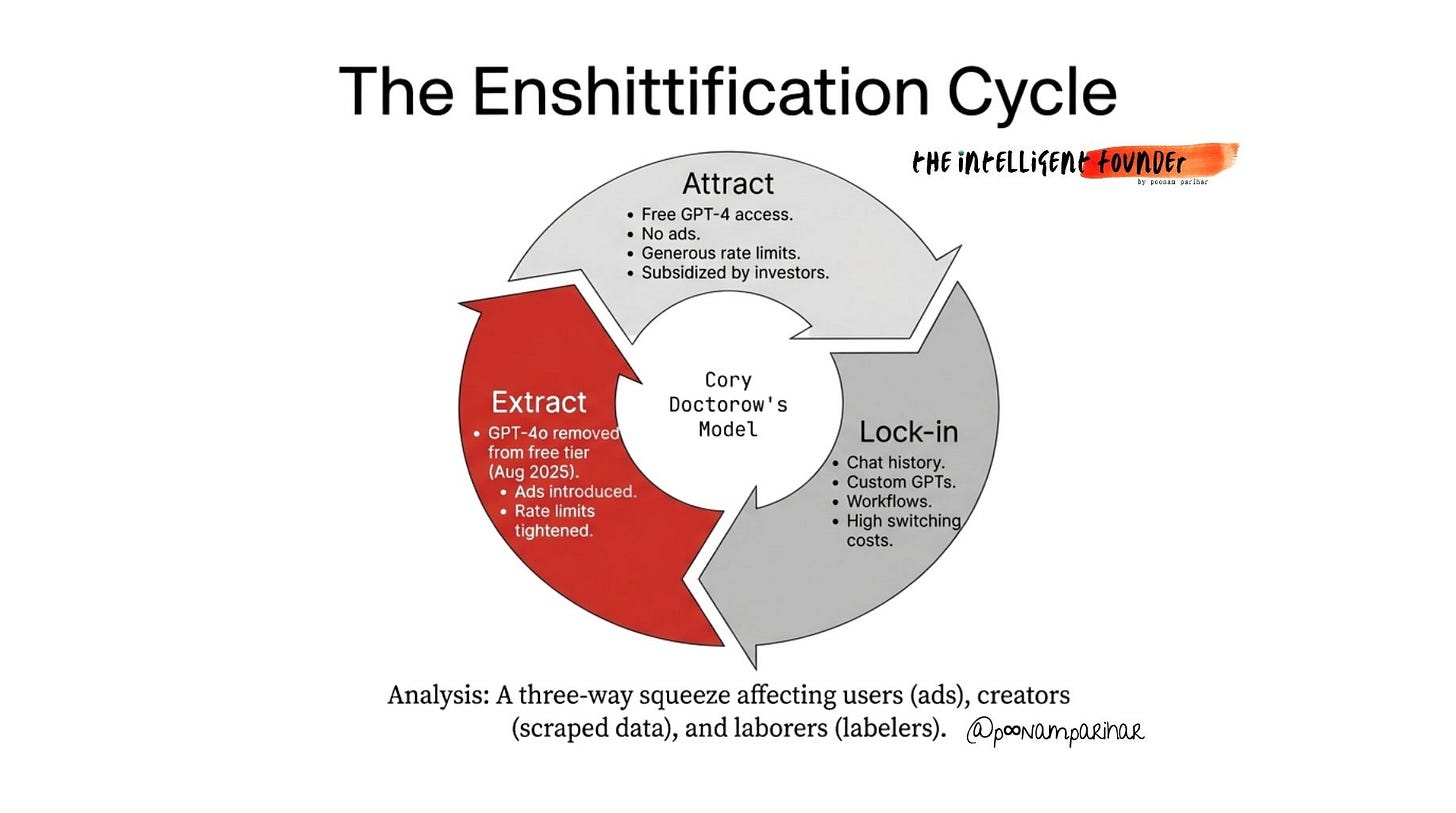

So I came across the term “enshittification,” coined by Cory Doctorow, a Canadian-British writer and tech activist known for his work with the Electronic Frontier Foundation and his long-running tech commentary. He coined it to describe how platforms start out good for users, then lock everyone in and gradually worsen the experience to serve advertisers and shareholders, and it fits this case exactly.

The Three Stages

Stage 1: Good for Users (2022-2024)

Free GPT-4 access with generous rate limits

No advertising

Rapid innovation and feature releases

The goal: acquire users at any cost

Stage 2: Lock Users In (2024-2025)

Conversation history becomes valuable (you don’t want to lose it)

Custom GPTs create investment in the platform

Workflows and integrations make switching costly

Memory features deepen the lock-in

API integrations tie businesses to the platform

Stage 3: Extract Value (2025-2026)

Rate limits tighten on free tier

GPT-4o removed from free tier, replaced with GPT-4o-mini

Ads introduced

Premium pricing increases

Interface increasingly pushes paid features

Why It’s Happening Now

AI has a three-way squeeze that makes enshittification worse than Facebook’s two-sided market:

Users get paywalled, rate-limited, and shown ads

Creative workers get their content scraped for training, then competed against by the AI

Invisible workers in developing countries doing data labeling for training get exploited with low wages

The enshittification is accelerating because:

Competition is consolidating: Training frontier models costs hundreds of millions of dollars, limiting who can compete

Regulatory capture is baked in: Big AI companies are writing the rules

Users can’t easily leave: Conversation history, custom GPTs, and workflows create switching costs

The “Slop Singularity”

AI-generated content is already flooding the web: SEO spam, fake reviews, synthetic media, AI-written articles. And if ChatGPT’s answers are influenced by ads, it accelerates the degradation of information quality across the entire internet. The tool people use to escape the ad-polluted web is now becoming part of the pollution.

The Existing Liability Problem

ChatGPT already restricted medical, legal, and financial advice in late 2025 due to liability concerns. The company added disclaimers and reduced the specificity of advice in sensitive domains.

OpenAI claims “answers won’t be influenced” by ads. But how do you verify that? The whole point of sophisticated ad integration is that it’s not obvious.

And who’s liable when something goes wrong?

If a user follows AI-generated health advice that happened to align with an advertiser’s interests, and they’re harmed, who pays?

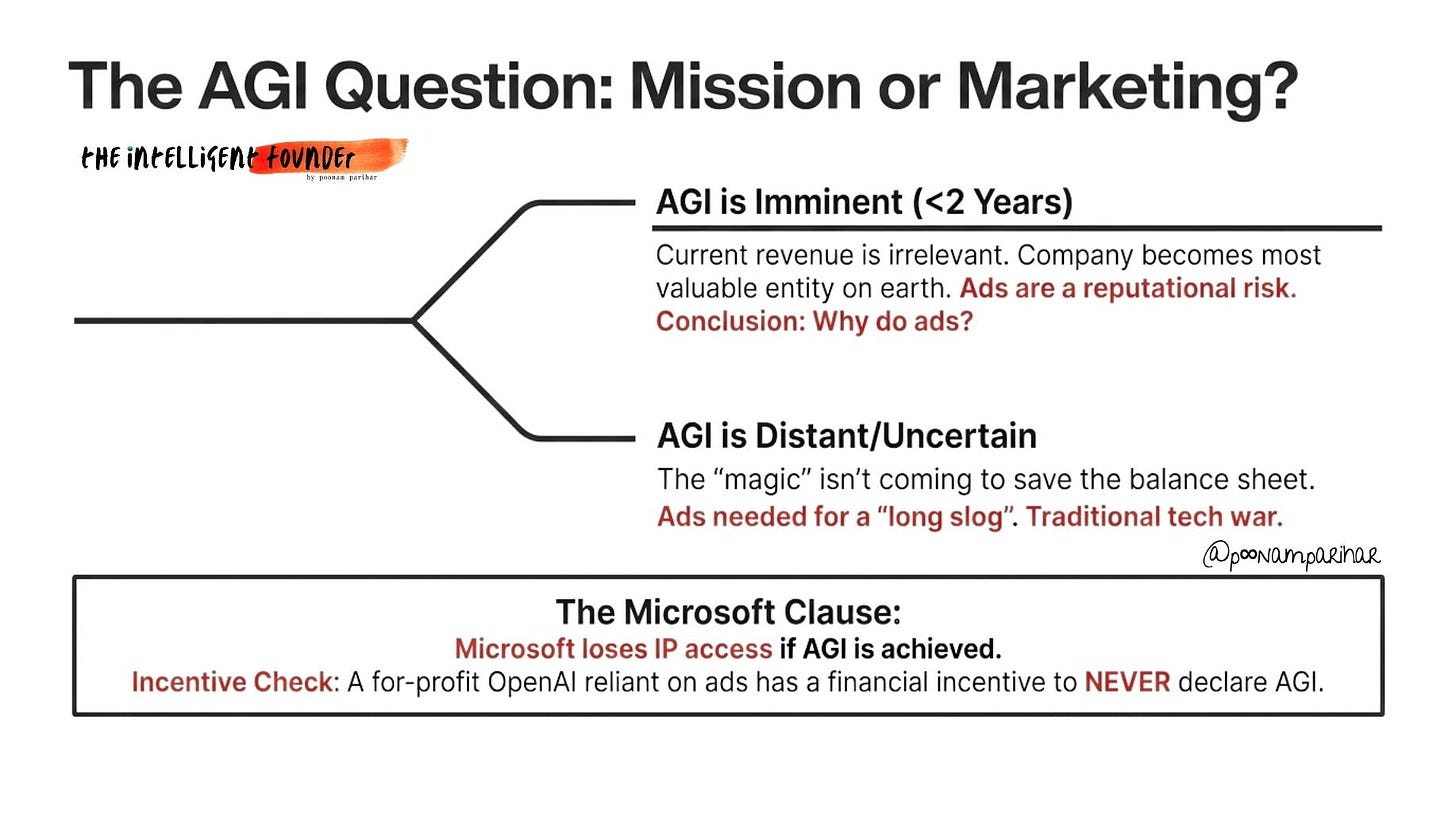

6 - Mission AGI - Is It Abandoned or Was It Never Real?

OpenAI’s Structural Transformation

2015: OpenAI founded as a nonprofit with the mission “to ensure AGI benefits all of humanity”

2019: OpenAI LP created as a “capped-profit” structure, allowing outside investment

2023: Microsoft invests $13 billion, becoming OpenAI’s largest backer

2025: For-profit restructuring required by SoftBank deadline; safety teams disbanded

2026: Ads announced; transformation to commercial entity complete

OpenAI has quietly gutted its safety guardrails -

the Superalignment and AGI Readiness teams are gone, Miles Brundage has left, and models like GPT‑4.1 reportedly shipped with shorter testing and without promised safety reports, reinforcing the sense that commercial priorities are now winning out over safety.

Altman still talks about near‑term AGI, but the ad push suggests OpenAI is planning for a long, expensive slog (and fierce competition), not a 2‑year miracle where revenue barely matters. That tension is also sharpened by Microsoft’s deal: once OpenAI’s board officially declares AGI, Microsoft loses access to that tech, which critics say creates a strong incentive to keep moving the goalposts instead of ever saying “AGI is here.”

The Existential Business Problem

OpenAI is also in a uniquely fragile spot: unlike Google, Meta, or even xAI, it has no legacy cash machines like Search, YouTube, or Instagram to fall back on. It basically has ChatGPT and its models, or nothing.

Analysts warn that unless OpenAI stays exceptionally far ahead on model quality, rivals like Claude, Gemini, and DeepSeek will erase its edge and with it the entire basis for a $300 billion valuation.

The Irony -

OpenAI’s founding pitch was - “We need to build AGI safely, outside the pressures of shareholder capitalism.”

2026 reality:

Restructured to for-profit

Hired Facebook’s ad architect

Adding ads to intimate conversations

Disbanded safety teams

$300 billion valuation

SoftBank and Microsoft as major stakeholders

The company that was founded to save humanity from dangerous AI built by profit-driven corporations has become the profit-driven corporation.

7 - Who Stays Ad-Free? The Competitor Landscape

For users, the real strategic question is very simple:

which LLM will promise no ads ever?

Anthropic’s Claude is the most plausible contender.

Its business is enterprise/API-first, its brand is “safety and trust,” and it doesn’t need consumer ads the way OpenAI or Google do. Perplexity could lean into an “ad-free search” niche, while DeepSeek is cheap and ad-free today but comes with its own geopolitical trust issues.

Gemini, plugged into Google’s ad machine, is almost guaranteed to be heavily ad-driven.

But there’s also a quieter risk for developers -

if OpenAI ever pushes ad-related terms into its API or nudges apps toward showing ads, startups may flee to Claude or other providers, creating a split where businesses can buy their way out of ads while ordinary users get the enshittified version.

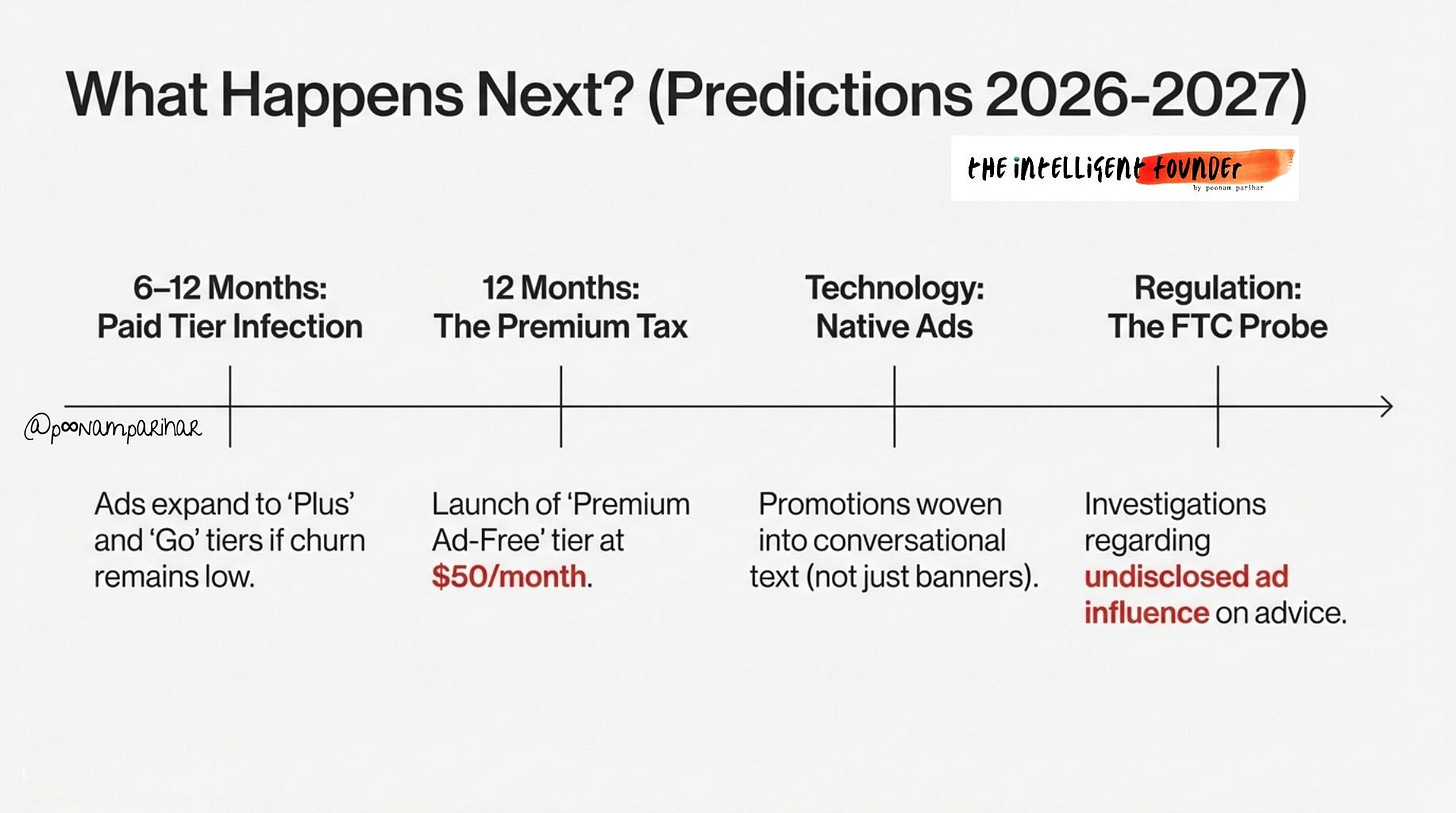

8 - What Happens Next?

The ad playbook from Google, Facebook, and Netflix makes the next steps a no-brainer, really. OpenAI will likely expand ads beyond the free tier within a year, introduce a pricey “premium ad‑free” plan, and face at least one big rival (probably Anthropic) staking out a clear “no ads ever” position.

But if ad revenue disappoints, expect ads to creep into mid-tier plans and the responses themselves (we might pray!), triggering regulatory scrutiny and, over time, a privacy‑driven migration toward cleaner alternatives and new rules around AI advertising in sensitive domains.

In the end, everything hinges on the churn.

If most users grumble but stay, OpenAI can keep pushing the ad lever.

If people actually leave, the company will be forced to pull back.