The 2026 AI Operating System: Stop Shipping Demos, Start Shipping EBIT

Why the ‘speed is your moat’ playbook broke in 2025, and the 6-week framework to build a product your customers can’t live without.

The 2025 Reality Check:

Burn Rate: AI startups spent capital 2x faster than previous SaaS generations.

The Survival Gap: While 95% of pilots failed, a “5% Club” achieved 300%+ ROI.

The Moat Myth: Model performance increased 4x, but startup defensibility dropped to near-zero.

If your 2026 resolution is to “move faster” than you did last year, you’ve already lost. In 2025, we learned the hardest possible lesson » Speed isn’t a moat when everyone is fast; it’s just a very expensive way to fail.

Last week, we picked through the $260 billion wreckage of that “speed-first” era - a brutal 95% pilot failure rate that left many founders feeling a heavy sense of “AI fatigue.” But today, we’re done looking at the smoke. We’re building the vehicle to drive past it. This isn’t just a retrospective; we are installing a new AI Operating System for 2026.

⚡ What’s Inside:

The 2025 Stress Test: Why so many AI initiatives hit the “Deployment Wall” and how major bets burned $680M+ by solving the wrong problems.

The ROI Gap (a.k.a. the Money Trap): Why AI models got 91% cheaper, but 84% of companies still saw their margins erode.

The “High Performer” Secret: Why the “best model” is now a liability, and how to build a “Workflow Anchor” your customers can’t live without.

The AI Profit Filter: Five questions to vet an AI idea in 60 seconds before it consumes $500K of your runway.

The 6-Week Playbook: The exact timeline the “5% Club” uses to move from a raw idea to measurable ROI.

The Case Studies: 10 deconstructed bets to learn from and 5 real-world success stories to copy.

1. The 2025 Stress Test - (The Model vs. the Workflow, and Why the $680M Bets Broke)

To build the 2026 OS, we first need to deconstruct why 2025’s biggest bets hit a wall. Every one of the ten failures below repeated the same core mistake » They prioritized “The Model” over “The Workflow.” They focused on the ‘Brain’ (intelligence) but ignored the ‘Nervous System’: how that intelligence actually connects to a daily habit. Here is the full breakdown:

Builder.ai:

They prioritized the output of a model that didn’t exist yet.

By using offshore humans to hide AI flaws, they built a ‘Model’ that had the unit economics of a service agency, not a software company.

Burned $445M before bankruptcy.

Humane: A $241M wearable AI pin that faced a massive adoption barrier because it was a solution in search of a problem. Why?

They built a ‘Brain’ you could wear, but forgot the ‘Workflow.’

They assumed people would change their daily habits (talking to a pin) just to access AI, rather than embedding the AI into the habits people already had (the smartphone).

Sold for less than half its funding.

Noogata:

They built impressive ‘Intelligence’ dashboards that provided ‘Wow’ moments for CEOs, but they failed to anchor that data into a specific, high-frequency workflow that a line manager couldn’t live without.

Landed giants like PepsiCo but hit the “Deployment Wall.”

They had logos, but no production revenue, proving that “insights” without ROI are easy to cut.

HuggingChat:

They chased the ‘Model Interface’ (Chat) because it was the hyped workflow of 2024, instead of leaning into their true workflow anchor: the developer infrastructure that powers the rest of the industry.

Faced a Moat Distraction by trying to compete on the consumer front-end with OpenAI instead of defending their infrastructure dominance.

Yara AI: In high-stakes industries, the ‘how’ of safety matters more than the ‘what’ of the AI’s answer. Yara got that order backwards.

A Compliance Dead-End.

They prioritized a ‘Mental Health Model’ without solving for the ‘Regulatory Workflow.’

The risk of AI hallucinating mental health advice made liability insurance cost-prohibitive.

No. 6-10 (The Silent Majority)

CodeParrot: Suffered from Pivot Exhaustion in a market dominated by incumbents like GitHub Copilot.

Locale.ai: Hit an Invisible Ceiling in a niche market that couldn’t support the escape velocity VCs demand.

Subtl.ai: Technically superior RAG but hit the Deployment Wall by trying to solve too many industry problems at once.

Tune AI: Suffered from Platform Delusion, attempting to build middleware that the cloud giants (AWS/Google) eventually gave away for free.

Astra: A Silent Shutdown - representative of the invisible majority that ran out of runway without finding a workflow anchor.

These are just 10 examples, but in 2025 this story played out over 1,000 times. Companies that raised millions, hired brilliant engineers, and landed impressive customer logos... all gone. The total damage? $260 billion in burned venture capital.

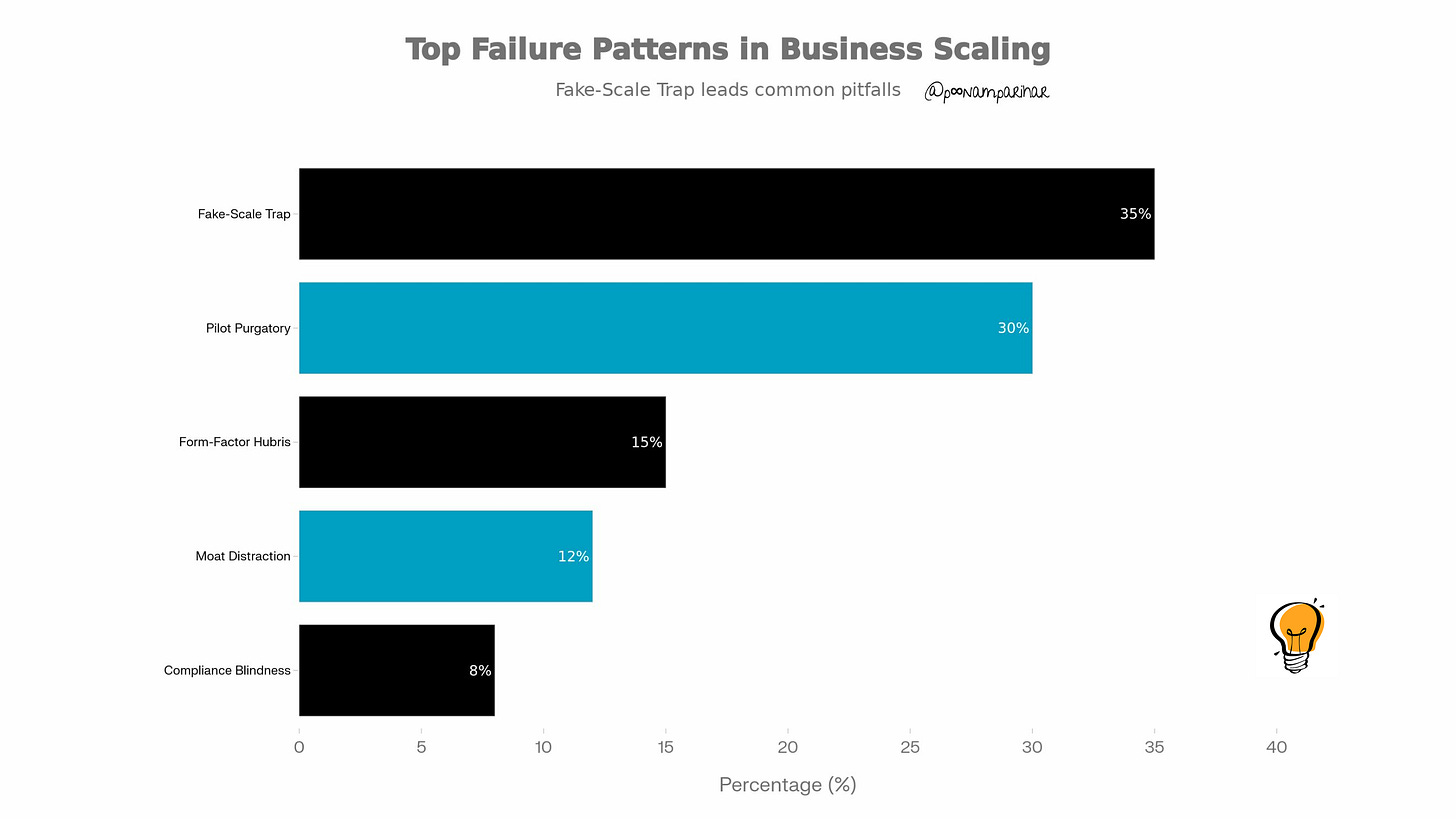

Look closely at these cases and a pattern emerges: they weren’t failures of intelligence, but failures of incentive alignment. Startups like Builder.ai fell into the Fake-Scale Trap because their business model demanded the margins of software, but their technology only offered the output of a service. They used venture capital to subsidize human labor, hoping the AI would ‘catch up’ before the cash ran out. Well, it didn’t.

Similarly, the Pilot Purgatory seen at companies like Noogata happened because founders focused on ‘the demo’, the magical moment when a CEO sees a dashboard and says ‘wow.’ But ‘wow’ doesn’t pay the bills. Without a Workflow Anchor that solves a boring, high-frequency problem, these pilots were treated like ornaments: nice to look at, but the first thing to be packed away when the economic weather turned cold.

2. Is "Cheap" AI Bleeding You Dry? - The ROI Gap

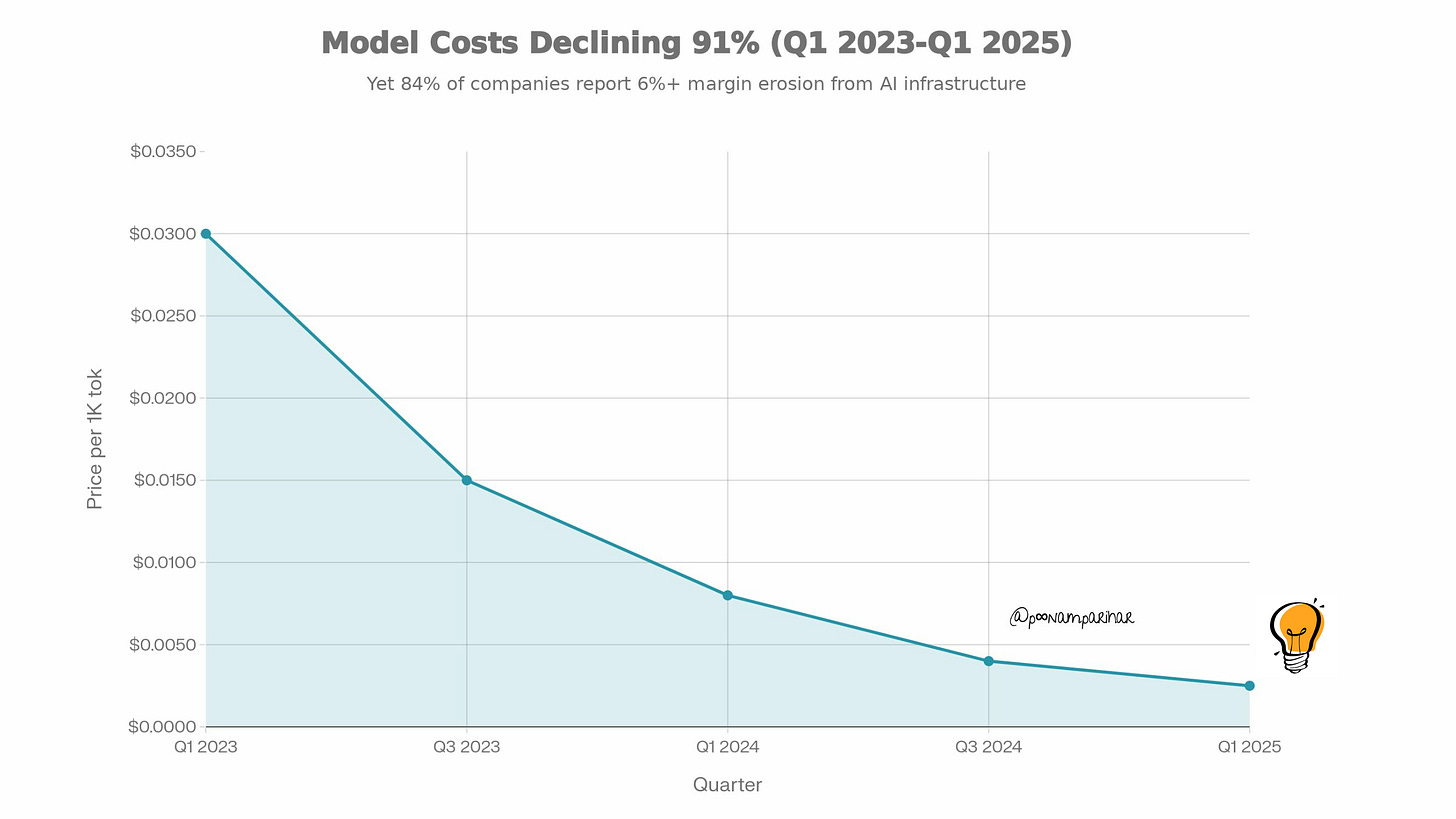

GPT-4 token pricing dropped 91% from $0.03 to $0.0025 per 1K tokens, yet 84% of companies saw their margins erode.

In 2025, something remarkable happened: model costs dropped 91%, but companies still bled cash. GPT-4's input token pricing fell from $0.03 per 1,000 tokens in Q1 2023 to just $0.0025 in Q1 2025 » a staggering 91.7% reduction. Meanwhile, 84% of enterprises reported gross margin erosion of 6% or more due to AI infrastructure costs. This created a "Financial Reality Gap" that swallowed even the most hyped unicorns.
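If you want to check the 91.7% figure yourself, here is the arithmetic as a tiny Python sketch. It uses only the two prices quoted above; the helper name `pct_reduction` is our own, not from any library.

```python
def pct_reduction(old: float, new: float) -> float:
    """Percentage drop from an old price to a new one."""
    return (old - new) / old * 100

# Prices quoted in the text, in USD per 1,000 input tokens
q1_2023 = 0.03
q1_2025 = 0.0025

drop = pct_reduction(q1_2023, q1_2025)
print(f"Reduction: {drop:.1f}%")  # Reduction: 91.7%

# The same two prices expressed per 1M tokens
print(f"Per 1M tokens: ${q1_2023 * 1000:.2f} -> ${q1_2025 * 1000:.2f}")
```

The price drop is real. The rest of this section is about why it barely moved total spend.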

So if the models got cheaper, where did all the money go, and where is it still going?

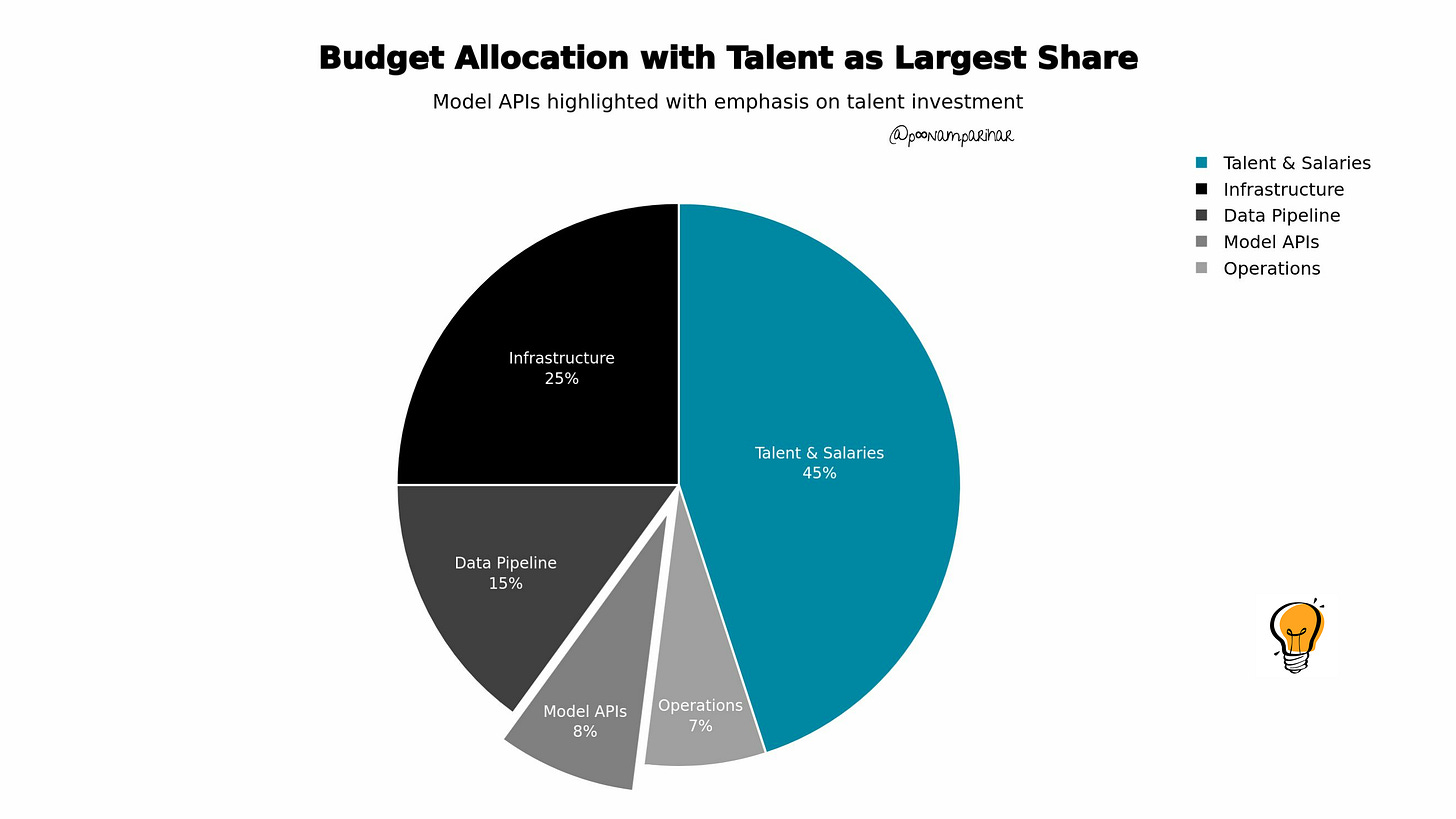

Where Your $1M AI Budget Actually Goes

Talent (45% = $450K): Senior AI engineers cost $250K-$400K/year.

Infrastructure (25% = $250K): GPUs are the new rent. Running 50 H100s for a month = $126,000.

Data Pipeline (15% = $150K): Fixing the “data swamp” so AI actually works.

Model APIs (8% = $80K): The part everyone thinks is expensive, but it’s the smallest line item.

Monitoring (7% = $70K): Drift detection and logging often consume more compute than the models.
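Here is the same $1M split as a small Python sketch, plus a back-of-the-envelope check on the GPU line. The helper names (`allocate`, `implied_gpu_hourly_rate`) are hypothetical. The 730 hours per month (24 * 365 / 12) is our assumption about how the $126,000 figure was derived, not something the source states.

```python
# Budget shares from the breakdown above
BUDGET_SHARES = {
    "Talent": 0.45,
    "Infrastructure": 0.25,
    "Data Pipeline": 0.15,
    "Model APIs": 0.08,
    "Monitoring": 0.07,
}

def allocate(total: float, shares: dict[str, float]) -> dict[str, float]:
    """Split a total budget by fractional shares that must sum to 100%."""
    assert abs(sum(shares.values()) - 1.0) < 1e-9, "shares must sum to 100%"
    return {item: total * share for item, share in shares.items()}

def implied_gpu_hourly_rate(monthly_cost: float, gpus: int,
                            hours_per_month: float = 730) -> float:
    """Back out the per-GPU hourly price implied by a monthly cluster bill.
    Assumes every GPU runs around the clock (730 h is an average month)."""
    return monthly_cost / (gpus * hours_per_month)

for item, amount in allocate(1_000_000, BUDGET_SHARES).items():
    print(f"{item:<15} ${amount:>10,.0f}")

rate = implied_gpu_hourly_rate(126_000, 50)
print(f"Implied H100 rate: ${rate:.2f}/GPU-hour")
```

At the $250K-$400K salary band quoted above, the $450K talent line covers roughly one to two senior AI engineers. Under the 730-hour assumption, the $126,000 cluster bill implies about $3.45 per H100-hour.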

The CEO FOMO Tax

The 30% cost overrun that happens when boards demand “an AI strategy” after seeing a cool demo at a conference, without understanding unit economics.

This tax shows up as:

Hiring ML engineers before you have clean data

Buying GPU credits before you know what you’re building

Launching pilots without ROI metrics

Building custom models when an API call would work

The 30% Underestimation Problem

IDC predicts that Global 1,000 companies will underestimate their AI infrastructure costs by 30% through 2027. When you budget $1M for an AI project, plan for a real cost closer to $1.3M, and note that 80% of enterprises miss their forecasts by more than 25%. This is why 84% of companies report gross margin erosion of 6% or higher tied to AI workloads. The token line got cheaper, but the system stayed expensive, and that’s the part everyone underestimates.
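Here is a minimal sketch of what planning for the 30% gap looks like. It assumes the underestimation applies as a flat multiplier on the whole budget, which is a simplification because real overruns are lumpier. `expected_cost` and `misses_forecast` are hypothetical helpers.

```python
def expected_cost(budget: float, underestimate: float = 0.30) -> float:
    """Budget scaled up by a flat underestimation rate (30% per the IDC figure)."""
    return budget * (1 + underestimate)

def misses_forecast(budget: float, actual: float, tolerance: float = 0.25) -> bool:
    """True if actual spend overshoots the budget by more than `tolerance`."""
    return (actual - budget) / budget > tolerance

budget = 1_000_000
actual = expected_cost(budget)
print(f"Budgeted ${budget:,.0f}, plan for ${actual:,.0f}")
print("Misses forecast by more than 25%:", misses_forecast(budget, actual))
```

A 30% overrun clears the 25% "missed forecast" bar on its own, before any FOMO Tax is added on top.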

The Capital Trap and Why You Can’t Afford to Guess

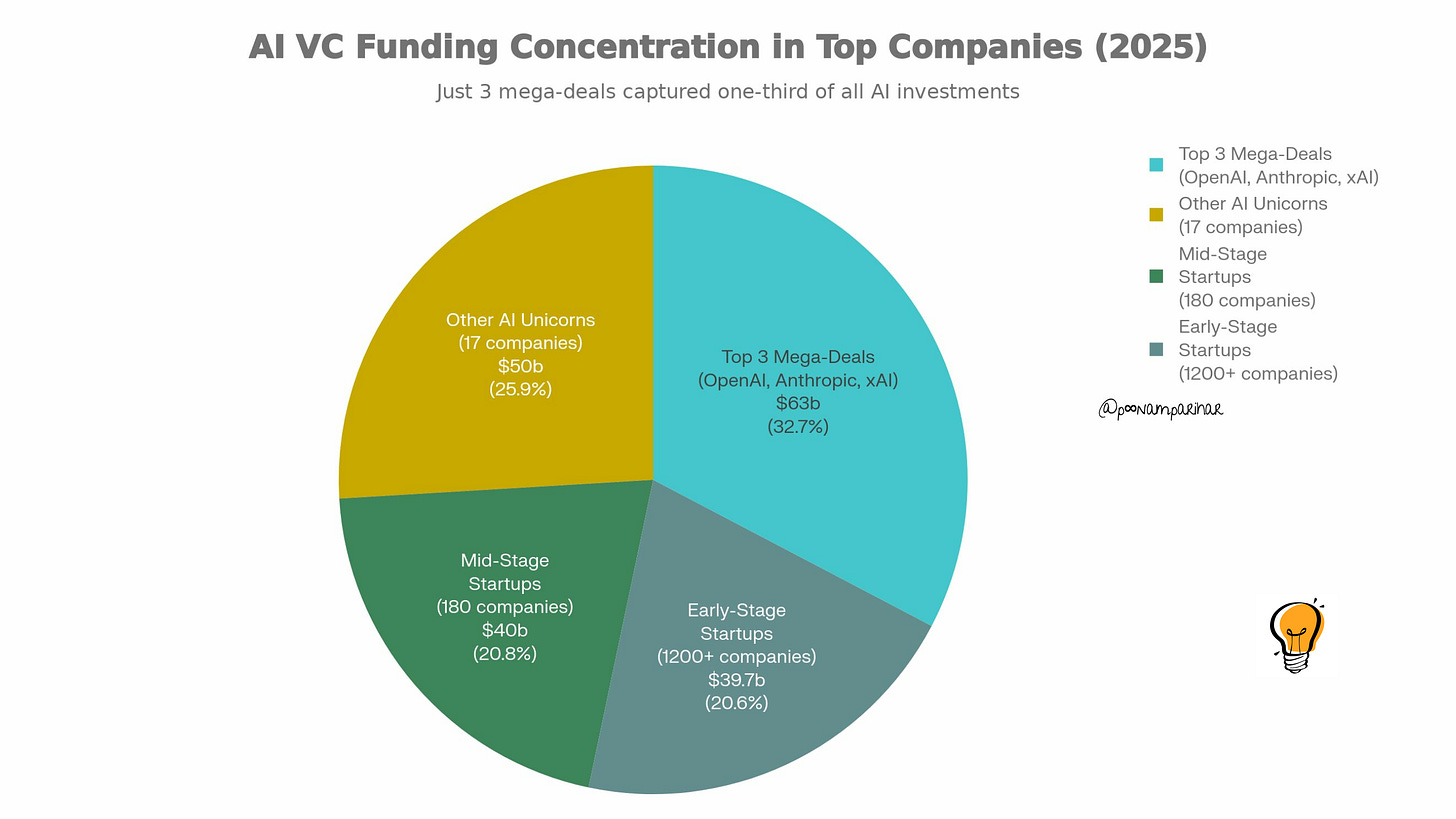

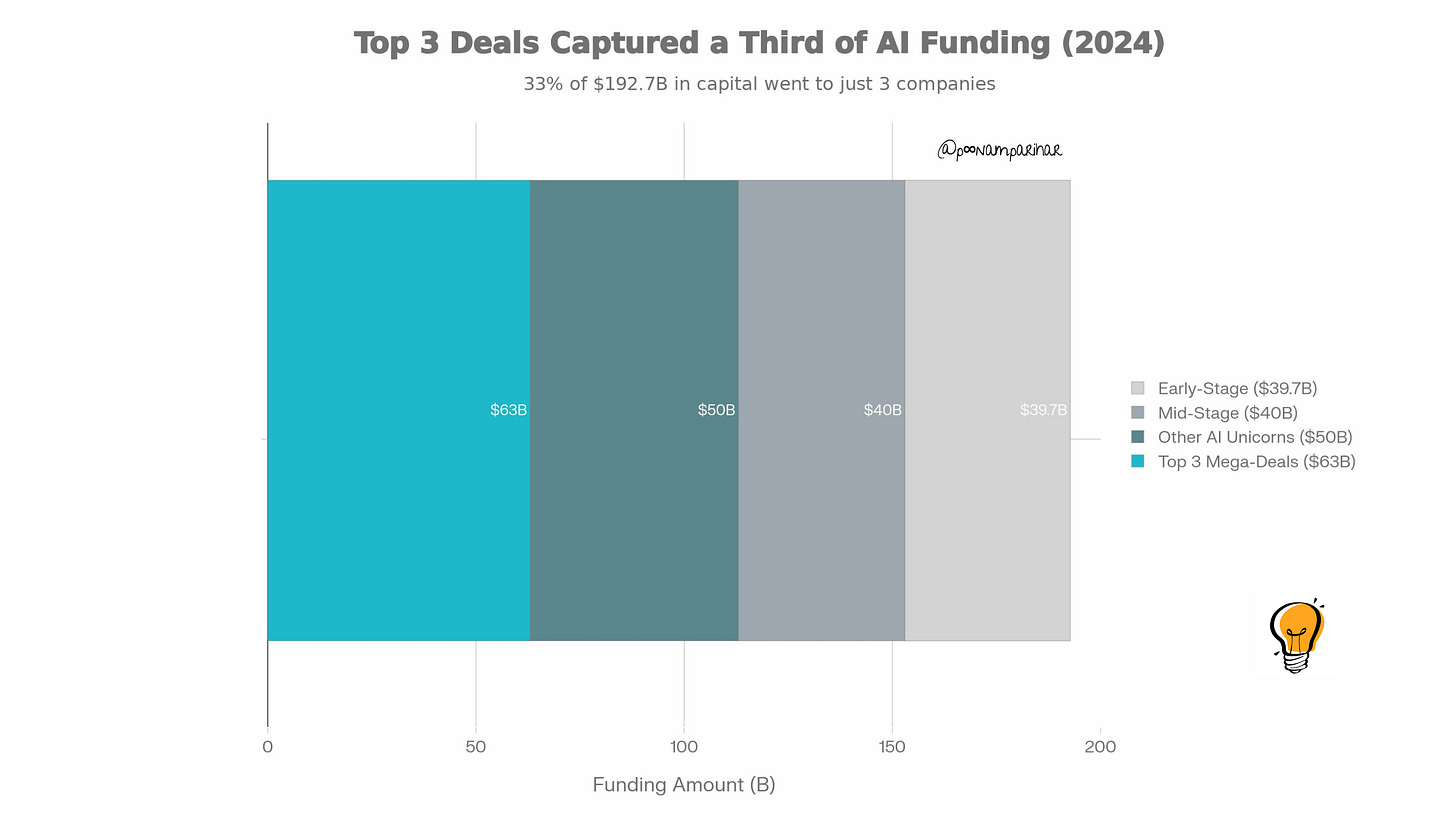

In 2025, 33% of all VC money went to just three companies: OpenAI, Anthropic, and xAI.

The Funding Pyramid:

Top 3 Mega-Deals: $63 Billion (OpenAI, Anthropic, xAI)

Other AI Unicorns: $50 Billion

The Rest (1,200+ companies): $39.7 Billion
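To make the concentration concrete, here is the pyramid as a quick Python calculation. Within this AI-only total of $152.7B, the top three take about 41%. The 33% figure above is presumably measured against all VC funding rather than this subtotal. The per-company figure treats "1,200+" as exactly 1,200, so it is an upper bound on the average.

```python
# Funding pyramid from the list above, in billions of USD
pyramid = {
    "Top 3 mega-deals": 63.0,
    "Other AI unicorns": 50.0,
    "The rest (1,200+ companies)": 39.7,
}

total = sum(pyramid.values())
print(f"Total across the pyramid: ${total:.1f}B")
for tier, amount in pyramid.items():
    print(f"{tier:<28} {amount / total:6.1%}")

# Average per company in the bottom tier, treating "1,200+" as 1,200 (an upper bound)
print(f"The rest, per company: at most ~${39.7e9 / 1200 / 1e6:.0f}M")
```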

This extreme concentration means VCs now demand $1M+ ARR for Series A (up from $500K). You no longer have the luxury of a 6-month “experiment.” In 2026, you either find ROI in 6 weeks or you run out of runway before the next fundraise even starts.
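Why 6 weeks instead of 6 months? Here is a rough runway check in Python. The cash balance, base burn, and 6-month fundraise are hypothetical numbers chosen for illustration. The only figure taken from this piece is that AI startups burned capital 2x faster than earlier SaaS generations.

```python
def runway_months(cash: float, monthly_burn: float) -> float:
    """Months until the bank balance hits zero at a constant burn."""
    return cash / monthly_burn

def survives_experiment(cash: float, monthly_burn: float,
                        experiment_months: float, fundraise_months: float) -> bool:
    """True if cash outlasts the experiment plus the fundraise that follows it."""
    return runway_months(cash, monthly_burn) >= experiment_months + fundraise_months

cash = 3_000_000         # hypothetical seed balance
base_burn = 200_000      # hypothetical monthly burn for a classic SaaS team
ai_burn = 2 * base_burn  # "spent capital 2x faster"
fundraise = 6            # hypothetical months needed to close the next round

for label, months in [("6-month experiment", 6), ("6-week experiment", 1.5)]:
    ok = survives_experiment(cash, ai_burn, months, fundraise)
    status = "OK" if ok else "out of cash"
    print(f"{label}: runway {runway_months(cash, ai_burn):.1f} mo -> {status}")
```

With these made-up inputs, doubling the burn cuts runway from 15 to 7.5 months. That leaves room for a 6-week experiment plus a fundraise, but not a 6-month one.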

3. The “High Performer” Secret

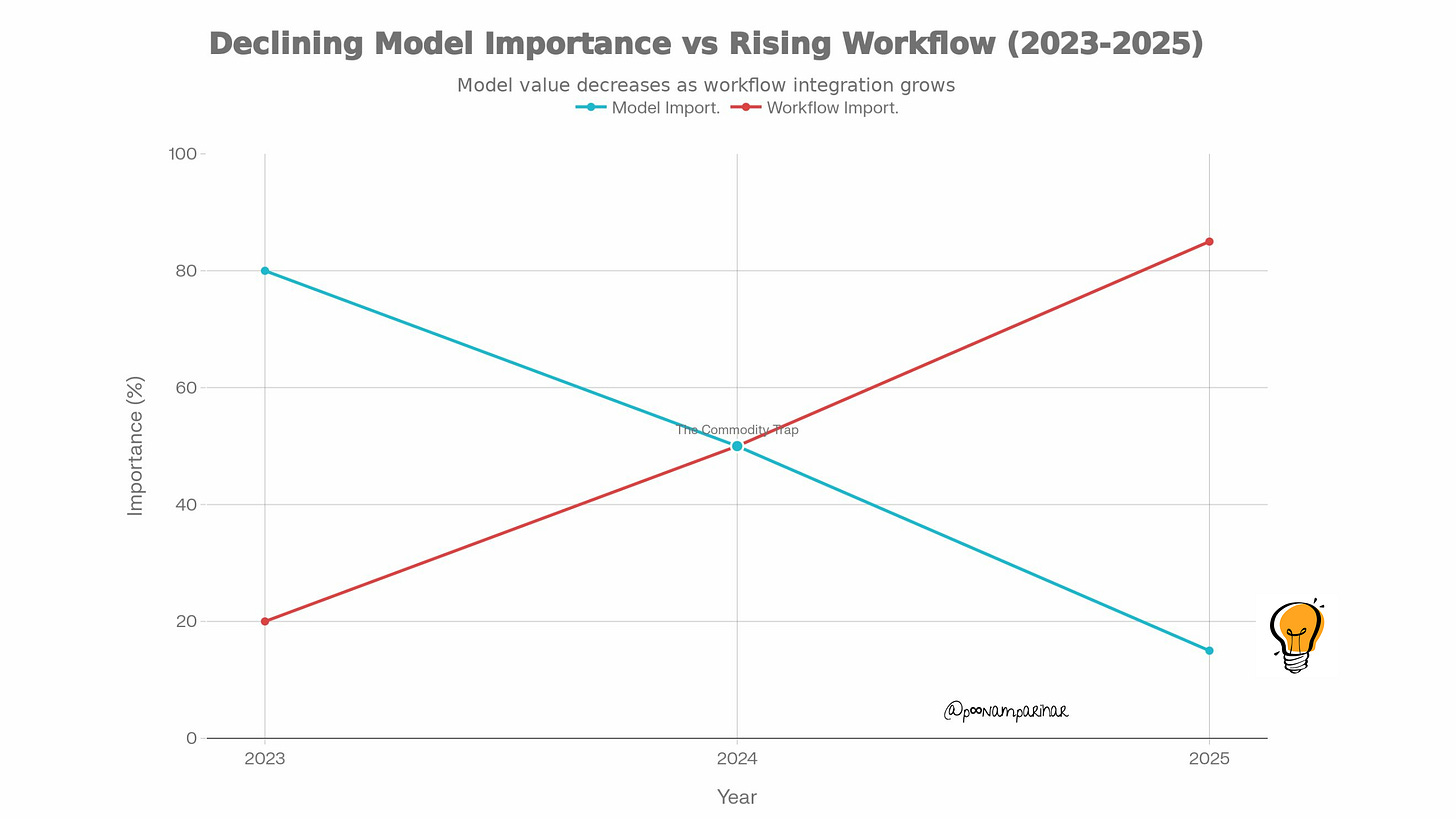

The most dangerous delusion in 2026 is believing that a ‘better model’ equals a better business. We are entering the era of Intelligence Parity, where the gap between the best proprietary model and the cheapest open-source alternative is narrowing every month.

If you compete on the Model Layer, you are fighting a war of attrition against giants with infinite GPUs. But if you compete on the Workflow Layer, you are building a ‘Nervous System’ for your customer.

A better model is just a brain transplant: it’s relatively easy to swap. A better workflow is a transplant of the spine and of the team’s habits. That is where true defensibility lives. High performers in 2025 realized that a ‘good enough’ model embedded in a critical daily habit beats a ‘perfect’ model living in a separate browser tab, every single time.

3 Reasons the “Best Model” Will Kill You

The better the underlying model becomes, the less valuable your “AI layer” becomes. If your value is “high accuracy,” you are one OpenAI update away from obsolescence.

This is why the 5% who won didn’t obsess over “the best model”; they obsessed over the most boring integration.

1. The “Better-is-Worse” Switching Cost: Custom-tuned models lock you into old infrastructure. When a new model like GPT-5 ships, competitors switch in a weekend. You spend six months re-tuning while your margins evaporate.

2. The “Intelligence Floor”: Expert-level intelligence is now an API call for everyone. “Our AI is smarter” is no longer a moat, it’s table stakes.

3. The “Model Burn Tax”: While the 5% used “good enough” models to power Workflow Anchors, the 95% spent their Series A on Model R&D.

The Spiky Take for 2026

Stop hiring ML Researchers. Start hiring Workflow Architects.

If you can replace your underlying AI model in 48 hours without the customer noticing, you have a Platform. If replacing the model breaks your entire business, you have a Technical Debt Trap.
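What does "swap the model in 48 hours" look like in code? One common approach is an adapter interface. The workflow talks to a narrow `ChatModel` protocol, and each vendor sits behind its own adapter. This is a minimal sketch, and every name in it is hypothetical. The two fake vendors just transform strings so the example runs offline. Real adapters would wrap each vendor's SDK.

```python
from dataclasses import dataclass
from typing import Protocol

class ChatModel(Protocol):
    """Anything that turns a prompt into text; vendors plug in behind this."""
    def complete(self, prompt: str) -> str: ...

@dataclass
class FakeVendorA:
    """Hypothetical stand-in for one provider (a real adapter would call its SDK)."""
    name: str = "vendor-a"
    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"

@dataclass
class FakeVendorB:
    """Hypothetical stand-in for a second provider."""
    name: str = "vendor-b"
    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt.upper()}"

MODEL_REGISTRY: dict[str, ChatModel] = {"a": FakeVendorA(), "b": FakeVendorB()}

def summarize_ticket(ticket: str, model: ChatModel) -> str:
    """The workflow step owns the prompt and business rules, not the model."""
    prompt = f"Summarize for the on-call engineer: {ticket}"
    return model.complete(prompt)

# Swapping vendors is a one-key config change, not a rewrite
print(summarize_ticket("DB latency spike", MODEL_REGISTRY["a"]))
print(summarize_ticket("DB latency spike", MODEL_REGISTRY["b"]))
```

The design choice is the point. The prompt, the ticket format, and the place in the on-call workflow all live in `summarize_ticket`, so that is where the defensibility sits. The model behind it is a replaceable part.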

Examples: What Do “Workflow Anchors” Actually Look Like?

Grammarly: Started as a “spell-check wrapper” but embedded into Gmail, Google Docs, Slack, and every text input on the web. Removing it breaks users’ daily writing flow.

Cursor: Not just “ChatGPT for code.” It learns your specific codebase, your team’s coding patterns, and your IDE shortcuts. Switching back to VS Code + ChatGPT feels like downgrading.

Notion AI: Embedded in the documents where teams already live. It's not a separate "AI tool"; it's invisible infrastructure in your existing workflow.