What 2025 Taught Me Not to Do in 2026!

Burned capital, failed pilots: expensive lessons for founders from 2025.

In 2025, AI captured 53% of all global venture capital, sucking in $259.7 billion while non-AI startups fought over table scraps. And in the race to adopt AI, almost everyone tried: 95% of companies that launched AI pilots failed to see any meaningful return on investment. They didn’t just “struggle”; they failed completely. The numbers this year have been brutal.

I remember calling 2025 a wildebeest stampede during MWC. This was the year AI’s promises collided head-on with operational reality, and that isn’t really AI’s fault. It wasn’t just another tech cycle, even if some of us thought it was and learned the lessons the hard way. Before we course-correct for 2026, we need to clean up the wreckage that is everywhere.

Now that I’ve covered the headlines and the bigger picture (read my last post 👆), I want to focus on some concrete numbers that directly affect you and me, the founders and leaders. If you’re planning to build, scale, or invest in AI in 2026, this isn’t optional reading. These are expensive lessons written in hundreds of millions of dollars of burned capital, thousands of layoffs, and the corpses of once-promising unicorns. So let me walk you through what went wrong and, more importantly, what you should never repeat.

Lesson 1: The AI Wrapper Death March

An “AI wrapper” is a startup that builds a simple interface on top of someone else’s AI model (like OpenAI’s GPT or Anthropic’s Claude). These companies don’t train their own models or own proprietary data; they just make someone else’s model prettier or easier to use for a specific task. The problem? When the underlying AI companies improve their own interfaces or add new features, wrappers become obsolete overnight.

So what happened? Here are some real examples of the carnage.

Builder.ai

Builder.ai was the poster child for this disaster. Once valued at $1.5 billion and backed by Microsoft, Qatar Investment Authority, and top-tier VCs, the company promised to make app development “as easy as ordering a pizza” using an AI assistant named “Natasha”.

The reality, however, was far messier. Investigations revealed that Builder.ai had hired 700 engineers in India to manually handle work that was supposedly being done by AI. The company’s AI capabilities were vastly overstated, revenues were allegedly inflated through fake transactions, and by mid-2025, Builder.ai had filed for bankruptcy across multiple countries. The founder stepped down, creditors seized $37 million from company accounts, and more than 1,000 employees lost their jobs.

Other casualties of 2025 include:

Humane: Raised $241M for an AI wearable “Pin” that reviewers called “bad at almost everything it does.” Shut down in February 2025, selling assets to HP for $116M, less than half of what investors put in.

Noogata: Enterprise AI analytics with $28M in funding and marquee customers like PepsiCo. Couldn’t scale beyond pilots, missed milestones, and shut down.

CodeParrot (YC W23): AI tool to convert designs into code. MRR peaked at $1,500; the team pivoted repeatedly, then shut down when the founders couldn’t find product-market fit.

Tune AI: Built tools for fine-tuning LLMs, but cloud providers built similar features for free. Infrastructure costs stayed high while margins disappeared.

Locale.ai: Geospatial AI with paying customers, but founder burnout led to a principled shutdown.

Why This Matters for 2026?

AI startups now fall into two categories:

infrastructure players (who build the models and own the tech stack) and

application-layer wrappers (who build on top).

VCs know wrappers are risky, so wrappers are going to be harder to fund going forward. Infrastructure companies fail too, but less frequently, and when they do fail, they’ve raised roughly twice the capital of wrapper companies.

The Mistake here: Building shallow applications on AI you don’t control, with no proprietary data advantage or deep workflow integration.

The Lesson: If you’re building on someone else’s models in 2026, you need one of three defensibility moats:

have proprietary data that makes your AI better,

do such deep integration into customer workflows that ripping you out is painful, or

network effects that get stronger as more people use your product.

Without at least one, you’re building a time bomb. PS: that’s what I think every time I hear about Lovable 🧐. However specialized their AI agent is, what happens if "vibe coding" gets commoditized?

Lesson 2: The Pilot Purgatory Problem - where 90% of AI initiatives get stuck

What happened: Companies launched hundreds of AI “experiments” that looked great in demos but died the moment they tried to scale them across the real organization.

The Numbers -

95% of gen AI pilots failed to deliver measurable returns, according to MIT research drawing on 150 leader interviews, 350 employee surveys, and an analysis of 300 public AI deployments

80% of all AI projects fail, nearly double the failure rate of non-AI IT projects (RAND)

42% of companies scrapped most of their AI initiatives in 2025, sharply up from 17% the year before (S&P Global)

Despite 88% of companies now using AI in at least one function, only 39% report any EBIT impact, and most of those say it’s less than 5%

Why Pilots Fail?

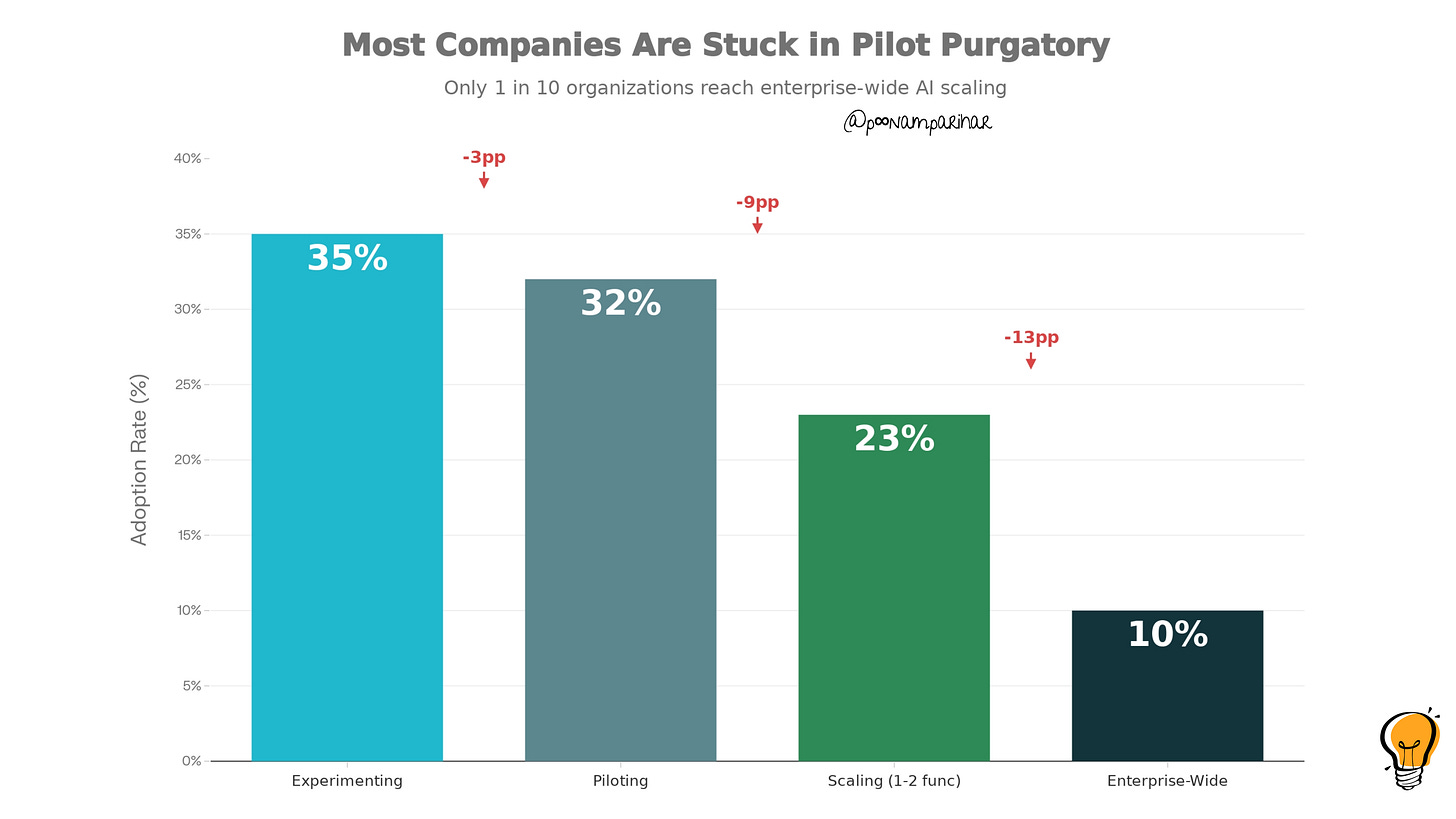

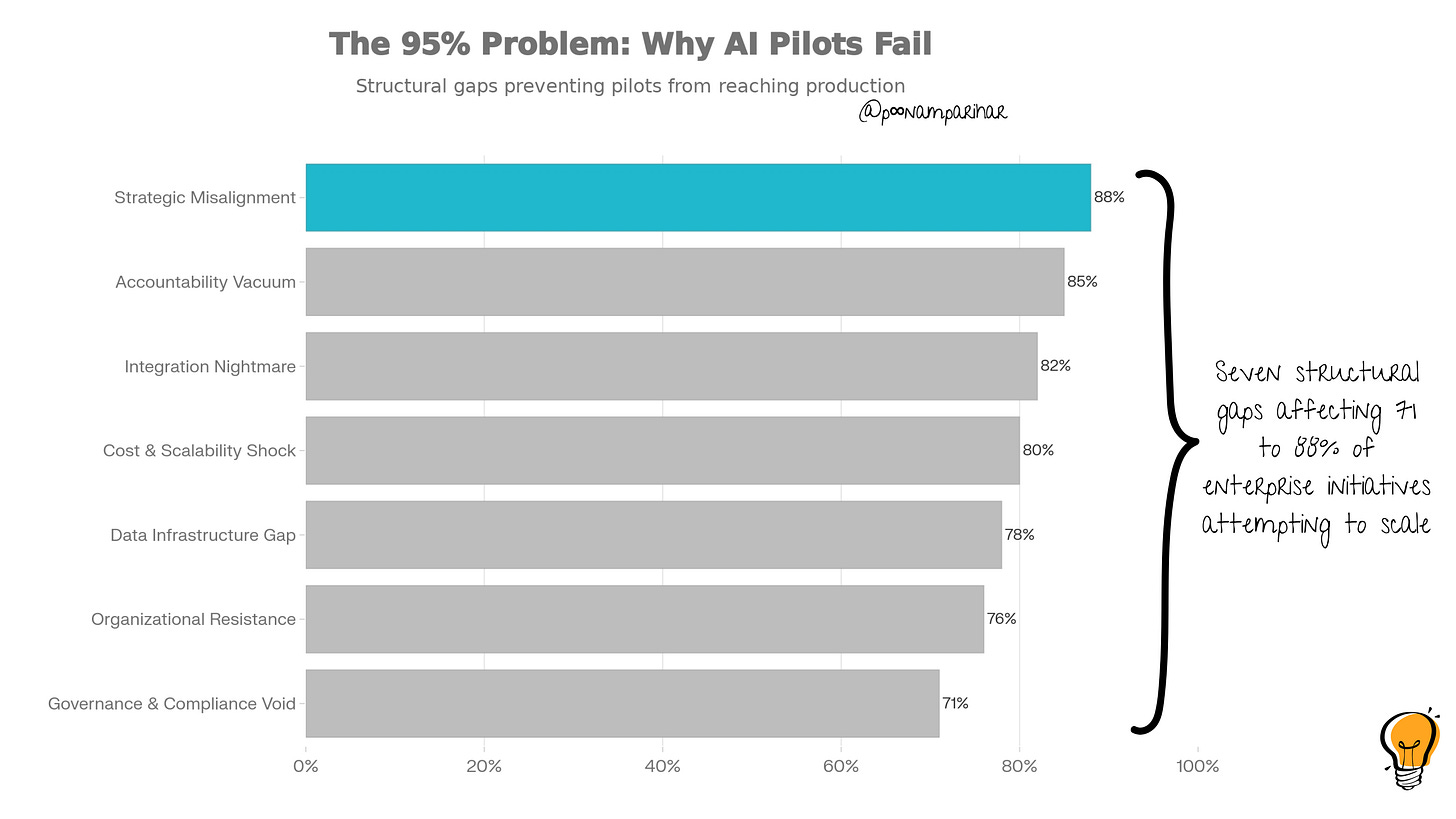

The AI adoption pipeline shows dramatic attrition: 67% of companies remain in experimentation or pilot phases, while only 10% achieve enterprise-wide scaling. A LinkedIn analysis by transformation consultants identified seven structural blockers that kill AI pilots between demo and deployment. Let’s look at each of them in detail!

1. Accountability Vacuum: Nobody Owns ROI (88%)

No single person owns the P&L outcome, only the demos

Innovation teams build it, IT maintains it, business uses it, but nobody owns ROI

When leadership changes or budgets tighten, funding evaporates because there’s no champion

2. Data Infrastructure Gap (78%)

Data pipelines break constantly, causing model failures and destroying trust

No single source of truth: sales, finance, and operations all have different definitions for the same metrics

Most crucially, legacy systems can’t support real-time AI: they were built for overnight batch processing, not instant responses.

3. Integration Nightmare (82%)

APIs are brittle, undocumented, rate-limited, or tied to ancient monolithic systems

No clear handoff between proof-of-concept teams (who built the pilot) and production IT (who have to run it 24/7)

Retraining and model maintenance ownership is unclear, so model performance silently decays

4. Governance & Compliance Void (71%)

No model versioning, drift monitoring, or audit trails

Compliance teams block deployment because required controls aren’t in place

“Shadow AI” proliferates: employees use ChatGPT and other external tools without oversight, creating security and IP risks

5. Organizational Resistance (76%)

Frontline workers don’t trust AI-driven decisions, so they ignore or override them

Fear of job loss drives quiet sabotage

“Change management” consists of posters and workshops instead of real workflow redesign and incentive changes

6. Cost & Scalability Shock (80%)

What cost $5,000/month in a pilot suddenly costs $50,000/month in production

GPU, inference, and storage costs blow past projections

What worked for 100 users breaks completely when rolled out to 10,000 users across different regions

7. Strategic Misalignment (88%)

Executives demand results in weeks, but organizational reality requires years

Every department wants AI, but there’s no enterprise-level prioritization

Use cases chosen for “coolness factor” instead of actual business impact

CFOs cut funding when teams can’t explain where money is made or saved

Generic Tools Can’t Learn

MIT researchers discovered why ChatGPT works brilliantly for individuals but fails in enterprises: it doesn’t learn from your workflows. When you use ChatGPT personally, ‘you tolerate its quirks because you’re the one adapting. You rewrite prompts, ignore bad answers, and work around its limitations.’

But in a company with 5,000 employees, you can’t have everyone adapting differently. The AI needs to adapt to your specific workflows, and generic chatbots can’t do that.

The successful 5% build custom systems that:

Remember context from previous interactions

Learn from corrections and feedback

Integrate into existing tools (not separate dashboards)

Flag uncertainty instead of confidently hallucinating wrong answers

The False Positive Problem

Whether it’s FOMO or something else, companies are desperate to “do AI”. There is so much demand from enterprises to try the latest and greatest AI that it sometimes creates false positives of product-market fit: you can book a lot of revenue without delivering true ROI. In other words, you end up with impressive revenue numbers that hide the fact that customers will never renew or expand.

So when the pilot ends, so does the money.

The Mistake: Confusing pilot enthusiasm with product-market fit, and mistaking revenue without ROI for sustainable business.

The Lesson: If you can’t show that the pilot saved money, made money, or unlocked new revenue, it’s a science experiment, not a product.
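To make that test concrete, here is a minimal Python sketch. The `PilotResult` fields and the `pilot_roi` / `classify_pilot` functions are hypothetical names for illustration, and the sketch assumes that “showing” ROI means the measured value (money saved, money made, and new revenue unlocked, all over the same period) exceeds what the pilot cost.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Hypothetical pilot summary; all amounts in the same currency and period."""
    cost_saved: float    # money the pilot measurably saved
    revenue_made: float  # incremental revenue from the existing business
    new_revenue: float   # revenue from something the pilot newly unlocked
    pilot_cost: float    # total spend on the pilot (licenses, compute, people)


def pilot_roi(p: PilotResult) -> float:
    """Return ROI as (measured value - cost) / cost."""
    if p.pilot_cost <= 0:
        raise ValueError("pilot_cost must be positive")
    value = p.cost_saved + p.revenue_made + p.new_revenue
    return (value - p.pilot_cost) / p.pilot_cost


def classify_pilot(p: PilotResult) -> str:
    """A pilot counts as a product only if it shows a positive return."""
    return "product" if pilot_roi(p) > 0 else "science experiment"


# A pilot that impressed in demos but moved no money:
print(classify_pilot(PilotResult(0, 0, 0, 50_000)))           # science experiment
# A pilot that saved and earned more than it cost:
print(classify_pilot(PilotResult(80_000, 10_000, 0, 50_000)))  # product
```

The strict "greater than zero" threshold is a deliberate simplification; in practice you might demand a margin well above breakeven before calling something a product.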

Lesson 3: Follow the Money 💰

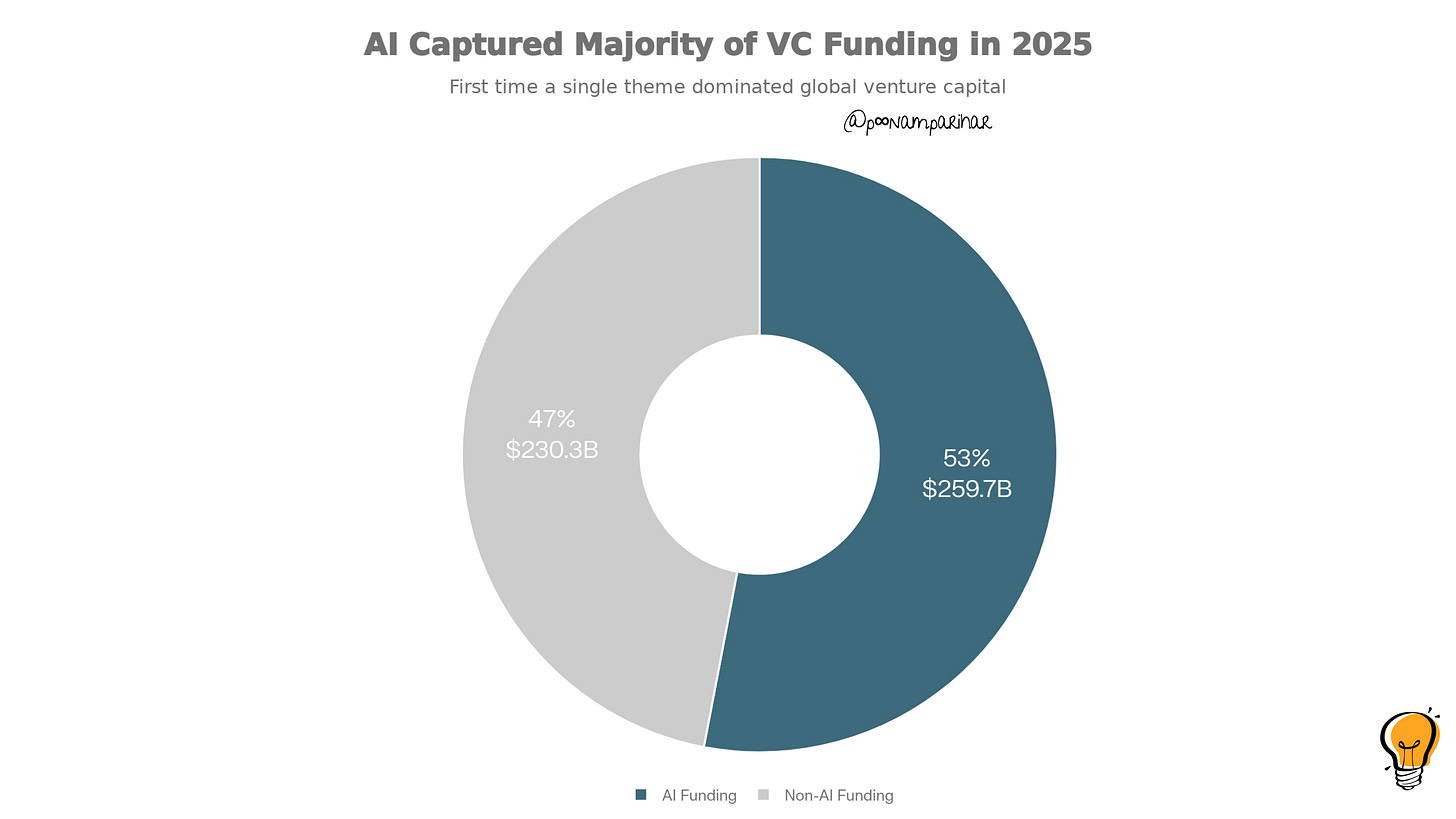

What happened: in 2025, AI captured the majority of all VC money for the first time in history. That sounds impressive, but where exactly did it go? To a handful of mega-companies, while everyone else starved.

$259.7 billion of the $490 billion total (about 53%) went to AI

This is the first time ever a single technology theme captured the majority of venture dollars.

In the US, AI’s share hit 62.7% in Q3 2025.

The top three AI deals (Anthropic $13B, xAI $10B, Mistral AI $1.5B) represented more capital than thousands of smaller startups combined

While total deal count dropped 12% year-over-year, average late-stage deal size tripled to $1.55 billion, up from $481 million.

Early-stage and seed rounds saw no growth.
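As a quick sanity check on the headline ratios, this short Python snippet recomputes them from the figures quoted above (in USD billions). It uses nothing beyond those numbers.

```python
# Figures as quoted in this post, in USD billions.
ai_vc_2025 = 259.7
total_vc_2025 = 490.0
avg_late_stage_now = 1.55
avg_late_stage_before = 0.481  # $481 million

ai_share = ai_vc_2025 / total_vc_2025
late_stage_multiple = avg_late_stage_now / avg_late_stage_before

print(f"AI share of all VC: {ai_share:.1%}")                        # AI share of all VC: 53.0%
print(f"Late-stage deal size multiple: {late_stage_multiple:.2f}x")  # Late-stage deal size multiple: 3.22x
```

So the 53% headline checks out, and “tripled” is, if anything, slightly conservative (about 3.2x).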

What This Means?

Beyond the closed investment loop we discussed in the last couple of posts, VCs are chasing “perceived safety” by betting bigger on established players (OpenAI, Anthropic, xAI) rather than diversifying across hundreds of risky early-stage bets.

One general partner at a mid-sized VC said bluntly: “In practical terms, there’s simply no compelling new direction left. I find it surprising that many of my peers are still attempting to extract more value from large language models; I’ve moved past that”.

The Infrastructure vs. Application Divide

As infrastructure becomes more and more of a priority, the shift from “let’s build another chatbot” to “let’s build the infrastructure that chatbots run on” helps explain why 62.7% of US VC dollars now go to AI, with most of that still going to late-stage infrastructure plays. So if you’re a new startup, you need:

Proprietary data moats: You have data nobody else has

Deep workflow integration: Ripping you out would break critical business processes

Technical defensibility: You’ve built LLMOps, RAG 2.0, or agentic workflows that are hard to replicate

The Mistake: Assuming you can raise venture capital for an application-layer AI startup the way you could in 2023-2024.

The Lesson: If you’re raising in 2026, you need to prove deep technical moats or prove you’re already generating strong unit economics.

The era of “we’ll figure out monetization later” is over for AI application companies. VCs are becoming “discerning”, their word for terrified.

Lesson 4: When the “AI” Was Humans All Along

What happened: well, Builder.ai happened, and a few others too.

Amazon’s “Just Walk Out” Technology: Amazon installed this system in physical stores, claiming AI used sensors to identify items shoppers picked up and automatically billed them. I actually tried it, and I am still waiting for my bill to show up, so the system didn’t really work for me. It reportedly required around 1,000 workers to manually check almost three-quarters of transactions. Amazon eventually pulled the technology from its Amazon Fresh grocery stores.

Ryanair’s Customer Service Bot: The airline claimed its chatbot was powered by AI, but it actually used simple keyword-matching technology from the 1990s to respond to customer queries. Nothing surprising here.

The Regulatory Crackdown

A few regulatory cases that I could find:

The SEC settled its first AI-washing cases against investment firms Delphia and Global Predictions for false claims about AI usage

The FTC launched “Operation AI Comply” to target companies falsely advertising AI-powered products

The EU AI Act began enforcing stricter transparency requirements

Gartner analysts evaluated “thousands” of so-called agentic AI products and found only 130 genuinely met the criteria for agentic capabilities

Some Real-World Consequences

Air Canada was taken before a Canadian tribunal after its chatbot gave a customer misleading information on bereavement fares, and the tribunal ruled the company was liable for its AI’s mistakes.

This set an important marker: companies are responsible for what their AI says, even when it hallucinates.

Why This Happens?

The gap in public knowledge about what AI can actually do creates opportunity for deception. Most people can’t distinguish between:

True machine learning

Simple automation rebranded as “AI-powered”

Keyword-matching systems from the 1990s labeled as “intelligent”

Human workers pretending to be AI (the Wizard of Oz approach)

As one analyst put it: “AI washing exploits the gap in public knowledge, making products and services seem more advanced than they are,” for example smart home appliances and financial planning tools.

The Mistake: Overstating AI capabilities to raise funding or win customers, assuming nobody would look under the hood.

The Lesson:

Build trust through transparency, not hype.

Show your model architecture.

Explain your training data.

Demonstrate live.

Lesson 5: What the High Performers Did Differently

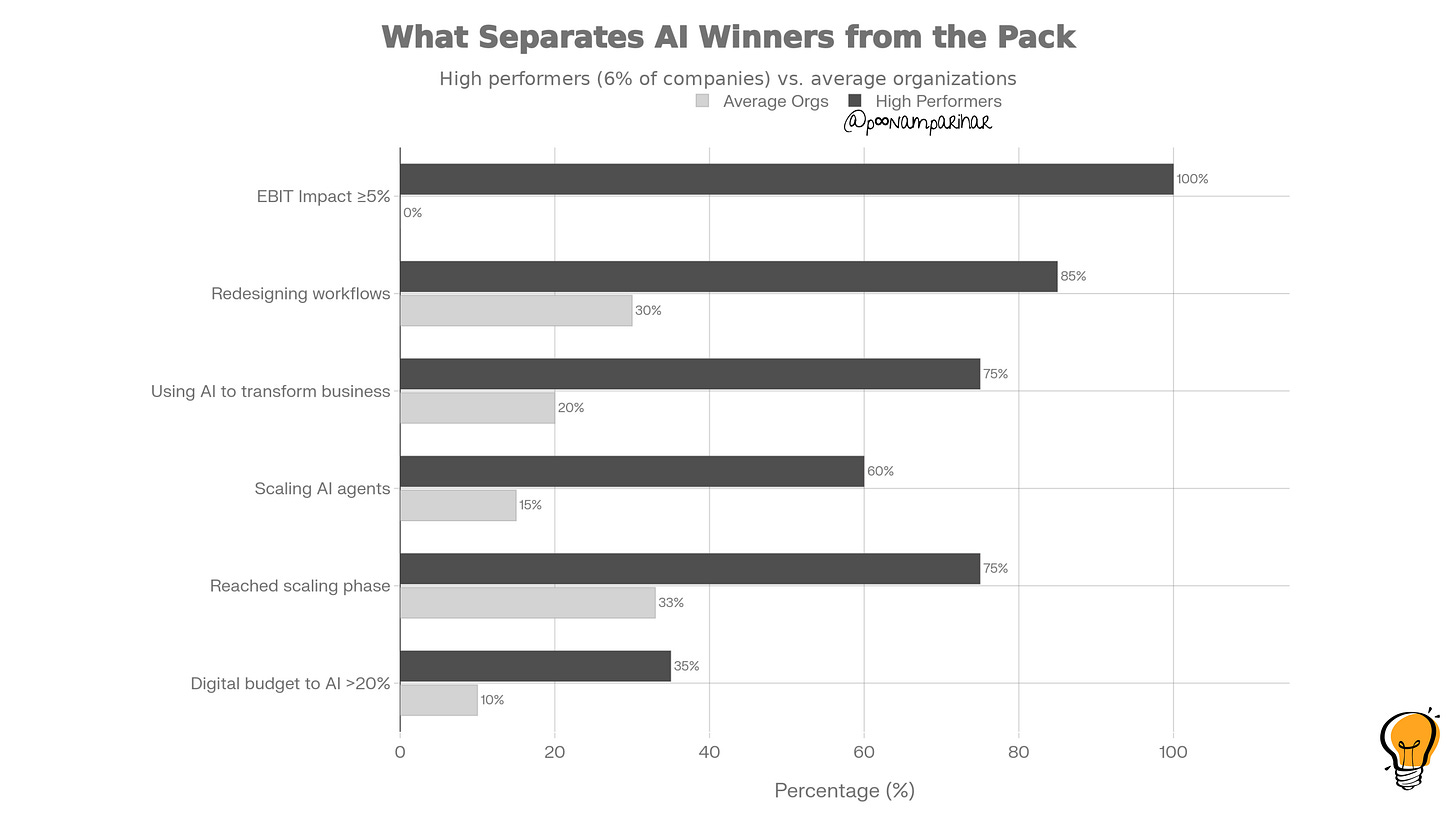

What happened: only about 6% of respondents in McKinsey’s survey achieved breakthrough results. Yes, just 6%. These AI high performers dramatically outpaced average companies across all key metrics, with the largest gap in measurable EBIT impact.

Who Are the High Performers?

Per McKinsey, AI high performers are organizations achieving:

At least 5% EBIT improvement directly attributable to AI

“Significant” qualitative value (faster innovation, better decisions, customer satisfaction)

The chart above shows that these high performers aren’t just slightly better; they’re 3-10x better across every dimension:

What did they do differently?

Strategic Ambition:

75% of high performers aim to use AI to transform their entire business, vs. 20% of average companies

100% have measurable EBIT impact, vs. 0% of average organizations

Execution Discipline:

85% redesign workflows around AI, vs. 30% of others

60% are scaling AI agents across functions, vs. 15% of others

75% have reached the scaling phase, vs. 33% of average companies

Investment Commitment:

35% allocate more than 20% of their digital budgets to AI, vs. 10% of others

High performers invest in technology and talent, not just software licenses.

The Five Things High Performers Do

1. They Start with Business Outcomes, Not Technology

2. They Fundamentally Redesign Workflows

3. They Build Cross-Functional Execution Pods

4. They Run Purposeful Pilots with Ruthless Discipline

5. They Invest Heavily in Governance and Change Management

The Leadership Difference

Leaders at high performers:

Role-model AI use by using the tools themselves

Make AI adoption a formal part of performance reviews

Attend weekly standup meetings for critical AI projects

Publicly celebrate teams that redesign workflows successfully

The Lesson:

Copy the high performer playbook exactly.

Don’t innovate on execution. Use their exact frameworks: single-metric pilots, cross-functional pods, ruthless kill criteria, and workflow redesign.

The problem isn’t that execution frameworks don’t exist, it’s that 95% of companies ignore them.

Lesson 6: The AI Job Paradox

What happened: AI drove nearly 55,000 layoffs in the US alone in 2025, while simultaneously creating massive demand for AI talent. So what’s really happening? Companies are hiring for high-skill AI expertise and cutting middle-skill roles.

The Layoff Numbers

According to Challenger, Gray & Christmas:

AI was directly cited as the reason for 54,883 US job cuts in 2025

Total US job cuts hit 1.17 million in 2025 - the highest since the COVID-19 pandemic in 2020

October alone saw 153,000 cuts, with November adding 71,000 more

Who Got Hit Hardest?

Amazon: Cut 14,000 corporate jobs (4% of white-collar staff)

Microsoft: Eliminated approximately 15,000 jobs throughout 2025, including 9,000 in July alone.

Salesforce: Cut 4,000 customer support positions as AI took on more of the work (support headcount went from about 9,000 to 5,000, per the CEO)

IBM: CEO Arvind Krishna told Wall Street that AI chatbots had replaced “several hundred” human resources employees.

Other Major Cuts:

Workday: 8.5% reduction (1,750 jobs)

Scale AI: 14% cut (200 employees) plus 500 contractors

CrowdStrike: Cited AI as a factor in layoffs

Deepwatch: Cut 60-80 employees, citing AI as a factor

The Hiring While Firing paradox

Despite massive layoffs, most organizations hired for AI-related roles over the past year, and the most in-demand positions were:

Software engineers specialized in AI/ML

Data engineers who can build AI-ready data pipelines

ML engineers who understand model deployment

Prompt engineers and AI application developers

What this signals is that AI is eliminating routine work while creating demand for high-skill technical roles.

What CEOs Are Actually Saying

Amazon’s Andy Jassy (June 2025): “AI will lead to fewer individuals in some current roles and more individuals taking on different types of jobs”.

Amazon’s Beth Galetti (October 2025): “This generation of AI represents the most transformative technology since the Internet... A leaner organization with fewer layers is necessary for us to act swiftly”.

Salesforce’s Marc Benioff (September 2025): On cutting 4,000 support roles with AI: “I require fewer personnel”.

The Workforce Size Debate

McKinsey’s survey shows divided expectations on AI’s workforce impact:

32% of leaders expect workforce reductions of 3% or more in the next year

43% expect no change in total headcount

13% expect increases of 3% or more

The Reality:

Middle-management coordination roles shrink as AI handles workflow orchestration.

Routine, repetitive roles disappear.

High-skill technical and strategic roles expand.

The Mistake: Assuming your current workforce can simply “learn AI” without major retraining investment.

The Lesson:

If you’re building AI products, factor in massive displacement anxiety among potential customers’ workforces.

Transparently retrain displaced workers for new roles, and involve frontline staff in redesign instead of just automating them away.

So, a quick recap for the 2026 Playbook: 5 Things Not to Do

1. Don’t Build AI Wrappers Without Deep Moats

2. Don’t Confuse Pilot Revenue with Product-Market Fit

3. Don’t Skip the Seven Structural Gaps

4. Don’t AI-Wash Your Capabilities

5. Don’t Underinvest in Talent and Governance

What to Do Instead: The High Performer Formula

It’s very simple: do what the 5% do, and this time copy-paste works!

Start with business outcomes, not technology: Define the dollar metric you’re moving before writing a line of code

Build cross-functional pods with end-to-end ownership: No handoffs between innovation and operations

Run 6-10 week pilots with single KPIs and kill criteria: Ruthlessly stop anything that doesn’t move the metric

Redesign workflows from scratch with AI at the center: Don’t add AI to broken processes

Invest in governance, change management, and talent: These aren’t overhead; they’re the difference between 5% success and 95% failure
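Here is what “single KPIs and kill criteria” could look like when written down as a rule, as a minimal Python sketch. The `Pilot` class, the `review` function, the hypothetical “invoice triage” pilot, the 10% target lift, and the assumption that a higher KPI is better are all illustrative choices, not part of the McKinsey playbook; only the 6-10 week window comes from the list above.

```python
from dataclasses import dataclass


@dataclass
class Pilot:
    """Hypothetical single-KPI pilot definition (assumes a higher KPI is better)."""
    name: str
    kpi_baseline: float  # KPI value before the pilot started (must be non-zero)
    target_lift: float   # relative improvement required to scale, e.g. 0.10 = +10%
    min_weeks: int = 6   # don't judge before the pilot has had a fair run
    max_weeks: int = 10  # hard time box: decide by this point, no extensions


def review(pilot: Pilot, kpi_now: float, weeks_elapsed: int) -> str:
    """Return 'scale', 'kill', or 'continue' for a weekly pilot review."""
    if pilot.kpi_baseline == 0:
        raise ValueError("kpi_baseline must be non-zero to compute a relative lift")
    if weeks_elapsed < pilot.min_weeks:
        return "continue"
    lift = (kpi_now - pilot.kpi_baseline) / abs(pilot.kpi_baseline)
    if lift >= pilot.target_lift:
        return "scale"
    if weeks_elapsed >= pilot.max_weeks:
        return "kill"  # time box expired without moving the metric
    return "continue"


triage = Pilot(name="invoice triage", kpi_baseline=100.0, target_lift=0.10)
print(review(triage, kpi_now=112.0, weeks_elapsed=6))   # scale
print(review(triage, kpi_now=104.0, weeks_elapsed=8))   # continue
print(review(triage, kpi_now=105.0, weeks_elapsed=10))  # kill
```

The point of fixing the metric, target, and time box before the pilot starts is that the kill decision becomes mechanical rather than political.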

👉 In the next post, let’s expand on how to do this.

Conclusion: The Expensive Education

2026 won’t reward optimism. It will reward execution.

2025 was the year the AI market grew up. The unicorns built on hype filed for bankruptcy. The pilots that should have become products stayed experiments. More than half of all venture money flooded into AI, and most of it went to a handful of giants.

But we learned that AI success isn’t about model capabilities; it’s about operational excellence. Building deep moats and running disciplined pilots is what gets you the ROI you expected.

We learned that the gap between pilot and production isn’t 6 months of work; it’s 3-5 years of organizational operating-system upgrades that most enterprises haven’t even started yet. The companies that succeed will be those that learned from 2025’s expensive mistakes and copied the high performer playbook exactly.