$12 Billion for a Fine‑Tuning API?

Mira Murati, Thinking Machines, and the Tinker API: the one AI-bubble story that went largely unnoticed in 2025, and why it actually matters!

Fine‑tuning infra is a genuine bottleneck

Fine‑tuning infra is one of the many hard AI‑infra problems. It sits in the model‑development/personalization layer of the AI stack: after a foundation model has been pre‑trained and before the adapted model is deployed and served to users. It’s the step where you specialize a generic LLM with your own data, tasks, and tone. Beyond fine‑tuning, teams still struggle with data pipelines, GPU‑efficient serving, security/compliance, observability, and integration into messy legacy systems.

But fine‑tuning infra is one of the main pain points, because most teams don’t struggle with ideas for customizing models; they struggle with the plumbing needed to actually run those experiments. Fine‑tuning large LLMs means coordinating high‑end GPUs, fast storage, and low‑latency networking while keeping utilization high and costs under control, which demands deep distributed‑systems expertise that many orgs simply don’t have. Even “lighter” approaches like LoRA still hit GPU memory limits, data‑pipeline bottlenecks, and orchestration complexity, so without a solid infra layer, teams can waste 30–50% of training time on underutilized hardware and operational glitches instead of iterating on models.
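Two claims in this paragraph are easy to check with back-of-envelope arithmetic. The sketch below uses figures chosen purely for illustration; none of them come from the article:

- an 8B-parameter model held in bf16 (2 bytes per parameter);
- a single 4096×4096 projection matrix adapted with LoRA at rank 16;
- a hypothetical price of $2.00 per GPU-hour.

```python
# Back-of-envelope sketch. All figures are hypothetical illustrations, not from the article:
# an 8B-parameter model in bf16, one 4096x4096 matrix adapted with LoRA rank 16, $2.00/GPU-hour.

BYTES_PER_PARAM_BF16 = 2


def lora_trainable_params(d: int, k: int, rank: int) -> int:
    """LoRA learns the update to a d x k weight as B (d x r) @ A (r x k): r * (d + k) params."""
    return rank * (d + k)


def frozen_weight_gb(n_params: float, bytes_per_param: int = BYTES_PER_PARAM_BF16) -> float:
    """Memory needed just to hold the frozen base weights on the GPU, in GB (1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


def effective_cost_per_useful_hour(price_per_gpu_hour: float, wasted_fraction: float) -> float:
    """If a fraction of paid GPU time is wasted, each useful hour costs price / (1 - waste)."""
    if not 0 <= wasted_fraction < 1:
        raise ValueError("wasted_fraction must be in [0, 1)")
    return price_per_gpu_hour / (1 - wasted_fraction)


full = 4096 * 4096
lora = lora_trainable_params(4096, 4096, rank=16)
print(f"full matrix: {full:,} params, LoRA adapter: {lora:,} ({lora / full:.2%})")
print(f"frozen 8B base in bf16: {frozen_weight_gb(8e9):.0f} GB before activations or optimizer state")
for waste in (0.30, 0.50):
    cost = effective_cost_per_useful_hour(2.0, waste)
    print(f"{waste:.0%} waste -> ${cost:.2f} per useful hour at $2.00/GPU-hour")
```

LoRA cuts the trainable parameters for that matrix to under 1% of the full count. The frozen base weights still have to sit in GPU memory, though, which is why the memory wall doesn’t disappear. On the cost side, losing 30–50% of paid GPU time raises the price of each useful hour by roughly 1.4–2x.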

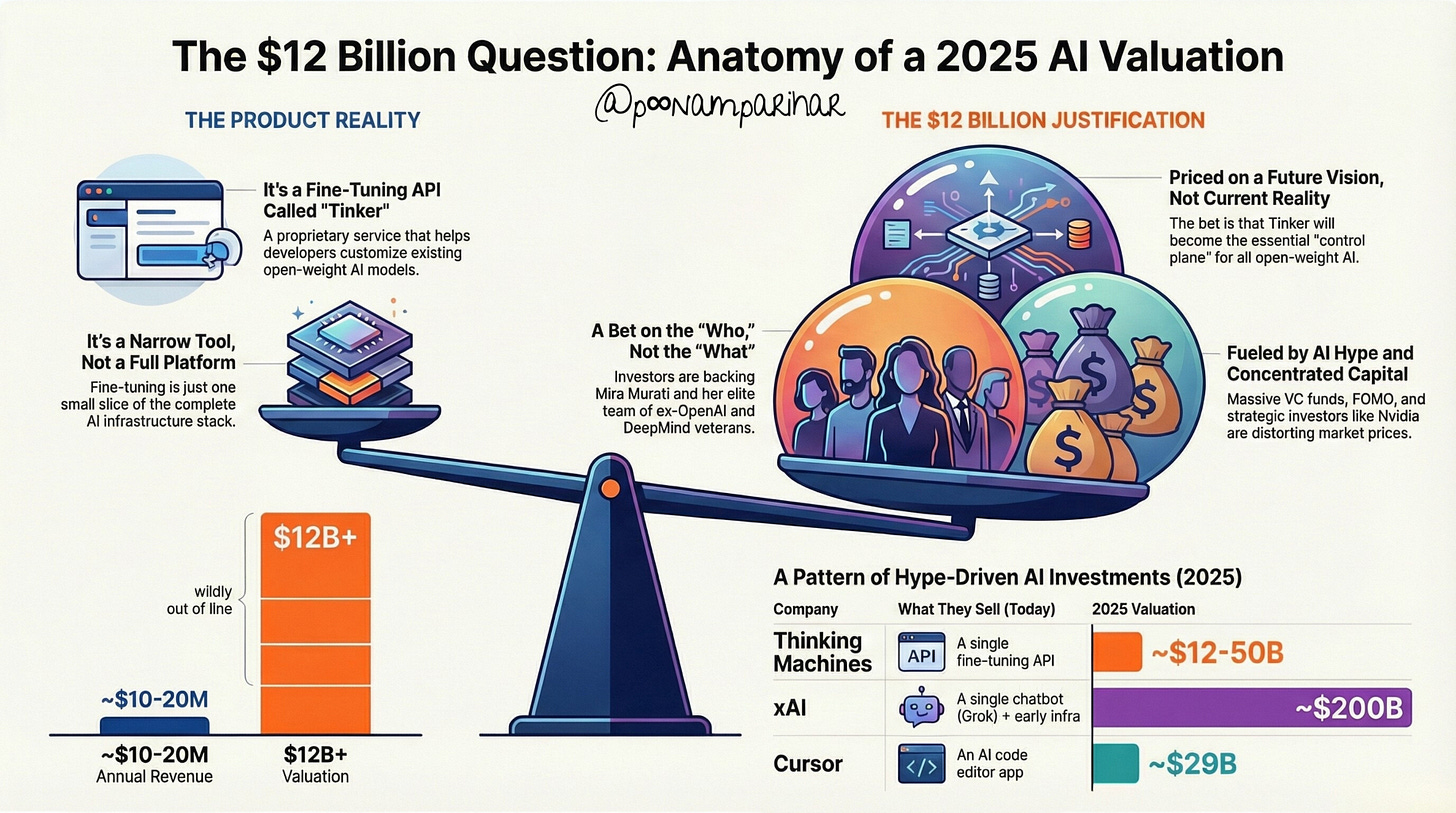

But can a narrow tool (not a full platform), covering a small slice of the AI infrastructure stack, justify a $12B valuation?

The last time Mira Murati really broke through my LinkedIn news feed was in August 2025. Fortune ran and amplified MIT’s NANDA report claiming that around 95% of generative‑AI pilots at companies were failing. The story went viral for its stark headline about the ‘GenAI divide’, and that was before anyone could get their hands on the report to analyze its sample size. Around the same day, another Fortune piece from a few weeks earlier (late June to early July) resurfaced. It covered Thinking Machines’ $2 billion seed round at a roughly $12 billion valuation and presented it as a milestone: a record-breaking raise, the largest ever for a female founder in frontier tech. Nobody framed that one as a sign the AI boom was still alive, while the NANDA piece became the go‑to reference for skepticism about enterprise AI and AI hype in general.

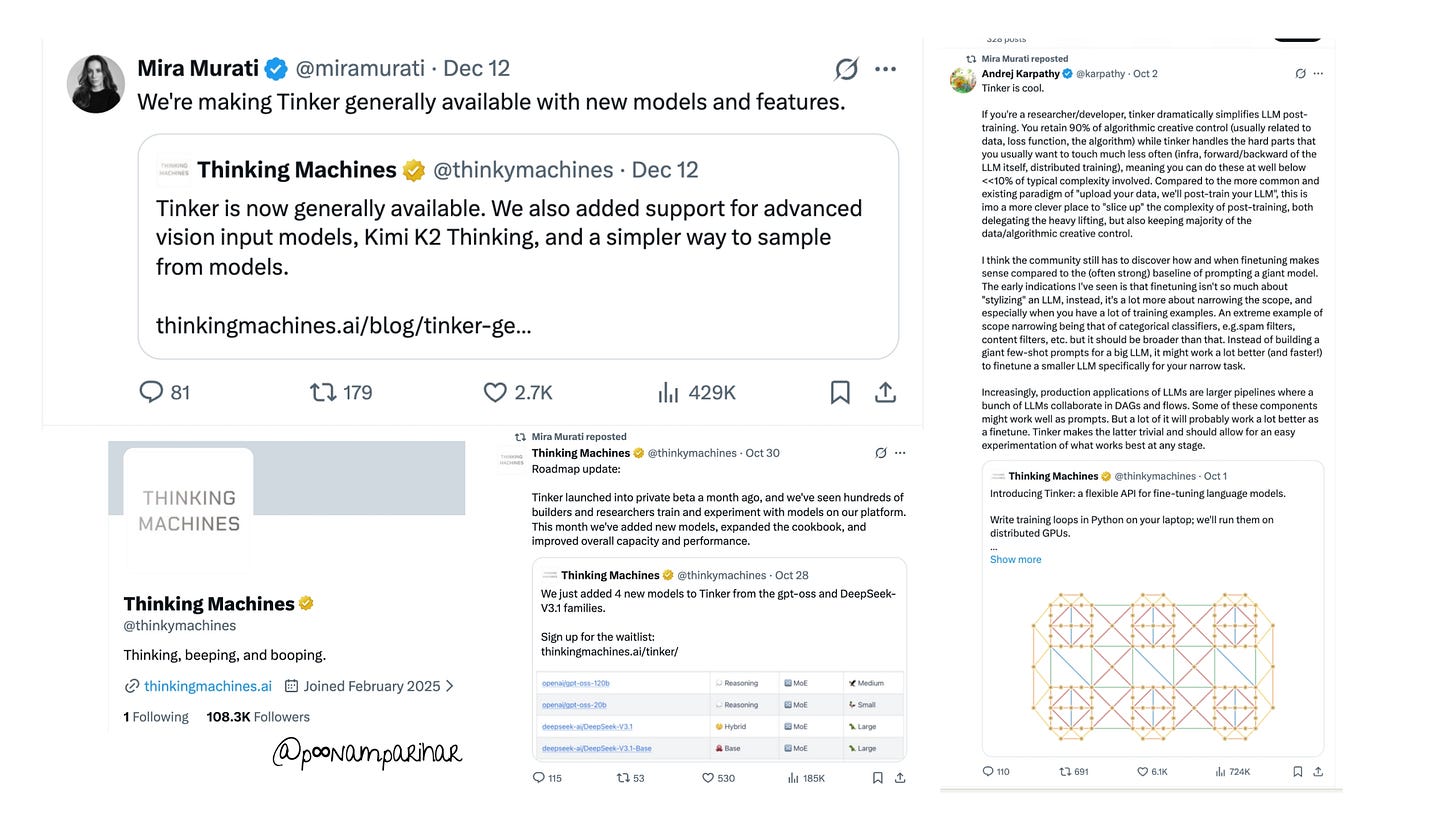

So when I saw a bunch of updates on Tinker, I wanted to dig deeper. The latest one, from two days ago, covered both Tinker’s general availability and Mira Murati eyeing a $50B valuation.

So What Is Tinker, Exactly?

If you ask people outside the AI infra bubble what Tinker does, most won’t know. And that’s partly because the answer is boring: it’s an API for fine-tuning and training large language models.

Fine-tuning, which I already explained above (and which might have bored you already), is one of the least sexy parts of AI. It’s not building a frontier model. It’s not creating the next GPT.

It’s the infrastructure work that happens after a model is built: the process of taking a base model and adapting it for a specific task, domain, or organization.

Today, if you want to fine-tune a model, you have a few options. You can do it yourself, or you can use one of the big cloud providers (AWS, Google Cloud, Azure), but then you’re locked into their ecosystem and their pricing.

Tinker’s pitch is simpler: write your training loop in Python, upload your data, and Tinker handles the heavy lifting. The company manages the GPU orchestration, the fault tolerance, the storage, and the scaling. You keep control of your algorithms and your data flows. You pay per token for what you use.
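Here is a minimal sketch of that division of labor. `RemoteTrainer`, `loss_and_grad`, and `step` are hypothetical names invented for this example; they are not Tinker’s actual API. The “model” is a single-weight linear fit so the code runs anywhere. The point is the shape of the split: the user owns the loop and the data, the backend owns the weights and the compute, and usage is metered per token.

```python
from dataclasses import dataclass, field


@dataclass
class RemoteTrainer:
    """Hypothetical stand-in for a managed training backend (NOT Tinker's real API).

    It owns the model weight and the compute, and meters usage per token.
    """
    lr: float = 0.05
    weight: float = 0.0
    tokens_billed: int = 0
    _grad: float = field(default=0.0, repr=False)

    def loss_and_grad(self, batch: list[tuple[float, float]], tokens: int) -> float:
        """Return mean squared error on the batch, store its gradient, and bill the tokens."""
        self.tokens_billed += tokens
        n = len(batch)
        loss = sum((self.weight * x - y) ** 2 for x, y in batch) / n
        self._grad = sum(2 * (self.weight * x - y) * x for x, y in batch) / n
        return loss

    def step(self) -> None:
        """Apply one plain gradient-descent update with the stored gradient."""
        self.weight -= self.lr * self._grad


def train(trainer: RemoteTrainer, batches, epochs: int) -> list[float]:
    """User-owned training loop: the algorithm and the data flow stay on the client side."""
    losses = []
    for _ in range(epochs):
        for batch, tokens in batches:
            losses.append(trainer.loss_and_grad(batch, tokens))
            trainer.step()
    return losses


# Toy data where y = 2x; each batch carries a made-up token count for billing.
data = [([(1.0, 2.0), (2.0, 4.0)], 128), ([(3.0, 6.0)], 64)]
trainer = RemoteTrainer()
losses = train(trainer, data, epochs=20)
print(round(trainer.weight, 4), trainer.tokens_billed)  # prints: 2.0 3840
```

In a real managed service the two backend methods would be network calls to remote GPUs. Keeping them behind a small interface is what lets the user change the algorithm without touching the infrastructure.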

It’s useful. It solves a real problem. Research labs are already using it.

But it’s also... not revolutionary.

It’s managed infrastructure on top of open-weight models. Think of it like Databricks for LLMs, but narrower and earlier-stage.

So here’s the tension at the heart of this entire story. VCs have valued Mira Murati’s company at $12 billion on the strength of a fine-tuning API because they believe in “the vision”. In that vision, this wedge expands into a full control plane for how open-weight models are customized, trained, aligned, and deployed, even though the current product is just one slice of it.

The Tension:

Product shipped: One API for fine-tuning

Vision marketed: A control plane for how all open-weight models get customized and deployed

There’s a MASSIVE GAP between what exists and what’s being priced

Here’s where it gets more interesting -

Not Open Source (Despite the Framing)

(I’ve written at length about open source here and here, especially the model gap and open agentic-AI frameworks.)

I needed to clarify something that kept confusing me: Tinker gets marketed with “open” language, but it’s not actually open source.

What’s Proprietary:

Tinker’s core infrastructure is closed and proprietary

Runs on Thinking Machines’ servers in their cloud

You don’t own or control the training infrastructure

What’s Open:

The models it supports are open-weight (Llama, Qwen, Kimi etc.)

They publish the “Tinker Cookbook” as open-source (example implementations)

The framing emphasizes “not locked into closed systems”

The Reality Check:

The business model is classic SaaS infra: proprietary service, open-weight model support, usage-based pricing.

The “open” positioning is more about marketing than product architecture.

I don’t think I need to go into length about who Mira Murati is, but some details may help make the case.

Who is Mira Murati?

Mira Murati spent about six years at OpenAI running product. She was the CTO and the product lead for ChatGPT, for RLHF (Reinforcement Learning from Human Feedback, the breakthrough technique that made LLMs usable), and for every major release. Those releases turned OpenAI from a research lab into a company valued in the hundreds of billions of dollars and defined the frontier of consumer AI.

When you run OpenAI’s product, you’re not just shipping features. You’re shaping how hundreds of millions of people interact with AI. You’re learning what works, what breaks, and how to build alignment and safety into systems that billions will use. You’re in the room when the most important technical decisions in AI are made. That’s valuable, yes. But is it worth a $12 billion bet on day one? Hmm.

She didn’t launch Thinking Machines alone, however. Her co-founders include six other OpenAI veterans, plus researchers from Mistral AI and DeepMind. Her investors include VC firms like a16z and Accel, but also strategic checkbooks from Nvidia, Cisco, ServiceNow, AMD, and Jane Street. The cap table reads like a who’s-who of the companies that have the most to gain if this lab becomes another foundational piece of the AI stack.

So to understand why a fine-tuning API is worth $12 billion before it has a business model to show, I guess we have to understand who built it: the AI celebrities?

So the first thing to understand: this wasn’t VC capital making a bet on a product.

It was VC capital making a bet on a team and a narrative: the idea that these specific people, at this specific moment, could launch the next foundational AI company. Yes?

So Why Do VCs Care So Much? Why $12 Billion for a Fine-Tuning API?

This is the question that started my whole investigation. When I looked at the funding timeline, it looked insane. The answers I found paint a picture of a market that’s either brilliantly optimized or fundamentally broken, depending on, well, who you ask. (So far, I’m mostly asking AI.)

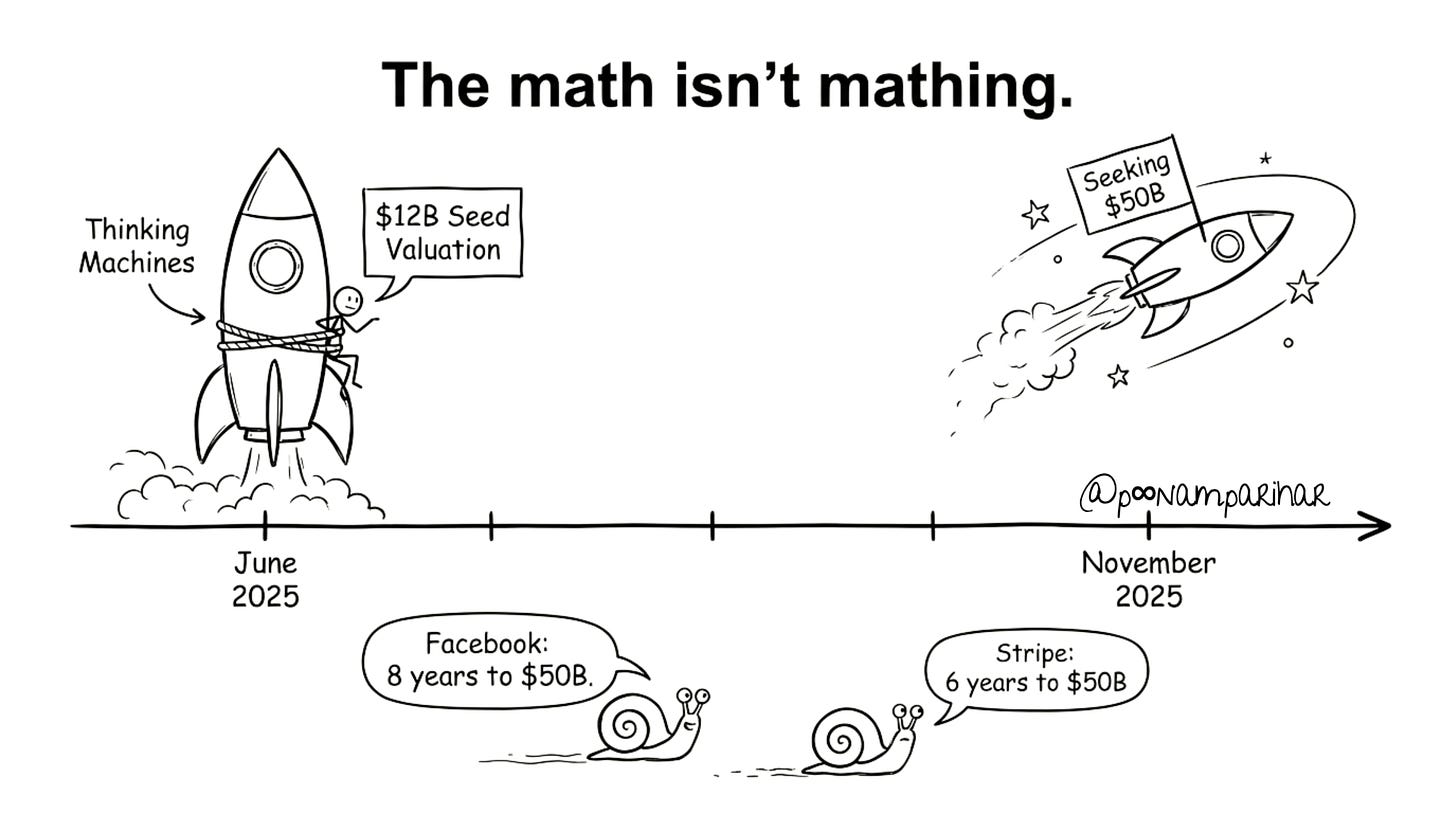

The Capital Trajectory:

June 2025: Raised $2 billion at a $10 billion valuation (seed round, the largest ever in that category; the AI I asked didn’t care to mention that a woman raised it, by the way)

July 2025: Valuation hit $12 billion

November 2025: In talks to raise at ~$50 billion valuation

For Reference:

Facebook: 8 years to $50B valuation

Stripe: 6 years to $50B+ valuation

Thinking Machines: 11 months to $50B+ “in talks” the company was founded in January 2025.

With one product shipped, and that product had been live for two months.
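The step-ups in that timeline are worth computing explicitly. This uses only the figures listed above, in billions of dollars. The November number is a reported target from talks, not a closed round.

```python
# Valuation points as listed above, in billions of US dollars.
trajectory = [
    ("June 2025", 10.0),
    ("July 2025", 12.0),
    ("November 2025 (in talks)", 50.0),
]


def step_ups(points):
    """Return (start, end, multiple) for each consecutive pair of valuation points."""
    return [(a[0], b[0], b[1] / a[1]) for a, b in zip(points, points[1:])]


for start, end, multiple in step_ups(trajectory):
    print(f"{start} -> {end}: {multiple:.2f}x")
print(f"June to November: {trajectory[-1][1] / trajectory[0][1]:.1f}x")
```

Most of the jump (about 4.2x) comes in the last step, between July and November, the window in which Tinker went from unreleased to live.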

So VCs’ Real Bet - Even if Tinker flops, this team is valuable enough to acquire!

Team Pedigree = Optionality

Mira Murati: Former OpenAI CTO, led ChatGPT and RLHF

Six other OpenAI veterans on the founding team

Signal to investors: “This group could launch the next frontier AI lab”

Narrative: “Even if Tinker flops, this team is valuable enough to acquire”

Strategic Positioning

Positioned as the control layer for open-weight model training

If successful, becomes a chokepoint in the AI stack (like Snowflake for data)

Market framing: “Whoever owns model customization infrastructure owns the future”

Real Market Tailwinds

Fine-tuning and orchestration services forecast at $10–15B by 2033

~30% compound annual growth expected

Every AI deployment needs model customization, alignment, and experimentation
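The forecast above also implies a rough size for the market today. The article gives no base year, so this sketch assumes the ~30% growth compounds over the eight years from 2025 to 2033.

```python
def implied_base(future_value: float, annual_growth: float, years: int) -> float:
    """Discount a future market size back at a constant compound annual growth rate."""
    return future_value / (1 + annual_growth) ** years


# Forecast range from the text: $10–15B by 2033 at ~30% CAGR; the 2025 base year is assumed.
for target in (10.0, 15.0):
    base = implied_base(target, 0.30, years=2033 - 2025)
    print(f"${target:.0f}B in 2033 -> about ${base:.2f}B in 2025")
```

On those assumptions, the whole fine-tuning and orchestration segment would be worth roughly $1.2–1.8B today. That is well below the $12B valuation of a single company in it, and it is the gap the “wedge to platform” story has to close.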

Capital Concentration & FOMO

40% of all VC exit value in 2025 comes from AI

Most of that concentrates in a handful of “obvious” bets

Large funds need to write massive checks, and there aren’t enough mega-deals to absorb them

Fear of missing out is stronger than fear of overpaying

Strategic Investor Incentives

Nvidia (strategic investor) benefits from selling GPUs to Thinking Machines

Big cloud providers benefit from infra that locks in open-weight workflows

These aren’t purely financial bets; they’re ecosystem plays

The Bottom Line:

Actual traction: Minimal (early adopter researchers, a few enterprise pilots)

What’s being priced: The optionality that this team becomes indispensable

The gap between these: Enormous

Now that we’ve answered the $12B question, I wanted to understand:

What’s the Business Plan, Really?

I looked at what Murati and the team publicly state about their strategy.

The Official Narrative:

“Democratize access to advanced model customization”

“Enable researchers and developers to experiment without massive GPU clusters”

Focus on “meta-learning” and “superhuman learners” rather than just bigger base models

Implicit bet: “The value isn’t in the biggest model; it’s in who controls how models are customized”

And this is interesting because it’s implicitly against the frontier model thesis that OpenAI and Anthropic are pursuing. Murati is betting that the next wave of AI value comes from infrastructure, not from who builds the best base model.

Why Fine-Tuning as the First Product?

If you’re going to build a multi-billion dollar platform, why start with fine-tuning? It seems narrow.

The Strategic Logic:

As stated at the beginning, it’s the highest-friction point in open-weight model development

Every lab, every enterprise, every developer building on Llama/Qwen/DeepSeek hits this problem

Fine-tuning infrastructure is genuinely hard: GPU orchestration, distributed training, fault tolerance

If you own the access point where people customize models, you control a huge slice of downstream value

The Bet Underlying the Valuation:

Today: Tinker is a fine-tuning API

Tomorrow: Expands to hosting, routing, monitoring, alignment tooling

Endgame: Becomes the control plane for open-weight AI development

The $12B+ price tag assumes that transition from “wedge” to “platform”

The Problem with This Logic:

Big clouds (AWS, Google Cloud, Azure) are already commoditizing fine-tuning

The longer Thinking Machines stays “just” a fine-tuning API, the more vulnerable it is to this commoditization

They have maybe 2–3 years to expand before this window closes

And What About Controlling the AI Infrastructure?

Below is the full stack, from hardware up through deployment. Tinker is just one slice of it.

The Full AI Infrastructure Stack:

Compute layer: GPUs, TPUs, specialized chips

Storage and networking: Distributed systems, high-speed interconnects

Orchestration: How jobs get scheduled and run

Data pipelines and management

MLOps and observability

Model training and fine-tuning

Model serving and inference

Monitoring and alerting

So when VCs call Tinker “AI infrastructure,” what do they actually mean?

The Honest Assessment:

Is it AI infrastructure? Technically, yes (it’s part of the stack)

Is it the AI infrastructure? No, it’s a slice

Is calling it “infrastructure” generous? Absolutely

Why It Still Gets That Label:

If Thinking Machines expands to hosting, inference, orchestration, and monitoring, it could become broader

Investors are pricing the optionality of that expansion

It’s a bet on a future position, not the current product

Comparable Analogy:

Kubernetes started as “just” container orchestration

It expanded into a foundational platform for distributed systems

Today, knowing Kubernetes is a table-stakes requirement for infra engineers

So the question now: is Tinker the Kubernetes of open-weight model training, or is it a more niche tool?

A Quick Look at the Money Trail and Who Really Wins

Funding Breakdown (approximately $2B):

a16z (traditional VC): ~$500M

Nvidia (strategic hardware): ~$600M

Accel (traditional VC): ~$400M

Other corporates (ServiceNow, Cisco, AMD): ~$350M

Jane Street (prop trading firm): ~$150M
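Summing the breakdown above confirms it adds up to the ~$2B round and shows how the check sizes split by investor type. The grouping follows the article’s own “traditional VC” labels. Treating everyone outside those two firms as non-VC money is a simplification made for this sketch.

```python
# Approximate commitments from the breakdown above, in millions of US dollars.
commitments = {
    "a16z": 500,
    "Nvidia": 600,
    "Accel": 400,
    "Other corporates (ServiceNow, Cisco, AMD)": 350,
    "Jane Street": 150,
}
TRADITIONAL_VC = {"a16z", "Accel"}  # labeled "traditional VC" in the text

total = sum(commitments.values())
vc_share = sum(v for k, v in commitments.items() if k in TRADITIONAL_VC) / total

print(f"total: ${total:,}M")
for name, amount in sorted(commitments.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {amount / total:.1%}")
print(f"traditional VC: {vc_share:.0%}, everyone else: {1 - vc_share:.0%}")
```

On these figures, more than half the round (55%) came from corporates and a trading firm rather than traditional VCs. Nvidia alone wrote the largest single check (30%).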

I already wrote about Nvidia’s engineered demand on LinkedIn from an ecosystem perspective, but at the time I didn’t pay much attention to its Thinking Machines investment. It’s not far from the original game, but just to reiterate:

Nvidia isn’t investing to get 5-10x returns on equity

Nvidia is investing because Thinking Machines will buy Nvidia GPUs

Every dollar of Nvidia investment that flows back as GPU purchases is revenue for Nvidia

This is called “engineering demand” and it’s entirely legal and rational
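The round-trip logic can be put into numbers. The $2B round and Nvidia’s ~$600M check come from the breakdown above. The other two inputs are hypothetical assumptions chosen for illustration:

- the share of raised capital spent on compute (50% here);
- how much of that compute spend ends up as Nvidia revenue, directly or through cloud providers (80% here).

```python
def vendor_recapture(total_raise: float, vendor_investment: float,
                     compute_fraction: float, vendor_share: float) -> tuple[float, float]:
    """Estimate vendor revenue generated by a startup's compute spend.

    Returns (revenue, revenue per dollar the vendor invested). Revenue is not profit,
    and spend routed through cloud providers reaches the vendor only indirectly.
    """
    revenue = total_raise * compute_fraction * vendor_share
    return revenue, revenue / vendor_investment


# $2,000M round and ~$600M Nvidia check from the text; 0.5 and 0.8 are hypothetical.
revenue, per_dollar = vendor_recapture(2000, 600, compute_fraction=0.5, vendor_share=0.8)
print(f"~${revenue:,.0f}M of GPU revenue, or {per_dollar:.2f} per dollar invested")
```

The key point is that the recapture is driven by the whole round, not just Nvidia’s own check. Under these assumptions, Nvidia books more GPU revenue than it invested.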

The Flywheel:

Nvidia invests in startups → startups buy Nvidia GPUs → Nvidia revenue grows → Nvidia stock up → Nvidia invests in more startups → repeat

It’s not a conspiracy; it’s capital and incentives aligning

The Implication:

The $12B valuation is partly driven by strategic investors locking in customer/ecosystem relationships

But all this makes the valuation even less connected to “fair financial pricing”

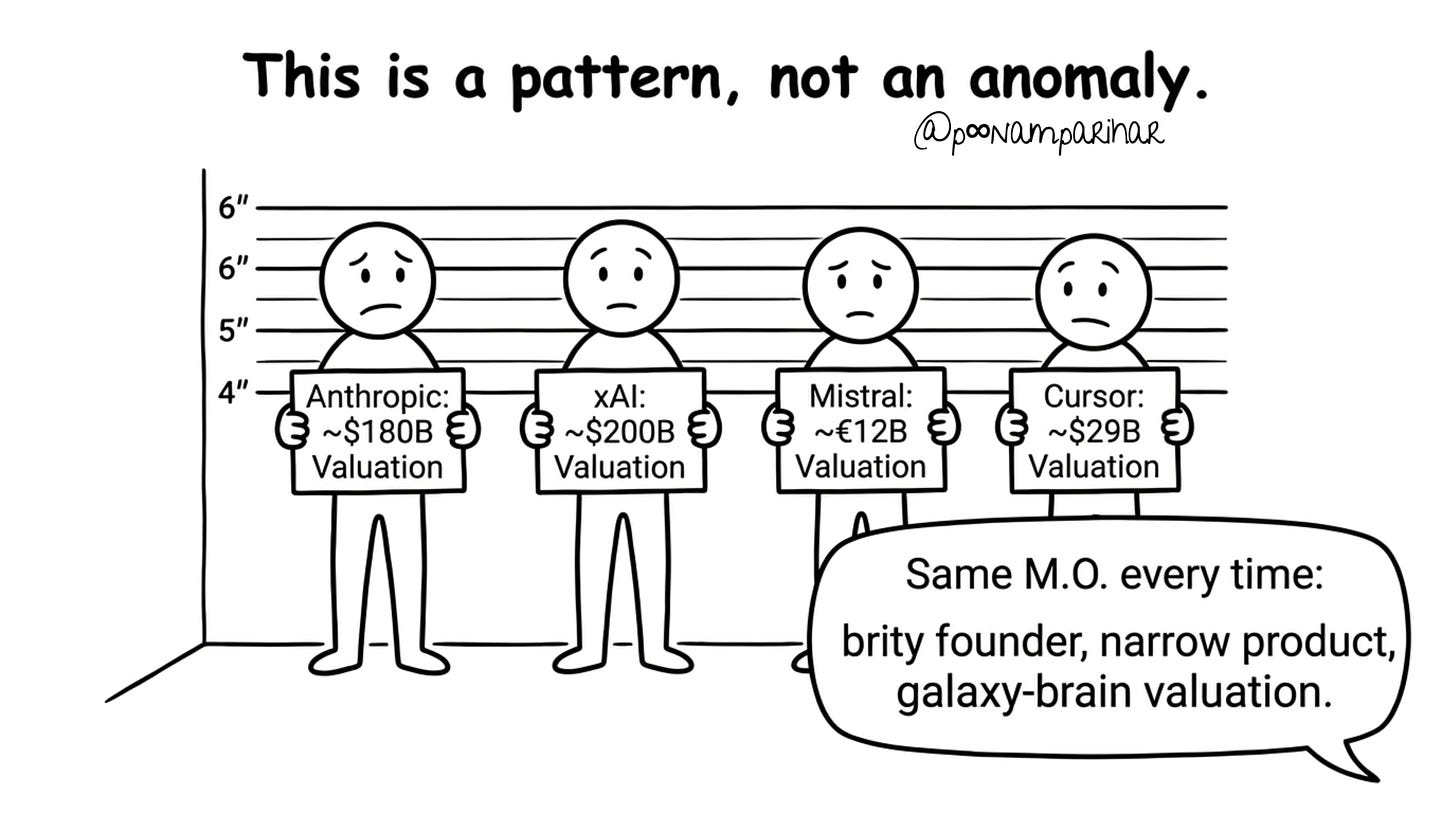

One of the key questions that followed in this research was: is Thinking Machines unique, or is this pattern everywhere?

The Answer: This pattern is everywhere in 2025’s AI funding.

You’re Not Alone—And That’s the Problem.

The Pattern (image above):

All valued in the billions despite being early-stage or single-product

All have founder pedigree (ex-OpenAI, ex-FAANG, high-profile billionaires)

All betting on “big future narrative” over current traction

All getting special treatment vs. “normal” startups with real revenue

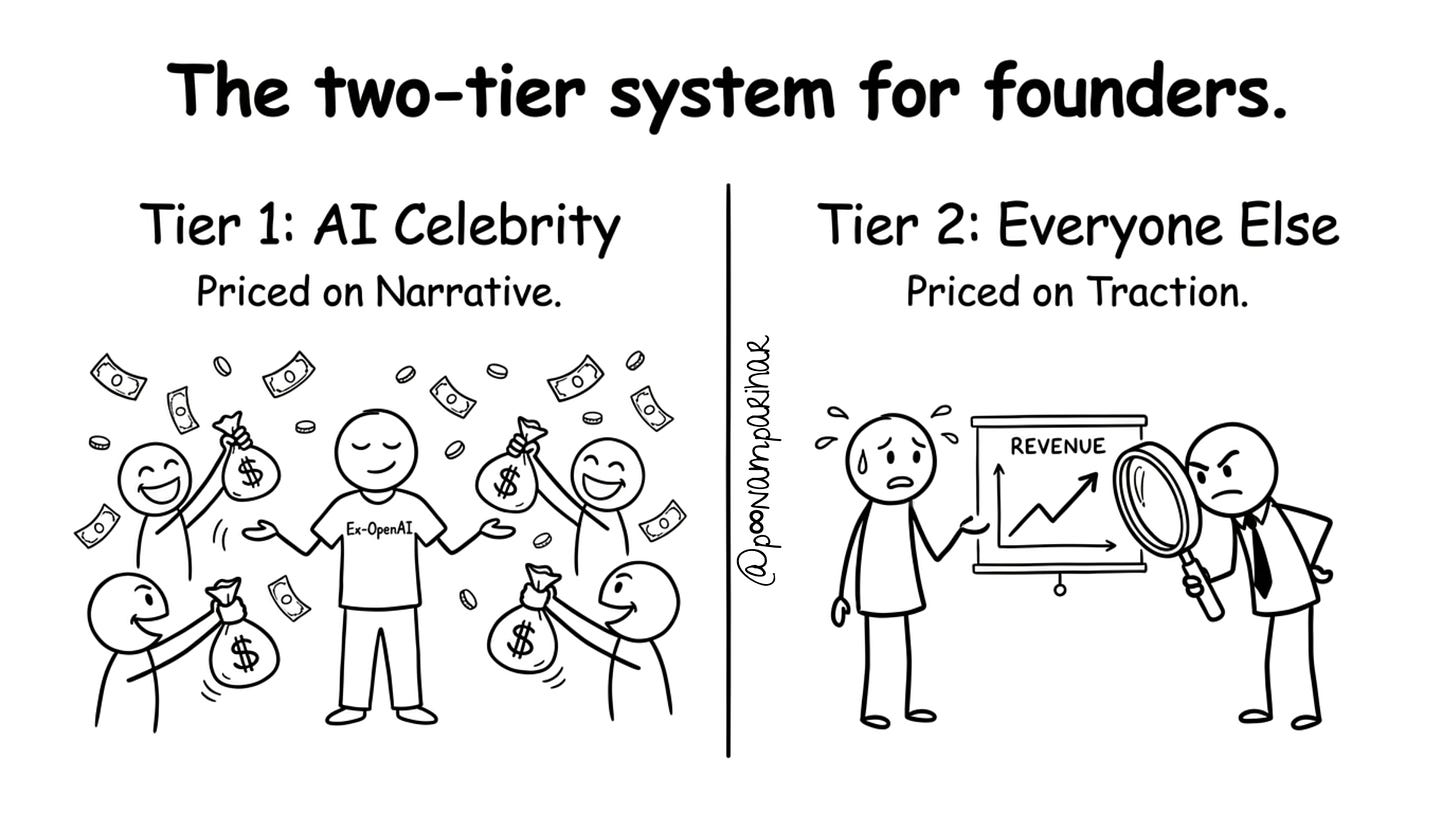

The Two-Tier System

This is where it gets really unfair.

Tier 1: AI Lab / Infra (Celebrity Founders)

Founders: ex-OpenAI, ex-Anthropic, high-profile billionaires

Stage: Pre-product or single product

Valuation multiples: Basically “what can we get away with asking?”

VC behavior: “How much do we need to commit to get in?”

Reality: Narrative and FOMO drive the round

Tier 2: Everything Else (Normal Founders)

Founders: Competent people without OpenAI pedigree

Stage: Revenue, product-market fit

Valuation multiples: 10–50x revenue (traditional startup metrics)

VC behavior: “Prove this isn’t a fad and show unit economics”

Reality: You need to actually execute

The Gap:

Mira Murati gets $12B on a team and a narrative

A normal team with the same product would struggle to raise $100M

This is the venture capital market in 2025: radically unfair based on founder brand

To Summarize: Why Does This Matter Beyond Tinker?

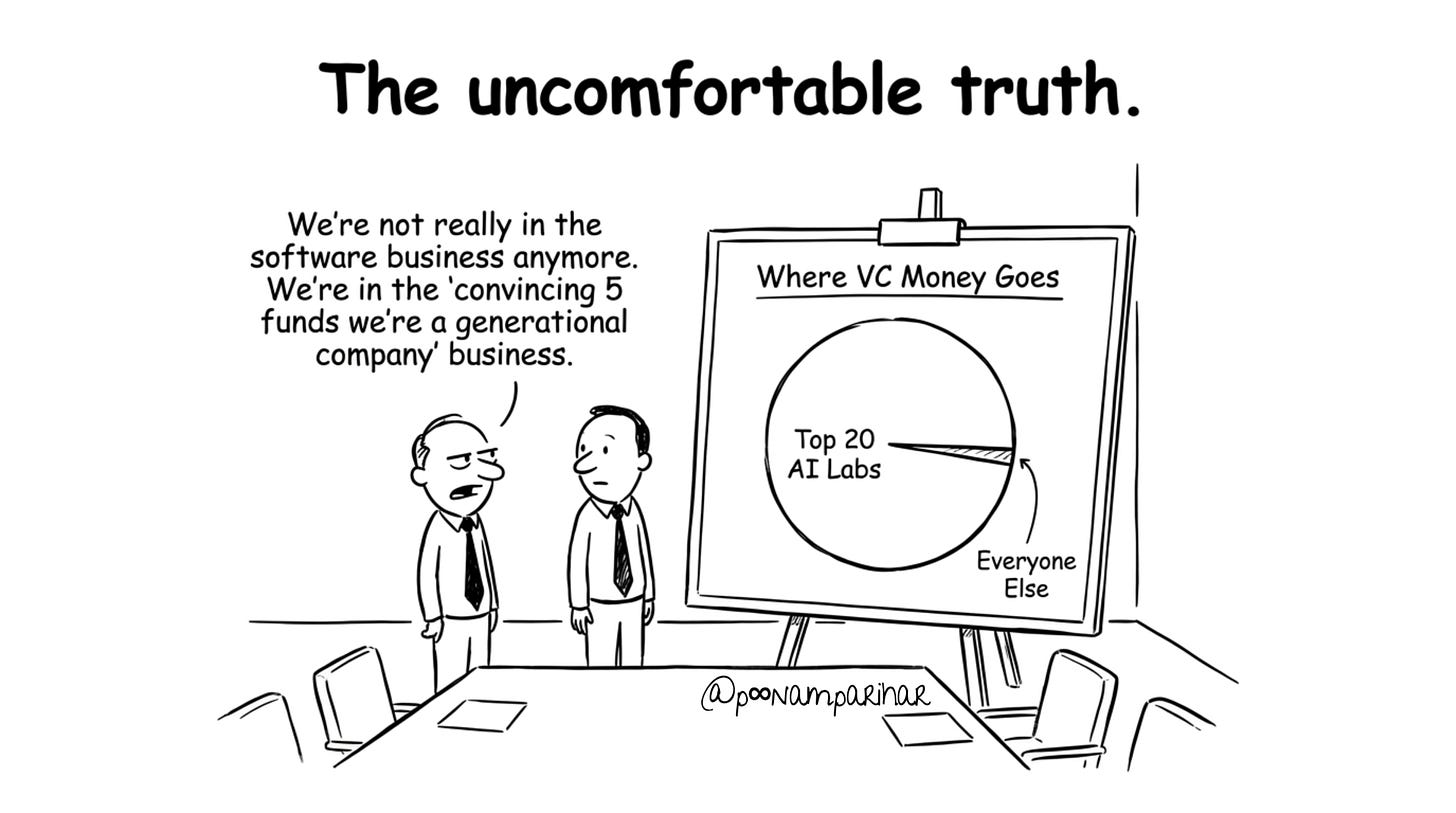

Capital concentration: Most VC dollars flowing to ~20 AI “obvious” bets

Capital is concentrating in fewer hands

The biggest mega-funds control most of the money

They’re making bigger bets on fewer companies

Within AI, this is extreme: 40% of 2025 VC exit value comes from AI, but it flows to a tiny number of companies

Two-tier system: Celebrity founders get priced on optionality; everyone else needs real traction

The Numbers:

Roughly 60% of all VC capital flowing to AI now goes to the top 20 AI labs/startups

The next 30% goes to the next 100 AI companies

The final 10% goes to all other AI startups
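Dividing each bucket by the number of companies in it shows how steep the curve is. The 60/30/10 split and the 20/100 counts come from the list above. The number of companies sharing the final 10% isn’t given, so the 1,000 used here is a hypothetical placeholder.

```python
def per_company_share(bucket_share: float, n_companies: int) -> float:
    """Average share of AI venture capital per company within one bucket."""
    return bucket_share / n_companies


buckets = [
    ("top 20", 0.60, 20),
    ("next 100", 0.30, 100),
    ("everyone else (hypothetical count)", 0.10, 1_000),
]
for label, share, n in buckets:
    print(f"{label}: {per_company_share(share, n):.3%} of AI VC capital per company on average")
```

On average, a top-20 company gets ten times the capital of a company in the next 100. With the placeholder count, it gets about 300 times that of a company outside both groups.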

What This Creates:

If you’re Mira Murati: You can raise billions on reputation

If you’re not: You’re competing for scraps

Systemic incentive misalignment: Nvidia is the most obvious example, but it’s not unique.

Nvidia’s plan is smart, not nefarious:

It’s legal and transparent

It’s actually quite rational

But it means the “valuation” reflects partly supply chain integration, not just financial returns

The Implication:

The $12B valuation is inflated by strategic value, not just equity value.

So Where’s This All Heading?

First, for Tinker and Mira Murati: The Most Likely Scenarios

Based on everything I’ve learned, here are the probability-weighted outcomes:

Scenario 1: Moderate Success (50% probability)

Tinker becomes a real infra business with solid customer base

Revenue grows to $500M–$1B annually by 2030

Never justifies $50B valuation on fundamentals

Investors mark it as a “win” anyway because the team proved the thesis

Murati remains influential in AI

Scenario 2: Strategic Acquisition (35% probability)

In 3–4 years, market cools, valuations normalize

Large tech company or hyperscaler acquires Thinking Machines

Reason: Get the team + plug Tinker into their infra

Price: $5–20B (depending on market conditions)

Outcome: Murati gets high-level AI role at acquirer

Scenario 3: Overfunded Drift (15% probability)

Tinker never achieves product-market fit at scale

Company stays well-capitalized but doesn’t grow explosively

Big clouds commoditize fine-tuning before Thinking Machines expands

Slow fade or acqui-hire in 5–7 years

Murati’s brand survives; she lands a top role elsewhere

My Estimate:

Scenario 1 or 2 is most likely, and Scenario 3 is possible if execution falters. A fourth scenario, explosive success, is left out of the probabilities above because it would require frontier-lab-level execution, which is rare.

What This All Reveals About 2025

My small weekend investigation of Thinking Machines turned into a much larger story about how capital, narrative, and incentives are shaping AI right now.

The Core Truth:

Thinking Machines is getting $12 billion partly because Mira Murati is genuinely talented and has a proven track record

But it’s also getting that money because of hype, FOMO, strategic investor incentives, and capital concentration

The product (fine-tuning API) is real but narrow

The valuation is disconnected from fundamentals

And yet this isn’t fraud; it’s the logical output of the current venture capital incentive structure

What This Means:

For builders: If you’re not in the “obvious” AI category, you’re playing a different game with much harsher metrics

For investors: You’re probably overpaying if you’re investing in 2025 AI at these valuations

For the market: Capital misallocation on this scale usually ends badly

The Uncomfortable Truth:

Mira Murati probably deserves some level of premium valuation based on her track record. But $12B for a fine-tuning API that’s been live for two months? That’s not a premium. That’s the financial equivalent of FOMO dressed up as venture capital.

Did all of the above really solve the $12 Billion Question?

I was just curious why no one is talking about Tinker that much. Will that change now that it’s generally available? Maybe. But the real story is this:

The silence around Tinker isn’t a bug; it’s a feature. Tinker is infrastructure. It doesn’t change how people use AI. It’s valuable for researchers, but it’s not a cultural moment.

Tinker is real – A useful product that solves a genuine problem for researchers and ML teams

The valuation is not justified by current traction – It’s priced on optionality, narrative, and founder brand

This isn’t unique – Anthropic, xAI, Mistral, Cursor all follow similar patterns

Strategic incentives matter – Nvidia and others aren’t purely financial investors

Capital is extremely concentrated – A tiny number of AI bets soak up most of the money

It’s probably not a scam – But it’s also not transparent investing

What does make a real story, however, is how we got to a place where a fine-tuning API can command a $12 billion valuation. That story reveals something about the state of venture capital, the concentration of AI funding, and the narratives we tell ourselves about which companies matter.

Thinking Machines may become a solid infra company, or it may get acquired by AWS or Google in 3–4 years. Either way, 2025 may be remembered as the year capital got really crazy for AI. Honestly, the real question isn’t whether Thinking Machines will succeed or fail. It’s whether a venture capital ecosystem that can value a fine-tuning API at $12 billion is working the way it’s supposed to.

I think the answer is no.

The problem with treating fine-tuning infrastructure as the core value layer is that fine-tuning only shifts the model’s statistical behavior toward a domain. It doesn’t introduce structural optimization or any higher-level rules that guarantee reasoning quality or stability. Even without task-specific fine-tuning, a model already carries strong implicit constraints from pre-training and alignment. So in many cases the real bottleneck is not “how to fine-tune faster” but “how to ensure predictable, reliable behavior at the system level”. Fine-tuning changes preferences; it doesn’t solve consistency, verification, or control, and that’s where the real long-term challenge lies.

More developments as of Jan 15, 2026: two co-founders are exiting Thinking Machines and going back to OpenAI, and OpenAI is making acquisitions of its own. It’s all in my notes here: https://substack.com/@pariharpoonam/note/c-200178894?r=5xcg67&utm_source=notes-share-action&utm_medium=web